Discover TalkRL: The Reinforcement Learning Podcast

TalkRL: The Reinforcement Learning Podcast

Author: Robin Ranjit Singh Chauhan

© 2026 Robin Ranjit Singh Chauhan

Description

TalkRL podcast is All Reinforcement Learning, All the Time.

In-depth interviews with brilliant people at the forefront of RL research and practice.

Guests from places like MILA, OpenAI, MIT, DeepMind, Berkeley, Amii, Oxford, Google Research, Brown, Waymo, Caltech, and Vector Institute.

Hosted by Robin Ranjit Singh Chauhan.

74 Episodes

Joseph Modayil is the Founder, President & Research Director of Openmind Research Institute. Featured References: Openmind Research Institute; The Alberta Plan for AI Research, by Richard S. Sutton, Michael Bowling, Patrick M. Pilarski. Additional References: Joseph Modayil on Google Scholar; Joseph Modayil Homepage.

Danijar Hafner was a Research Scientist at Google DeepMind until recently. Featured References: Training Agents Inside of Scalable World Models [blog], by Danijar Hafner, Wilson Yan, Timothy Lillicrap; One Step Diffusion via Shortcut Models, by Kevin Frans, Danijar Hafner, Sergey Levine, Pieter Abbeel; Action and Perception as Divergence Minimization [blog], by Danijar Hafner, Pedro A. Ortega, Jimmy Ba, Thomas Parr, Karl Friston, Nicolas Heess. Additional References: Mastering Diverse Domains through World Models [blog] (DreamerV3), by Danijar Hafner, Jurgis Pasukonis, Jimmy Ba, Timothy Lillicrap; Mastering Atari with Discrete World Models [blog] (DreamerV2), by Danijar Hafner, Timothy Lillicrap, Mohammad Norouzi, Jimmy Ba; Dream to Control: Learning Behaviors by Latent Imagination [blog] (Dreamer), by Danijar Hafner, Timothy Lillicrap, Jimmy Ba, Mohammad Norouzi; Video PreTraining (VPT): Learning to Act by Watching Unlabeled Online Videos [blog post], by Baker et al.

David Abel is a Senior Research Scientist at DeepMind on the Agency team, and an Honorary Fellow at the University of Edinburgh. His research blends computer science and philosophy, exploring foundational questions about reinforcement learning, definitions, and the nature of agency. Featured References Plasticity as the Mirror of Empowerment David Abel, Michael Bowling, André Barreto, Will Dabney, Shi Dong, Steven Hansen, Anna Harutyunyan, Khimya Khetarpal, Clare Lyle, Razvan Pascanu, Georgios Piliouras, Doina Precup, Jonathan Richens, Mark Rowland, Tom Schaul, Satinder Singh A Definition of Continual RL David Abel, André Barreto, Benjamin Van Roy, Doina Precup, Hado van Hasselt, Satinder Singh Agency is Frame-Dependent David Abel, André Barreto, Michael Bowling, Will Dabney, Shi Dong, Steven Hansen, Anna Harutyunyan, Khimya Khetarpal, Clare Lyle, Razvan Pascanu, Georgios Piliouras, Doina Precup, Jonathan Richens, Mark Rowland, Tom Schaul, Satinder Singh On the Expressivity of Markov Reward David Abel, Will Dabney, Anna Harutyunyan, Mark Ho, Michael Littman, Doina Precup, Satinder Singh — Outstanding Paper Award, NeurIPS 2021 Additional References Bidirectional Communication Theory — Marko 1973 Causality, Feedback and Directed Information — Massey 1990 The Big World Hypothesis — Javed et al. 2024 Loss of plasticity in deep continual learning — Dohare et al. 2024 Three Dogmas of Reinforcement Learning — Abel 2024 Explaining dopamine through prediction errors and beyond — Gershman et al. 2024 David Abel Google Scholar David Abel personal website

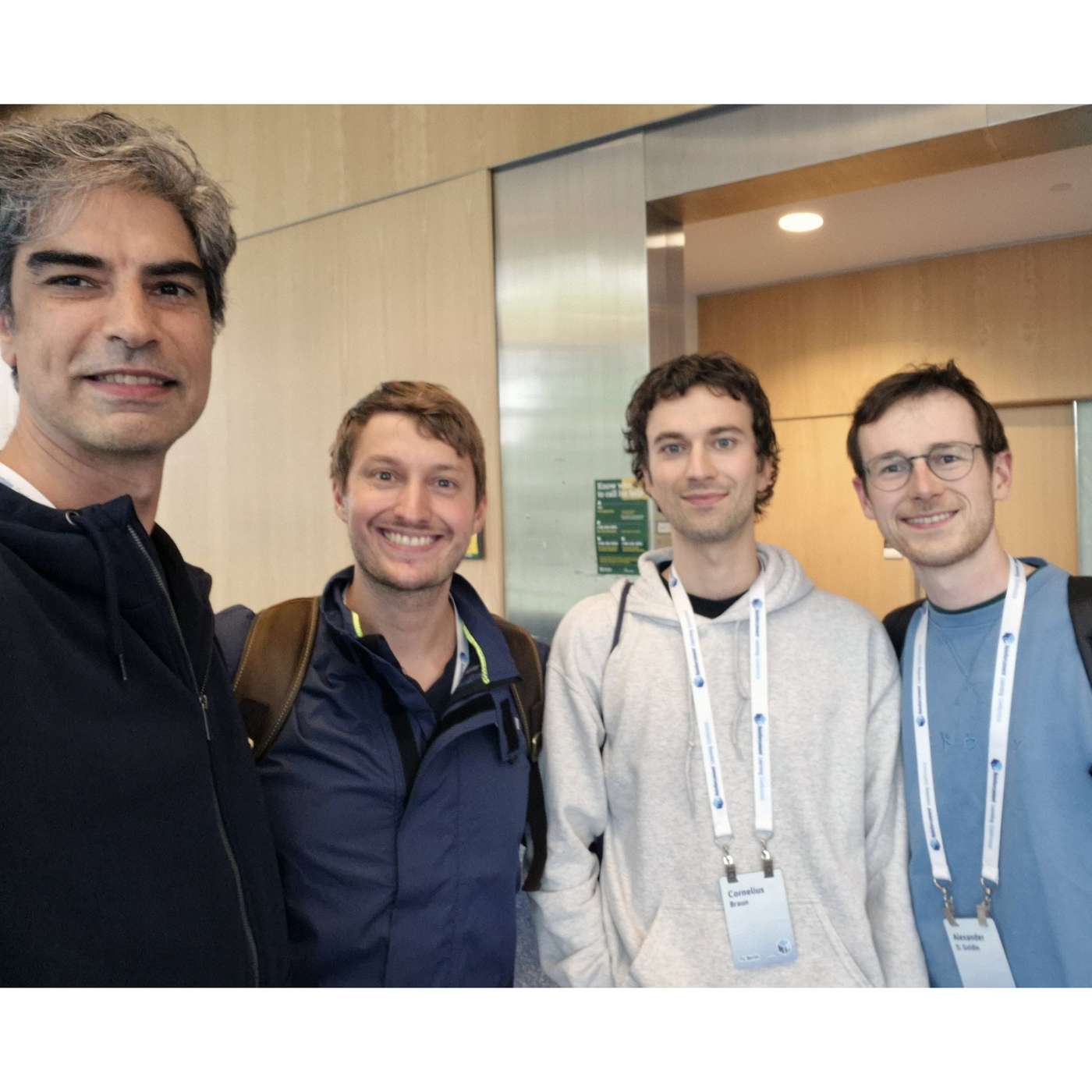

Recorded at Reinforcement Learning Conference 2025 at University of Alberta, Edmonton, Alberta, Canada. Featured References: Lecture on the Oak Architecture, by Rich Sutton; Alberta Plan, by Rich Sutton with Mike Bowling and Patrick Pilarski. Additional References: Jacob Beck on Google Scholar; Alex Goldie on Google Scholar; Cornelius Braun on Google Scholar; Reinforcement Learning Conference.

We caught up with the RLC Outstanding Paper award winners for your listening pleasure. Recorded on location at Reinforcement Learning Conference 2025, at University of Alberta, in Edmonton, Alberta, Canada in August 2025. Featured References, by award category. Empirical Reinforcement Learning Research: Mitigating Suboptimality of Deterministic Policy Gradients in Complex Q-functions, by Ayush Jain, Norio Kosaka, Xinhu Li, Kyung-Min Kim, Erdem Biyik, Joseph J Lim. Applications of Reinforcement Learning: WOFOSTGym: A Crop Simulator for Learning Annual and Perennial Crop Management Strategies, by William Solow, Sandhya Saisubramanian, Alan Fern. Emerging Topics in Reinforcement Learning: Towards Improving Reward Design in RL: A Reward Alignment Metric for RL Practitioners, by Calarina Muslimani, Kerrick Johnstonbaugh, Suyog Chandramouli, Serena Booth, W. Bradley Knox, Matthew E. Taylor. Scientific Understanding in Reinforcement Learning: Multi-Task Reinforcement Learning Enables Parameter Scaling, by Reginald McLean, Evangelos Chatzaroulas, J K Terry, Isaac Woungang, Nariman Farsad, Pablo Samuel Castro.

We caught up with the RLC Outstanding Paper award winners for your listening pleasure. Recorded on location at Reinforcement Learning Conference 2025, at University of Alberta, in Edmonton, Alberta, Canada in August 2025. Featured References, by award category. Scientific Understanding in Reinforcement Learning: How Should We Meta-Learn Reinforcement Learning Algorithms?, by Alexander David Goldie, Zilin Wang, Jakob Nicolaus Foerster, Shimon Whiteson. Tooling, Environments, and Evaluation for Reinforcement Learning: Syllabus: Portable Curricula for Reinforcement Learning Agents, by Ryan Sullivan, Ryan Pégoud, Ameen Ur Rehman, Xinchen Yang, Junyun Huang, Aayush Verma, Nistha Mitra, John P Dickerson. Resourcefulness in Reinforcement Learning: PufferLib 2.0: Reinforcement Learning at 1M steps/s, by Joseph Suarez. Theory of Reinforcement Learning: Deep Reinforcement Learning with Gradient Eligibility Traces, by Esraa Elelimy, Brett Daley, Andrew Patterson, Marlos C. Machado, Adam White, Martha White.

Prof Thomas Akam is a Neuroscientist at the Oxford University Department of Experimental Psychology. He is a Wellcome Career Development Fellow and Associate Professor at the University of Oxford, and leads the Cognitive Circuits research group. Featured References: Brain Architecture for Adaptive Behaviour, by Thomas Akam, RLDM 2025 Tutorial. Additional References: Thomas Akam on Google Scholar; pyPhotometry: open source, Python based, fiber photometry data acquisition; pyControl: open source, Python based, behavioural experiment control; Uncertainty-based competition between prefrontal and dorsolateral striatal systems for behavioral control, by Nathaniel D Daw, Yael Niv, Peter Dayan, 2005; Further analysis of the hippocampal amnesic syndrome: 14-year follow-up study of H. M., by Milner, B., Corkin, S., & Teuber, H. L., 1968; Internally generated cell assembly sequences in the rat hippocampus, by Pastalkova E, Itskov V, Amarasingham A, Buzsáki G, Science, 2008; Multi-disciplinary Conference on Reinforcement Learning and Decision Making 2025.

Stefano V. Albrecht was previously Associate Professor at the University of Edinburgh, and is currently serving as Director of AI at startup Deepflow. He is a Program Chair of RLDM 2025 and is co-author of the MIT Press textbook "Multi-Agent Reinforcement Learning: Foundations and Modern Approaches". Featured References: Multi-Agent Reinforcement Learning: Foundations and Modern Approaches, by Stefano V. Albrecht, Filippos Christianos, Lukas Schäfer, MIT Press, 2024; RLDM 2025: Reinforcement Learning and Decision Making Conference, Dublin, Ireland; EPyMARL: Extended Python MARL framework, https://github.com/uoe-agents/epymarl; Benchmarking Multi-Agent Deep Reinforcement Learning Algorithms in Cooperative Tasks, by Georgios Papoudakis, Filippos Christianos, Lukas Schäfer, and Stefano V. Albrecht.

Professor Satinder Singh of Google DeepMind and U of Michigan is co-founder of RLDM. Here he narrates the origin story of the Reinforcement Learning and Decision Making meeting (not conference). Recorded on location at Trinity College Dublin, Ireland during RLDM 2025. Featured References: RLDM 2025: Multi-disciplinary Conference on Reinforcement Learning and Decision Making (RLDM), June 11-14, 2025 at Trinity College Dublin, Ireland; Satinder Singh on Google Scholar.

Posters and Hallway episodes are short interviews and poster summaries. Recorded at NeurIPS 2024 in Vancouver BC Canada. Featuring Claire Bizon Monroc from Inria: WFCRL: A Multi-Agent Reinforcement Learning Benchmark for Wind Farm Control Andrew Wagenmaker from UC Berkeley: Overcoming the Sim-to-Real Gap: Leveraging Simulation to Learn to Explore for Real-World RL Harley Wiltzer from MILA: Foundations of Multivariate Distributional Reinforcement Learning Vinzenz Thoma from ETH AI Center: Contextual Bilevel Reinforcement Learning for Incentive Alignment Haozhe (Tony) Chen & Ang (Leon) Li from Columbia: QGym: Scalable Simulation and Benchmarking of Queuing Network Controllers

Posters and Hallway episodes are short interviews and poster summaries. Recorded at NeurIPS 2024 in Vancouver BC Canada. Featuring Jonathan Cook from University of Oxford: Artificial Generational Intelligence: Cultural Accumulation in Reinforcement Learning Yifei Zhou from Berkeley AI Research: DigiRL: Training In-The-Wild Device-Control Agents with Autonomous Reinforcement Learning Rory Young from University of Glasgow: Enhancing Robustness in Deep Reinforcement Learning: A Lyapunov Exponent Approach Glen Berseth from MILA: Improving Deep Reinforcement Learning by Reducing the Chain Effect of Value and Policy Churn Alexander Rutherford from University of Oxford: JaxMARL: Multi-Agent RL Environments and Algorithms in JAX

Posters and Hallway episodes are short interviews and poster summaries. Recorded at NeurIPS 2024 in Vancouver BC Canada. Featuring Jiaheng Hu of University of Texas: Disentangled Unsupervised Skill Discovery for Efficient Hierarchical Reinforcement Learning Skander Moalla of EPFL: No Representation, No Trust: Connecting Representation, Collapse, and Trust Issues in PPO Adil Zouitine of IRT Saint Exupery/Hugging Face : Time-Constrained Robust MDPs Soumyendu Sarkar of HP Labs : SustainDC: Benchmarking for Sustainable Data Center Control Matteo Bettini of Cambridge University: BenchMARL: Benchmarking Multi-Agent Reinforcement Learning Michael Bowling of U Alberta : Beyond Optimism: Exploration With Partially Observable Rewards

Abhishek Naik was a student at University of Alberta and Alberta Machine Intelligence Institute, and he just finished his PhD in reinforcement learning, working with Rich Sutton. Now he is a postdoc fellow at the National Research Council of Canada, where he does AI research on Space applications. Featured References Reinforcement Learning for Continuing Problems Using Average Reward Abhishek Naik Ph.D. dissertation 2024 Reward Centering Abhishek Naik, Yi Wan, Manan Tomar, Richard S. Sutton 2024 Learning and Planning in Average-Reward Markov Decision Processes Yi Wan, Abhishek Naik, Richard S. Sutton 2020 Discounted Reinforcement Learning Is Not an Optimization Problem Abhishek Naik, Roshan Shariff, Niko Yasui, Hengshuai Yao, Richard S. Sutton 2019 Additional References Explaining dopamine through prediction errors and beyond, Gershman et al 2024 (proposes Differential-TD-like learning mechanism in the brain around Box 4)

What do RL researchers complain about after hours at the bar? In this "Hot takes" episode, we find out! Recorded at The Pearl in downtown Vancouver, during the RL meetup after a day of NeurIPS 2024. Special thanks to "David Beckham" for the inspiration :)

Posters and Hallway episodes are short interviews and poster summaries. Recorded at RLC 2024 in Amherst MA. Featuring: 0:01 David Radke of the Chicago Blackhawks NHL on RL for professional sports 0:56 Abhishek Naik from the National Research Council on Continuing RL and Average Reward 2:42 Daphne Cornelisse from NYU on Autonomous Driving and Multi-Agent RL 08:58 Shray Bansal from Georgia Tech on Cognitive Bias for Human AI Ad hoc Teamwork 10:21 Claas Voelcker from University of Toronto on Can we hop in general? 11:23 Brent Venable from The Institute for Human & Machine Cognition on Cooperative information dissemination

Posters and Hallway episodes are short interviews and poster summaries. Recorded at RLC 2024 in Amherst MA. Featuring: 0:01 David Abel from DeepMind on 3 Dogmas of RL 0:55 Kevin Wang from Brown on learning variable depth search for MCTS 2:17 Ashwin Kumar from Washington University in St Louis on fairness in resource allocation 3:36 Prabhat Nagarajan from UAlberta on Value overestimation

Posters and Hallway episodes are short interviews and poster summaries. Recorded at RLC 2024 in Amherst MA. Featuring: 0:01 Kris De Asis from Openmind on Time Discretization 2:23 Anna Hakhverdyan from U of Alberta on Online Hyperparameters 3:59 Dilip Arumugam from Princeton on Information Theory and Exploration 5:04 Micah Carroll from UC Berkeley on Changing preferences and AI alignment

Posters and Hallway episodes are short interviews and poster summaries. Recorded at RLC 2024 in Amherst MA. Featuring: 0:01 Hector Kohler from Centre Inria de l'Université de Lille with "Interpretable and Editable Programmatic Tree Policies for Reinforcement Learning" 2:29 Quentin Delfosse from TU Darmstadt on "Interpretable Concept Bottlenecks to Align Reinforcement Learning Agents" 4:15 Sonja Johnson-Yu from Harvard on "Understanding biological active sensing behaviors by interpreting learned artificial agent policies" 6:42 Jannis Blüml from TU Darmstadt on "OCAtari: Object-Centric Atari 2600 Reinforcement Learning Environments" 8:20 Cameron Allen from UC Berkeley on "Resolving Partial Observability in Decision Processes via the Lambda Discrepancy" 9:48 James Staley from Tufts on "Agent-Centric Human Demonstrations Train World Models" 14:54 Jonathan Li from Rensselaer Polytechnic Institute

Posters and Hallway episodes are short interviews and poster summaries. Recorded at RLC 2024 in Amherst MA. Featuring: 0:01 Ann Huang from Harvard on Learning Dynamics and the Geometry of Neural Dynamics in Recurrent Neural Controllers 1:37 Jannis Blüml from TU Darmstadt on HackAtari: Atari Learning Environments for Robust and Continual Reinforcement Learning 3:13 Benjamin Fuhrer from NVIDIA on Gradient Boosting Reinforcement Learning 3:54 Paul Festor from Imperial College London on Evaluating the impact of explainable RL on physician decision-making in high-fidelity simulations: insights from eye-tracking metrics

Finale Doshi-Velez is a Professor at the Harvard Paulson School of Engineering and Applied Sciences. This off-the-cuff interview was recorded at UMass Amherst during the workshop day of RL Conference on August 9th 2024. Host notes: I've been a fan of some of Prof Doshi-Velez' past work on clinical RL and hoped to feature her for some time now, so I jumped at the chance to get a few minutes of her thoughts -- even though you can tell I was not prepared and a bit flustered tbh. Thanks to Prof Doshi-Velez for taking a moment for this, and I hope to cross paths in future for a more in depth interview. References Finale Doshi-Velez Homepage @ Harvard Finale Doshi-Velez on Google Scholar

Great podcast.

Hi Robin! Great Podcast I haven't heard of so far, unfortunately. I think you might easily increase the visibility and popularity of your podcast by giving your episodes a more detailed name. It would also help to choose between episodes as your listeners might not know all the people and their research. Keep up the good work and thank you very much for it! By the way, I found your podcast through a reddit post of you. ;)

Super interesting episode! Keep the good work up!