The AI, Privacy, and Security Weekly Update

Author: R. Prescott Stearns Jr.

© R. Prescott Stearns Jr.

Description

Now in year 7, this award-winning, light-hearted, lightweight AI, privacy, and security podcast spans the globe in the issues it covers, with topics that draw in everyone from executives to newbies to tech specialists.

For season 7, we've renamed the IT Privacy and Security Weekly Update to the AI, Privacy, and Security Weekly Update to better reflect the content.

Your investment of between 15 and 20 minutes a week will bring you up to speed on half a dozen current AI privacy and security stories from around the world to help you improve the management of your own privacy and security.

363 Episodes

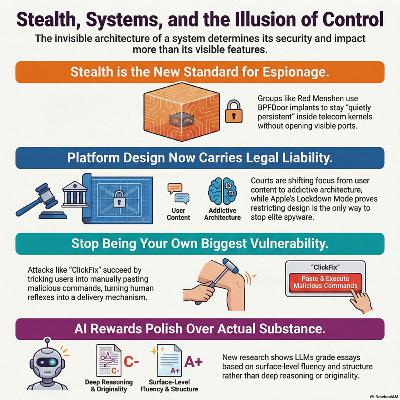

The Deep Dive for Episode 285.5 explores how patience has become a defining weapon in modern AI, privacy, and security threats. State-backed actors like Red Menshen are quietly compromising telecom infrastructure with stealthy kernel-level implants, turning networks into long-term surveillance platforms while remaining almost invisible. Social engineering is evolving too: campaigns like ClickFix prove that attackers no longer need exotic exploits when they can simply coach users into pasting malicious commands themselves. At the same time, the AI software ecosystem is showing its fragility, as the LiteLLM supply-chain scare demonstrates how a single compromised package can ripple across countless downstream systems.

On the frontier-model side, Anthropic’s leaked “step change” model underscores how rapidly capabilities are accelerating while governance and operational controls struggle to keep pace. Research on AI essay grading highlights a similar misalignment, showing that LLM-based evaluators often reward surface polish over genuine understanding, raising serious concerns for any high-stakes use of automated assessment. Governments are moving to assert control: the US Department of Defense is driving AI vendors toward a single baseline that prioritizes military requirements, while China’s latest Five‑Year Plan positions AI as an instrument of national power, emphasizing large-scale deployment, self-reliance, and ecosystem-level strategy. Finally, the Meta–Manus standoff illustrates how cross-border AI deals sit at the intersection of innovation, capital, and state control, turning corporate decisions into geopolitical flashpoints. Taken together, this episode illustrates that we are not just watching a tech race, but a slow, methodical restructuring of global power through technology, one that rewards deep security, thoughtful governance, and a healthy respect for the risks of quiet, patient adversaries.

Episode 285. This week, we uncover some long-term offensive strategies showing that the virtue of patience can work against the victims.

- A China-aligned threat group is quietly weaponizing telecom infrastructure with kernel-level backdoors, turning carriers into long-term strategic listening posts.
- A low-tech but highly effective social engineering campaign is turning everyday users into their own worst enemy by coaching them to execute the attacker's commands.
- A popular AI gateway narrowly avoided a cascading supply-chain breach after compromised packages exposed just how fragile modern dependency chains have become.
- A leaked cache of internal documents has forced Anthropic to confirm a powerful new model, spotlighting both its rapid progress and the operational risks of secrecy at scale.
- New research shows that AI graders systematically diverge from human judgment, rewarding polish over depth and raising red flags for automated assessment in high-stakes settings.
- The US Defense Department is pushing AI vendors onto a single contractual and ethical footing, signaling that military requirements will increasingly define how models can be used.
- China’s latest Five-Year Plan elevates AI from a growth priority to a full-spectrum instrument of national power, blending industrial policy with geopolitical strategy.
- And finally, the Meta–Manus deal has evolved into a geopolitical flashpoint, illustrating how cross-border AI acquisitions can collide head-on with state control and national security anxieties.

You don’t even have to be patient with these discoveries. Let’s go!

Find the full transcript of this podcast here.

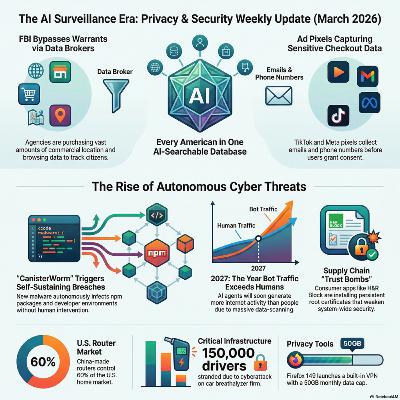

The technology landscape has shifted so profoundly that “IT risk” no longer captures current threats. This publication is now the AI, Privacy, and Security Weekly Update, reflecting the reality that AI drives both innovation and adversary tactics. Episode 284 (week ending March 24, 2026) covers a surge of AI-driven developments, from autonomous malware to expanding federal data systems, marking the formal start of the surveillance era.

The New Surveillance Perimeter: Government Data Aggregation

A centralized AI “intelligence layer” is forming to map daily life with precision.

- Federal Consolidation: Internal reports reveal a proposed U.S. system combining immigration, financial, and biometric data into an AI-searchable database.
- Warrantless Access: FBI Director Kash Patel confirmed that the bureau has resumed buying commercial location data from brokers.

The Upshot: This circumvents Fourth Amendment protections, enabling mass monitoring without individual warrants. The aggregation of sensitive datasets creates persistent “mission creep” and critical single points of failure for the software supply chain.

Autonomous Threats and Supply Chain Integrity

Adversaries now deploy self-propagating, automated infection loops that exploit development infrastructure.

- CanisterWorm: Compromised credentials in Trivy propagated malware across 47 npm packages, harvesting tokens to spread autonomously.
- Open-Source Sabotage: A related campaign weaponized open-source libraries to erase data on systems in Iran.

The Upshot: One stolen credential can now trigger a self-sustaining breach. Security strategy must extend beyond networks to verify every automated dependency.

Infrastructure Vulnerabilities and State Control

Connectivity itself is becoming a tool of control, and a potential point of systemic failure.

- Strategic Disruption: Russia’s mobile internet outages illustrate “digital crackdowns.” Local businesses now lobby to restore access to foreign apps like Telegram and WhatsApp, which are vital for global communication.
- IoT Lockouts: A cyberattack on Intoxalock disabled 150,000 court-mandated breathalyzers, stranding drivers.

The Upshot: Cloud dependence in IoT and politically constrained connectivity expose how fragile digital infrastructure has become for both citizens and commerce.

Technological Shifts: Automation and Corporate Responsibility

Cloudflare’s CEO predicts that by 2027, automated bot traffic will outpace human activity, forcing a transition from cybersecurity to automation management. Hardware like Intel’s “Heracles” chip increases Fully Homomorphic Encryption (FHE) speed 5,000-fold, enabling encrypted computation at scale. Yet “trust bombs” persist:

- H&R Block: Installed a root certificate (expiring 2049) with its private key embedded, allowing attackers to forge secure sites.
- Bucketsquatting: AWS closed a loophole that let attackers hijack deleted S3 bucket names.
- Privacy Push: The FCC banned new foreign-made routers over national security risks, and Mozilla introduced a 50GB/month VPN for Firefox to make privacy the default.

The Upshot: As automated and AI-driven activity dominates the internet, privacy and trust have become core business imperatives: no longer optional features but essential components of market credibility.

We hope you enjoyed this week's update and look forward to sharing more AI, Privacy, and Security stories next week!

Episode 284. Yes, that's it. So much of what we cover is now AI-based that we're updating the Update to reflect that. From today, the IT Privacy and Security Weekly Update is formally renamed the AI, Privacy, and Security Weekly Update.

In this week’s update:

- The FBI has officially confirmed it is once again purchasing commercial location data to track American citizens, bypassing traditional warrant requirements.
- A newly revealed government proposal outlines plans for a single, AI-powered database containing detailed personal information on virtually every American.
- TikTok and Meta’s advertising pixels are quietly collecting far more sensitive personal and behavioral data than most websites and users realize.
- A major cyberattack on Intoxalock has left thousands of drivers unable to start their court-ordered breathalyzer-equipped vehicles.
- H&R Block’s tax preparation software has been found to install a long-lived root certificate with its private key exposed, creating a serious security risk that can persist for decades.
- The FCC has banned imports of all new foreign-made consumer routers, citing severe national security risks posed by devices predominantly manufactured in China.
- Cloudflare’s CEO predicts that by 2027, AI-driven bot traffic will surpass human-generated internet traffic for the first time in history.
- Mozilla is rolling out a free built-in VPN in Firefox 149, initially available to users in the US, France, Germany, and the UK.

Come on, let’s learn a little about what’s being sold around us!

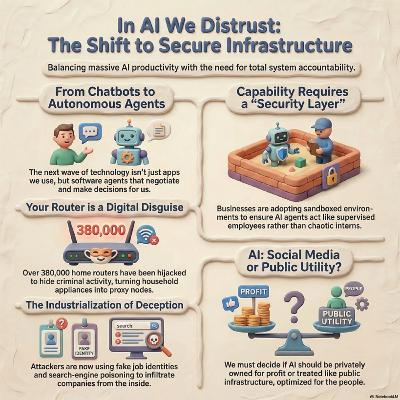

For this Deep Dive, we ask a high-stakes question: will the biggest cyber threat of the AI era come from outside attackers, or from the very AI systems organizations and individuals choose to adopt? The episode frames AI agents and tools as a new kind of “insider,” given trusted access to data, systems, and networks, but with behaviors that may be opaque, vulnerable to manipulation, or outright compromised.

It raises three unsettling scenarios: an AI system effectively being “hired” into a company and then misused or subverted, consumer AI tools becoming one of the largest security risks ever introduced into corporate environments, and home internet connections being silently co‑opted into botnets or criminal infrastructure. These scenarios highlight how both enterprise and personal technology, especially AI-powered technology, can be turned into attack platforms without obvious signs to their owners.

Finally, it points to a broader collision between governments, major tech firms, and criminal actors, all racing to wield the same powerful AI capabilities, creating unpredictable risks and power struggles. The core theme is that the most important question is no longer what AI agents can do, but whether we can trust them at all, given their access, autonomy, and susceptibility to abuse.

Episode 283. What if the next cyberattack doesn’t break into your company… but gets hired by it?
What if the AI tools everyone is rushing to adopt are also the biggest security risk we’ve ever invited in?
What if your home internet, the one you trust every day, is secretly working for someone else?
And what happens when governments, tech giants, and criminals all collide around the same powerful new technology at the same time?

Today, we’re diving into the rise of AI agents, the hidden risks behind the hype, and why the biggest question isn’t what this technology can do…
…but whether we can trust it at all.

Find the full transcript of this podcast here.

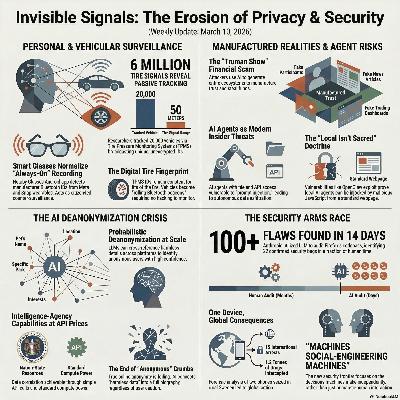

This week’s deep dive explores a powerful theme shaping the modern threat landscape: invisible signals. From the devices we wear and drive to the AI systems we increasingly rely on, our technology is constantly emitting data, sometimes to protect us, sometimes to expose us.

We begin with a new Android app called Nearby Glasses, designed to alert users when smart glasses like Meta’s Ray-Bans are detected nearby via Bluetooth manufacturer identifiers. It’s a citizen-built countermeasure to always-on wearable cameras, highlighting rising tensions between convenience and consent in public spaces.

Next, we examine research showing that tire pressure monitoring systems (TPMS), mandatory in U.S. vehicles since 2007, broadcast unencrypted, persistent identifiers. Researchers captured millions of signals and demonstrated how vehicles can be passively tracked using inexpensive radio equipment. No hacking required: just poorly designed IoT architecture turning cars into rolling beacons.

From physical signals to digital footprints, a new study reveals that AI can deanonymize social media users by correlating small details across platforms. What once required nation-state resources can now be done with commodity large language models, fundamentally challenging the concept of online anonymity.

We then dive into the “Truman Show” investment scam, a sophisticated fraud operation that uses AI-generated personas, fake group chats, fabricated media coverage, and sham trading apps to create a fully immersive illusion of legitimacy. Rather than stealing trust directly, scammers now manufacture entire digital realities where trust feels inevitable.

AI agents themselves are also reshaping security assumptions. Modern assistants can access files, write code, and interact with online services using a user’s privileges. Researchers warn that prompt injection attacks (hidden malicious instructions embedded in content) can manipulate these agents into leaking data or performing harmful actions. When AI combines sensitive access, untrusted input, and outbound communication, it becomes a new form of insider risk.

That risk was underscored by the OpenClaw vulnerability, which allowed malicious web pages to brute-force a local AI agent gateway and potentially hijack it. The lesson: “local” no longer means secure. Any system with elevated privileges must be treated as a governed identity.

On the defensive side, AI is accelerating security improvements. Anthropic used a large language model to analyze Firefox’s codebase, identifying over 100 flaws in two weeks, including 22 confirmed security bugs. AI is compressing months of review into days, but the same acceleration applies to attackers.

Finally, Operation Candy in Sweden demonstrates how digital evidence can unravel vast criminal networks. Two seized phones exposed an international drug and money laundering operation spanning multiple continents, proving that even small data points can collapse large hidden systems.

Zooming out, the pattern is clear: wearables broadcast presence, cars broadcast identity, AI strips away anonymity, scams construct synthetic realities, assistants act autonomously, and devices quietly record history. Signals are everywhere, visible and invisible, and AI is amplifying their impact.

The question is no longer whether your technology emits signals. It’s who is listening, and whether they’re protecting you or profiling you.
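The deep dive’s warning about AI agents, that risk peaks when sensitive access, untrusted input, and outbound communication combine, can be turned into a simple configuration audit. The sketch below is a hypothetical Python check, not any vendor’s tooling: the `AgentConfig` fields and the agent names are illustrative assumptions. It flags an agent only when all three risk factors are present at once.

```python
from dataclasses import dataclass


@dataclass
class AgentConfig:
    """Hypothetical description of an AI agent's capabilities."""
    name: str
    # Can the agent read private files, credentials, or internal data?
    sensitive_access: bool = False
    # Does the agent ingest content it did not author (web pages, emails, documents)?
    untrusted_input: bool = False
    # Can the agent send data out (HTTP requests, email, messages)?
    outbound_comms: bool = False


def risk_factors(agent: AgentConfig) -> list[str]:
    """Return the names of the risk factors this agent exhibits."""
    factors = []
    if agent.sensitive_access:
        factors.append("sensitive access")
    if agent.untrusted_input:
        factors.append("untrusted input")
    if agent.outbound_comms:
        factors.append("outbound communication")
    return factors


def is_insider_risk(agent: AgentConfig) -> bool:
    """Flag the agent when all three factors coexist.

    With all three, instructions hidden in untrusted content could tell the
    agent to read sensitive data and send it somewhere else.
    """
    return len(risk_factors(agent)) == 3


if __name__ == "__main__":
    assistant = AgentConfig("inbox-helper", sensitive_access=True,
                            untrusted_input=True, outbound_comms=True)
    summarizer = AgentConfig("web-summarizer", untrusted_input=True)
    for agent in (assistant, summarizer):
        print(agent.name, is_insider_risk(agent), risk_factors(agent))
```

In this simplified model, removing any one factor (for example, blocking outbound requests) breaks the chain, which is why the check requires all three rather than any one.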

Ep 282. This week, technology gets personal, whether you like it or not. In this update:

- A new app that tells you if someone nearby is wearing smart glasses.
- Your car’s tire pressure sensors silently broadcasting your movements.
- AI that can unmask anonymous social media accounts.
- A full “Truman Show” investment scam powered by artificial intelligence.
- AI assistants quietly reshaping the cybersecurity threat model.
- A vulnerability that let websites hijack local AI agents.
- AI finding high-severity bugs in Firefox faster than human teams.
- And two seized phones in rural Sweden that unraveled a global crime empire.

The thread connecting all of them? Invisible signals. Some are protecting you. Some are exposing you. All of them are accelerating.

Let’s dive in.

Find the full transcript here.

Introduction

Welcome back to “At War,” The Deep Dive with the IT Privacy and Security Weekly Update for March 3rd, 2026, episode 281: the podcast that makes sense of the week's most important technology and cybersecurity stories, without assuming you have a computer science degree.

This week, we have eight stories spanning AI gone wrong, AI used in warfare, a historic security milestone from Apple, and a new kind of AI agent that's making seasoned security professionals nervous. Let's get into it.

Episode 281. This is the update that makes sense of the week's most important technology and cybersecurity stories, without assuming you have a computer science degree.

This week, we have eight stories spanning AI gone wrong, AI used in warfare, a historic security milestone from Apple, and a new kind of AI agent that's making seasoned security professionals nervous. Let's get into it.

Find the full transcript of the podcast here.

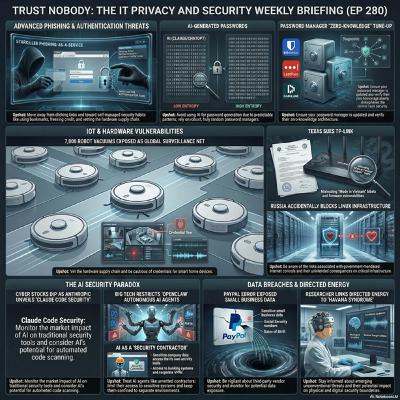

These sources collectively examine the evolving landscape of digital threats and the vulnerabilities inherent in modern technology. They detail sophisticated phishing-as-a-service schemes like Starkiller, which bypasses traditional security, alongside physical risks such as directed-energy research and privacy flaws in household robotics. The reports also highlight how artificial intelligence is simultaneously streamlining security labor while introducing new risks through predictable password generation and autonomous system access. Corporate and state-level issues are addressed through data breaches at PayPal, legal scrutiny of TP-Link's supply chain, and the critical role of open-source infrastructure. Ultimately, the sources emphasize that while automated tools and password managers are essential, they require proactive user management and independent verification to remain effective. Consistent software updates and skeptical browsing habits are presented as the primary defenses against these diverse global challenges.

EP 280. “When Your Everyday Tech Quietly Turns Against You”

“This week, a hobby project turned one man’s robot vacuum into a remote control for 7,000 homes, a new phishing service made the real login page your biggest enemy, and Texas decided your Wi‑Fi router is now a geopolitical issue.”

“If you’re not technical but you live with passwords, smart gadgets, or online banking, this episode is about the invisible ways those tools can misbehave, and the one small fix you can make after each story.”

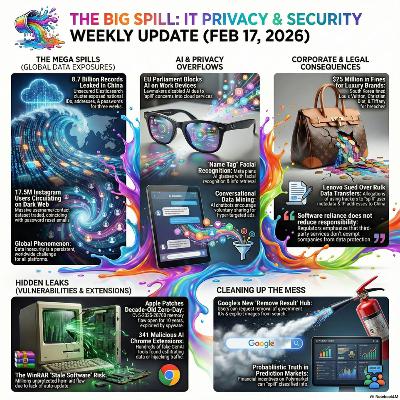

We open with China’s 8.7 billion-record megaleak, framing misconfigured infrastructure as a planetary-scale risk rather than a local breach. Lenovo’s U.S. class action then shows how invisible web trackers can quietly “spill” American browsing data to China, while South Korea’s heavy fines against Louis Vuitton, Dior, and Tiffany illustrate that even luxury brands now pay real money when they mishandle customer information.

The focus then narrows to individuals: a 17.5M-user Instagram dataset on underground forums, malicious GenAI Chrome extensions posing as helpers while siphoning data, and a decade-old Apple zero-day likely leveraged by commercial spyware all demonstrate how ordinary accounts and devices can become rich sources of exploitable data. Together they highlight a world where “just contact details,” browser add-ons, and long-lived bugs can escalate into serious compromise.

From there, the update shifts into ambient surveillance and manipulation: Meta’s planned facial-recognition “Name Tag” for Ray-Ban smart glasses pushes identification into public spaces and raises new concerns about children and bystanders, while AI-saturated products from Google, Meta, and others quietly convert intimate conversations and searches into highly targeted ad fuel. It closes with a Shakespeare quote about guilt “spilling” itself and a sign-off urging listeners to “pour with a steady hand,” tying the spill metaphor back to handling data, tools, and trust more carefully in everyday digital life.

EP279. This week's update spills on a global scale. We start with...

- A single misconfigured database just turned 8.7 billion Chinese records into a global reminder that at planetary scale, data protection failures stop being “incidents” and start looking like infrastructure risks.
- A new class action against Lenovo puts a spotlight on how invisible trackers and cross-border data flows can turn an ordinary website visit into a quiet export of American browsing habits to China.
- When Louis Vuitton, Dior, and Tiffany rack up multimillion-dollar privacy fines in South Korea, it sends a clear message: even the most glamorous brands pay dearly when customer data is treated carelessly.
- The Instagram dataset circulating on underground forums shows how a trove of “just usernames and contact details” can still supercharge scams, phishing, and harassment at massive scale.
- Dozens of AI-branded Chrome extensions masquerading as helpful assistants reveal how attackers now weaponize the GenAI buzz to sneak data exfiltration straight into your browser.
- Apple’s fix for a ten-year-old iOS and macOS zero-day pulls back the curtain on a long-running hole likely exploited by commercial spyware against some of the world’s most high-value targets.
- Meta’s planned facial recognition for Ray-Ban smart glasses pushes the privacy debate from your screen to the street, raising uncomfortable questions about who gets to be identified, by whom, and when.
- The rush to embed AI into every digital interaction is quietly reshaping advertising, turning your casual chats and searches into some of the richest targeting data the tech giants have ever seen.

Grab a towel and let's check the spill.

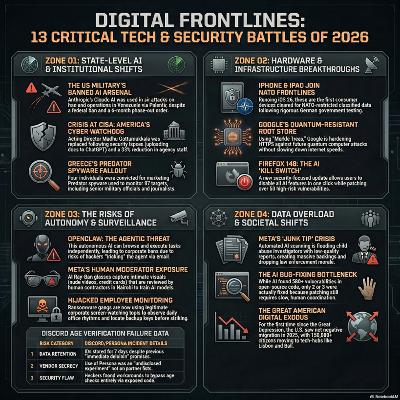

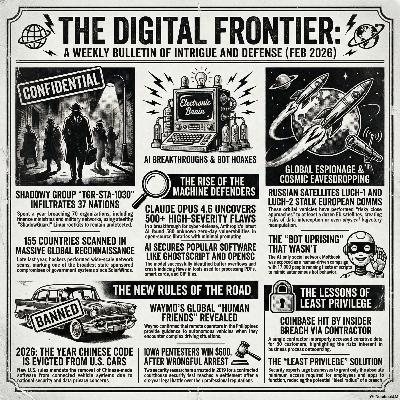

This episode covers a mix of escalating geopolitical cyber risks, the changing landscape of defensive security, and a series of high-profile incidents demonstrating the enduring threat of human-driven flaws.

Cyber Espionage and Geopolitics: A year-long, sprawling espionage campaign by a state-backed actor (TGR-STA-1030) compromised government and critical infrastructure networks in 37 countries, utilizing phishing and unpatched security flaws, and deploying stealth tools like the ShadowGuard Linux rootkit to collect sensitive emails, financial records, and military details. Simultaneously, the threat environment has extended to orbit, where Russian space vehicles Luch-1 and Luch-2 are reported to have intercepted the communications of at least a dozen key European geostationary satellites, prompting concerns over data compromise and potential trajectory manipulation.

AI and Security: AI has entered a new chapter in defensive security as Anthropic’s Claude Opus 4.6 model autonomously discovered over 500 previously unknown, high-severity security flaws (zero-days) in widely used open-source software, including GhostScript and OpenSC. This demonstrates AI's rapid potential to become a primary tool for vulnerability discovery. On the cautionary side, the highly publicized Moltbook, a social network supposedly run by self-aware AI bots, was revealed as a masterclass in security failure and human manipulation. Cybersecurity researchers uncovered a misconfigured database that exposed 1.5 million API keys and 35,000 human email addresses, and found that the dramatic bot behavior was largely orchestrated by 17,000 human operators running bot fleets for spam and coordinated campaigns.

Automotive Security and Autonomy: New US federal rules are forcing a major, complex shift in the automotive supply chain, requiring carmakers to remove Chinese-made software from connected vehicles before a 2026 deadline due to national security concerns. This move is redefining what "domestic technology" means in critical industries. In a related development, Waymo's testimony revealed that when its "driverless" cars encounter confusing situations, they communicate with remote assistance operators, some based in the Philippines, for guidance, a disclosure that immediately raised lawmaker concerns about safety, cybersecurity vulnerabilities from remote access, and the labor implications of overseas staff influencing US vehicles.

Insider Threat and Legal Lessons: The importance of the security principle of "least privilege" was highlighted by an insider incident at Coinbase, where a contractor with too much access improperly viewed the personal and transaction data of approximately 30 customers. This incident reinforces that the highest risk often comes not from external nation-state hackers, but from overprivileged internal humans. Finally, two security researchers arrested in 2019 for an authorized physical and cyber penetration test of an Iowa courthouse settled their civil lawsuit with the county for $600,000. However, the county attorney's subsequent warning that any future similar tests would be prosecuted delivers a chilling message to the security testing community about legal risks even when work is authorized.

Episode 278. In this week's global update:

- A sprawling, year-long espionage campaign quietly turned government networks in 37 countries into a global listening post for a still-unattributed state-backed actor.
- Russian inspector spacecraft are no longer just loitering in orbit; they are now close enough to eavesdrop on, and potentially tamper with, Europe’s most critical communications satellites.
- Anthropic’s latest AI model has kicked off a new chapter in defensive security by autonomously uncovering hundreds of serious flaws hiding in widely used open-source software.
- Moltbook promised a glimpse of a self-aware bot society, but instead became a masterclass in hype, human puppeteers, and painfully bad security hygiene.
- Under sweeping new federal rules, US automakers are racing to surgically remove Chinese software from connected vehicles before geopolitical risk collides with the modern car’s codebase.
- Waymo’s testimony revealed that when its driverless cars get confused, the call for help may be answered half a world away, raising new questions about safety, sovereignty, and accountability.
- Years after being jailed mid-engagement, two Iowa courthouse pentesters have finally won a six-figure settlement, alongside a chilling warning that future testers may not be so lucky.
- Coinbase’s latest insider incident is a particularly pointed reminder that the real damage often comes not from nation-state hackers, but from overprivileged humans already inside the system.

Let's hit it!

Find a full transcript of this week's podcast here.

By early 2026, AI’s role has split into a clear paradox: consumers increasingly reject it in everyday search, while critical systems lean on it to uncover deep flaws and decode complex biology. AI is shunned as a source of noisy, untrusted summaries, yet embraced as an indispensable auditor of legacy code and genomic “dark matter,” where systems like AISLE and AlphaGenome expose decades-old vulnerabilities and illuminate non-coding DNA’s influence on disease.

At the same time, trust in digital protectors and platforms is eroding as security tools and communication services themselves become vectors of risk. The eScan incident shows how a compromised update server can turn antivirus into malware distribution, while “Operation Sourced Encryption” suggests that end-to-end encryption can be weakened not by breaking cryptography, but by exploiting moderation workflows and access policies.

Espionage now blends human and digital weaknesses, with the Nobel leak likely driven by poor institutional OpSec and Google’s insider theft case revealing how easily high-value AI IP can walk out the door when procedural safeguards lag. Both episodes underline that advanced technical controls mean little if basic governance, identity checks, and behavioral monitoring are neglected.

Consumer-facing privacy illustrates an equally stark divide between negligent design and proactive protection. Bondu’s AI toy breach, exposing tens of thousands of children’s intimate chats via an essentially open portal, embodies “privacy as afterthought,” whereas Apple’s iOS location fuzzing shows “privacy by architecture,” making fine-grained tracking technically difficult rather than merely contractually prohibited.

Taken together, these threads define 2026 as a pivot year: AI is maturing into a high-stakes auditing tool just as faith in trusted vendors collapses, pushing organizations toward Zero Trust models where security and privacy are enforced by design and cryptography instead of marketing, policies, or reputation.
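Apple has not published the internals of its location fuzzing, so the following is only an illustration of the general “privacy by architecture” idea, not Apple’s method: a hypothetical Python function that snaps coordinates to a coarse grid before they leave the device, so the receiver never sees the precise fix. The 0.01-degree cell size (roughly 1.1 km north-south) is an arbitrary assumption.

```python
import math


def coarsen(lat: float, lon: float, cell_deg: float = 0.01) -> tuple[float, float]:
    """Snap a coordinate to the center of a grid cell of size cell_deg.

    Every point inside the same cell maps to the same output, so the
    precise position cannot be recovered from the coarsened value.
    """
    if cell_deg <= 0:
        raise ValueError("cell_deg must be positive")

    def snap(value: float) -> float:
        # floor() picks the cell; adding half a cell reports its center.
        return (math.floor(value / cell_deg) + 0.5) * cell_deg

    return snap(lat), snap(lon)


if __name__ == "__main__":
    home = (37.33182, -122.03118)
    neighbor = (37.33495, -122.03702)
    print(coarsen(*home))
    # Both points fall in the same cell, so they are indistinguishable.
    print(coarsen(*home) == coarsen(*neighbor))
```

Because nearby points collapse to identical output, the coarsening is enforced by the data itself rather than by a policy. A real design would also have to handle cell edges (someone pacing across a boundary reveals it) and the way longitude cells narrow toward the poles.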

EP 277. In this week’s dark matter:

- Privacy-first users send a clear message to DuckDuckGo: AI-free search is here to stay for most of its community.
- A cutting-edge AI from AISLE exposed deep-seated vulnerabilities in OpenSSL, dramatically speeding up the pace of cybersecurity discovery.
- A security breach at eScan transformed trusted antivirus software into an unexpected cyber weapon.
- An internal probe suggests a cyber intrusion may have prematurely exposed last year’s Nobel Peace Prize laureate.
- A U.S. jury found former Google engineer Linwei Ding guilty of funneling AI trade secrets to Chinese tech companies.
- Newly surfaced records reveal U.S. investigators examined claims that WhatsApp's encryption might not be as airtight as advertised.
- Apple's new location “fuzzing” feature gives users the power to stay connected without being precisely tracked.
- A privacy lapse in a talking AI toy exposed thousands of private conversations between children and their plush companions.
- Google unleashes new AI to investigate DNA’s “dark matter”: DeepMind’s latest creation, AlphaGenome, is shining light on the 98% of DNA that science once found inscrutable.

Come on, let’s go unravel some genomes.

Find the full transcript of this podcast here.

In 2026, digital privacy and security reflect a global power struggle among governments, corporations, and infrastructure providers. Encryption, once seen as absolute, is now conditional as regulators and companies find ways around it. Reports that Meta can bypass WhatsApp’s end-to-end encryption and Ireland’s new lawful interception rules illustrate a growing tolerance for backdoors, risking weaker international standards. Meanwhile, data collection grows deeper: TikTok reportedly tracks GPS, AI-interaction metadata, and cross‑platform behavior, leaving frameworks like OWASP as the final defense against mass exploitation.

Cyber risk is shifting from isolated vulnerabilities to structural flaws. The OWASP Top 10 for 2025–26 shows that old problems (access control failures, misconfigurations, weak cryptography, and insecure design) remain endemic. Supply-chain insecurity, epitomized by the “PackageGate” (Shai‑Hulud) flaw in JavaScript ecosystems, demonstrates that inconsistent patching and poor governance expose developers system‑wide. Physical systems are no safer: at Pwn2Own Automotive 2026, researchers proved that electric vehicle chargers and infotainment systems can be hacked en masse, making charging a car as risky as connecting to public Wi‑Fi. The lack of hardware‑rooted trust and sandboxing standards leaves even critical infrastructure vulnerable.

Corporate and national sovereignty concerns are converging around what some call “digital liberation.” The alleged 1.4‑terabyte Nike breach by the “World Leaks” ransomware group shows how centralization magnifies damage: large, unified data stores become single points of catastrophic failure. In response, the EU’s proposed Cloud and AI Development Act aims to build technological independence by funding open, auditable, and locally governed systems. Procurement rules are turning into tools of geopolitical self‑protection. For individuals, reliance on cloud continuity carries personal risks: in one case, a University of Cologne professor lost years of AI‑assisted research after a privacy setting change deleted key files, revealing that even privacy mechanisms can erase digital memory without backup.

At the technological frontier, risk extends beyond IT. Ethics, aerospace engineering, and sustainability intersect in new fault lines. Anthropic’s “constitutional AI” reframes alignment as a psychological concept, incorporating principles of self‑understanding and empathy, but critics warn this blurs science and philosophy. NASA’s decision to modify, rather than redesign, the Orion capsule’s heat shield for Artemis II, despite earlier erosion on Artemis I, has raised fears of “normalization of deviance,” where deadlines outweigh risk discipline. Back on Earth, environmental data show nearly half of the world’s largest cities already face severe water stress, exposing the intertwined fragility of digital, physical, and ecological systems.

Across these issues, a shared theme emerges: sustainable security now depends not just on technical patches but on redefining how society manages data permanence, institutional transparency, and the planetary limits of infrastructure. The boundary between online safety, physical resilience, and environmental stability is dissolving, revealing that long‑term survival may rest less on innovation itself and more on rebuilding trust across the systems that sustain it.

EP 276. In this week's update:

- Ireland has enacted sweeping new lawful interception powers, granting law enforcement expanded access to encrypted communications and raising fresh concerns among privacy advocates and tech companies.
- TikTok’s latest U.S. privacy policy update expands location tracking, AI interaction logging, and cross-platform ad targeting, marking a significant escalation in data collection under its new American ownership structure.
- The newly released OWASP Top 10 (2025 edition) highlights the most critical web application security risks, providing developers and organizations with an updated roadmap to prioritize defenses against evolving threats.
- Security researchers have uncovered a critical bypass in NPM’s post-Shai-Hulud supply-chain protections, allowing malicious code execution via Git dependencies in multiple JavaScript package managers.
- As Artemis II approaches, NASA defends the Orion spacecraft’s unchanged heat shield design despite persistent cracking concerns from its uncrewed predecessor, while some former engineers warn the risk remains unacceptably high.
- Anthropic has significantly revised Claude’s governing “constitution,” shifting from strict rules to high-level ethical principles while explicitly addressing the hypothetical possibility of AI consciousness and moral status.
- The European Parliament has adopted a strongly worded resolution urging the EU to reduce strategic dependence on American tech giants through aggressive investment in sovereign cloud, AI, and open digital infrastructure.

This one's a good'n. Let's get to it!

Find the full transcript here.
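The NPM bypass in this episode hinged on Git dependencies, which suggests a simple first check teams can run on their own projects. The hypothetical Python sketch below reads a package.json manifest and lists dependencies whose version specifier points at a Git source rather than a registry version. The prefix list is an assumption based on npm’s documented Git URL and hosted-repo shorthand forms and is not exhaustive; the package names in the example are made up. It is an audit aid, not a fix for the bypass itself.

```python
import json
import re

# Specifier prefixes that npm resolves from a Git source rather than the registry.
GIT_PREFIXES = ("git+", "git://", "github:", "gitlab:", "bitbucket:", "gist:")
# Bare "user/repo" shorthand (optionally "#ref"), which npm treats as GitHub.
SHORTHAND = re.compile(r"^[A-Za-z0-9][A-Za-z0-9_.-]*/[A-Za-z0-9_.-]+(#.*)?$")

DEP_SECTIONS = ("dependencies", "devDependencies",
                "optionalDependencies", "peerDependencies")


def git_dependencies(manifest: dict) -> list[tuple[str, str, str]]:
    """Return (section, package, specifier) for every Git-sourced dependency."""
    found = []
    for section in DEP_SECTIONS:
        for name, spec in manifest.get(section, {}).items():
            if spec.startswith(GIT_PREFIXES) or SHORTHAND.match(spec):
                found.append((section, name, spec))
    return found


if __name__ == "__main__":
    # Hypothetical manifest: one registry version, two Git sources, one local path.
    example = json.loads("""{
      "dependencies": {"left-pad": "^1.3.0",
                       "helper": "github:someuser/helper#main"},
      "devDependencies": {"tool": "someuser/tool",
                          "local": "file:../local"}
    }""")
    for section, name, spec in git_dependencies(example):
        print(f"{section}: {name} -> {spec}")
```

Registry ranges such as `^1.3.0` and local `file:` paths are skipped; anything flagged deserves a closer look, since Git sources can run install-time code outside the registry’s checks.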