Data Science Tech Brief By HackerNoon

Author: HackerNoon

© 2026 HackerNoon

Description

Learn the latest data science updates in the tech world.

176 Episodes

This story was originally published on HackerNoon at: https://hackernoon.com/clarifying-the-difference-between-data-strategy-analytics-and-ai-governance.

This article examines the structural distinctions between Data & Analytics (D&A) Strategy, D&A Governance, Data Governance, and AI Governance within enterprise

Check more stories related to data-science at: https://hackernoon.com/c/data-science.

You can also check exclusive content about #data-governance, #ai-governance, #responsible-ai, #data-strategy, #ethical-ai, #ai-trust-and-safety, #enterprise-information-systems, #data-analytics-strategy, and more.

This story was written by: @susmit82. Learn more about this writer by checking @susmit82's about page, and for more stories, please visit hackernoon.com.

Organizations often struggle to scale analytics and AI because strategy and governance are blurred.

This article clarifies four distinct but connected layers:

D&A Strategy defines where and why data, analytics, and AI create business value.

D&A Governance defines how decisions are made, prioritized, and tracked at the enterprise level.

Data Governance ensures data can be trusted through ownership, quality, and compliance controls.

AI Governance ensures AI decisions can be trusted through risk, explainability, and lifecycle controls.

The paper proposes a hierarchical framework aligning these layers to prevent pilot sprawl, reduce AI risk, and enable scalable, value-driven analytics across industries such as mining, banking, healthcare, retail, and energy.

This story was originally published on HackerNoon at: https://hackernoon.com/the-store-everything-cloud-model-is-breaking-under-modern-ai-workloads.

The 'Store Everything' cloud model is dead. Discover how AI Edge Proxies cut storage costs by 60% and solve industrial latency. The era of Smart Data is here.

Check more stories related to data-science at: https://hackernoon.com/c/data-science.

You can also check exclusive content about #data-observability, #ai-observability, #modern-software-architecture, #scalable-software-architecture, #industry-4.0, #cloud-cost-optimization, #edge-ai, #hackernoon-top-story, and more.

This story was written by: @mannkamal. Learn more about this writer by checking @mannkamal's about page, and for more stories, please visit hackernoon.com.

The cloud-first observability model is collapsing under latency, cost, and data overload. This article argues for AI edge proxies that filter noise, act in real time, and send only high-value insights upstream.

This story was originally published on HackerNoon at: https://hackernoon.com/ai-belongs-inside-dataops-not-just-at-the-end-of-the-pipeline.

AI shouldn’t sit at the end of the data pipeline. Learn why AI-augmented DataOps is essential for reliability, governance, and scale.

Check more stories related to data-science at: https://hackernoon.com/c/data-science.

You can also check exclusive content about #dataops-augmented-ai, #ai-in-data-engineering, #data-reliability-automation, #ai-driven-data-governance, #dataops-automation-at-scale, #upstream-ai-data-operations, #ai-readiness-data-pipelines, #good-company, and more.

This story was written by: @dataops. Learn more about this writer by checking @dataops's about page, and for more stories, please visit hackernoon.com.

As AI drives higher demands for speed, scale, and governance, human-driven data operations no longer hold up. This article argues that AI must move upstream into DataOps, where it can automate enforcement, detect anomalies, maintain documentation, and evaluate readiness continuously. AI-augmented DataOps doesn’t replace engineers—it frees them to design better systems while improving reliability and trust at enterprise scale.

This story was originally published on HackerNoon at: https://hackernoon.com/stop-torturing-your-data-how-to-automate-rigor-with-ai.

Why improvisation kills research, and how to use AI to enforce methodological discipline.

Check more stories related to data-science at: https://hackernoon.com/c/data-science.

You can also check exclusive content about #data-science, #research-methodology, #ai-prompt, #statistics, #academic-writing, #analyst-strategist, #precommitment-strategy, #data-analysis, and more.

This story was written by: @huizhudev. Learn more about this writer by checking @huizhudev's about page, and for more stories, please visit hackernoon.com.

Improvisation in data analysis leads to bias and "p-hacking." This article introduces a "Data Analysis Strategist" AI prompt that forces researchers to pre-commit to a rigorous roadmap. It acts as a flight plan, ensuring validity, checking assumptions, and preventing the "Garden of Forking Paths" effect.

This story was originally published on HackerNoon at: https://hackernoon.com/minimum-incident-lineage-mil-a-run-level-evidence-standard-for-reproducible-data-incidents.

Traditional data lineage shows dependencies—not proof. Learn how Minimum Incident Lineage helps teams reproduce, audit, and resolve data incidents faster.

Check more stories related to data-science at: https://hackernoon.com/c/data-science.

You can also check exclusive content about #data-engineering, #minimum-incident-lineage, #data-lineage, #big-data-analytics, #data-quality, #data-observability, #data-pipeline-debugging, #incident-response-analytics, and more.

This story was written by: @anushakovi. Learn more about this writer by checking @anushakovi's about page, and for more stories, please visit hackernoon.com.

Minimum Incident Lineage (MIL) is the minimal run-level evidence you must capture for each dataset published. It makes incidents replayable, auditable, and fast to triage, without storing raw data.

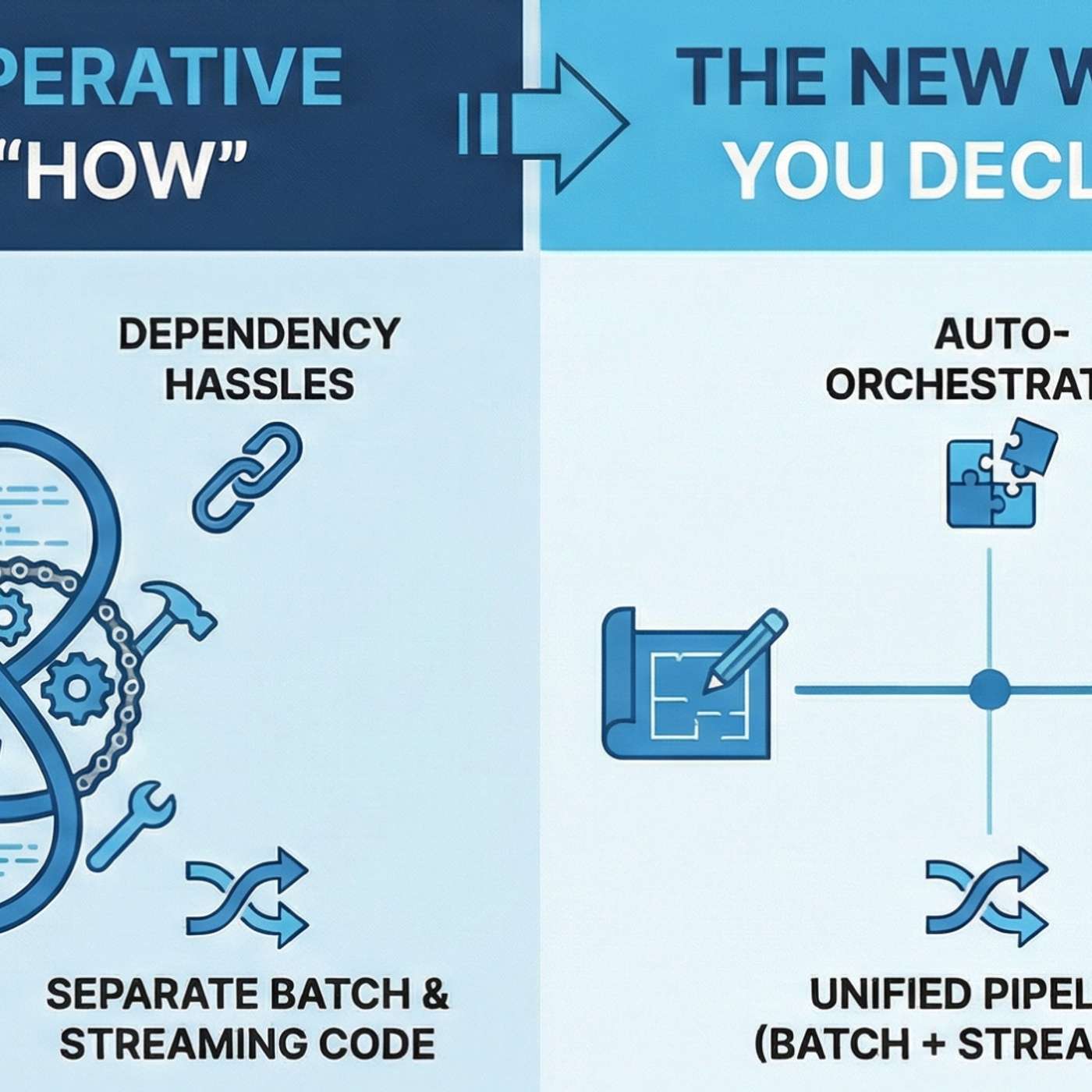

This story was originally published on HackerNoon at: https://hackernoon.com/5-ways-spark-41-moves-data-engineering-from-manual-pipelines-to-intent-driven-design.

Apache Spark 4.1 introduces significant architectural efficiencies designed to simplify Change Data Capture (CDC) and lifecycle management.

Check more stories related to data-science at: https://hackernoon.com/c/data-science.

You can also check exclusive content about #data-engineering, #declarative-programming, #apache-spark, #declarative-pipelines, #data-quality, #change-data-capture, #databricks, #spark-4.1, and more.

This story was written by: @amalik. Learn more about this writer by checking @amalik's about page, and for more stories, please visit hackernoon.com.

Apache Spark 4.1 is moving away from the role of "orchestration plumber" and toward something far more strategic. We are entering an era of declarative clarity that promises to reduce pipeline development time by up to 90%. Materialized View (MV) is the end of "Stale Data" anxiety.

This story was originally published on HackerNoon at: https://hackernoon.com/beyond-prediction-econometric-data-science-for-measuring-true-business-impact.

Econometric methodologies model counterfactual consequences upfront so that an analyst can predict what would happen without intervention.

Check more stories related to data-science at: https://hackernoon.com/c/data-science.

You can also check exclusive content about #data-science, #analytics, #econometric-data-science, #business-impact, #real-world-constraints, #machine-learning, #business-strategies, #contemporary-econometrics, and more.

This story was written by: @dharmateja. Learn more about this writer by checking @dharmateja's about page, and for more stories, please visit hackernoon.com.

Econometric methodologies model counterfactual consequences upfront so that an analyst can predict what would happen without intervention. This is crucial for determining actual ROI and avoiding misallocation of resources. Econometric data science provides the resources to deliver on this challenge.

This story was originally published on HackerNoon at: https://hackernoon.com/designing-economic-intelligence-econometrics-first-approaches-in-data-science.

Economic intelligence is embedding a structured way of reasoning into decision systems.

Check more stories related to data-science at: https://hackernoon.com/c/data-science.

You can also check exclusive content about #data-science, #analytics, #economic-intelligence, #econometrics, #analytics-outputs, #counterfactual-evaluation, #interoperability, #economics, and more.

This story was written by: @dharmateja. Learn more about this writer by checking @dharmateja's about page, and for more stories, please visit hackernoon.com.

Economic intelligence is embedding a structured way of reasoning into decision systems. Econometrics is a logical springboard for these systems since it regards decisions as interventions in an economic context.

This story was originally published on HackerNoon at: https://hackernoon.com/from-forecasting-to-bi-inside-shravanthi-ashwin-kumars-data-driven-finance-playbook.

A deep dive into Shravanthi Ashwin Kumar’s data-driven approach to financial analytics, forecasting, and tech-powered decision-making AI!

Check more stories related to data-science at: https://hackernoon.com/c/data-science.

You can also check exclusive content about #data-driven-financial-decision, #financial-analytics-automation, #sql-python-finance-analytics, #finance-business-intelligence, #financial-modeling, #financial-forecasting, #finance-kpi-dashboard, #good-company, and more.

This story was written by: @sanya_kapoor. Learn more about this writer by checking @sanya_kapoor's about page, and for more stories, please visit hackernoon.com.

Shravanthi Ashwin Kumar exemplifies the new generation of finance professionals blending analytics, automation, and strategic insight. With expertise in financial modeling, forecasting, risk analysis, and BI tools like SQL, Python, Power BI, and Tableau, she delivers measurable impact—boosting planning accuracy, reducing costs, and enabling smarter, faster data-driven decisions across industries.

This story was originally published on HackerNoon at: https://hackernoon.com/causal-thinking-in-the-age-of-big-data-modern-econometrics-for-data-scientists.

Predictive models now rule over modern analytics stacks from recommendation engines to demand forecasting and fraud detection.

Check more stories related to data-science at: https://hackernoon.com/c/data-science.

You can also check exclusive content about #data-science, #analytics, #economics, #predictive-models, #modern-econometrics, #data-scientists, #machine-learning, #counterfactual-thinking, and more.

This story was written by: @dharmateja. Learn more about this writer by checking @dharmateja's about page, and for more stories, please visit hackernoon.com.

Predictive models now rule over modern analytics stacks from recommendation engines to demand forecasting and fraud detection. But as data scientists increasingly impact policy and strategy, the inherent limitation of prediction-only thinking has become obvious.

This story was originally published on HackerNoon at: https://hackernoon.com/data-pipeline-testing-the-3-levels-most-teams-miss.

Dashboards don’t represent actual state, models degrade unnoticed, and incidents show up as “weird numbers” instead of errors.

Check more stories related to data-science at: https://hackernoon.com/c/data-science.

You can also check exclusive content about #data-engineering, #data-quality, #data-pipelines, #data-infrastructure, #data-ops, #data-pipeline-testing, #quality-assurance, #data-testing-is-different, and more.

This story was written by: @timonovid_ir5em1fo. Learn more about this writer by checking @timonovid_ir5em1fo's about page, and for more stories, please visit hackernoon.com.

Most data teams test code but not data.

That’s why dashboards don’t represent actual state, models degrade unnoticed, and incidents show up as “weird numbers” instead of errors.

This article breaks down **three levels of data testing** — schema, business logic, and contracts — and shows how to integrate them into CI/CD and monitoring without turning your data stack into a mess.
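The three levels named above can be sketched in a few lines of plain Python. This is an illustrative sketch only, not the article's implementation: the sample rows, the column names, and the thresholds are all hypothetical, and a real pipeline would more likely run checks like these through a dedicated testing framework in CI/CD.

```python
# Hypothetical sample rows standing in for a pipeline's output table.
ROWS = [
    {"order_id": 1, "amount": 120.0, "currency": "USD"},
    {"order_id": 2, "amount": -5.0, "currency": "USD"},
    {"order_id": 3, "amount": 40.0, "currency": None},
]

# Hypothetical expected schema: column name -> Python type.
EXPECTED_SCHEMA = {"order_id": int, "amount": float, "currency": str}


def check_schema(rows, schema):
    """Level 1: every row has the expected columns with the expected types."""
    errors = []
    for i, row in enumerate(rows):
        for column, expected_type in schema.items():
            if column not in row:
                errors.append(f"row {i}: missing column {column}")
            elif not isinstance(row[column], expected_type):
                errors.append(f"row {i}: {column} is not {expected_type.__name__}")
    return errors


def check_business_logic(rows):
    """Level 2: values make sense for the domain (here: no negative amounts)."""
    return [
        f"row {i}: negative amount"
        for i, row in enumerate(rows)
        if isinstance(row.get("amount"), (int, float)) and row["amount"] < 0
    ]


def check_contract(rows, min_rows, max_null_rate, column):
    """Level 3: guarantees promised to downstream consumers (volume, null rate)."""
    errors = []
    if len(rows) < min_rows:
        errors.append(f"expected at least {min_rows} rows, got {len(rows)}")
    null_rate = sum(row.get(column) is None for row in rows) / max(len(rows), 1)
    if null_rate > max_null_rate:
        errors.append(f"{column} null rate {null_rate:.2f} exceeds {max_null_rate:.2f}")
    return errors


schema_errors = check_schema(ROWS, EXPECTED_SCHEMA)
logic_errors = check_business_logic(ROWS)
contract_errors = check_contract(ROWS, min_rows=3, max_null_rate=0.1, column="currency")
```

Each function returns a list of human-readable failures, so a CI job or a monitor could fail the run whenever any list is non-empty instead of letting the problem surface later as "weird numbers".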

This story was originally published on HackerNoon at: https://hackernoon.com/hsm-the-original-tiering-engine-behind-mainframes-cloud-and-s3.

From mainframe DFSMShsm to cloud storage classes: a practical history of HSM, ILM, tiering, recall, and the products that shaped modern archives.

Check more stories related to data-science at: https://hackernoon.com/c/data-science.

You can also check exclusive content about #data-tiering, #hsm-vs-ilm, #hierarchical-storage-mgmt, #data-lifecycle-management, #tiered-data-storage, #object-storage, #object-storage-lifecycle, #hackernoon-top-story, and more.

This story was written by: @carlwatts. Learn more about this writer by checking @carlwatts's about page, and for more stories, please visit hackernoon.com.

Hierarchical Storage Management (HSM) is the storage world’s oldest magic trick. It makes expensive storage look bigger by quietly moving data to cheaper tiers. HSM has five moving parts: a primary tier, secondary tiers, a policy engine, a recall mechanism, and a migration engine.

This story was originally published on HackerNoon at: https://hackernoon.com/navigating-architectural-trade-offs-at-scale-to-meet-ai-goals-in-2026.

Success in 2026 is predicated on having total clarity of the underlying data infrastructure.

Check more stories related to data-science at: https://hackernoon.com/c/data-science.

You can also check exclusive content about #data-science, #big-data, #data-analytics, #snowflake, #architectural-trade-offs, #ai-goals-in-2026, #petabyte-scale, #low-code, and more.

This story was written by: @anupmoncy. Learn more about this writer by checking @anupmoncy's about page, and for more stories, please visit hackernoon.com.

Success in 2026 is predicated on having total clarity of the underlying data infrastructure. This requires a stable and secure foundation that uses auto-scaling compute and workload isolation.

This story was originally published on HackerNoon at: https://hackernoon.com/will-ai-take-your-job-the-data-tells-a-very-different-story.

Historically, technological revolutions have triggered similar waves of anxiety, only for the long-term outcomes to demonstrate a more optimistic narrative.

Check more stories related to data-science at: https://hackernoon.com/c/data-science.

You can also check exclusive content about #data-science, #analytics, #artificial-intelligence, #technology, #generative-ai, #data-analysis, #ai-job-loss, #ai-job-takeover, and more.

This story was written by: @dharmateja. Learn more about this writer by checking @dharmateja's about page, and for more stories, please visit hackernoon.com.

Artificial intelligence (AI) raises an urgent question for workers, businesses, and policymakers. Will AI advancements ultimately lead to widespread unemployment? Historically, technological revolutions have triggered similar waves of anxiety, only for the long-term outcomes to demonstrate a more optimistic narrative.

This story was originally published on HackerNoon at: https://hackernoon.com/you-dont-need-an-api-for-everything-sometimes-scraping-is-enough.

You don't always need an API. Sometimes scraping public pages is the simplest, fastest way to turn repetitive browsing into usable data.

Check more stories related to data-science at: https://hackernoon.com/c/data-science.

You can also check exclusive content about #web-scraping, #automation, #developer-tools, #productivity, #programming, #wait-for-the-api, #api, #api-development, and more.

This story was written by: @fromight. Learn more about this writer by checking @fromight's about page, and for more stories, please visit hackernoon.com.

APIs are useful, but they're not always available, complete, or worth the overhead. If the data you need is already public and you're manually checking a website, scraping is simply a way to automate that behavior. Small, low-frequency scrapers can turn repetitive browsing into structured data, save time, and reduce cognitive load, making scraping a practical productivity tool rather than a heavy engineering decision.
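As a rough illustration of how small such a scraper can be, here is a sketch using only Python's standard library. The HTML snippet and the `price` class name are hypothetical stand-ins for a public page you already check by hand; the article's own code may look quite different.

```python
from html.parser import HTMLParser


class PriceParser(HTMLParser):
    """Collect the text of every element whose class attribute is 'price'."""

    def __init__(self):
        super().__init__()
        self._in_price = False
        self.prices = []

    def handle_starttag(self, tag, attrs):
        # attrs is a list of (name, value) pairs for the opening tag.
        if dict(attrs).get("class") == "price":
            self._in_price = True

    def handle_endtag(self, tag):
        # Any closing tag ends the current price element in this simple sketch.
        self._in_price = False

    def handle_data(self, data):
        if self._in_price and data.strip():
            self.prices.append(data.strip())


# Hypothetical page content; a real script would download this instead.
PAGE = (
    '<ul>'
    '<li>Widget <span class="price">$19.99</span></li>'
    '<li>Gadget <span class="price">$4.50</span></li>'
    '</ul>'
)

parser = PriceParser()
parser.feed(PAGE)
prices = parser.prices
```

In practice the page text would come from something like `urllib.request.urlopen`, fetched at a low frequency and in line with the site's terms and robots rules.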

This story was originally published on HackerNoon at: https://hackernoon.com/how-to-use-propensity-score-matching-to-measure-down-stream-causal-impact-of-an-event.

How can we know our ads are making the impact we aim for? What if targeted ads are not working the way we want them to?

Check more stories related to data-science at: https://hackernoon.com/c/data-science.

You can also check exclusive content about #data-science, #data-analytics, #statistics, #analytics, #advertising, #big-data-analytics, #hackernoon-top-story-tag, #propensity-score-matching, and more.

This story was written by: @dharmateja. Learn more about this writer by checking @dharmateja's about page, and for more stories, please visit hackernoon.com.

Ad exposure is not randomly assigned – algorithms may show ads more to highly active users. As a result, “unobservable factors make exposure endogenous,” meaning there are hidden biases in who sees the ad. This is where propensity score matching (PSM) comes in – it’s a statistical way to create apples-to-apples comparisons.
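The matching idea can be sketched in pure Python. This is a minimal sketch under stated assumptions, not the article's method: the toy data, the outcome formula (a true ad effect of 1.0), and the function names are all hypothetical. A real analysis would use an established statistics library, check covariate balance after matching, and note that matching only adjusts for observed covariates.

```python
import math

# Hypothetical toy data: (activity, saw_ad). More active users see the ad more often.
UNITS = [(0.1, 0), (0.2, 0), (0.3, 0), (0.3, 1), (0.4, 0), (0.5, 0), (0.5, 1),
         (0.6, 1), (0.7, 0), (0.7, 1), (0.8, 1), (0.9, 0), (0.9, 1)]
features = [[activity] for activity, _ in UNITS]
treated = [saw_ad for _, saw_ad in UNITS]
# Assumed outcome model for the demo only: activity drives spend, and the ad adds 1.0.
outcomes = [2.0 * activity + 1.0 * saw_ad for activity, saw_ad in UNITS]


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def fit_propensity(features, treated, lr=0.1, epochs=2000):
    """Fit logistic regression P(treated | features) with plain gradient descent."""
    n, n_features = len(features), len(features[0])
    weights, bias = [0.0] * n_features, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * n_features, 0.0
        for x, t in zip(features, treated):
            p = sigmoid(bias + sum(w * xi for w, xi in zip(weights, x)))
            for j, xi in enumerate(x):
                grad_w[j] += (p - t) * xi
            grad_b += p - t
        weights = [w - lr * g / n for w, g in zip(weights, grad_w)]
        bias -= lr * grad_b / n
    return lambda x: sigmoid(bias + sum(w * xi for w, xi in zip(weights, x)))


def matched_att(features, treated, outcomes, score):
    """Pair each treated unit with the control whose propensity score is closest
    (matching with replacement) and average the outcome differences."""
    scores = [score(x) for x in features]
    controls = [i for i, t in enumerate(treated) if t == 0]
    diffs = []
    for i, t in enumerate(treated):
        if t == 1:
            j = min(controls, key=lambda c: abs(scores[c] - scores[i]))
            diffs.append(outcomes[i] - outcomes[j])
    return sum(diffs) / len(diffs)


def mean(values):
    return sum(values) / len(values)


# Naive comparison mixes the ad effect with the activity gap between groups.
naive = (mean([y for y, t in zip(outcomes, treated) if t == 1])
         - mean([y for y, t in zip(outcomes, treated) if t == 0]))
att = matched_att(features, treated, outcomes, fit_propensity(features, treated))
```

On this toy data the naive difference overstates the effect because exposed users are more active to begin with, while the matched estimate lands close to the assumed true effect of 1.0.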

This story was originally published on HackerNoon at: https://hackernoon.com/how-to-analyze-call-sentiment-with-open-source-nlp-libraries.

Unlock call sentiment analysis using open-source NLP. Discover how to analyze customer emotions, improve service, and gain valuable insights from voice data.

Check more stories related to data-science at: https://hackernoon.com/c/data-science.

You can also check exclusive content about #nlp, #natural-language-processing, #call-sentiment, #open-source-nlp, #customer-service, #call-sentiment-analysis, #ai-for-customer-support, #sentiment-analysis, and more.

This story was written by: @devinpartida. Learn more about this writer by checking @devinpartida's about page, and for more stories, please visit hackernoon.com.

Call sentiment analysis uses natural language processing (NLP) to surface those signals at scale. Sentiment signals often fall into three broad categories: polarity, intensity and temporal shifts. When applied across large call volumes, sentiment metrics reveal systemic trends that individual call reviews rarely uncover.

This story was originally published on HackerNoon at: https://hackernoon.com/how-bayesian-tail-risk-modeling-can-save-your-retail-business-marketing-budget.

Why average ROI fails. Learn how distributional and tail-risk modeling protects marketing campaigns from catastrophic losses using Bayesian methods.

Check more stories related to data-science at: https://hackernoon.com/c/data-science.

You can also check exclusive content about #data-science, #statistics, #machine-learning, #retail-marketing, #e-commerce, #digital-marketing, #marketing, #hackernoon-top-stories, and more.

This story was written by: @dharmateja. Learn more about this writer by checking @dharmateja's about page, and for more stories, please visit hackernoon.com.

E-commerce marketing is often represented in terms of Return on Investment (ROI). But looking only at average ROI can be very misleading. Marketing outcomes can have "fat tails": rare but extreme events on the downside, which conventional models underestimate.
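To make the point concrete, here is a small sketch comparing two hypothetical campaigns with the same average ROI but very different downside tails. It uses expected shortfall (the mean of the worst outcomes) as one common tail-risk summary. The numbers are invented for illustration, and in a Bayesian workflow the samples would typically be posterior predictive draws rather than hand-written lists.

```python
def expected_shortfall(samples, alpha=0.05):
    """Average of the worst `alpha` fraction of outcomes (at least one sample)."""
    ordered = sorted(samples)
    k = max(1, int(len(ordered) * alpha))
    return sum(ordered[:k]) / k


def average(samples):
    return sum(samples) / len(samples)


# Hypothetical ROI samples per campaign run (0.10 means a 10% return).
steady = [0.10] * 20             # same modest return every time
risky = [0.20] * 19 + [-1.80]    # usually better, occasionally catastrophic

steady_mean, risky_mean = average(steady), average(risky)
steady_tail = expected_shortfall(steady)
risky_tail = expected_shortfall(risky)
```

Both campaigns average about a 10% return, yet the worst 5% of outcomes for the second one is a loss of 180%, which an average-only view would never show.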

This story was originally published on HackerNoon at: https://hackernoon.com/architecting-trustworthy-healthcare-data-platforms-using-declarative-pipelines.

In Digital Healthcare data platforms, data quality is no longer a nice-to-have — it is a hard requirement.

Check more stories related to data-science at: https://hackernoon.com/c/data-science.

You can also check exclusive content about #databricks, #data-science, #healthcare-data-platforms, #declarative-pipelines, #declarative-data-quality, #production-grade-pipelines, #healthcare-etl-pipelines, #bad-data, and more.

This story was written by: @hacker95231466. Learn more about this writer by checking @hacker95231466's about page, and for more stories, please visit hackernoon.com.

In Digital Healthcare data platforms, data quality is no longer a nice-to-have — it is a hard requirement.

This story was originally published on HackerNoon at: https://hackernoon.com/when-ab-tests-arent-possible-causal-inference-can-still-measure-marketing-impact.

Learn how to measure marketing impact without A/B tests using causal inference, Diff-in-Diff, synthetic control, and GeoLift.

Check more stories related to data-science at: https://hackernoon.com/c/data-science.

You can also check exclusive content about #ab-testing, #data-analytics, #data-analysis, #causal-inference, #ab-testing-alternatives, #geolift, #diff-in-diff, #causal-inference-marketing, and more.

This story was written by: @radiokocmoc_l45iej08. Learn more about this writer by checking @radiokocmoc_l45iej08's about page, and for more stories, please visit hackernoon.com.

In many real‑world settings, running a randomized experiment is simply impossible. We’ll walk through Diff‑in‑Diff, Synthetic Control, and Meta’s GeoLift. We show how to prep your data, and provide ready‑to‑run code.
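For orientation, the simplest two-group, two-period form of Diff-in-Diff fits in a few lines. This is a sketch with hypothetical sales figures, not the article's ready-to-run code. It relies on the usual parallel-trends assumption, and it does not cover Synthetic Control or GeoLift.

```python
from statistics import mean


def diff_in_diff(treated_pre, treated_post, control_pre, control_post):
    """Change in the treated group minus change in the control group."""
    treated_change = mean(treated_post) - mean(treated_pre)
    control_change = mean(control_post) - mean(control_pre)
    return treated_change - control_change


# Hypothetical weekly sales for regions with and without the campaign.
treated_pre = [100, 102, 98]
treated_post = [120, 118, 122]
control_pre = [80, 82, 78]
control_post = [90, 88, 92]

effect = diff_in_diff(treated_pre, treated_post, control_pre, control_post)
print(f"Estimated campaign effect: {effect}")
```

Treated regions rose by 20 and control regions by 10, so the estimate attributes the remaining 10 to the campaign, provided both groups would have followed the same trend without it.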