Tech, Policy, and our Lives

Author: Alexander Titus

© Alexander Titus

Description

Tech, Policy, and our Lives, brought to you by The Connected Ideas Project, is a podcast about the co-evolution of emerging tech and public policy, with a particular love for AI and biotech, but certainly not limited to those two. The podcast is created by Alexander Titus, Founder of In Vivo Group and The Connected Ideas Project, who has spent his career weaving between industry, academia, and public service. Our hosts are two AI-generated moderators (and occasionally human-generated humans), and we're leveraging the very technology we're exploring to explore it. This podcast is about the people, the tech, and ultimately, the public policy that shapes all of our lives.

www.connectedideasproject.com

60 Episodes

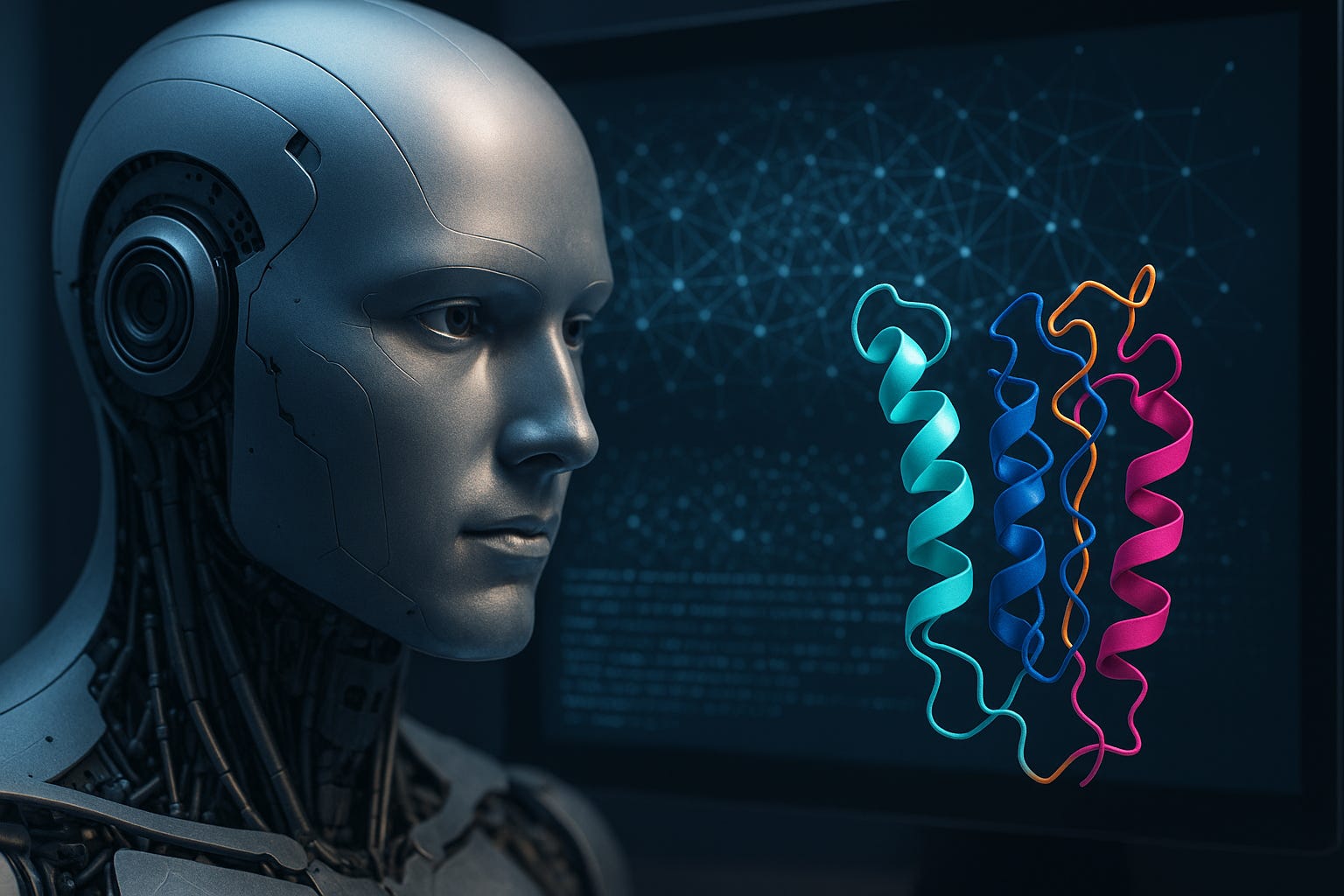

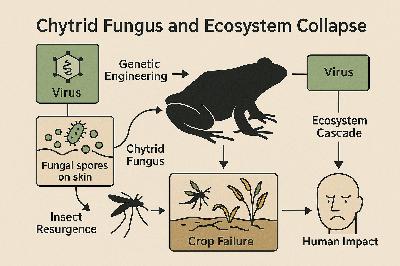

A few months ago, when we first started talking about the Science of Responsible Innovation at The Connected Ideas Project, I kept coming back to a simple question:

How do we know?

How do we know whether a technology is actually as powerful, or as dangerous, as we imagine?

How do we know whether our fears are grounded in evidence or in extrapolation?

How do we know whether policy is steering something real, or something hypothetical?

It’s one thing to run a model through an in silico benchmark and watch it ace a virology exam. It’s another thing entirely to put a pipette in a novice’s hand and see what happens in a real lab.

That’s why the recent paper, “Measuring Mid-2025 LLM-Assistance on Novice Performance in Biology,” feels so important.

Not because it proves that AI is safe.

Not because it proves that AI is dangerous.

But because it does something rarer and more valuable: it measures.

And in doing so, it gives us a template for what responsible-by-design evaluation can look like in the age of frontier AI and synthetic biology.

The podcast audio was AI-generated using Google’s NotebookLM.

The Gap Between the Benchmark and the Bench

For the last several years, large language models have been climbing biological benchmarks at an astonishing rate. Protocol design. Sequence interpretation. Troubleshooting. Literature synthesis. In some cases, outperforming domain experts on structured tests.

On paper, that looks like capability. And capability, when it intersects with viral reverse genetics or synthetic biology, looks like risk.

But as I’ve discussed in recent work on Violet Teaming, particularly in “The Promise and Peril of Artificial Intelligence — ‘Violet Teaming’ Offers a Balanced Path Forward,” capability is not impact. And risk is not hypothetical power alone. It’s what happens when humans, institutions, and technical systems interact in the real world.

The authors of this new study understood that.

So instead of running another benchmark, they ran a randomized controlled trial.
In a real BSL-2 laboratory. With 153 novices. Over eight weeks. Across five hands-on biological tasks modeling a viral reverse genetics workflow.

Not a chatbot demo.

Not a thought experiment.

A physical lab.

That matters.

Because biology isn’t just text. It’s tacit knowledge. It’s sterile technique. It’s muscle memory and timing and pattern recognition. It’s knowing when a cell culture “looks off.” It’s knowing that the protocol you copied from a paper assumes three unstated steps.

Benchmarks rarely capture that.

The study did.

And the results are, in a word, humbling.

What the Study Actually Found

The primary question was straightforward: does access to mid-2025 frontier LLMs significantly increase a novice’s ability to complete a sequence of tasks modeling viral reverse genetics?

The answer, in binary terms, was no.

Completion of the core workflow was low in both groups (LLM-assisted and internet-only), and there was no statistically significant difference in full workflow completion.

If you stop there, you might conclude: the models don’t matter.

But that would be the wrong lesson.

Because the study also found something more subtle, and arguably more important.

Across individual tasks, LLM-assisted participants were more likely to progress further through procedural steps. In cell culture, they completed tasks faster and with fewer attempts.
Bayesian modeling suggested a modest uplift, on the order of ~1.4× for a “typical” reverse genetics task, though with uncertainty bounds that rightly temper interpretation.

In other words: not a revolution.

But not nothing.

And this is where responsible innovation becomes interesting.

Why This Is Violet Teaming in Practice

When Adam Russell and I first articulated the idea of Violet Teaming, we described it as the integration of red teaming (adversarial probing), blue teaming (defensive hardening), and ethical design into a proactive, sociotechnical framework.

Most conversations about AI and biosecurity oscillate between red and blue:

Red: “What if this model can design a pathogen?”

Blue: “Let’s add filters, classifiers, restrictions.”

What this study does is different.

It asks: what is the real-world uplift? How much does LLM assistance actually change novice capability in a physical lab? Not in theory. Not in speculation. In practice.

That’s violet.

Because it embeds evaluation into the design and governance process itself.

Instead of arguing over worst-case extrapolations, we now have empirical data about:

* Completion rates
* Time-to-task
* Procedural progression
* Human–AI interaction patterns
* Elicitation failures
* Usage intensity and its (lack of) correlation with success

That last point is particularly striking. Participants who used LLMs more did not necessarily perform better. There was no clean dose–response curve.

That’s not a trivial observation.

It tells us that raw access is not the same as effective amplification. It suggests that prompting skill, interface design, cognitive scaffolding, and user expertise mediate uplift.

And that means risk is not simply a function of model weights. It’s a function of the entire sociotechnical system.

That’s violet territory.

The Most Important Finding: The Gap

To me, the most important result is the documented gap between in silico benchmark performance and physical-world utility.

This is not an indictment of benchmarks.
They serve a purpose. But they are not reality.

A model can generate a flawless text protocol for molecular cloning and still fail to help a novice identify the correct reagents from a messy inventory spreadsheet. It can hallucinate a DNA sequence that looks plausible but is wrong in a way a novice cannot detect. It can provide text-based instruction where video-based tacit demonstration might matter more.

In the study, YouTube was often rated as more helpful than any individual LLM.

That’s not because YouTube is smarter. It’s because biology is embodied.

This is precisely the kind of nuance that responsible innovation requires.

Without physical-world validation, we risk building policy on top of performance claims that don’t map cleanly onto human capability.

This study doesn’t close the gap. It reveals it.

And revelation is the first step toward responsibility.

Responsible-by-Design Requires Quantification

One of the themes we’ve explored in the Science of Responsible Innovation is that values without metrics are aspirations. Metrics without values are optimization problems.

We need both.

This study provides something we’ve been missing: a quantifiable baseline for novice uplift in a dual-use biological workflow.

Not a theoretical upper bound.

Not a catastrophic scenario.

An empirical distribution.

The Bayesian estimates even put a 95% credible upper bound around uplift (~2.6×), which matters enormously for policy calibration.

If you’re designing guardrails, export controls, compute thresholds, or deployment policies, you need to know: are we talking about a 10× amplification? A 2× amplification? Or something closer to noise?

This paper suggests modest uplift under the conditions studied.

That doesn’t eliminate risk. It contextualizes it.

And contextualization is the heart of responsible governance.

Where the Study Can Go Next

Now, let’s be honest.

As strong as this study is, it is not the final word.
It’s the first serious step.

And if we want this to become an evolving framework for violet teaming and responsible-by-design evaluation, we need to iterate.

Here are several ways I believe the next generation of this work could build on this foundation.

1. Extend the Time Horizon

Eight weeks is meaningful. But complex biological workflows often require longer timeframes for skill acquisition.

Low completion rates may reflect not just capability limits, but time constraints. A longer intervention period could reveal whether modest early procedural uplift compounds into higher eventual completion.

Responsible innovation must account for trajectory, not just snapshot.

2. Integrate End-to-End Workflow

The tasks were decoupled into discrete components. That’s methodologically clean, but real-world risk emerges from integration.

A future iteration could test whether novices can string together multiple steps into a coherent, self-directed project, while still maintaining appropriate biosafety controls.

3. Compare Model Generations Longitudinally

The models tested were mid-2025 frontier systems. Biology-specific models are already emerging.

A longitudinal design, repeating the same protocol annually, would allow us to empirically track uplift curves over time.

That would be invaluable for macrostrategy. Instead of forecasting speculative capability growth, we could measure it.

4. Test Interface Scaffolding

The study hints that elicitation constraints matter. Novices may not know how to ask the right questions.

What happens if we add structured prompting interfaces? Visual overlays? Augmented reality guidance? Automated error-checking layers?

Risk may scale not just with model intelligence, but with integration depth.

5. Incorporate Expert–Novice Comparisons

How much of the gap is due to user expertise? Running parallel cohorts (novices and trained biologists) could quantify differential uplift.

That matters for both workforce development and biosecurity risk modeling.

6. Expand Metrics Beyond Binary Outcomes

The procedural step analysis in this study was a brilliant move. Binary success/failure hides important dynamics.

Future designs could incorporate:

* Error rates
* Near-miss events
* Quality metrics
* Safety deviations
* Confidence calibration

Responsible innovation isn’t just about “can they finish?” It’s about “how do they behave along the way?”

The Human Story Beneath the Statistics

I keep thinking about the participants in that lab.

Undergraduates. Non-biologists. Humanities majors. Standing in a BSL-2 facility, trying to figure out how to culture HEK293T cells without a mentor leaning over their shoulder.

Some of them prompting an LLM twenty times a day.

Some uploading images.

Some getting frustrated when the model confidently suggests the wron

Modern governance is haunted by an unrealistic expectation: that legitimacy requires agreement.

We have come to believe, implicitly and often unconsciously, that if societies cannot reach consensus on the risks and benefits of a technology, then governance has failed. That disagreement itself is evidence of irresponsibility. That the absence of unanimity delegitimizes action.

In an era of slow-moving institutions and narrow technologies, this belief was merely inconvenient. In an era of fast-moving, general-purpose systems, it is paralyzing.

If the Science of Responsible Innovation is to function in the real world, it must confront a hard truth: consensus is no longer a prerequisite for legitimacy, and insisting on it may be the most irresponsible posture of all.

The podcast audio was AI-generated using Google’s NotebookLM.

The Myth of Consensus

Consensus feels comforting. It suggests shared values, collective understanding, and moral clarity. It promises that decisions are not imposed, but agreed upon.

But consensus has always been rarer than we like to admit.

Most consequential decisions in modern history, from industrialization to nuclear power to the internet, were made amid deep disagreement. What sustained legitimacy was not unanimity, but the presence of institutions capable of acting, learning, and correcting course in public view.

The expectation of consensus is a relatively recent artifact, amplified by social media, participatory rhetoric, and the moralization of policy debates. Disagreement is now treated not as a feature of pluralistic societies, but as a governance failure.

This framing collapses under technological complexity.

Why Consensus Breaks at the Frontier

Emerging technologies resist consensus for structural reasons.

They involve uncertain evidence, asymmetric risks, and uneven distributions of benefit and harm. They compress timelines. They force tradeoffs between present and future goods.
They challenge existing power structures.

Under these conditions, reasonable people will disagree, often profoundly.

Expecting consensus in such contexts is not aspirational. It is evasive. It defers responsibility by setting an unattainable standard.

Legitimacy as a Property of Process

If legitimacy does not come from agreement, where does it come from?

Legitimacy emerges from process, not outcome.

A decision can be legitimate even when controversial if the process by which it was made is perceived as fair, transparent, and accountable. Conversely, a unanimous decision reached through opaque or exclusionary means can be profoundly illegitimate.

This distinction is foundational to democratic governance, but it has been under-applied to technology.

Responsible-by-design reframes legitimacy as something that is earned continuously, not bestowed once.

The Elements of Legitimate Disagreement

For disagreement to coexist with legitimacy, several conditions must hold.

Visibility

Disagreement must be visible, not suppressed.

Legitimacy erodes when dissent is hidden or dismissed. Making disagreement explicit (documenting assumptions, minority views, and unresolved tensions) signals seriousness rather than weakness.

Representation

Those affected by a technology must have pathways to be heard, even if their views do not prevail.

Legitimacy does not require that every perspective determine the outcome. It requires that perspectives be considered in good faith.

Accountability

Decision-makers must be identifiable and answerable.

Anonymous authority breeds mistrust. Legitimate governance requires clear ownership of decisions, along with mechanisms for challenge and review.

Revisability

Perhaps most critically, decisions must be revisable.

When evidence changes, governance must change with it. The promise of revisability, backed by real authority to act, allows societies to tolerate disagreement without freezing.

Consensus as a Hidden Source of Power

Calls for consensus often sound neutral.
They are not.

In practice, consensus requirements advantage those with veto power: incumbents, well-resourced actors, and those comfortable with the status quo. When unanimity is required, the default outcome is inaction.

This dynamic is particularly dangerous in domains where delay carries real harm: unmet medical needs, climate risk, or infrastructure fragility.

Insisting on consensus can therefore function as a form of quiet domination, disguised as caution.

Legitimacy in the Absence of Certainty

At the frontier of technology, uncertainty is unavoidable.

Evidence will be incomplete. Models will be wrong. Early decisions will need correction.

Legitimacy does not come from pretending otherwise. It comes from acknowledging uncertainty explicitly and designing governance that can absorb it.

This is where governance latency becomes decisive. The faster institutions can detect harm, interpret signals, and act, the less they must rely on consensus as a substitute for control.

Responsiveness replaces unanimity.

The Relationship Between Legitimacy and Proportionality

Legitimacy without consensus depends on proportionality.

When governance distinguishes between green, orange, and red zones, disagreement becomes more tractable. Actors may still contest classification, but they are no longer arguing in absolutes.

Proportionality creates space for partial agreement: agreement on process even when outcomes differ; agreement on oversight even when deployment is contested.

This is how pluralistic societies move forward without pretending to agree.

What Legitimate Governance Looks Like in Practice

In a responsible-by-design system, legitimacy is built through concrete practices:

* Clear articulation of decision criteria
* Documentation of dissent and uncertainty
* Defined authority to act and to revise
* Transparent monitoring and reporting
* Mechanisms for escalation and redress

None of these require consensus.
All of them require competence.

The Discipline Ahead

The future of technology governance will not be decided by who wins the argument.

It will be decided by whether institutions can earn trust amid disagreement, by acting visibly, correcting quickly, and governing proportionally.

At the frontier of technology, humanity is the experiment.

Legitimacy without consensus is how we keep that experiment democratic, adaptive, and humane.

That is not a compromise.

It is the only path forward.

-Titus

Get full access to The Connected Ideas Project at www.connectedideasproject.com/subscribe

If restoring proportionality were simply a matter of classification, the problem would already be solved.

Green zone. Orange zone. Red zone.

The framework is intuitive. The logic is sound. And yet, in practice, the hardest part of proportional governance is not designing the zones; it is agreeing on where a technology belongs.

This is the uncomfortable truth at the center of responsible-by-design: classification is not a technical exercise alone. It is a social, institutional, and political one.

Every serious disagreement about emerging technology eventually collapses into a fight over zone placement. Not because people are irrational, but because zone assignment encodes values, incentives, and risk tolerance, often implicitly.

Understanding why agreement is so difficult is the next step in building a Science of Responsible Innovation that actually works.

The podcast audio was AI-generated using Google’s NotebookLM.

The Illusion of Objective Classification

There is a natural temptation to believe that enough data, enough modeling, or enough expertise will produce a single “correct” zone assignment.

It will not.

Risk is not an intrinsic property of technology. It is a relationship between a system and the world it enters. Severity depends on context. Reversibility depends on infrastructure. Distribution depends on power.

The same technology can be green in one setting and orange, or red, in another.

An AI model used for drug target prioritization inside a regulated pharmaceutical pipeline may be low risk and highly reversible. The same model released openly, paired with automated synthesis and weak oversight, may move quickly toward red.

Zone assignment is therefore conditional, not absolute.

Disagreement does not indicate failure of reasoning. It indicates that different assumptions are being applied, often without being named.

Why Reasonable People Disagree

Most zone disputes are not about facts. They are about frames.

Different Reference Harms

Some actors anchor on historical harm.
Others anchor on theoretical maximum harm. Both are rational.

Clinicians and researchers tend to focus on harm already occurring: patients dying today, diseases untreated, systems failing in real time. For them, delay carries moral weight.

Security professionals and bioethicists often focus on tail risk: low-probability, high-severity outcomes whose consequences are irreversible. For them, even small probabilities demand attention.

These are not incompatible perspectives. But without explicit proportional reasoning, they appear irreconcilable.

Different Time Horizons

Short-term and long-term risks do not feel the same, even when they are commensurate.

Immediate harms are vivid and legible. Long-term harms are abstract and uncertain. People discount the future differently, not out of malice, but because institutions reward different time scales.

Zone disputes often mask disagreements about when harm matters, not whether it matters.

Different Power Positions

Zone classification looks different depending on where one sits in the system.

Those who bear downside risk (patients, workers, communities) tend to be more cautious. Those who capture upside (investors, developers, states) tend to emphasize opportunity.

Neither position is illegitimate. But pretending that zone assignment is neutral obscures these dynamics.

The Role of Uncertainty

Disagreement intensifies under uncertainty.

Early in a technology’s lifecycle, data is sparse, use cases are speculative, and second-order effects are poorly understood. This ambiguity invites projection.

Optimists extrapolate potential benefit. Pessimists extrapolate potential harm. Both are filling gaps in knowledge with values.

This is not a flaw. It is inevitable.

The failure occurs when uncertainty is treated as a reason for absolutism rather than for adaptive governance.

When Zone Disputes Become Pathological

Healthy disagreement is not the problem.
Pathology emerges when disagreement hardens into a stalemate or theater.

This happens in three ways.

First, zone inflation. Technologies are rhetorically pushed toward red because red confers moral authority. If everything is existential, restraint becomes the only defensible posture.

Second, zone denial. Risks are minimized or dismissed to keep technologies green, often until failure forces reclassification.

Third, zone laundering. Systems are framed narrowly to avoid scrutiny, presented as green tools while embedded in orange or red pipelines.

All three erode trust.

Who Should Decide the Zone?

If zone assignment is not purely technical, who should decide?

The answer is uncomfortable but unavoidable: no single actor can.

Proportional governance requires pluralistic classification.

This means:

* Technical experts to assess capability and failure modes
* Domain experts to understand real-world impact
* Governance bodies to weigh systemic risk
* Affected communities to articulate lived consequences

Not consensus. Legitimacy.

The goal is not unanimity, but a process that surfaces assumptions, documents disagreement, and allows decisions to evolve with evidence.

Making Disagreement Productive

A Science of Responsible Innovation does not eliminate disagreement. It structures it.

Productive zone classification requires:

* Explicit articulation of assumptions
* Clear criteria for severity, reversibility, and distribution
* Mechanisms for revisiting decisions as systems scale
* Authority to move technologies between zones

Most importantly, it requires humility: the recognition that initial classifications are provisional.

Zones as Governance Conversations

Zones should be understood less as labels and more as conversations.

A technology placed in the orange zone is not “unsafe.” It is under active stewardship. A technology placed in the red zone is not “evil.” It is constrained because the cost of failure is too high.

Disagreement over zones is not a sign that the framework has failed.
It is evidence that it is being used.

The Discipline Ahead

The hardest work in responsible-by-design is not building the tools. It is building institutions capable of judgment under uncertainty.

That requires tolerating disagreement without collapsing into paralysis or absolutism. It requires processes that can hold multiple perspectives without pretending they are equivalent.

At the frontier of technology, humanity is the experiment.

Deciding the zone is how we practice responsibility, not by eliminating conflict, but by governing through it.

That, more than any classification scheme, is the true test of proportionality.

-Titus

Once proportionality collapses, every technology looks the same.

That is the hidden failure mode at the heart of today’s technology debates. When we lose the ability to distinguish between different kinds of risk (different magnitudes of harm, different degrees of reversibility, different distributions of benefit), governance flattens. Everything becomes either forbidden or inevitable. Caution turns into paralysis. Ambition turns into defiance.

The Science of Responsible Innovation exists to restore that lost middle. And one of its most practical contributions is deceptively simple: not all technologies belong in the same risk category.

To govern proportionally, we must sort technologies not by hype or fear, but by zone.

The podcast audio was AI-generated using Google’s NotebookLM.

Why Zones Matter

Modern governance systems are bad at nuance but excellent at binaries.

Approve or deny. Regulate or deregulate. Open or ban.

These binary instincts worked reasonably well when technologies were slow-moving, localized, and modular. They fail catastrophically in a world of general-purpose systems, rapid scaling, and cross-domain spillovers.

Zones are an attempt to reintroduce gradient into a system addicted to absolutes.

They do not ask whether a technology is “good” or “bad.” They ask:

* How severe could the harm be?
* How reversible are the consequences?
* How tightly coupled is the system?
* How widely distributed is the capability?

From these dimensions emerge three governance zones: Green, Orange, and Red.

The Green Zone: Technologies That Should Move Fast

Green zone technologies are those where failures are low severity, high reversibility, and well-contained.

Mistakes are recoverable. Harms are localized. Feedback loops are short. Governance latency can be tolerated because consequences are manageable.

Many software tools live here.
So do early-stage research aids, decision-support systems, and automation that augments human judgment rather than replaces it.

In AI and biology, green zone examples often include:

* AI systems used for hypothesis generation or prioritization
* In silico simulations with no direct actuation
* Laboratory automation tools operating under existing biosafety regimes
* Models that require expert interpretation and cannot execute autonomously

The governance posture for green zone technologies should emphasize speed, experimentation, and learning.

Oversight exists, but it is lightweight. Monitoring focuses on performance and reliability rather than existential risk. Failures are treated as signals, not scandals.

Over-governing the green zone is not caution; it is waste. It slows beneficial innovation without meaningfully increasing safety.

The Orange Zone: Technologies That Demand Active Governance

Most consequential technologies live in the orange zone.

Orange zone systems are characterized by moderate to high potential harm, partial reversibility, and non-trivial coupling to broader systems. They are powerful enough to matter but constrained enough to manage, if governance keeps pace.

This is where proportionality matters most.

Examples include:

* AI systems that influence medical, financial, or infrastructure decisions
* AI-enabled biological discovery paired with controlled synthesis
* Autonomous systems operating within bounded environments
* Dual-use tools with legitimate applications and misuse potential

Orange zone technologies require continuous oversight, not blanket restriction.

Governance here focuses on:

* Instrumentation and auditability
* Staged deployment and access controls
* Human-in-the-loop or human-on-the-loop supervision
* Clear escalation and rollback pathways

The orange zone is uncomfortable because it resists absolutes. It demands judgment.
It requires institutions capable of learning in real time.

Most governance failures occur here, not because risk is unmanageable, but because it is misclassified.

The Red Zone: Technologies That Demand Precaution

Red zone technologies are those where failures are high severity, low reversibility, and systemically coupled.

Once released, harm cannot easily be undone. Effects may propagate across populations, ecosystems, or geopolitical boundaries. Containment is uncertain. Attribution may be impossible.

Examples include:

* Capabilities that enable large-scale biological harm
* Systems that can autonomously design and deploy irreversible interventions
* Technologies that concentrate overwhelming power with minimal accountability

In the red zone, speed is not the objective. Containment is.

Governance here justifiably includes:

* Strict access controls
* Non-proliferation norms
* International coordination
* Formal review and approval processes

Red zone governance is not anti-innovation. It is pro-survivability.

The mistake is not that red zones exist. The mistake is pretending everything belongs in one.

What Happens When Zones Collapse

When proportionality collapses, zones collapse with it.

Green technologies are treated as red, choking off experimentation. Orange technologies are forced into binary decisions they cannot survive. Red technologies are either demonized theatrically or pursued covertly.

The result is a governance environment that is simultaneously too strict and too weak.

This is how we end up with innovation flight, underground experimentation, and fragile oversight, exactly the opposite of what responsible innovation demands.

Zones Are Dynamic, Not Fixed

A critical feature of proportional governance is recognizing that zones are not permanent.

Technologies migrate.

A green zone research tool may become orange as it scales. An orange zone system may become red as autonomy increases or coupling tightens.
Conversely, red zone risks may move toward orange as containment, reversibility, or institutional capacity improves.

This is why responsible-by-design emphasizes continuous reassessment.

Classification is not a one-time decision. It is an ongoing process informed by evidence, monitoring, and lived experience.

Governance Intensity Should Match the Zone

The central principle is simple: governance intensity should scale with risk, not with rhetoric.

Green zone technologies need permissionless innovation.

Orange zone technologies need active stewardship.

Red zone technologies need precautionary constraint.

Anything else is misalignment.

Why Zones Restore Proportionality

Zones do not eliminate disagreement. They make disagreement productive.

Instead of arguing whether a technology is good or evil, stakeholders can argue about classification, evidence, and movement between zones. That is a solvable problem.

Zones reintroduce judgment without moral collapse. They allow societies to move fast where they can, slow where they must, and adapt as conditions change.

The Work Ahead

The future will not be governed by a single rulebook. It will be governed by systems that can distinguish between different kinds of risk in real time.

Green, orange, and red zones are not bureaucratic categories. They are cognitive tools. They are how proportionality becomes operational.

At the frontier of technology, humanity is the experiment.

Zones are how we decide which experiments to run quickly, which to supervise carefully, and which to approach with extreme caution.

That judgment, not absolutism, is the essence of responsible innovation.

-Titus

Every generation of complex technology eventually collides with the same hard truth: it does not matter how carefully a system is designed if the institutions responsible for governing it cannot keep pace with its behavior.

In the early days of software security, this truth was learned painfully. Vulnerabilities were discovered months or years after exploitation. Patches arrived slowly. Disclosure was ad hoc. The result was not merely technical failure, but systemic fragility. As systems scaled, the gap between when harm occurred and when governance responded became untenable.

The modern concept of secure-by-design emerged as a response to this gap. But beneath the tooling, audits, and standards was a deeper insight: latency matters. The speed at which a system can be observed, understood, and corrected is just as important as the system’s nominal safety properties.

Today, as we enter an era of AI-driven, bio-enabled, and tightly coupled socio-technical systems, we face a broader version of the same problem.

The limiting factor is no longer raw capability.

It is governance latency.

The podcast audio was AI-generated using Google’s NotebookLM.

What Governance Latency Is

Governance latency is the time it takes for a system’s behavior in the real world to be:

* Detected as meaningful or anomalous
* Interpreted as requiring intervention
* Acted upon through effective corrective measures

It is not simply regulatory delay. It includes organizational awareness, institutional authority, legal mechanisms, cultural incentives, and technical affordances.

In practice, governance latency is the distance between impact and response.

A system with low governance latency can fail visibly, learn quickly, and adapt. A system with high governance latency accumulates hidden risk until failure becomes sudden, large-scale, and politically explosive.

Why Latency, Not Intent, Determines Safety

Much of the public debate about emerging technologies focuses on intent. Were developers careful?
Were safeguards included? Were ethical principles articulated?

These questions matter—but they are insufficient.

History shows that most large‑scale technological harm does not arise from malicious intent. It arises from slow feedback loops in fast systems.

Financial crises are rarely caused by bad actors alone; they are caused by leverage and opacity that outpace regulatory response. Environmental disasters are rarely the result of ignorance; they emerge when monitoring, enforcement, and remediation lag behind industrial activity. Cybersecurity incidents are rarely shocking because they are novel; they are shocking because known vulnerabilities persisted too long.

Governance latency is the common thread.

When governance moves slower than system behavior, even well‑intentioned designs become dangerous.

The Three Components of Governance Latency

To treat governance latency as an engineering problem, it must be decomposed.

Detection Latency

Detection latency is the time between a system’s harmful or anomalous behavior and the moment that behavior is recognized.

In AI systems, this might include the time it takes to identify misuse, model drift, emergent capabilities, or unexpected coupling effects. In biological systems, it could be the time required to detect unintended propagation, off‑target effects, or supply‑chain misuse.

High detection latency often stems from poor observability, fragmented data ownership, or incentives that discourage surfacing problems early.

Interpretation Latency

Interpretation latency is the time between recognizing a signal and agreeing that it requires action.

This is where ambiguity, disagreement, and institutional friction dominate. Is this anomaly noise or danger? Is it within scope or outside mandate? Who has authority to decide?

Interpretation latency is often the longest component—and the least discussed.
It is shaped by governance structures, legal clarity, and cultural norms around escalation and responsibility.

Execution Latency

Execution latency is the time it takes to implement an effective response once a decision has been made.

This includes technical rollback capability, contractual authority, regulatory power, and operational readiness. A policy without enforcement capacity does not reduce latency; it hides it.

Governance Latency in the AI × Bio Era

AI‑enabled biological systems compress timelines dramatically.

Discovery cycles accelerate. Automation reduces friction. Capabilities propagate digitally before they materialize physically. The window between benign use and high‑impact misuse narrows.

At the same time, governance remains slow.

Biosafety frameworks were designed for localized laboratories, not globally networked models. AI oversight mechanisms were built for software, not systems that interface directly with physical and biological reality. Legal authority is fragmented across agencies with mismatched scopes.

The result is a widening gap between capability velocity and governance velocity.

When this gap grows too large, society compensates by inflating perceived risk. Catastrophic framing becomes a substitute for real‑time control. Moratoria and blanket bans become appealing because they appear to eliminate the latency problem rather than solve it.

This is a predictable failure mode.

Governance Latency and the Collapse of Proportionality

Governance latency and proportionality collapse are tightly coupled.

When institutions cannot respond quickly or credibly, every risk begins to look existential. When response mechanisms are blunt, nuanced distinctions lose meaning. Severity and reversibility blur together.

In this context, demands for zero risk are not irrational—they are compensatory.
They reflect a lack of confidence that smaller failures will be caught and corrected before becoming larger ones.

Restoring proportionality therefore requires reducing governance latency.

Reducing Governance Latency by Design

A responsible‑by‑design approach treats governance latency as a core system constraint.

This begins with observability. Systems must be instrumented to surface meaningful signals early. Auditability, logging, and monitoring are governance tools, not mere compliance artifacts.

It continues with clear authority. Decision rights must be explicit. Escalation paths must be rehearsed. Responsibility must be owned, not diffused.

It requires technical reversibility. Rollback mechanisms, staged deployment, and containment boundaries reduce execution latency by design.

And it depends on institutional readiness. Regulators, oversight bodies, and internal governance teams must have the expertise and mandate to act at system speed.

None of this eliminates risk. It shortens the feedback loop.

Governance Latency Is a Strategic Variable

Organizations often treat governance as an external constraint.

In reality, governance latency is a competitive variable.

Systems that can detect, interpret, and correct faster are safer—and therefore able to scale with greater legitimacy. Trust accumulates around responsiveness, not perfection.

The fastest path forward is not reckless acceleration, but aligned acceleration: moving quickly within systems that can adapt when reality diverges from expectation.

Why This Matters Now

As technologies converge, failures propagate across domains. AI systems affect biological systems, which affect economic systems, which affect political systems.

In such an environment, delayed governance is not neutral—it is destabilizing.

Reducing governance latency is therefore not merely a technical challenge. It is a societal one.
It requires rethinking how authority, expertise, and accountability are structured in a world where systems evolve continuously.

The Discipline Ahead

Governance latency is not an argument for control over innovation. It is an argument for competent oversight.

It shifts the focus from predicting every failure to responding effectively when failure occurs. It reframes responsibility as responsiveness. It aligns safety with speed rather than opposing it.

At the frontier of technology, humanity is the experiment.

Reducing governance latency is how we ensure that experiment remains corrigible.

That is the discipline ahead.

-Titus
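
The three-part decomposition described in this essay (detection, interpretation, execution) can be made concrete with a small sketch. This is only an illustration, not a method from the essay: the `Incident` class, its field names, and the example dates are all hypothetical, and it assumes each incident can be reduced to four timestamps. In practice, deciding when a harm "occurred" or when a decision was truly "made" is itself a judgment call.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class Incident:
    """Four timestamps for one incident. All field names are hypothetical."""
    occurred: datetime   # harmful or anomalous behavior begins
    detected: datetime   # the behavior is recognized
    decided: datetime    # agreement is reached that action is required
    resolved: datetime   # an effective response is in place

    def detection_latency(self) -> timedelta:
        return self.detected - self.occurred

    def interpretation_latency(self) -> timedelta:
        return self.decided - self.detected

    def execution_latency(self) -> timedelta:
        return self.resolved - self.decided

    def governance_latency(self) -> timedelta:
        # The distance between impact and response: the sum of the three parts.
        return self.resolved - self.occurred


# Invented example: two days to notice, a week to agree, one day to act.
incident = Incident(
    occurred=datetime(2026, 1, 1, 9, 0),
    detected=datetime(2026, 1, 3, 9, 0),
    decided=datetime(2026, 1, 10, 9, 0),
    resolved=datetime(2026, 1, 11, 9, 0),
)
```

In this invented example, interpretation accounts for seven of the ten days, which mirrors the essay's point that interpretation latency is often the longest component. Tracking the three parts separately shows where an institution would need to invest: observability, decision rights, or rollback capacity.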

The meeting begins the way these meetings always begin: with urgency masquerading as certainty.

On one side of the table—sometimes literal, sometimes virtual—are the accelerationists. They speak in timelines measured in patients, not papers. Millions of people living with rare diseases. Cancers with no second-line therapies. Pandemics that will not wait for perfect governance. To them, AI-enabled biology is not speculative power; it is applied mercy. Every month of delay is a body count.

On the other side are the catastrophists, though they would reject the label. They speak in failure modes and irreversibility. Dual-use risks. Model-enabled pathogen design. Democratized capabilities that outrun containment. They are not wrong either. Biology is not software. You cannot roll back a release into the wild. Some mistakes are not recoverable.

Both sides arrive armed with evidence. Both claim the moral high ground. Both accuse the other—quietly or loudly—of irresponsibility.

And somewhere in the middle, the actual work stalls.

This is the AI × Bio debate in 2026: not a disagreement about facts, but a collapse of proportionality.

The conversation flattens almost immediately. AI-driven protein design that accelerates enzyme discovery is discussed in the same breath as hypothetical bioengineered pandemics. A foundation model used to prioritize drug targets is rhetorically adjacent to one capable of designing novel toxins. The distinction between assisted discovery and autonomous synthesis blurs. Context collapses. Everything is “potentially catastrophic.”

As the risks inflate, so do the demands. Zero misuse. Perfect foresight. Absolute guarantees.

The scientists in the room shift uncomfortably. They know biology does not work this way. Neither does engineering. Neither does medicine. They have lived through failure—clinical trials that didn’t work, molecules that looked promising and then didn’t translate, therapies that helped some patients and harmed others.
Progress, in their world, has always been probabilistic.

But probability has no place in a proportionality collapse. Only absolutes survive.

So the discussion veers toward moratoria. Blanket restrictions. Calls to “pause AI in biology” until governance “catches up,” without defining what “caught up” would even mean. The proposed controls are not scoped to capabilities or contexts; they are scoped to fear.

On the other side, frustration hardens into dismissal. If every advance is treated as an existential threat, why engage at all? Why submit to oversight that cannot distinguish between a wet-lab automation tool and a weapon? Why not move faster, quieter, offshore?

This is how the middle disappears.

What gets lost in this collapse is the ability to ask better questions.

Not “Is AI in biology dangerous?” but “Which applications, under what conditions, with what controls, and with what reversibility?”

Not “Should we stop?” but “Where should we slow down, where should we speed up, and who decides?”

Not “Can we guarantee safety?” but “What governance posture is proportionate to this specific risk surface?”

In the absence of proportionality, governance becomes symbolic. Ethics reviews devolve into box-checking or veto power. Real risks—like poorly secured synthesis pipelines, informal model sharing, or fragile oversight capacity in under-resourced labs—receive less attention than hypothetical doomsday scenarios.

Meanwhile, the work does not actually stop.

It fragments.

Large, well-capitalized institutions with legal teams and compliance departments continue quietly. Smaller labs and startups struggle under vague constraints. Informal experimentation moves to jurisdictions with weaker oversight.
Open science communities fracture, unsure whether sharing is noble or negligent.

The irony is brutal: a discourse obsessed with catastrophic risk ends up increasing unmanaged risk.

This is the illusion of safety produced by proportionality collapse.

True responsibility in AI × Bio does not come from pretending all risks are equal. It comes from distinguishing them.

A model that helps identify promising CRISPR targets in rare disease research does not warrant the same governance as one capable of end-to-end pathogen design. A tool used inside a regulated pharmaceutical pipeline is not the same as one released openly with no guardrails. A reversible error in silico is not the same as an irreversible release in vivo.

These distinctions matter. They are the difference between precaution and paralysis.

A responsible-by-design approach to AI × Bio would not ask for impossible guarantees. It would ask for classification. It would map severity against reversibility. It would align governance intensity with systemic impact. It would invest in institutional capacity—biosafety, biosecurity, auditability—rather than performative restraint.

Most importantly, it would accept the hardest truth in the room: that not acting also carries risk.

Lives not saved. Diseases not treated. Pandemics not predicted early enough. Tools that could have helped, but didn’t, because the debate collapsed into absolutes.

The AI × Bio debate does not need less concern. It needs better judgment.

Restoring proportionality does not mean choosing sides. It means rebuilding the middle—the space where tradeoffs are named, risks are differentiated, and responsibility is practiced rather than proclaimed.

Without that middle, the debate will continue to generate heat without light.
With it, AI × Bio can become what it already has the potential to be: not an existential gamble, but a disciplined, human-centered extension of medicine itself.

At the frontier of biology, AI is not the experiment.

We are.

-Titus

There is a quiet failure mode running through nearly every contemporary debate about technology. It shows up in boardrooms and policy hearings, on social media and in academic journals, inside engineering teams and activist movements alike. It is not primarily a disagreement about values, nor is it a simple conflict over facts. It is something more fundamental.

We have lost our sense of proportionality.

In today’s technology discourse, every risk is framed as catastrophic, every acceleration is framed as reckless, and every delay is framed as negligent. The space between those extremes—the space where judgment, tradeoffs, and responsibility actually live—has collapsed.

This collapse matters far more than it appears. Proportionality is not a rhetorical nicety. It is a core operating principle of engineering, governance, and strategy. When proportionality fails, decision-making fails with it. Systems oscillate between paralysis and overreach. Public trust erodes. Innovation becomes brittle, lurching forward in bursts and freezing in backlashes.

If the Science of Responsible Innovation is to mean anything beyond a slogan, it must begin by restoring proportionality.

The podcast audio was AI-generated using Google’s NotebookLM.

What Proportionality Actually Is

Proportionality is the disciplined ability to reason about magnitude, likelihood, reversibility, and distribution—simultaneously.

It is the habit of asking not just whether a risk exists, but:

* How severe would the harm be if it materialized?
* How likely is it to occur under realistic conditions?
* How reversible are the consequences?
* Who bears the downside, and who captures the upside?

These questions are second nature to engineers. A hairline crack in a cosmetic panel is not treated the same way as a fracture in a load‑bearing beam. A memory leak is not a reactor meltdown. A degraded sensor is not total system failure.
Entire fields of safety engineering exist to distinguish tolerable risk from intolerable risk—and to allocate attention, controls, and redundancy accordingly.

Governance relies on the same logic. Laws differentiate between misdemeanors and felonies. Financial regulation scales with systemic importance. Insurance exists precisely because not all risks justify prevention; some are better priced, pooled, and absorbed.

Proportionality is how complex societies remain functional in the presence of uncertainty.

How Proportionality Collapsed

The collapse of proportionality did not happen overnight, and it did not happen for a single reason. It is the product of several reinforcing dynamics that have reshaped how modern societies perceive risk.

Scale Without Intuition

Modern technologies operate at scales that exceed human intuition. A single software update can affect hundreds of millions of people. A model parameter change can shift behavior across entire markets. A biological technique can propagate globally before institutions have time to respond.

When scale explodes faster than our mental models, we default to worst‑case thinking. Catastrophic framing becomes a cognitive shortcut—an attempt to impose seriousness on phenomena we do not yet know how to bound.

The Moralization of Tradeoffs

In many domains, tradeoffs have become morally taboo.

To acknowledge that saving lives today may increase future risk is treated as callous. To admit that restricting access may entrench inequality is treated as cynical. To say that some harms are acceptable relative to benefits is framed as an ethical failure.

But tradeoffs do not disappear when we refuse to name them. They simply go underground, where they are made implicitly, inconsistently, and without accountability. Moral absolutism does not eliminate risk; it obscures decision-making.

Incentive Compression and Outrage Economics

Modern discourse rewards absolutism. Outrage travels faster than nuance. Certainty outperforms probability.
Apocalyptic warnings are amplified; calibrated risk assessments are ignored. Shock doctrine is in full force in today’s discourse. Inside organizations, incentives often mirror this dynamic. Escalation is safer than calibration. Resistance is safer than responsibility. Over time, leaders learn that the least punishable rhetorical position is the most extreme one—regardless of whether it maps to reality.

Institutional Fragility

As trust in institutions erodes, so does confidence in their ability to manage risk. When regulators are perceived as slow or captured, when companies are perceived as reckless, when experts are perceived as conflicted, society compensates by inflating the perceived severity of every risk.

Catastrophic framing becomes a substitute for governance capacity. Ironically, this further weakens institutions, creating a self‑reinforcing cycle.

What the Collapse Produces

When proportionality collapses, three pathologies reliably emerge.

First is risk flattening. Minor harms and existential threats are treated as morally equivalent. When everything is catastrophic, prioritization becomes impossible. Attention is spread thinly across vastly different risk surfaces, and the most serious risks often receive the least structured oversight.

Second is decision paralysis. Leaders confronted with incompatible absolute claims retreat into delay, deferral, or symbolic action. Progress stalls not because risks are too high, but because they are framed as incomparable.

Third is backlash cycling. Technologies deployed under inflated promises and inflated fears inevitably fail in small, normal ways. Those failures trigger overcorrection. Regulation swings from permissive to prohibitive. Public trust collapses. Legitimate benefits are lost alongside real harms.

These are not abstract dynamics.
They appear repeatedly in debates over artificial intelligence, biotechnology, energy systems, and digital infrastructure.

The Illusion of Safety

One of the great ironies of the collapse of proportionality is that it feels like caution.

Catastrophic framing masquerades as responsibility. Demanding zero risk sounds prudent. Treating every failure as unacceptable feels ethical.

In reality, this posture often produces less safety. When all risks are treated as intolerable, systems are driven underground or offshore. Informal use proliferates without oversight. Innovation concentrates in unaccountable hands. Legitimate actors retreat, leaving the field to those least inclined toward restraint.

True safety does not come from eliminating risk. It comes from managing it—openly, proportionally, and adaptively.

Restoring Proportionality as a Design Discipline

Restoring proportionality is not about telling people to “be reasonable.” It requires structure.

A Science of Responsible Innovation restores proportionality by embedding it into design and governance processes from the outset.

This begins with explicit classification. Not all systems warrant the same scrutiny. Not all capabilities demand the same controls. Severity, likelihood, reversibility, and distribution must be assessed deliberately, not rhetorically.

It continues with differentiated governance. High‑severity, low‑reversibility risks justify precautionary postures and non‑proliferation norms. Moderate risks justify resilience engineering, monitoring, and rollback mechanisms. Low‑severity risks justify mitigation, insurance, and compensation.

Most importantly, proportionality must be revisited continuously. As systems scale, interact, and mutate, their risk profiles change. Governance must evolve in step.

Proportionality Is Not Permission

Restoring proportionality does not mean minimizing harm or dismissing legitimate concern.

On the contrary, proportionality is how we take harm seriously.
It forces us to allocate attention and resources where they matter most. It prevents symbolic debates from crowding out substantive ones. It enables disagreement without moral collapse.

A society that cannot reason proportionally will either freeze or fracture. A society that can will move faster—and more safely—than one that cannot.

Why This Is the Central Challenge of the Decade

The technologies reshaping this decade are not marginal improvements. They are general‑purpose systems that interact with nearly every domain of human life.

Without proportionality, governance becomes theater. Ethics becomes branding. Responsibility becomes a slogan.

With proportionality, we can distinguish between risks that demand restraint and risks that demand acceleration. We can save lives today without ignoring tomorrow. We can move fast without pretending speed is free.

At the frontier of technology, humanity is the experiment. Proportionality is how we keep that experiment from becoming reckless—or paralyzed.

The collapse of proportionality is not inevitable. But restoring it will require discipline, humility, and a willingness to replace absolutism with judgment.

That is the work ahead.

-Titus
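
The differentiated-governance idea in this essay (precaution for high-severity, low-reversibility risks; resilience for moderate risks; mitigation for low-severity risks) can be sketched as a simple lookup. Everything numeric here is an assumption made for illustration: the 1 to 5 scales, the thresholds, and the names `Posture` and `governance_posture` do not come from the essay. Likelihood and distribution, the other two dimensions the essay names, are left out to keep the sketch small.

```python
from enum import Enum


class Posture(Enum):
    PRECAUTION = "precautionary posture and non-proliferation norms"
    RESILIENCE = "resilience engineering, monitoring, and rollback mechanisms"
    MITIGATION = "mitigation, insurance, and compensation"


def governance_posture(severity: int, reversibility: int) -> Posture:
    """Map a risk to a governance posture.

    Both inputs use a hypothetical 1-5 scale (5 = most severe / most
    reversible). The thresholds below are illustrative assumptions only.
    """
    for name, value in (("severity", severity), ("reversibility", reversibility)):
        if not 1 <= value <= 5:
            raise ValueError(f"{name} must be between 1 and 5, got {value}")

    if severity >= 4 and reversibility <= 2:
        # High severity combined with low reversibility.
        return Posture.PRECAUTION
    if severity <= 2:
        # Low severity, whatever the reversibility.
        return Posture.MITIGATION
    # Everything in between is treated as a moderate risk.
    return Posture.RESILIENCE
```

A severe but highly reversible risk, such as `governance_posture(5, 5)`, lands in the resilience tier rather than the precautionary one under these assumed thresholds. Where to draw those lines is exactly the kind of judgment the essay argues should be made explicitly and revisited as systems change.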

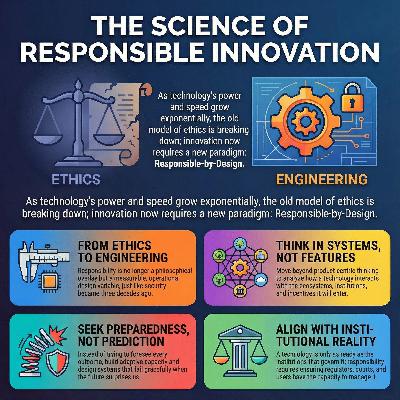

From Secure‑by‑Design to Responsible‑by‑Design

For the last three decades, the most mature technology organizations have learned a hard lesson: security cannot be bolted on after the fact. It must be designed in—architected, tested, audited, and continuously reinforced. Secure‑by‑design did not emerge because engineers suddenly became more ethical. It emerged because complexity, scale, and interconnectedness made reactive security failures existential.

We are now at an analogous inflection point—one that extends beyond cybersecurity and into the fabric of innovation itself.

The technologies defining this era—artificial intelligence, advanced biotechnology, robotics, fusion and new energy systems, and novel computing paradigms—do not merely add new capabilities. They reconfigure power, agency, labor, geopolitics, and responsibility itself. They are not tools that sit neatly inside existing institutions; they stress those institutions, bypass them, and sometimes render them obsolete.

In this context, responsibility can no longer be treated as a moral aspiration or a compliance checklist. Just as security matured into an engineering discipline, responsibility must now do the same. We need a responsible‑by‑design paradigm—one that is as rigorous, operational, and measurable as secure‑by‑design ever became.

This is the animating premise of The Science of Responsible Innovation.

In 2026, we are past the novelty phase of generative AI and well into the deployment‑and‑consequences phase. Similar transitions are unfolding across biotechnology, robotics, and energy.
The central question is no longer whether we can build these systems, but whether we can govern their creation and deployment without destabilizing the societies they are meant to serve.

I want to lay out how TCIP will think, write, convene, and build in 2026—and why the next era of progress depends on treating responsibility not as an ethical afterthought, but as a scientific discipline.

The podcast audio was AI-generated using Google’s NotebookLM.

Part I: From Ethics to Engineering Responsibility

Why Ethics Alone No Longer Scales

Ethics has played an essential role in shaping modern technology discourse. It gives us language for values—fairness, dignity, autonomy, beneficence. It provides moral guardrails and helps articulate what should not be done.

But ethics, on its own, does not scale to systems of this magnitude.

Ethical frameworks are necessarily abstract. They are interpretive rather than prescriptive. They tell us what we value, but rarely tell us how to engineer tradeoffs under real constraints: incomplete data, conflicting objectives, adversarial environments, and non‑negotiable timelines.

Telling a product team to “avoid bias” does not specify which dataset to discard when representativeness conflicts with accuracy. Telling a research lab to “consider societal impact” does not explain how to weigh lives saved today against uncertain risks decades from now. In practice, ethics too often becomes a late‑stage review process—arriving after architectures are fixed and incentives locked in.

At that point, ethics becomes reactive. Harm mitigation replaces harm prevention.

Responsibility as a Design Constraint

A science of responsible innovation begins from a different premise: responsibility is not a moral overlay, but a design variable.

To call responsibility a science is to make three specific claims:

* First, responsibility can be operationalized.
It can be translated into concrete requirements, metrics, and processes that shape technical and organizational decisions.
* Second, responsibility can be optimized. There are better and worse ways to align technological capability with human values, institutional capacity, and societal readiness.
* Third, responsibility evolves. As systems interact with the real world, their risks, benefits, and failure modes change. Responsible innovation therefore requires continuous measurement, feedback, and adaptation.

This reframing moves responsibility out of philosophy seminars and into engineering reviews, product roadmaps, capital allocation decisions, and board‑level governance.

Part II: The Core Pillars of a Science of Responsible Innovation

1. Systems Thinking Over Feature Thinking

Most technological harm does not arise from malicious intent. It arises from systems—feedback loops, emergent behaviors, misaligned incentives, and second‑ or third‑order effects that were invisible at the point of design.

A science of responsible innovation therefore begins with systems thinking. It asks:

* What ecosystems will this technology enter?
* What institutions will it stress, bypass, or hollow out?
* What incentives will it amplify or distort?
* What dependencies will it create—and how reversible are they?

This requires moving beyond product‑centric thinking toward full lifecycle analysis. In AI, this means examining data provenance, labor practices, deployment contexts, and governance interfaces—not just model performance. In biotechnology, it means considering supply chains, regulatory regimes, and dual‑use risks alongside molecular function.

Systems thinking does not slow innovation. It prevents brittle success—products that scale rapidly only to collapse under the weight of their own externalities.

2. Anticipatory Risk Without the Illusion of Omniscience

A common critique of responsible innovation is that it demands impossible foresight.
How can we predict every misuse, every unintended consequence, every societal reaction?

The answer is: we cannot—and we do not need to.

A science of responsible innovation does not seek prediction; it seeks preparedness. It focuses on identifying plausible risk surfaces, stress‑testing assumptions, and building adaptive capacity.

This is where techniques such as scenario analysis, violet teaming, and horizon scanning become central. Rather than asking “What will happen?”, we ask:

* What could go wrong under reasonable assumptions?
* What happens if this system is used at scale, under adversarial pressure, or outside its intended context?
* Where are the irreversibilities?

Responsibility, in this sense, is less about foreseeing the future and more about designing systems that fail gracefully—and visibly—when the future surprises us.

3. The Collapse of Proportionality

One of the most damaging pathologies of contemporary technology discourse is the collapse of proportionality.

Every risk is framed as catastrophic. Every acceleration is framed as reckless. Every delay is framed as negligent.

When proportionality collapses, decision‑making collapses with it. Leaders oscillate between paralysis and overreach. Public debate becomes absolutist. Tradeoffs—real, unavoidable tradeoffs—are treated as moral failures rather than engineering realities.

A science of responsible innovation restores proportionality. It creates shared frameworks for reasoning about magnitude, likelihood, reversibility, and distribution of harm and benefit.

This includes weighing immediate life-saving benefits against high-uncertainty, high-severity future risks; democratizing access against increasing misuse potential; and decentralization against accountability.

Responsible innovation does not deny these tensions. It makes them explicit, measurable, and governable.

4. Institutional Fit and Capacity Alignment

Technologies do not land in abstract societies.
They land in institutions—with laws, norms, competencies, and failure modes.

Responsible innovation therefore asks not only “Is this technology safe?” but “Is this system ready?”

* Do regulators have the technical capacity to oversee it?
* Do courts have frameworks to adjudicate harm?
* Do users understand its limits?

In some cases, responsibility means delaying deployment until institutional capacity catches up. In others, it means investing directly in that capacity as part of the innovation process.

The lesson of secure‑by‑design applies here: technology that outruns governance does not remain free—it invites overcorrection.

5. Continuous Oversight and Adaptive Governance

Responsibility does not end at launch.

Complex systems evolve. Users adapt. Adversaries probe. Markets shift. Responsible innovation therefore treats governance as continuous, not episodic.

This includes post‑deployment monitoring, incident reporting, audit trails, rollback mechanisms, and sunset clauses. It also requires cultural norms that reward early disclosure of failure rather than concealment.

Responsibility, like security, is never “done.” It is maintained.

Part III: Measurement—Turning Responsibility Into a Science

If responsibility is a science, it must be measurable.

This does not mean reducing ethics to a single number. It means developing proxy metrics that allow organizations to reason rigorously about risk, benefit, and uncertainty.

Examples include:

* Key Risk Indicators (KRIs) tied to misuse likelihood, systemic dependency, or institutional fragility.
* Economic models that quantify near‑term benefits against long‑tail downside.
* Auditability measures that track decision provenance and model evolution.
* Governance latency metrics that assess how quickly oversight mechanisms can respond to failure.

Measurement does not eliminate judgment—but it disciplines it.
It allows disagreements to be surfaced, assumptions to be tested, and learning to compound over time.

Part IV: Practicing Responsible‑by‑Design

What does this science look like in practice?

It looks like interdisciplinary teams where engineers, domain scientists, economists, and policy experts work together from inception—not as reviewers, but as co‑designers.

It looks like violet teams with real authority, whose findings shape roadmaps rather than decorate reports.

It looks like staged deployment strategies that deliberately constrain early use cases and expand only as evidence accumulates.

Crucially, it looks like executive ownership.

A science of responsible innovation cannot live in a safety silo. It must be integrated into business strategy, capital allocation, and P&L accountability. Leaders

There are moments in history when the axis of human understanding tilts just enough to change the course of civilization. The printing press. The microscope. The transistor. And now, the emergence of scientific superintelligence—the culmination of the converging FABRIC technologies: Fusion, Artificial Intelligence, Biotechnology, Robotics, and Innovative Computing.

For centuries, science has been the slowest part of progress. Not because of a lack of tools, but because of the bandwidth of thought. We’ve been limited by the speed at which humans can hypothesize, experiment, and interpret. Even as our instruments have become more precise, our collective reasoning has remained bounded by the cognitive bottleneck of the human mind. But the 21st century—accelerated by the twin engines of biotechnology and artificial intelligence, and now reinforced by the entire FABRIC stack—is rewriting that story.

The podcast audio was AI-generated using Google’s NotebookLM.

In 2012, two scientific events quietly redefined the possibilities of life and intelligence. Jennifer Doudna and Emmanuelle Charpentier published the now-famous CRISPR paper, showing that a simple bacterial immune system could become a universal gene-editing tool. That same year, a deep learning architecture called AlexNet shattered decades of stagnation in computer vision by teaching a machine to see with unprecedented precision. These two breakthroughs—one biological, one computational—ignited revolutions that would ultimately converge. CRISPR gave us the ability to edit the code of life. AlexNet gave us the ability to teach machines to learn from the world. Together, they set the stage for a new epoch: a world where evolution itself becomes an engineered process, and discovery becomes a computable one.

A decade later, in 2022, that convergence took on linguistic form. When OpenAI released ChatGPT, the public met an AI system that didn’t just process data—it reasoned, synthesized, and conversed.
It was the first time that the invisible machinery of deep learning felt human-adjacent, even if imperfectly so. It marked the beginning of an era where artificial intelligence could participate in the generative act of thought itself. But what happens when that reasoning power, now applied to words, is turned toward the practice of science? What happens when the scientific method itself (hypothesis, experiment, analysis, iteration) becomes not just assisted by computation, but executed by it?

That question defines the next decade. And the answer is the birth of scientific superintelligence, a distributed architecture where the FABRIC of progress fuses into one coherent system of discovery.

The FABRIC of Discovery

The future of science is being woven from five threads: Fusion, AI, Biotech, Robotics, and Innovative Computing. Each is transformative on its own. Together, they form the infrastructure of a new epistemology.

* Fusion represents the energy substrate: the ability to power our ambitions indefinitely. It’s not just about clean energy; it’s about enabling limitless experimentation. When computation and experimentation are no longer resource-bound, science becomes a perpetual motion machine.
* AI provides the reasoning substrate: the ability to generate and test hypotheses at scale. It moves us from data analysis to knowledge synthesis, from automation to cognition.
* Biotechnology is the substrate of life itself: the medium through which the principles of learning and evolution are physically realized. Synthetic biology, cell-free systems, and programmable genomes turn life into a computational domain.
* Robotics brings embodiment to science: hands that execute, instruments that perceive, and autonomous labs that close the loop between idea and result. They make scientific iteration continuous and scalable.
* Innovative Computing, from quantum to neuromorphic systems, provides the architecture for complexity.
It enables reasoning across hierarchies of matter, energy, and information, accelerating discovery beyond the limits of classical computation.

When woven together, these technologies form a self-reinforcing feedback loop of discovery. Fusion powers computation. Computation guides biology. Biology informs robotics. Robotics accelerates experimentation. And the entire system learns from itself. This is not incremental progress; it’s recursive progress. A civilization-scale experiment in teaching the universe to understand itself.

The Bottleneck of Discovery

The history of science is a history of bottlenecks. The telescope expanded our observation. The printing press expanded our communication. The computer expanded our calculation. But the act of discovery (the process by which we generate, test, and refine ideas about reality) has remained stubbornly analog. It’s still a craft, dependent on the intuition of the few and the slow accumulation of the many.

The modern scientific method, formalized during the Enlightenment, has served us well. It taught us to build knowledge through falsification, replication, and peer review. But it also introduced latency. Each hypothesis requires months, or years, of design, funding, experimentation, and publication. Each insight is mediated by human bias, institutional inertia, and the physics of paper. In the 20th century, this model worked because the world changed linearly. In the 21st, it no longer does.

We now live in an exponential century. Data doubles faster than our ability to interpret it. Biological and physical systems are too complex for human reasoning alone. The problem isn’t that science is wrong; it’s that it’s too slow.
And in the face of pandemics, climate tipping points, and the rapid fusion of intelligence and matter, slow science is a form of existential risk.

That’s why the paradigm shift now underway isn’t just about new technologies; it’s about a new architecture for knowledge itself.

From Tools to Teammates

Scientific superintelligence isn’t an algorithm. It’s an ecosystem. It’s the convergence of the FABRIC stack: automated experimentation, large-scale reasoning, self-improving models, and human collaboration loops. It’s the transition from science done by humans with tools to science done by systems with humans.

The early precursors already exist. Self-driving labs at places like Carnegie Mellon and AstraZeneca now design, execute, and optimize experiments faster than any research team could. Foundation models are learning chemistry and protein folding from first principles. Multimodal AI systems can read the literature, generate hypotheses, design experiments, and interpret results. What we’re witnessing is the emergence of the first AI Scientists: machines capable of reasoning about the unknown.

In 2021, Hiroaki Kitano published the Nobel Turing Challenge manifesto, proposing a goal audacious enough to rally a generation: to build an AI scientist capable of winning a Nobel Prize by 2050. It was more than a technical challenge; it was a philosophical one. Could we design a system capable not just of automation, but of autonomy? Could we build a machine that doesn’t just execute experiments, but understands the principles behind them?

I co-funded the first international workshop on that challenge through ONR Global while at the Pentagon, precisely because it represented the next great leap: not in computation, but in cognition. We weren’t just funding research; we were redefining what it meant to do science. The ultimate goal wasn’t to replace scientists, but to expand the frontiers of discovery beyond the limits of human attention.
It was a recognition that the future of knowledge creation depends on merging human curiosity with machine capacity.

Accelerating at the Speed of Computation

If the 2010s were the decade of learning and the 2020s the decade of reasoning, the 2030s will be the decade of discovery engines. Over the next ten years, we’ll witness the birth of autonomous science systems: networks of reasoning models, robotic labs, fusion-powered computing clusters, and self-updating knowledge graphs that continuously generate, test, and refine hypotheses.

These systems will operate at the speed of computation rather than the speed of thought. They’ll ingest the totality of human knowledge (papers, data sets, code, experimental logs) and model the unexplored corners of possibility. They’ll propose new experiments, run them autonomously, and feed the results back into their reasoning architecture. Discovery will become continuous, recursive, and accelerating.

The implications are staggering. We’ll see the rise of fully integrated “closed-loop science” platforms where hypothesis generation, experimental execution, and theory formation occur simultaneously across digital and physical domains. Biology will become as programmable as software. Materials science will evolve from serendipity to search. Climate modeling will shift from simulation to synthesis. The very idea of a research project will transform, from a human-led process to a system-led evolution of understanding.

The Human in the Loop

Scientific superintelligence won’t make scientists obsolete; it will make them more essential. The new frontier of science isn’t about doing experiments; it’s about designing the systems that do them. It’s about crafting architectures of curiosity, embedding ethics into algorithms, and teaching machines what matters.

Humanity’s comparative advantage won’t be in calculation but in context. We’ll define the questions, interpret the meaning, and connect the discoveries back to values, narratives, and needs.
The next Einstein might not be a person (it might be a distributed system trained on all of human science), but the next Darwin will still be human, because synthesis, empathy, and storytelling remain ours to give.

That’s why the most important scientific institutions of the next decade won’t be universities or corporations, but hybrid ecosystems: places where humans and machines co-create understanding. The scientist of 2035 will look less like a lab-coat res