Weekly Dose of GenAI Adoption

Author: Indy Sawhney

© Indy Sawhney

Description

Welcome to the "Accelerating GenAI Adoption Podcast" hosted by Indy Sawhney. Weekly AI-hosted discussions explore GenAI insights, use cases, and research based on Indy's LinkedIn newsletters. Experience newsletter content as dynamic audio conversations to enhance your understanding of generative AI technologies. Tune in for thought-provoking dialogues that empower you to navigate the evolving GenAI landscape.

Music credits:

I Miss You, Southern Winds by | e s c p | https://www.escp.space

https://escp-music.bandcamp.com

104 Episodes

This newsletter by Indy Sawhney argues that token consumption serves as the most accurate, real-time indicator of how effectively a company is integrating artificial intelligence. While many executives focus on lagging metrics like productivity gains or simple inputs like the number of licenses purchased, the author suggests that monitoring the volume of AI processing reveals the true extent of daily usage across teams. The text provides a strategic framework for enterprise leaders, encouraging them to treat tokens as a management tool rather than just a technical expense. By tracking these metrics by business unit and workflow, organizations can move past performative pilots to achieve genuine transformation. Ultimately, the source emphasizes that visibility into token data allows leaders to identify adoption gaps before they impact financial results.

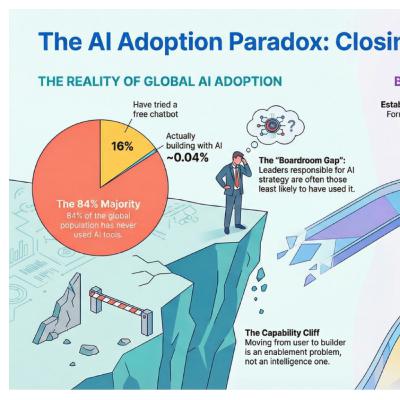

This newsletter by Indy Sawhney addresses the critical gap between rapid AI deployment and organizational governance. It highlights a significant "onramp" problem where executive leaders often approve AI strategies without having personally used the technology, leading to institutional risk. The text argues that the difficulty in moving from a casual user to a builder is an enablement failure rather than a lack of intelligence. To resolve this, the author suggests forming dedicated AI Councils and requiring hands-on learning for all decision-makers. Ultimately, the source serves as a guide for enterprise leaders to build sustainable structures that keep pace with technological evolution.

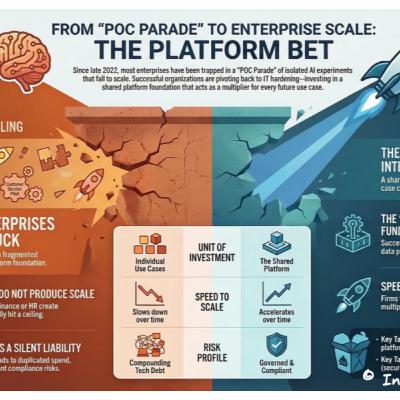

In this newsletter, Indy Sawhney explains why enterprises must transition from isolated AI experimentation to a unified platform strategy. He argues that while proof-of-concept projects generate early excitement, they eventually hit a ceiling that prevents true organizational scaling. To overcome this, leaders are encouraged to invest in foundational infrastructure, including robust security architecture, governed data pipelines, and consistent compliance guardrails. This shift from chasing individual use cases to building a shared enterprise foundation reduces long-term costs and minimizes technical debt. Ultimately, the source provides a strategic framework for moving beyond the pilot phase to achieve sustainable and responsible AI adoption.

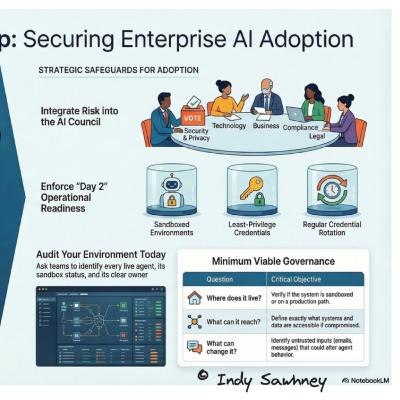

This newsletter from Indy Sawhney at AWS explores the critical security risks associated with the rapid deployment of agentic AI in enterprise environments. The author highlights how developers often copy vulnerable reference patterns into production, creating dangerous visibility gaps for security teams. By referencing incidents like the Clawbot and MCP breaches, the text illustrates how unauthorized access can occur when agents lack proper sandboxing or oversight. To mitigate these threats, the source advocates for minimum viable governance and moving risk assessment directly into the AI Council. Ultimately, the text argues that sustainable AI adoption requires treating agents like production-grade systems rather than experimental tools.

This newsletter commemorates the 100th edition of a series by Indy Sawhney, an expert at Amazon Web Services who specializes in implementing generative AI within large organizations. The text identifies the primary obstacle to progress as a lack of alignment among different corporate stakeholders, such as financial and security officers, who often view technology goals differently. To overcome these hurdles, the author recommends bridging the gap between grassroots experiments and executive priorities through a dedicated AI Council. Success also depends on operational readiness, which involves establishing clear financial models and internal skills rather than just accessing new software. Finally, the source emphasizes that effective governance is built on consistent, small habits and documentation rather than complex, rigid policies. Together, these insights form the foundation of a forthcoming book titled Scaling AI Adoption.

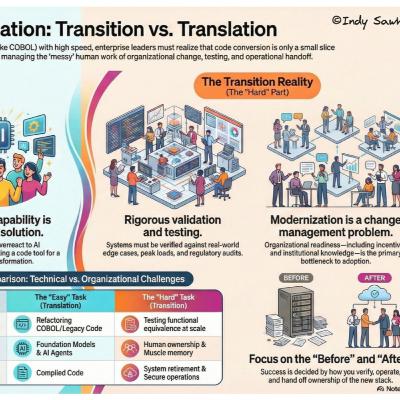

The provided text is an edition of a professional newsletter authored by Indy Sawhney, an expert in artificial intelligence strategy at Amazon Web Services. The author argues that while modern tools can easily automate code translation, true enterprise transformation depends on managing the complex human and operational shifts that follow. Using the example of mainframe modernization, the source explains that technical capabilities often outpace an organization's ability to test, validate, and integrate new systems safely. Leaders are encouraged to prioritize change management and operational readiness over the mere adoption of new software features. Ultimately, the text serves as a guide for navigating the practical challenges of scaling generative AI within large, regulated industries.

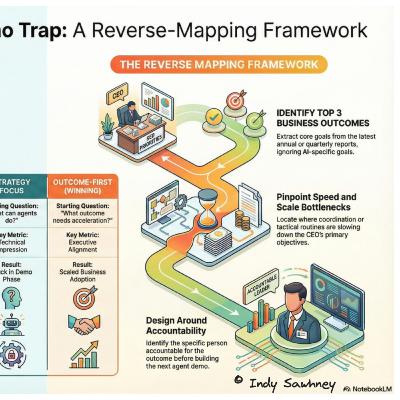

This newsletter by Indy Sawhney, an AI strategy leader at AWS, offers practical advice for organizations struggling to scale Agentic AI. The text argues that many initiatives fail because teams focus on the novelty of technology rather than addressing specific business outcomes prioritized by executive leadership. Using a pharmaceutical case study, the author illustrates how to move past impressive demos by identifying operational bottlenecks where speed and scale are most critical. The source provides a framework for reverse mapping AI capabilities to the goals found in annual reports, ensuring projects secure budget and support. Ultimately, the newsletter serves as a guide for leaders to bridge the gap between experimental automation and meaningful corporate strategy.

The provided text features a newsletter by Indy Sawhney, an AWS technology leader, focused on the strategic integration of generative AI within professional environments. The author argues that while AI may not eliminate jobs entirely, employees who successfully master AI workflows will gain a significant competitive edge over those who do not. To foster this transition, the source provides actionable advice for leaders, suggesting they conduct learning surveys and encourage small, low-risk experiments rather than relying on fear-based tactics. The newsletter defines "AI-native" skills as the ability to design processes that balance machine automation with essential human judgment and governance. Overall, the content serves as a guide for both individuals and organizations to accelerate technology adoption through practical, incremental steps and open collaboration.

This newsletter excerpt by Indy Sawhney outlines a tiered risk framework designed to manage the growing challenge of unregulated AI usage within large organizations. The author proposes a structured governance model that categorizes AI agents into three distinct tiers based on their autonomy and data sensitivity. High-stakes applications require rigorous monitoring and legal oversight, while lower-risk tools focus on building operational muscle memory through simpler intake forms. To bridge the gap between executive policy and technical practice, leaders are encouraged to evaluate every AI pilot using three core questions regarding data origin, human intervention, and personal accountability. Ultimately, the text argues that enterprises must balance innovation speed with safety protocols to scale generative AI effectively without incurring technical debt.

This newsletter excerpt by Indy Sawhney outlines a strategic framework for successfully launching AI initiatives within large organizations. The author argues that most digital transformations fail due to employee apprehension and rigid structures rather than technical shortcomings. To overcome these hurdles, the source recommends focusing on minimum viable products, flexible governance, and consistent momentum to build psychological safety. By prioritizing small, specific wins and iterative reviews, leaders can transform initial experiments into scalable blueprints for enterprise-wide adoption. Ultimately, the text serves as a practical guide for moving past the "hype" phase to achieve meaningful impact through disciplined execution.

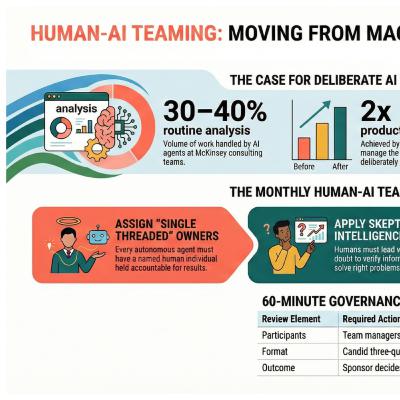

This newsletter from Indy Sawhney discusses the transition of AI agents from simple tools to active workplace teammates. It highlights how major firms are already using these agents to handle a significant portion of routine analysis, leading to substantial productivity gains. To manage this shift, the author proposes a Monthly Human-AI Teaming Review to establish formal governance and oversight. This framework ensures that humans remain accountable for autonomous tasks while developing necessary skills like skeptical intelligence. Ultimately, the text argues that treating AI as part of the supervised workforce prevents inefficiency and clarifies the human-AI interface.

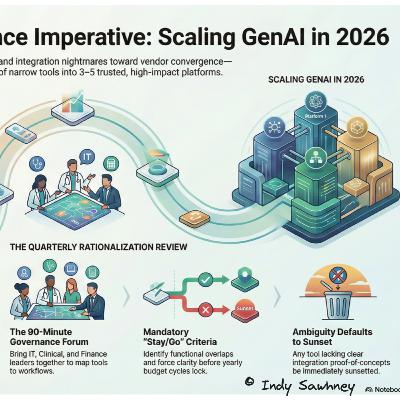

Indy Sawhney’s newsletter highlights a major shift in the corporate landscape from experimental AI pilots to a more disciplined vendor convergence strategy. The author argues that organizations must transition from managing numerous small-scale tools to focusing on a few integrated enterprise platforms to avoid budget dilution and technical debt. To achieve this, Sawhney proposes a Quarterly AI Vendor Rationalization Review, a governance framework designed to map tools to specific workflows and eliminate redundant software. This structured approach moves accountability from individual departments to executive leadership, ensuring that AI investments provide measurable value. By adopting these standardized rhythms, leaders in sectors like healthcare can better scale innovation while maintaining strict oversight of their digital ecosystems.

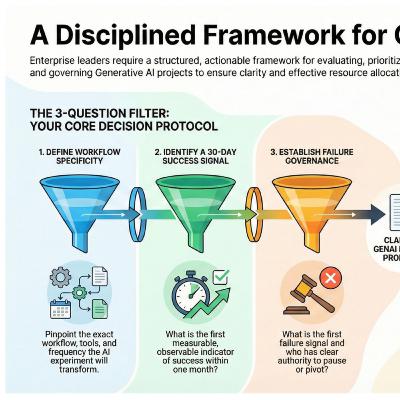

This newsletter excerpt from Indy Sawhney outlines a structured decision-making framework designed to help corporate leaders manage the growing volume of generative AI proposals. The author introduces a three-question filter that requires project teams to define their specific workflows, immediate success indicators, and clear protocols for potential failure. To operationalize this strategy, Sawhney recommends a monthly governance forum where teams present concise, one-page narratives to ensure clarity and accountability before resources are committed. By shifting the analytical burden to initiative leads, this method helps management avoid bottlenecks while prioritizing actionable experiments over vague concepts. Ultimately, the text serves as a practical guide for scaling AI adoption responsibly within large organizations through disciplined evaluation and human-led oversight.

This newsletter marks the 91st edition of a series focused on the practical implementation of generative AI within organizations. The primary focus of this issue is the introduction of a custom application built on Amazon PartyRock designed to evaluate a team's preparedness for automation. Using the R.E.A.L. framework, the tool assesses concrete workflows, organizational support, technical proficiency, and leadership commitment. Author Indy Sawhney encourages small teams to move beyond theoretical strategies by launching targeted experiments on specific daily tasks. By providing actionable insights and a new companion podcast, the source aims to simplify complex digital transformations for the healthcare and life sciences sectors. The ultimate goal is to foster responsible innovation through incremental, evidence-based improvements in workplace efficiency.

This newsletter reflects on the current state of artificial intelligence adoption and provides a strategic framework for future growth. The author argues that real progress occurs through small, agile teams rather than high-level corporate presentations. To facilitate this, the R.E.A.L. readiness model is introduced, emphasizing practical workflows, leadership support, and technical skills. Beyond technical implementation, the text explores how AI is subtly altering human language and cultural norms. Ultimately, the source serves as a guide for leaders to move from theoretical planning to tangible experimentation in the evolving landscape of 2026.

The 89th edition of the Weekly Dose of GenAI Adoption newsletter provides a strategic framework for implementing generative AI within corporate environments. The author, Indy Sawhney, argues that true organizational value is found at the "edge", where individual teams operate, rather than in high-level executive roadmaps. To address common obstacles like fear and lack of direction, the source introduces the R.E.A.L. framework, which focuses on identifying Real work (concrete workflows), ensuring Empowerment and air cover, building Ability through skills, and fostering Leadership nerve. By prioritizing these practical human factors over technical capabilities, managers can better prepare their teams for AI integration in the coming years. The text serves as both a reflection tool and a call to action for leaders to move beyond theoretical support toward measurable experimentation.

The provided text originates from the "Weekly Dose of GenAI Adoption" newsletter, specifically the 88th edition authored by Indy Sawhney, a Generative AI Strategy & Adoption Leader at AWS. The core focus of this installment is the critical need for "Sponsorship You Can See" in accelerating GenAI and agentic AI projects within enterprises. Sawhney argues that, unlike previous transformations, these initiatives require visible, active executive backing that goes beyond mere permission. Leaders must clear the path by removing weekly friction, and they must tell the story by connecting the AI work to existing business problems. Ultimately, effective sponsors must own the outcome by linking the AI pilot's success or failure to established, trackable business metrics, thereby elevating the project from an experiment to a firm commitment.

This edition of the "Weekly Dose of GenAI Adoption" newsletter, authored by AWS expert Indy Sawhney, provides a practical framework for organizations seeking to accelerate Generative AI adoption. The author introduces the "Mini Iceberg" exercise, a localized method inspired by MIT's national Project Iceberg Index, which estimates AI's technical capacity to perform tasks representing significant national wage value. This simple, team-level workshop instructs employees to list their routine work and score it based on three criteria: Repetition, Structure, and AI Helpfulness. Tasks with a resulting high score (11–15) are identified as strong candidates for Agentic AI assistance and immediate experimentation. The ultimate purpose of this methodology is to shift the corporate conversation from potential job displacement to proactively identifying specific work that can be safely automated, thereby allowing human workers to focus on complex, high-judgment activities.

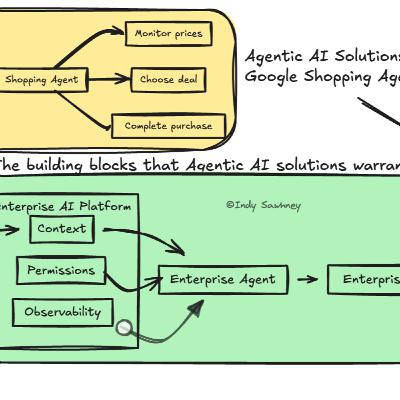

The source material is derived from a professional newsletter discussing strategies for accelerating Generative AI (GenAI) adoption within large organizations, with a special focus on the healthcare and life sciences sectors. The author uses consumer AI features, such as Google’s autonomous shopping agents, to illustrate the high expectations employees and customers now have for AI that can execute entire workflows rather than merely answer questions. This shift exposes a significant Agent-Ready Enterprise Platform Gap: enterprises lack the infrastructure needed to safely host agents that take action, which require consistent context, clear permissions and policy, and robust observability. To achieve enterprise readiness, the text advises leaders to start with manageable, high-pain workflows, define pre-approved actions explicitly, and proactively integrate risk and compliance teams into the design phase. Success ultimately depends on funding the underlying platform capabilities early to ensure agents can act with the necessary context and auditability required in regulated industries.

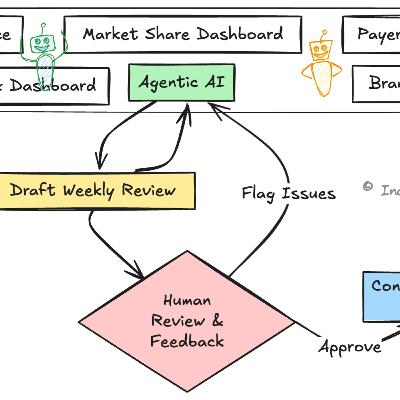

The provided text comes from the "Weekly Dose of GenAI Adoption" newsletter, edition #85, authored by Indy Sawhney, a Generative AI Strategy & Adoption Leader at AWS. The central focus is on practical, actionable insights for accelerating the adoption of Generative AI (GenAI) within the enterprise, particularly in the healthcare and life sciences sector. A significant portion of the newsletter details a specific experiment within a big pharma company where an "agentic AI" was deployed to automate the manual, time-consuming Commercial Business Review process, successfully freeing up over four hours per manager per week. The author emphasizes that real-world ROI in GenAI adoption stems from addressing genuine pain points, iterative refinement with Subject Matter Expert (SME) feedback, and focusing on tangible gains like time savings, accuracy, and reduced stress.