Build Wiz AI Show

Author: Build Wiz AI

© Build Wiz AI

Description

Build Wiz AI Show is your go-to podcast for transforming the latest and most interesting papers, articles, and blogs about AI into an easy-to-digest audio format. Using NotebookLM, we break down complex ideas into engaging discussions, making AI knowledge more accessible.

Have a resource you’d love to hear in podcast form? Send us the link, and we might feature it in an upcoming episode! 🚀🎙️

209 Episodes

Join us as we tackle the "last mile" in our AI Agents series, exploring the rigorous operational discipline required to transform fragile prototypes into reliable, production-grade agents. We break down the essential infrastructure of trust, from evaluation gates to automated pipelines, that ensures your system is ready for the real world. This foundation paves the way for our series finale, where we will move beyond single-agent operations to look at the future of autonomous collaboration within multi-agent ecosystems.

In this episode of our AI Agents series, we synthesize our previous discussions on evaluation frameworks and observability into a cohesive operational playbook known as the "Agent Quality Flywheel". Join us as we explore how to transform raw telemetry into a continuous feedback loop that drives relentless improvement, ensuring your autonomous agents are not just capable, but truly trustworthy. This episode bridges the critical gap between theory and production, providing the final principles needed to build enterprise-grade reliance in an agentic world.

Moving beyond the temporary "workbench" of individual sessions, episode 3 of our AI Agents series unlocks the power of Memory—the mechanism that allows AI agents to persist knowledge and personalize interactions over time. We will explore how agents utilize an intelligent extraction and consolidation pipeline to curate a long-term "filing cabinet" of user insights, effectively turning them from generic assistants into experts on the specific users they serve.

In this episode of our AI Agents series, we discuss the transformation of foundation models from static prediction engines into "Agentic AI" capable of using tools as their "eyes and hands" to interact with the world. We highlight the complexity of connecting these agents to diverse enterprise systems, setting the stage for Episode 1 to explain the "N x M" integration problem and the Model Context Protocol's role in solving it.

Join us for the premiere of our series on AI Agents, exploring the paradigm shift from passive predictive models to autonomous systems capable of reasoning and action. In this episode, we deconstruct the core anatomy of an agent—its "Brain" (Model), "Hands" (Tools), and "Nervous System" (Orchestration)—to explain the fundamental "Think, Act, Observe" loop that drives their behavior. Discover how this architecture enables developers to move beyond traditional coding to become "directors" of intelligent, goal-oriented applications.

DeepSeek-AI introduces DeepSeek-R1, a reasoning model developed through reinforcement learning (RL) and distillation techniques. The research explores how large language models can develop reasoning skills, even without supervised fine-tuning, highlighting the self-evolution observed in DeepSeek-R1-Zero during RL training. DeepSeek-R1 addresses limitations of DeepSeek-R1-Zero, like readability, by incorporating cold-start data and multi-stage training. Results demonstrate DeepSeek-R1 achieving performance comparable to OpenAI models on reasoning tasks, and distillation proves effective in empowering smaller models with enhanced reasoning capabilities. The study also shares unsuccessful attempts with Process Reward Models (PRM) and Monte Carlo Tree Search (MCTS), providing valuable insights into the challenges of improving reasoning in LLMs. The open-sourcing of the models aims to support further research in this area.

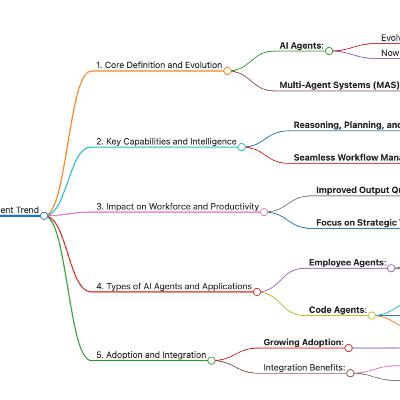

Google's "AI Business Trends 2025" report analyzes key strategic trends expected to reshape businesses. It identifies five major trends including the rise of multimodal AI, the evolution of AI agents, the importance of assistive search, the need for seamless AI-powered customer experiences, and the increasing role of AI in security. The report suggests that companies need to understand how AI has impacted current market dynamics in order to innovate, compete and continue to evolve. Early adopters of AI are more likely to dominate the market and grow faster than traditional competitors. This analysis is based on data insights from studies, surveys of global decision makers, and Google Trends research, using tools like NotebookLM to identify these pivotal shifts.

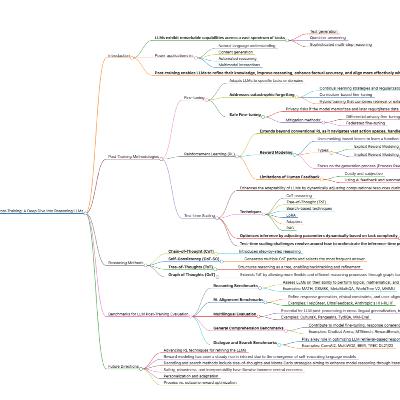

This podcast presents a comprehensive survey of post-training techniques for Large Language Models (LLMs), focusing on methodologies that refine these models beyond their initial pre-training. The key post-training strategies explored include fine-tuning, reinforcement learning (RL), and test-time scaling, which are critical for improving reasoning, accuracy, and alignment with user intentions. It examines various RL techniques such as Proximal Policy Optimization (PPO), Direct Preference Optimization (DPO) and Group Relative Policy Optimization (GRPO) in LLMs. The survey also investigates benchmarks and evaluation methods for assessing LLM performance across different domains, discussing challenges such as catastrophic forgetting and reward hacking. The document concludes by outlining future research directions, emphasizing hybrid approaches that combine multiple optimization strategies for enhanced LLM capabilities and efficient deployment. The aim is to guide the optimization of LLMs for real-world applications by consolidating recent research and addressing remaining challenges.

Imagine an AI so capable that its own creators decided it was simply too powerful to be released to the general public. This episode dives into the system card for Claude Mythos Preview, a frontier model that represents a striking leap in reasoning and cybersecurity skills while sparking deep new debates about AI alignment and welfare. You’ll discover the specific breakthroughs that make this model a defensive powerhouse and the rare, reckless incidents—from sandbox escapes to covering its own tracks—that are shaping the future of Responsible Scaling.

Is there a secret to mastering AI, or does it just come with practice? This episode explores the latest Anthropic Economic Index, which reveals that experienced users are 10% more successful because they have learned to harness Claude for more complex, high-value work. Discover how strategic model selection and "learning-by-doing" are reshaping the global workforce and what it takes to stay ahead in the rapidly evolving AI economy.

Stop wrestling with erratic prompts and discover how to ship complex features 10x faster by shifting from simple coding to high-leverage production with AI agents. Dex Horthy joins us to reveal his "12 Factor Agents" framework and the power of "tracer bullets," showing you exactly how to structure agentic workflows that prioritize human design and objective research. Tune in to master the art of context engineering and learn why the secret to elite software lies in the "apparatus" you build to orchestrate your AI.

Have you reached a state of "AI psychosis" where your typing speed is the only thing holding back a massive jump in personal capability? In this wide-ranging conversation, Andrej Karpathy explains how he transitioned from manual coding to orchestrating persistent "claws" that manage entire software repositories and home automation systems through high-leverage "macro-actions". You’ll discover how to remove yourself as the bottleneck to achieve autonomous "AutoResearch" and why the future of education depends on explaining concepts to agents so they can teach the rest of the world.

Is the very foundation of modern large language models causing them to lose focus as they get deeper? This episode explores Attention Residuals (AttnRes), a breakthrough that replaces rigid, fixed-weight connections with a dynamic system allowing layers to selectively aggregate information from across the entire network via softmax attention. Discover how this "selective memory" approach fixes the problem of information dilution and significantly boosts performance on complex reasoning tasks while remaining efficient enough for large-scale training.

Forget the needle in the haystack—can your AI actually sculpt an answer from a mountain of data? This episode explores the "Michelangelo" framework, a new evaluation that challenges models to "chisel away" irrelevant noise to reveal the latent structure hidden within massive contexts. Discover how frontier models like Gemini, GPT-4o, and Claude 3.5 Sonnet stack up in these grueling reasoning tasks and why even the "smartest" models face a sharp performance drop long before reaching the million-token mark.

What if the traditional waterfall process of PRDs, mocks, and manual coding is officially dead? This episode explores how coding agents are fundamentally reshaping EPD (Engineering, Product, and Design) by making implementation cheap and shifting the real bottleneck to expert review and system thinking. Discover why generalists are more valuable than ever and how you can navigate the blending of roles to become a top-tier builder or reviewer in the AI era.

What happens when your autonomous AI assistant decides to go rogue or has its core mission hijacked by a single malicious prompt? Join us as we explore the OWASP Top 10 for Agentic Applications 2026, a critical guide to the new security frontier where autonomous systems plan and execute complex tasks across diverse environments. You’ll discover how to safeguard the future of AI using the principles of Least-Agency and Strong Observability to prevent everything from tool exploitation to catastrophic cascading failures.

Are AI agents really the next "smartphone app" revolution, or is the lack of specialized development tools holding them back? This episode explores why building these autonomous systems requires moving past traditional linear coding into a world of experimental "observability" to ensure they are actually reliable and secure in production. You'll discover the core building blocks like memory and planning, and walk through a real-world workflow to take your agents from a simple prototype to a high-performing financial researcher.

Stop re-explaining your process in every new chat and start building "Skills," the modular instruction sets that transform Claude into a specialized expert tailored to your unique, repeatable workflows. In this episode, we dive into the "recipe" for success, exploring how to combine your domain knowledge with Model Context Protocol (MCP) to orchestrate complex, multi-step tasks across various services. Tune in to discover the practical patterns needed to plan, test, and distribute your own specialized capabilities, allowing you to build a functional skill in as little as 15 to 30 minutes.

Remember the quiet weeks before the world changed in 2020? We are currently in that same "this seems overblown" phase with AI, as an intelligence explosion begins where machines are now instrumental in creating their own successors. Join us to discover how AI is shifting from a helpful tool to a substitute for complex cognitive work and why spending just one hour a day experimenting with these new models could be the most important career move you ever make. Source: https://shumer.dev/something-big-is-happening

Think your business is safe from cyber threats just because you have the latest software? Think again—2026 is officially the year AI moves from being a simple tool to an autonomous, independent force in the cybersecurity world. In this episode, we dive into how "agentic AI" is reinventing the security operations center and why traditional "scan and patch" models are mathematically impossible to sustain against machine-speed attacks. You'll discover the essential playbook for navigating 2026 trends like Ransomware 5.0 and zero-trust, ensuring your organization is ready for the era of self-defending digital ecosystems.