
Best AI papers explained

688 Episodes


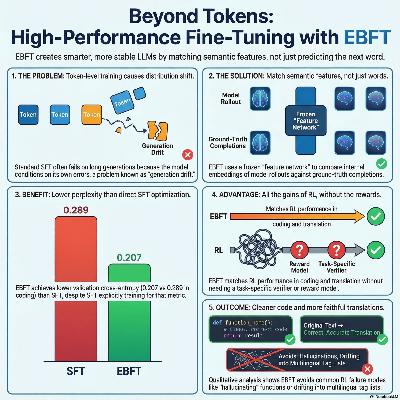

This research paper introduces Energy-Based Fine-Tuning (EBFT), a novel method for refining language models by matching feature statistics of generated text with ground-truth data. Traditional training relies on next-token prediction, which often causes models to drift or fail on long sequences because they lack global distributional calibration. By optimizing a feature-matching objective using a frozen feature network, EBFT provides dense semantic feedback at the sequence level without requiring a manual reward function. Experimental results across coding and translation show that EBFT outperforms standard supervised fine-tuning and matches reinforcement learning in accuracy. Furthermore, it achieves superior validation cross-entropy, indicating that aligning feature moments effectively stabilizes model behavior and preserves high-quality language modeling.

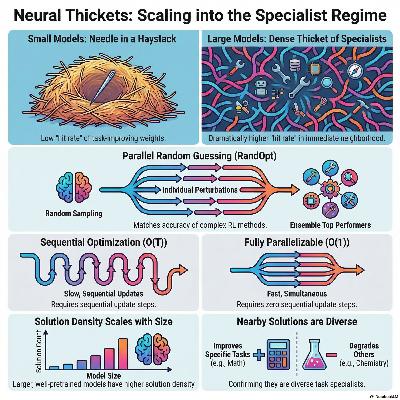

This research introduces the concept of Neural Thickets, describing a phenomenon where large pretrained models are surrounded by a high density of diverse, task-specific solutions in their local weight space. While small models require structured optimization like gradient descent to find improvements, larger models transition into a regime where random weight perturbations frequently yield "expert" versions of the model. The authors exploit this discovery through RandOpt, a parallel post-training method that samples random weight changes, selects the best performers, and ensembles their predictions. Their findings show that these random experts are specialists rather than generalists, often excelling at one task while declining in others, which makes ensembling via majority vote highly effective. This approach proves competitive with standard reinforcement learning methods like PPO and GRPO, especially as model scale increases. Ultimately, the study suggests that sufficient pretraining fundamentally reshapes the loss landscape, making complex downstream adaptation possible through simple parallel search and selection.
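
The sample, select, and ensemble loop described above can be sketched on a toy problem. This is only an illustration under stated assumptions, not the authors' RandOpt implementation: the two-weight linear classifier, the Gaussian perturbation scale `sigma`, and the names `randopt` and `majority_vote` are all hypothetical, and selection is scored on the same tiny dataset for brevity.

```python
import random

def predict(weights, x):
    """Toy linear classifier: 1 if the dot product is positive, else 0."""
    return 1 if sum(w * xi for w, xi in zip(weights, x)) > 0 else 0

def accuracy(weights, data):
    """Fraction of (x, y) pairs the classifier labels correctly."""
    return sum(predict(weights, x) == y for x, y in data) / len(data)

def randopt(base, data, n_samples=64, top_k=5, sigma=0.5, seed=0):
    """Sample random perturbations of `base` and keep the top_k by accuracy."""
    rng = random.Random(seed)
    candidates = [[w + rng.gauss(0.0, sigma) for w in base]
                  for _ in range(n_samples)]
    candidates.sort(key=lambda w: accuracy(w, data), reverse=True)
    return candidates[:top_k]

def majority_vote(experts, x):
    """Ensemble prediction: label 1 only if more than half the experts say 1."""
    votes = sum(predict(w, x) for w in experts)
    return 1 if 2 * votes > len(experts) else 0

# Toy data (assumption): the label is 1 when the first feature is larger.
data = [((1.0, 0.0), 1), ((0.0, 1.0), 0), ((2.0, 1.0), 1),
        ((1.0, 3.0), 0), ((0.5, 0.2), 1), ((0.1, 0.9), 0)]
base = [0.1, 0.1]  # stand-in for pretrained weights
experts = randopt(base, data)
ensemble_acc = sum(majority_vote(experts, x) == y for x, y in data) / len(data)
```

Because every candidate is evaluated independently, the sampling step parallelizes trivially, which is the property the summary highlights.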

This paper introduces AdaEvolve, a novel framework designed to enhance how Large Language Models (LLMs) solve complex optimization and programming tasks through evolutionary search. Unlike existing methods that use rigid, pre-set schedules, this system implements hierarchical adaptivity to manage computational resources and search strategies dynamically. It operates across three levels: local adaptation to adjust exploration intensity, global adaptation to allocate the budget toward promising solution populations, and meta-guidance to generate new tactics when progress stalls. This approach mimics the efficiency of adaptive gradient methods used in continuous optimization but applies it to discrete, zeroth-order problems. Experimental results across 185 benchmarks show that AdaEvolve consistently outperforms standard baselines and human-designed solutions in areas like combinatorial geometry and systems optimization. By replacing brittle manual tuning with a unified improvement signal, the framework demonstrates a more robust and autonomous path for AI-driven discovery.

This paper introduces ∇-Reasoner, a novel framework that improves Large Language Model (LLM) reasoning by applying gradient-based optimization during the inference process. Unlike traditional methods that rely on random sampling or discrete searches, this approach uses Differentiable Textual Optimization (DTO) to refine token logits through first-order gradients derived from reward models and likelihood signals. By iteratively updating textual representations in latent space, the system allows for bidirectional information flow, enabling the model to correct its reasoning chains on the fly. To ensure efficiency, the framework incorporates gradient caching and rejection sampling, which reduce the computational burden typically associated with backpropagation. Empirical results demonstrate that ∇-Reasoner significantly boosts accuracy on complex mathematical benchmarks while requiring fewer model calls than existing search-based baselines. Ultimately, the research establishes a theoretical and practical shift toward treating test-time reasoning as a continuous optimization problem rather than a simple stochastic generation task.

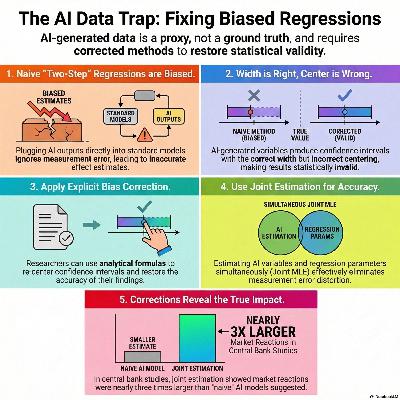

This research investigates how using artificial intelligence (AI) or machine learning (ML) to generate variables for economic regressions can lead to biased estimates and invalid statistical inference. While researchers often treat AI-generated outputs as standard data, the authors demonstrate that measurement error in these variables—even from high-performance algorithms—shifts the centering of confidence intervals, making them unreliable. To address these distortions, the paper introduces two practical solutions: a mathematical bias correction that does not require ground-truth validation data and a joint estimation framework that models the latent variables and regression parameters simultaneously. The effectiveness of these methods is illustrated through diverse applications, including job posting classifications, CEO time-use analysis, and central bank sentiment indexing. Ultimately, the study provides a robust toolkit for economists to maintain statistical integrity when integrating modern computational tools into empirical research.
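
To see why a noisy generated regressor matters, here is a small simulation of the textbook case of classical measurement error. It is a sketch under stated assumptions rather than the paper's setup or its correction: the true slope of 2.0, the unit-variance Gaussian noise, and the name `ols_slope` are chosen purely for illustration.

```python
import random

def ols_slope(xs, ys):
    """Slope of a simple least-squares regression of ys on xs."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var = sum((x - mean_x) ** 2 for x in xs)
    return cov / var

rng = random.Random(0)
n = 20000
true_x = [rng.gauss(0.0, 1.0) for _ in range(n)]
ys = [2.0 * x + rng.gauss(0.0, 1.0) for x in true_x]

# Stand-in for an ML-generated variable: the true value plus prediction error.
noisy_x = [x + rng.gauss(0.0, 1.0) for x in true_x]

slope_true = ols_slope(true_x, ys)    # estimated with the error-free regressor
slope_noisy = ols_slope(noisy_x, ys)  # estimated with the noisy regressor
```

With equal signal and noise variance, the slope estimated from `noisy_x` is pulled toward roughly half the true value, so a confidence interval built around it is centered in the wrong place no matter how large the sample is.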

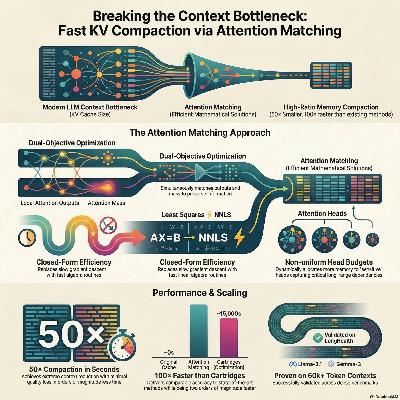

This paper introduces Attention Matching (AM), a novel framework for fast and efficient key-value (KV) cache compaction in long-context language models. As models process longer sequences, the memory required for the KV cache becomes a major bottleneck, often necessitating lossy strategies like summarization or token eviction. The researchers propose optimizing compact keys and values to reproduce the original model's attention outputs and attention mass across every layer. This method achieves up to 50× compaction in seconds, significantly outperforming traditional token-dropping baselines and matching the quality of expensive gradient-based optimization. By incorporating nonuniform head budgets and scalar attention biases, AM maintains high downstream accuracy on complex reasoning tasks while remaining compatible with existing inference engines. Their findings suggest that latent-space compaction is a powerful primitive for managing the memory demands of modern generative AI.

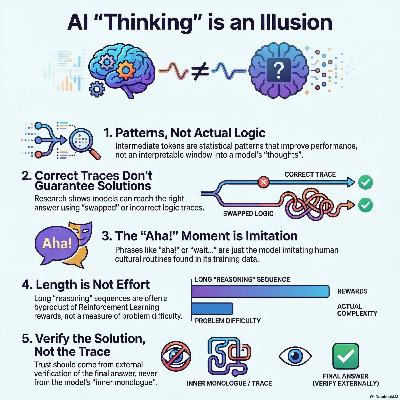

This position paper argues against the anthropomorphization of intermediate tokens in large language models, commonly referred to as "reasoning traces" or "chains of thought." The authors contend that these outputs are not genuine reflections of human-like thinking but are instead statistically generated patterns that may lack semantic validity. Research indicates that model performance can improve even when these traces are factually incorrect or nonsensical, suggesting that the connection between a trace and the final answer is often tenuous. Consequently, viewing these tokens as an interpretable window into a model’s logic can lead to a dangerous overestimation of its reliability. The authors call on the scientific community to move away from human-centric metaphors and focus on external verification of solutions. By treating intermediate tokens as a computational tool for the model rather than an explanation for the user, researchers can pursue more effective and honest AI development.

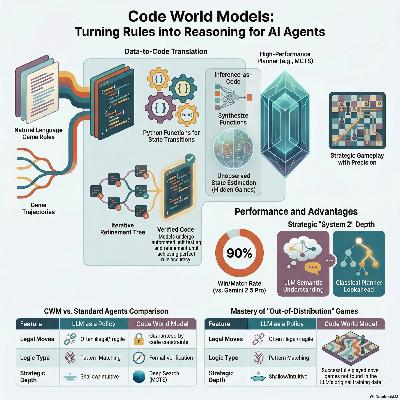

Researchers at Google DeepMind introduced Code World Models (CWM), a framework that uses Large Language Models to translate natural language game rules and player trajectories into executable Python code. Unlike traditional methods that use LLMs as direct move-generating policies, this approach treats the model as a verifiable simulation engine capable of defining state transitions and legal actions. The generated code serves as a foundation for high-performance planning algorithms like Monte Carlo tree search (MCTS), which provides significantly greater strategic depth. The framework also synthesizes inference functions to estimate hidden states in imperfect information games and heuristic value functions to optimize search efficiency. Evaluated across ten diverse games, the CWM agent consistently matched or outperformed Gemini 2.5 Pro, demonstrating superior generalization on novel, out-of-distribution games. This shift from "intuitive" play to System 2 deliberation allows the agent to maintain formal rule adherence while scaling performance with increased computational power.

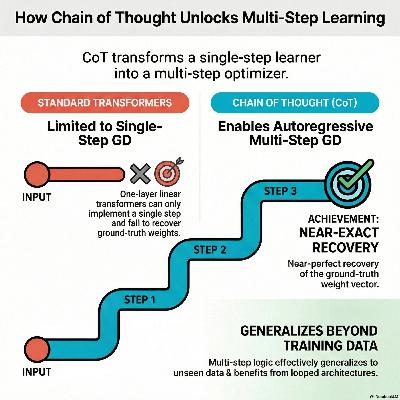

This research paper explores how Chain of Thought (CoT) prompting enables transformers to solve complex mathematical problems by mimicking iterative optimization techniques. The authors demonstrate that while standard models are limited to a single stage of calculation, using intermediate reasoning steps allows a transformer to execute multi-step gradient descent internally. Through the lens of linear regression tasks, the study proves that this autoregressive process leads to a near-perfect recovery of underlying data patterns that simpler models cannot capture. Furthermore, the findings indicate that looped architectures and CoT significantly boost the ability of these models to generalize to new information. Ultimately, the work provides a formal theoretical framework to explain why breaking down problems into smaller parts enhances the algorithmic power of large language models.
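
The contrast between a single stage of calculation and multi-step gradient descent can be illustrated directly on a one-parameter linear regression. This is a plain numerical sketch, not a transformer: the data, learning rate, and function names are assumptions made for illustration.

```python
def gd_step(w, xs, ys, lr):
    """One gradient-descent step on mean squared error for y ≈ w * x."""
    n = len(xs)
    grad = sum(2 * (w * x - y) * x for x, y in zip(xs, ys)) / n
    return w - lr * grad

def run_gd(xs, ys, steps, lr=0.02, w0=0.0):
    """Apply `steps` gradient-descent updates starting from w0."""
    w = w0
    for _ in range(steps):
        w = gd_step(w, xs, ys, lr)
    return w

xs = [1.0, 2.0, 3.0]
ys = [2.0, 4.0, 6.0]  # generated with a true weight of 2.0

w_one = run_gd(xs, ys, steps=1)    # analogous to a single stage of calculation
w_many = run_gd(xs, ys, steps=20)  # analogous to iterating via intermediate steps
```

Each extra step shrinks the remaining error by a constant factor here, so the 20-step estimate lands far closer to the true weight than the one-step estimate.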

This paper explores how task descriptors, such as a mean value $\mu$, improve in-context learning (ICL) for linear regression within Transformer models. By examining a one-layer linear self-attention (LSA) network, the researchers demonstrate that models can effectively utilize these descriptors to standardize input data and reduce prediction errors. The paper provides a mathematical proof that gradient flow training converges to a global minimum, allowing the Transformer to simulate an optimized version of gradient descent. Through various experiments, the authors confirm that adding task information leads to superior performance compared to models without such context. Furthermore, the study reveals that while large sample sizes simplify the model's strategy, finite sample settings require the Transformer to develop more complex internal representations to manage bias and variance. These findings provide a theoretical foundation for the empirical success of prompts and instructions in large language models.

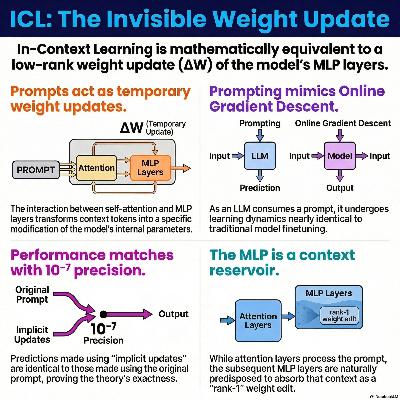

This research explores how modern Large Language Models adapt to new information during inference by framing in-context learning as a series of implicit weight updates. The authors demonstrate that the influence of a prompt can be mathematically mapped to specific, rank-1 patches on a model's existing parameters, effectively "reprogramming" the network without formal retraining. By establishing a framework of input and output controllability, the study proves this phenomenon applies to complex architectures like Gemma, Llama, and Mixture of Experts. Their experiments on Gemma 3 validate that a model with modified weights and no context produces the same outputs as the original model with a prompt. This work provides a mechanistic foundation for understanding how static pre-trained transformers dynamically transmute contextual cues into effective internal parameters.

This research explores the mechanisms of in-context learning (ICL) in Large Language Models, proposing that transformers learn by implicitly updating their internal weights during inference. The authors demonstrate that a transformer block effectively transforms prompt examples into a rank-1 weight update of the model's MLP layer. This process allows the model to adapt to new patterns without permanent training, mathematically mirroring stochastic gradient descent as tokens are processed. Theoretical formulas are provided to map these context-driven adjustments exactly, showing that MLP layers are naturally structured to absorb and store contextual information. Experimental results on linear regression tasks confirm that modifying model weights using these formulas produces identical predictions to providing the original in-context prompt. The study ultimately unifies ICL with model editing and steering vectors, offering a principled framework for understanding how LLMs reorganize their internal representations dynamically.
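
A minimal sketch of why a rank-1 patch can stand in for a prompt, assuming for illustration that the context only changes the vector fed into a weight matrix `W`. The update used here, `W + (W @ delta) a^T / ||a||^2` with `delta = a_ctx - a_plain`, is a linear-algebra identity chosen for this sketch and is not confirmed as the authors' exact formula; all names and values are hypothetical.

```python
def matvec(W, v):
    """Multiply matrix W (list of rows) by vector v."""
    return [sum(wij * vj for wij, vj in zip(row, v)) for row in W]

def rank1_patch(W, a_plain, a_ctx):
    """Return W + (W @ delta) a_plain^T / ||a_plain||^2, delta = a_ctx - a_plain."""
    delta = [c - p for c, p in zip(a_ctx, a_plain)]
    w_delta = matvec(W, delta)
    norm_sq = sum(p * p for p in a_plain)
    return [[wij + wd * pj / norm_sq for wij, pj in zip(row, a_plain)]
            for row, wd in zip(W, w_delta)]

W = [[1.0, 2.0, 0.0],
     [0.0, -1.0, 3.0]]
a_plain = [1.0, 0.5, -1.0]  # layer input for the query alone (hypothetical)
a_ctx = [0.2, 1.5, 0.3]     # layer input for the query with context (hypothetical)

with_context = matvec(W, a_ctx)                                 # original weights, prompt present
patched_no_context = matvec(rank1_patch(W, a_plain, a_ctx), a_plain)  # patched weights, no prompt
```

The two outputs agree because the added term is an outer product of two vectors, which is what makes the patch rank-1.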

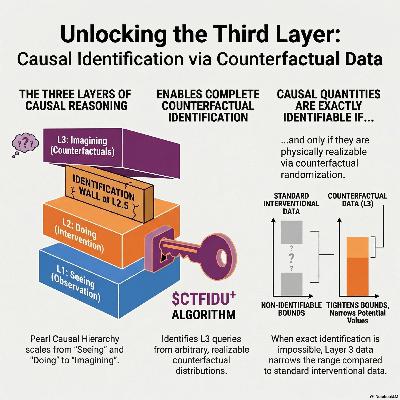

This research establishes a complete algorithmic framework for identifying counterfactual quantities by utilizing a newly discovered family of physically realizable Layer 3 data. While traditional causal inference was restricted to observational and interventional data, the authors introduce the CTFIDU+ algorithm, which can determine if a counterfactual query is identifiable from arbitrary sets of counterfactual distributions. The study defines a fundamental limit to exact causal inference, proving a duality where a query is only point-identifiable if it is also physically realizable through counterfactual randomization. For queries that remain non-identifiable, the authors derive novel analytic bounds that are significantly tighter than previous methods. These theoretical advancements are validated through simulations in fairness and personalized decision-making, demonstrating that access to counterfactual data yields more precise results in practice.

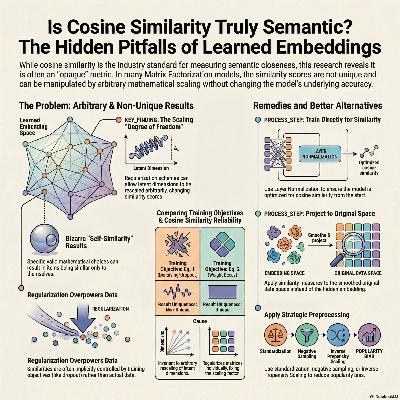

This paper investigates whether cosine similarity accurately reflects the semantic similarity of learned embeddings, particularly in linear matrix factorization models. The authors demonstrate that the metric can produce arbitrary or non-unique results because certain training objectives allow for the random rescaling of latent dimensions. While some regularization methods yield a unique solution, others leave the final similarity scores dependent on opaque modeling choices rather than the underlying data. These findings suggest that the common practice of applying cosine similarity to high-dimensional vectors may lead to misleading conclusions. Consequently, the researchers advise against the untested use of this metric and suggest alternative normalization or projection techniques to ensure more reliable measurements.
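
The rescaling argument can be checked numerically. In the sketch below, the factor matrices `A` and `B` and the scaling vector `d` are made-up values: multiplying each latent dimension of `A` by `d` and dividing the same dimension of `B` by `d` leaves every predicted score unchanged, yet the cosine similarity between two item embeddings shifts.

```python
import math

def dot(u, v):
    """Dot product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))

def cosine(u, v):
    """Cosine similarity between two vectors."""
    return dot(u, v) / (math.sqrt(dot(u, u)) * math.sqrt(dot(v, v)))

# Hypothetical 2-D latent factors for a model of the form X ≈ A B^T.
A = [[1.0, 2.0], [3.0, 1.0]]  # user embeddings
B = [[1.0, 1.0], [2.0, 0.5]]  # item embeddings
d = [10.0, 0.1]               # arbitrary per-dimension rescaling

A_scaled = [[a * s for a, s in zip(row, d)] for row in A]
B_scaled = [[b / s for b, s in zip(row, d)] for row in B]

pred_before = [[dot(a, b) for b in B] for a in A]
pred_after = [[dot(a, b) for b in B_scaled] for a in A_scaled]

cos_before = cosine(B[0], B[1])
cos_after = cosine(B_scaled[0], B_scaled[1])
```

Both factorizations fit the data equally well, so an objective that only looks at the product cannot prefer one cosine value over the other.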

This research introduces **INPAINTING**, a framework that treats finding adversarial "jailbreak" prompts as a simple inference task rather than a slow optimization problem. By using **Diffusion Large Language Models (DLLMs)**, which understand the joint relationship between prompts and responses, the researchers can directly generate prompts that trigger specific harmful outputs. This method effectively **inverts the standard generation process**, allowing a surrogate model to "sample" candidate attacks that are highly transferable to black-box targets like ChatGPT. The resulting prompts are **semantically natural and exhibit low perplexity**, making them difficult for traditional security filters to detect. Compared to existing gradient-based or iterative attacks, this approach is **significantly more efficient** and achieves higher success rates against robustly trained models. Ultimately, the paper highlights a critical security vulnerability: any model capable of modeling joint data distributions can be repurposed as a **powerful natural adversary**.

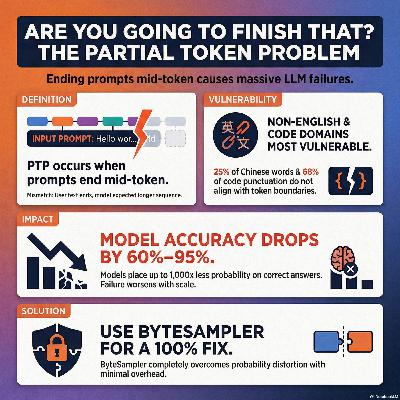

Language models face a significant partial token problem (PTP) when user prompts end in the middle of a multi-character token, causing the model to misinterpret the expected continuation. This research highlights that the issue is not just a theoretical glitch but a pervasive failure mode in natural language use, especially in Chinese, German, and programming code where word and token boundaries frequently misalign. Experiments reveal that even elite models suffer a dramatic drop in accuracy—between 60% and 95%—when encountering these "word-complete" but "token-incomplete" prompts. Surprisingly, this degradation does not improve with increased model scale, as larger models are often more strictly tuned to their specific tokenizers. To address these distortions, the authors evaluate several inference-time mitigations, finding that heuristic "token healing" offers inconsistent results. In contrast, the study validates that an exact solution called ByteSampler can completely eliminate the problem by reconstructing valid token paths. Ultimately, the paper provides practical recommendations for model providers to ensure more reliable text generation across diverse languages and technical domains.
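
A toy illustration of the mismatch, assuming a greedy longest-match tokenizer as a simplified stand-in for real subword tokenizers. The vocabulary and function name are hypothetical, and this shows only the problem, not ByteSampler or token healing.

```python
VOCAB = {"hello", "world", " ", "h", "e", "l", "o", "w", "r", "d"}

def tokenize(text, vocab=VOCAB):
    """Greedy longest-match tokenizer (toy stand-in for a subword tokenizer)."""
    tokens, i = [], 0
    while i < len(text):
        # Try the longest remaining substring first, then shrink.
        for j in range(len(text), i, -1):
            if text[i:j] in vocab:
                tokens.append(text[i:j])
                i = j
                break
        else:
            raise ValueError(f"cannot tokenize {text[i]!r}")
    return tokens

full = tokenize("hello world")   # ['hello', ' ', 'world']
partial = tokenize("hello wor")  # prompt cut off in the middle of 'world'

# True only if the prompt's tokens are a prefix of the full text's tokens.
is_token_prefix = full[:len(partial)] == partial
```

Here the truncated prompt ends in the single-character tokens `'w'`, `'o'`, `'r'`, a sequence the full text never produces, so a model asked to continue it is conditioned on tokens that do not appear in the normal tokenization of the intended text.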

This paper investigates whether large language models (LLMs) can effectively utilize novel information they learn during a single conversation. While models successfully create internal representations of new concepts from context, the study reveals these representations are often inert and cannot be applied to downstream tasks. Experiments on next-token prediction and adaptive world modeling show that both open-weights and closed-source reasoning models struggle to use this in-context knowledge reliably. Even when a model's latent states reflect a new logical structure, it frequently fails to deploy that structure to solve problems. These findings suggest a significant gap between encoding information and the flexible deployment required for truly adaptable artificial intelligence. Overall, the authors conclude that current architectures require new training methods to move beyond simple data recognition toward functional in-context reasoning.

This research explores the discrepancy between transformer in-context learning and Bayesian inference, arguing that models are Bayesian in expectation rather than through every individual realization. While previous studies used martingale diagnostics to question the Bayesian nature of these models, this paper identifies positional encodings as the primary factor that breaks the required exchangeability. By accounting for how architectural design prioritizes sequence order, the authors prove that transformers still achieve near-optimal compression and information-theoretic efficiency when performance is averaged across different orderings. Empirical tests on black-box LLMs and controlled ablations demonstrate that order-induced variance exists but predictably decays as context length increases. Ultimately, the study suggests permutation averaging as a practical and effective method for reducing uncertainty and improving the reliability of model outputs in tasks with exchangeable data.
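
Permutation averaging can be sketched with a toy predictor that is deliberately order-sensitive. The recency-weighted predictor below is an assumption standing in for a model with positional encodings, and enumerating every ordering is only practical for very short contexts; a real application would sample orderings instead.

```python
from itertools import permutations

def order_sensitive_predict(context, decay=0.5):
    """Toy predictor that weights later examples more heavily (recency bias)."""
    n = len(context)
    weights = [decay ** (n - 1 - i) for i in range(n)]
    return sum(w * y for w, y in zip(weights, context)) / sum(weights)

def permutation_average(context, predict=order_sensitive_predict):
    """Average the prediction over every ordering of the context."""
    perms = list(permutations(context))
    return sum(predict(list(p)) for p in perms) / len(perms)

context = [1.0, 2.0, 6.0]
shuffled = [6.0, 1.0, 2.0]  # same examples, different order

single_a = order_sensitive_predict(context)
single_b = order_sensitive_predict(shuffled)
averaged_a = permutation_average(context)
averaged_b = permutation_average(shuffled)
```

The single-pass predictions differ between the two orderings, while the permutation-averaged predictions coincide, which is the variance reduction the summary describes for exchangeable data.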

This paper discusses a framework for reflective test-time planning designed to improve the performance of embodied Large Language Models (LLMs) during robotic tasks. This system utilizes double-loop learning, where agents re-evaluate their past decisions through hindsight assessments to correct underlying strategic errors. By incorporating internal reflection for immediate scoring and retrospective reflection for long-term credit assignment, the model adapts its policy at deployment without requiring additional pretraining data. Experimental results in household and cupboard fitting tasks demonstrate that this approach significantly reduces execution waste and improves success rates compared to standard methods. Furthermore, the researchers employ Low-Rank Adaptation (LoRA) to efficiently update the models, ensuring that the robots can learn from their own trials and errors in real-time environments.

This research introduces Optimal Advantage-based Policy Optimization with Lagged Inference policy (OAPL), a novel reinforcement learning algorithm designed to improve Large Language Model (LLM) reasoning. Traditional methods like GRPO often struggle with "off-policy" data caused by technical mismatches between training and inference engines. OAPL embraces these discrepancies by using a squared regression objective and KL-regularization, allowing the model to learn effectively even when data is significantly outdated. Empirical tests show that OAPL outperforms existing baselines in competition mathematics and matches top-tier coding models while using three times fewer training samples. Furthermore, the algorithm prevents entropy collapse, ensuring that the model maintains diverse and scalable problem-solving capabilities at test time. Ultimately, the authors demonstrate that fully asynchronous, off-policy training is a more stable and efficient path for advancing machine reasoning.