Deep Dive - Frontier AI with Dr. Jerry A. Smith

85 Episodes

Medium: https://medium.com/@jsmith0475/your-ai-forgets-you-mid-conversation-heres-the-architecture-that-doesn-t-9df34fdd61fd

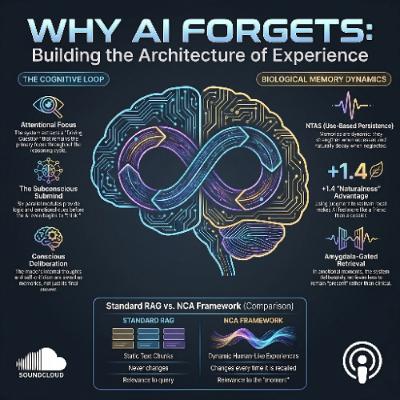

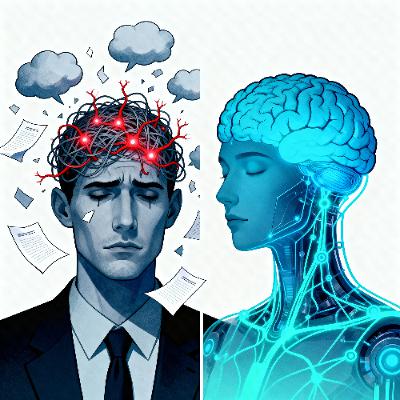

The provided text introduces the Neuro-Cognitive Agent (NCA), a framework designed to solve the chronic "forgetting" problem in large language models by mimicking biological brain structures. Rather than simply expanding storage, this architecture implements a cognitive loop featuring a simulated hippocampus for experiential memory and specialized subconscious modules for emotional and logical analysis. A key innovation is Neuromorphic Temporal Attention Scaling, which allows the system to prioritize important memories while letting irrelevant details fade naturally over time. Through iterative development, the researchers discovered that true intelligence requires a judgment layer that steers the system away from clinical, over-analytical responses and toward natural, empathetic interaction. Ultimately, the source argues that AI must move beyond simple data retrieval to achieve genuine cognition and meaningful, long-term relational continuity.
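
As a rough illustration of the fading-memory idea described above, the sketch below ranks memories by importance multiplied by an exponential time decay. The formula, the `memory_score` name, the 24-hour half-life, and the sample memories are all assumptions made for illustration; the summary does not give the actual Neuromorphic Temporal Attention Scaling rule.

```python
def memory_score(importance: float, age_hours: float,
                 half_life_hours: float = 24.0) -> float:
    """Importance-weighted exponential decay.

    This is an assumed stand-in formula, not the rule used in the article.
    The score halves every `half_life_hours`.
    """
    decay = 0.5 ** (age_hours / half_life_hours)
    return importance * decay


# Hypothetical memories: (text, importance in [0, 1], age in hours).
memories = [
    ("user's name is Ana", 1.0, 72.0),
    ("asked about the weather", 0.1, 2.0),
    ("prefers short answers", 0.8, 48.0),
]

# Highest score first: these are the memories the agent would attend to.
ranked = sorted(memories, key=lambda m: memory_score(m[1], m[2]), reverse=True)
```

Under these assumed numbers, an important two-day-old preference still outranks a trivial remark made two hours ago, which is the qualitative behavior the summary describes.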

Medium Article: https://medium.com/@jsmith0475/god-what-math-tells-us-about-consciousness-and-reality-f4b0334da1f4

Dr. Jerry A. Smith argues that the TREE function indicates that brain complexity exceeds the mathematical limits of physics. Since physical laws cannot account for the irreducible consciousness found in neural structures, a transcendent, intentional source—resembling God—must exist to explain experience.

Medium: https://medium.com/@jsmith0475/why-consciousness-cant-be-reduced-and-mathematics-proves-it-6d6fd1b8518e

This paper, by Dr. Jerry A. Smith, argues that consciousness is irreducible due to the transarithmetical complexity of neural tree space. Using Friedman’s TREE function, the author shows that brain connectivity transcends standard formal systems, making a reductive account mathematically impossible.

Medium Article: https://medium.com/@jsmith0475/federated-ai-agents-418ce3d2b441

This article, by Dr. Jerry A. Smith, discusses how future AI should mimic distributed nervous systems rather than monolithic brains. Federated agents use asynchronous messaging (like MQTT) and heterogeneous models to improve privacy, scalability, and reliability. This architecture thrives where data cannot leave local boundaries.

Medium Article: https://medium.com/@jsmith0475/exotic-reasoning-a-topological-framework-for-understanding-emergent-intelligence-in-large-language-f76eefbae209

The author, Dr. Jerry A. Smith, introduces the Exotic Reasoning Conjecture, a theoretical framework proposing that emergent intelligence in large language models is rooted in high-dimensional topology. Smith suggests that instead of gradual improvement, scaling allows models to suddenly access exotic manifolds: reasoning paths that are logically equivalent to standard logic but geometrically disconnected. The author draws a parallel to Milnor’s exotic spheres, arguing that some cognitive transformations require "corners" or discontinuities that current linear transformer architectures may struggle to navigate. Consequently, the paper posits that achieving true general intelligence might require diverse computational mechanisms, such as diffusion or spiking networks, to explore these isolated geometric territories. Ultimately, the source frames the future of AI not just as a matter of scale, but as a challenge of mapping a complex landscape of unreachable reasoning structures.

Medium Article: https://medium.com/@jsmith0475/multi-dimensional-ai-analysis-for-pharmaceutical-stability-reports-beyond-sequential-review-926319112a16

The author, Dr. Jerry A. Smith, introduces a novel AI framework designed to improve pharmaceutical stability reports by moving beyond simple, linear compliance checklists. Traditional automated reviews often miss why a document is rejected because they ignore the simultaneous tensions between regulatory rules, scientific rigor, and specific client expectations. The researchers propose a multi-dimensional analysis that evaluates eight quality areas in parallel to visualize the trade-offs authors make during the writing process. By identifying these hidden patterns, the system can predict reviewer objections before a report is even submitted. Ultimately, the source argues that treating quality as a complex landscape rather than a binary pass-fail test reduces revision cycles and ensures documents are optimized for their specific audience.

Medium Article: https://medium.com/@jsmith0475/the-100x-cost-reduction-reshaping-enterprise-ai-0e2779fca872

This article, by Dr. Jerry A. Smith, explores a fundamental transition in the artificial intelligence industry from massive, general-purpose models toward specialized ecosystems of smaller, more efficient tools. Driven by a 100x reduction in operational costs, enterprises are increasingly adopting Small Language Models (SLMs) that rival the performance of larger counterparts on specific tasks. This shift is characterized by a hybrid architecture in which routine queries are handled by low-cost models, whereas complex reasoning is delegated to frontier systems only when necessary. Furthermore, the rise of multi-agent orchestration allows these specialized units to work together like a "microservices" network to complete intricate workflows. This modular approach not only enhances economic viability and data security but also mitigates risks such as model collapse and safety failures associated with opaque, monolithic systems. Ultimately, the text argues that future AI success will be defined by the strategic coordination of diverse, targeted models rather than the pursuit of raw parameter scale.
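
The hybrid architecture described above can be sketched as a small router. This is a minimal illustration under stated assumptions: the model names, the keyword list, and the word-count cutoff are hypothetical, and a production router would more likely use a trained classifier than keyword matching.

```python
import re
from dataclasses import dataclass

# Hypothetical model names and hint words, chosen only for illustration.
SMALL_MODEL = "small-model"
FRONTIER_MODEL = "frontier-model"
REASONING_HINTS = {"why", "prove", "compare", "plan", "tradeoff"}


@dataclass
class Route:
    model: str
    reason: str


def route_query(query: str, max_simple_words: int = 20) -> Route:
    """Send routine queries to the cheap model and escalate the rest."""
    words = re.findall(r"[a-z]+", query.lower())
    if len(words) > max_simple_words:
        return Route(FRONTIER_MODEL, "long query")
    if REASONING_HINTS & set(words):
        return Route(FRONTIER_MODEL, "reasoning keyword")
    return Route(SMALL_MODEL, "routine query")
```

For example, `route_query("What is our refund policy?")` stays on the small model, while `route_query("Compare the two vendors and plan a migration")` is escalated.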

Medium: https://medium.com/@jsmith0475/the-scientific-method-for-smarter-ai-how-orthogonal-arrays-can-transform-agentic-goal-achievement-41f43d06d92f

Dr. Jerry A. Smith introduces Orthogonal Agentic Architecture, a scientific framework designed to optimize complex AI systems by overcoming the "curse of dimensionality." Drawing on industrial statistical techniques such as orthogonal arrays and the Taguchi Method, the approach enables developers to test multiple variables simultaneously with a fraction of the usual number of experiments. The source outlines a multi-agent system that automates the scientific method through specialized roles, including orchestrators, execution technicians, and analytical agents. By implementing rigid success tiers and stage-based scoring, the framework replaces subjective "gut feelings" with empirical data to quantify how factors such as temperature and persona interact. Ultimately, this methodology transforms AI development from an intuitive art into a rigorous engineering discipline that exposes hidden misconceptions. Through iterative cycles of testing and refinement, organizations can achieve high-performance agentic behavior with unprecedented computational efficiency.
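
To make the orthogonal-array idea concrete, the sketch below uses the standard L4 array, which covers three two-level factors in four runs instead of the eight a full factorial would need. The factor names, their levels, and the scores are made up for illustration and are not taken from the article.

```python
from itertools import combinations, product

# Standard L4(2^3) orthogonal array: one row per experiment, one column per factor.
L4 = [
    (0, 0, 0),
    (0, 1, 1),
    (1, 0, 1),
    (1, 1, 0),
]

# Hypothetical agent settings: (level 0, level 1) for each factor.
FACTORS = {
    "temperature": (0.2, 0.9),
    "persona": ("terse", "friendly"),
    "few_shot": (False, True),
}


def is_orthogonal(array):
    """True if every pair of columns shows each level combination equally often."""
    for a, b in combinations(range(len(array[0])), 2):
        pairs = [(row[a], row[b]) for row in array]
        counts = {combo: pairs.count(combo) for combo in product((0, 1), repeat=2)}
        if len(set(counts.values())) != 1:
            return False
    return True


def main_effects(scores):
    """Mean score at level 1 minus mean score at level 0, per factor."""
    effects = {}
    for col, name in enumerate(FACTORS):
        high = [s for row, s in zip(L4, scores) if row[col] == 1]
        low = [s for row, s in zip(L4, scores) if row[col] == 0]
        effects[name] = sum(high) / len(high) - sum(low) / len(low)
    return effects


# Made-up success scores for the four runs, in row order.
scores = [0.60, 0.70, 0.80, 0.90]
effects = main_effects(scores)
```

One caveat on the design choice: in an array this small, each main effect is aliased with the interaction of the other two factors, so separating interaction effects would need a larger array.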

Medium Article: https://medium.com/@jsmith0475/the-hidden-toll-neuro-cognitive-harm-from-sustained-manual-verification-work-c5788b210ac9?postPublishedType=initial

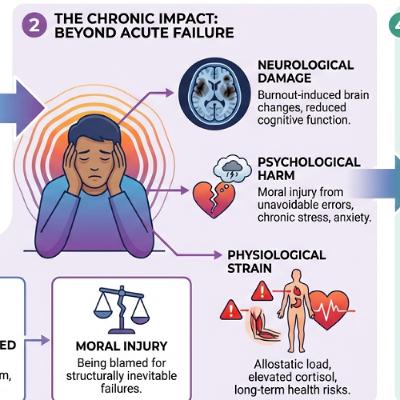

Dr. Jerry A. Smith argues that sustained manual numeric verification is a cognitively impossible task that inflicts long-term neurological and physiological damage on workers. The author explains that constant exposure to high-stakes, repetitive data checking triggers chronic stress and burnout, leading to measurable structural changes in the brain’s prefrontal cortex. Furthermore, employees suffer moral injury when they are unfairly blamed for inevitable errors stemming from human cognitive limitations rather than from a lack of diligence. Consequently, the source redefines AI automation as an essential imperative for worker protection rather than merely a tool for operational efficiency. Organizations are urged to fulfill their duty of care by transitioning humans away from these harmful roles to protect their long-term well-being.

Medium Article:

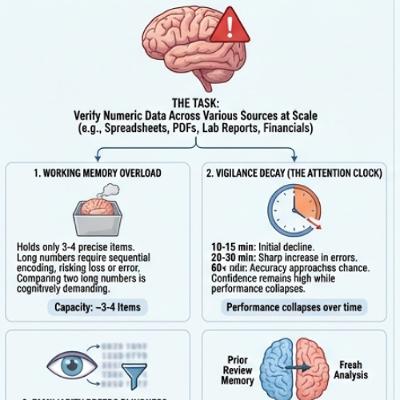

Dr. Jerry A. Smith argues that manual numeric data verification is fundamentally unreliable due to inherent human cognitive limitations, such as fatigue, memory constraints, and confirmation bias. While traditional automation handles basic formatting, it lacks the semantic understanding necessary to catch complex, contextual errors. Frontier AI is presented as a structural necessity because it maintains consistent attention and processes vast amounts of information without the psychological lapses that affect people. By mapping AI capabilities to specific neurocognitive deficits, the author suggests a shift in which machines handle exhaustive verification and humans provide high-level oversight. Ultimately, the source advocates for a system-based accountability model that recognizes the ethical and operational risks of relying solely on human vigilance.

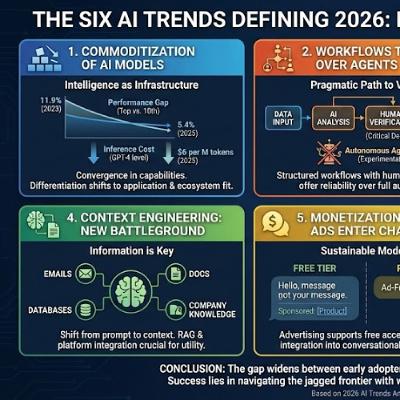

Industry research indicates that by 2026, the competitive landscape of artificial intelligence will shift from raw model performance to practical application layer integration. As high-end models become standardized commodities, users should prioritize structured workflows over fully autonomous agents and focus on providing comprehensive data context rather than perfect prompting. The technical divide is expected to narrow, enabling non-technical professionals to perform complex tasks such as coding and data analysis independently. Furthermore, the introduction of ad-supported tiers will likely democratize access to frontier models as AI transforms physical machinery into evolving software endpoints. Ultimately, success in this era depends on proactive learning and organizational habits rather than waiting for flawless technological solutions.

Medium Article: https://medium.com/@jsmith0475/what-holds-an-ai-together-063fcb26c876

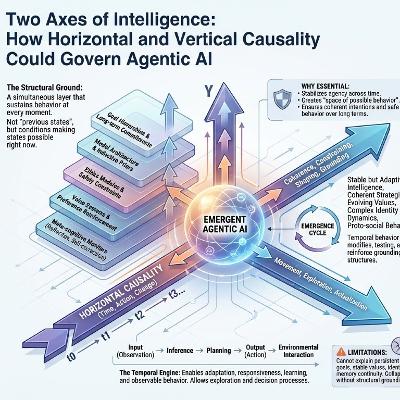

"What Holds an AI Together? The case for vertical causality in machine intelligence," by Dr. Jerry A. Smith, argues that contemporary artificial intelligence systems are fundamentally incomplete because they rely solely on horizontal causality, which governs the sequential flow of actions and feedback across time. This reliance on the temporal axis results in systems that are locally competent but lack global coherence, leading the author to introduce the concept of vertical causality. Vertical causality describes simultaneous, structural dependencies—such as the underlying architecture, goal representations, and identity models—that sustain the system and ground its purpose at every moment action occurs. The author explains that achieving genuine artificial agency requires integrating both dimensions in a "duplex ecosystem," where vertical structures define the space of possible behaviors while horizontal processes explore it. Consequently, robust AI alignment should focus not just on sequential checks but on the architecture itself, ensuring that essential commitments are structurally operative rather than merely procedural outcomes.

Medium Article: https://medium.com/@jsmith0475/when-all-your-ai-agents-are-wrong-together-c719ca9a7f74?postPublishedType=initial

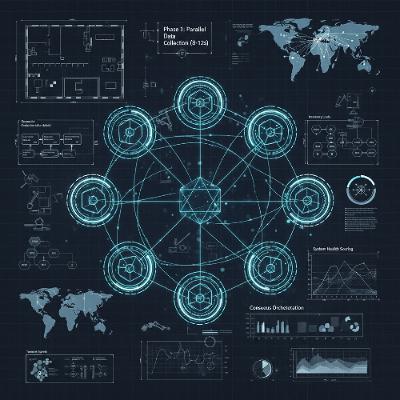

"When All Your AI Agents Are Wrong Together," by Dr. Jerry A. Smith, discusses advanced architectures for achieving million-step reliability in Large Language Model (LLM) agents, building upon the foundational success of the existing MAKER system. Although MAKER demonstrates long-horizon stability using probabilistic voting, which relies on logarithmic cost scaling against exponential reliability, the article identifies three major flaws: vulnerability to correlated errors, the requirement for a fully explicit state representation, and high per-step costs. To address these limitations, the author proposes a new structure called TAC-HAVA-K, which incorporates adversarial reasoning (Thesis, Antithesis, Consolidator), hierarchical verification (Belief States, World Model, Verifier), and K-fold parallelism to create a more robust, cost-efficient, and generalizable system capable of operating in ambiguous, partially observed environments. Ultimately, the new architecture aims to achieve reliability through structural diversity of verification rather than relying solely on statistical independence.

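The reliability argument in the summary above can be illustrated with a toy calculation. The sketch uses simple majority voting as a stand-in (it is not claimed to be MAKER's exact voting rule), and the `shared` parameter is an assumed, simplified way to model correlated errors.

```python
from math import comb


def majority_error(p: float, k: int) -> float:
    """Probability that a majority of k independent voters is wrong.

    Assumes k is odd and each voter errs independently with probability p.
    """
    need = k // 2 + 1
    return sum(comb(k, i) * p**i * (1 - p) ** (k - i) for i in range(need, k + 1))


def correlated_error(p: float, k: int, shared: float) -> float:
    """Toy model: with probability `shared`, all voters make the same mistake."""
    return shared + (1 - shared) * majority_error(p, k)


def run_success(step_error: float, steps: int) -> float:
    """Probability that every one of `steps` sequential steps succeeds."""
    return (1 - step_error) ** steps
```

In this toy model, adding voters drives the independent term toward zero but can never push the error below `shared`. That is one way to see why correlated errors are listed as a flaw that voting alone does not fix.
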
Medium Article:

"The Devil’s Advocate Architecture," by Dr. Jerry A. Smith, introduces a novel framework for designing highly reliable artificial intelligence (AI) by mirroring principles of human psychological and organizational decision-making. The core argument is that modern AI fails due to overconfidence and a lack of doubt, which the proposed multi-agent system counters through structured conflict and debate. This architecture employs three distinct roles, the Worker (proposing a solution), the Devil’s Advocate (critiquing risks), and the Reviewer (synthesizing the final decision), to overcome cognitive biases like groupthink. Crucially, if the Reviewer’s confidence falls below a set threshold, the system initiates a Genetic Mutation Loop, forcing agents to fundamentally evolve their strategies in response to the identified failure mode, leading to antifragile, battle-hardened solutions. A case study of IT incident resolution demonstrates how this dialectical process and targeted evolution yield comprehensive, contingent plans, making the approach applicable to high-stakes fields such as medical diagnosis and financial planning.

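The control flow described above (propose, critique, review, and mutate when confidence is too low) can be sketched as a short loop. Everything here is illustrative: the function names, the 0.8 threshold, the round limit, and the stub agents are assumptions, and real agents would be LLM calls rather than string checks.

```python
from typing import Callable, Tuple


def devils_advocate_loop(
    task: str,
    worker: Callable[[str, str], str],
    advocate: Callable[[str], str],
    reviewer: Callable[[str, str], Tuple[str, float]],
    mutate: Callable[[str, str], str],
    threshold: float = 0.8,
    max_rounds: int = 3,
) -> Tuple[str, float, int]:
    """Return (decision, confidence, rounds used)."""
    strategy = "baseline"
    decision, confidence = "", 0.0
    for round_no in range(1, max_rounds + 1):
        proposal = worker(task, strategy)       # Worker proposes a solution
        critique = advocate(proposal)           # Devil's Advocate critiques risks
        decision, confidence = reviewer(proposal, critique)  # Reviewer decides
        if confidence >= threshold:
            return decision, confidence, round_no
        strategy = mutate(strategy, critique)   # low confidence: evolve strategy
    return decision, confidence, max_rounds


# Hypothetical stub agents so the loop can run without any model.
def stub_worker(task: str, strategy: str) -> str:
    return f"{task} using {strategy}"


def stub_advocate(proposal: str) -> str:
    return "minor risks" if "rollback" in proposal else "no rollback plan"


def stub_reviewer(proposal: str, critique: str) -> Tuple[str, float]:
    return proposal, (0.9 if critique == "minor risks" else 0.5)


def stub_mutate(strategy: str, critique: str) -> str:
    return f"{strategy} + rollback"


result = devils_advocate_loop(
    "restart the payment service",
    stub_worker, stub_advocate, stub_reviewer, stub_mutate,
)
```

With these stubs, the first proposal is rejected for lacking a rollback plan, the strategy is mutated, and the second round clears the threshold.
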
Medium Article: https://medium.com/@jsmith0475/the-math-that-kills-growth-how-we-built-an-ai-system-that-solves-manufacturings-50m-dcdf6e352124

"The Math That Kills Growth" describes a framework called VALORE, a multi-agent AI system designed to solve the Coordination Paradox that stifles growth in scaling manufacturing companies. The author, Dr. Jerry A. Smith, explains that as manufacturing operations grow, coordination complexity increases exponentially, leading to a collapse in decision velocity and massive financial losses, often totaling $15M to $50M annually. The VALORE system replaces manual, email-dependent coordination with specialized AI agents (such as the Scout, Analyst, and Strategist) that communicate and negotiate in real time to manage disruptions like supply chain shocks or quality failures within seconds. The implementation of this agentic AI is proposed through a three-phase roadmap (Visibility, Assistance, and eventual Autonomy) that gradually builds trust in the regulated manufacturing environment. Ultimately, the system aims to transform coordination from a growth-capping liability into a competitive advantage by achieving significant reductions in operational friction and cost.

Medium Article: https://medium.com/@jsmith0475/when-machines-learn-to-negotiate-reimagining-manufacturing-coordination-through-multi-agent-ai-fb6a60fc17eb

WebApp: https://main.d2oyp76axtxaek.amplifyapp.com

This article, by Dr. Jerry A. Smith, outlines a theoretical framework for revolutionizing complex manufacturing coordination, specifically within the regulated medical device sector, by using Multi-Agent AI (Artificial Intelligence). The core problem addressed is the exponential coordination complexity of running multiple facilities that current centralized enterprise software cannot handle effectively. Dr. Smith proposes a system where specialized AI agents—each an expert in an area like inventory or compliance—would negotiate and coordinate decisions within seconds, significantly improving speed and accuracy over current human-centered, email-based processes. Crucially, this framework advocates for a hybrid intelligence approach combining transparent mathematical formulas with Large Language Models (LLMs) to ensure both adaptability and the explainability required for regulatory audits. While the concept is compelling, the author acknowledges significant practical hurdles including expensive data integration, the need for robust safety mechanisms, and organizational resistance to such a dramatic shift.

Medium Article: https://medium.com/@jsmith0475/your-ai-isnt-intelligent-it-s-just-really-good-at-pretending-ac2fe872e838?postPublishedType=initial

The source, an excerpt titled "From AI Simulation to Synthetic Intelligence," argues that current Artificial Intelligence (AI) models, such as Large Language Models (LLMs), are fundamentally limited because they operate as sophisticated simulations based on probabilistic pattern matching rather than genuine cognition. Authored by Dr. Jerry A. Smith, the text identifies several critical architectural flaws in today’s AI, including catastrophic forgetting (the inability to continuously learn new information without overwriting old knowledge) and a reliance on correlation instead of causal reasoning, which leads to unpredictable failures in novel scenarios. Smith posits that the solution is a transition to Synthetic Intelligence (SI), a new paradigm designed for genuine, non-imitative cognition based on three pillars: Material-Based Intelligence (integrating memory and processing), Nested Learning architectures (allowing continuous learning), and the integration of causal reasoning to enable true adaptability and understanding. This shift is presented as necessary to overcome the scaling wall, economic costs, and reliability issues inherent in current, simulation-based AI systems.

Medium: https://medium.com/@jsmith0475/we-built-a-10-agent-ai-system-that-monitors-our-225k-project-in-real-time-heres-what-we-learned-1f0de27ca852

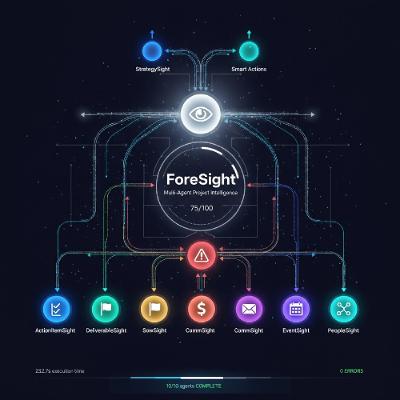

"We Built a 10-Agent AI System That Monitors Our $225K Project in Real-Time - Here's What We Learned," written by Dr. Jerry A. Smith, detailing the development and performance of a specialized AI system. This system utilizes ten distinct, collaborating AI agents to continuously monitor a $225,000 consulting project by synthesizing data from multiple sources like email, calendar, budget, and task trackers. The core achievement of ForeSight is its ability to detect complex project risks 7 days earlier than human managers could and dramatically reduce status reporting time from hours to just 4.2 minutes. The author argues that this multi-agent architecture, which relies on parallel execution and inter-agent communication via a Redis message queue, shifts project management from reactive data compilation to proactive strategic decision-making. The article concludes by emphasizing that the collaborative intelligence of specialized agents offers a massive return on investment by saving hundreds of thousands of dollars in manual labor and preventing costly delays or budget overruns.

Medium: https://medium.com/@jsmith0475/your-ai-might-be-thinking-in-17-dimensions-youre-only-using-2-1a2a56131a1b

"Your AI Might Be Thinking in 17 Dimensions. You’re Only Using 2." presents a conceptual framework and research agenda by Dr. Jerry A. Smith, proposing that the popular chain-of-thought prompting method, which forces AI to "think step-by-step," severely limits the system's native capabilities. The author argues that AI models operate in high-dimensional embedding spaces, handling numerous constraints simultaneously, and forcing linear reasoning is akin to flattening a complex sculpture onto a single line of text. The proposed solution is Higher-Dimensional Collaboration, where users specify constraints and objectives across multiple dimensions, allowing the AI to explore the full solution landscape rather than following a human-mimicking sequential path. While acknowledging that step-by-step reasoning is necessary for interpretability and regulation, the article advocates for prioritizing the computational efficiency of exploration for complex, multi-objective problems. Ultimately, the text calls for researchers and practitioners to rethink how they collaborate with AI to leverage its parallel, multi-dimensional processing strengths.

"Your Brain Isn't Built for Meetings. Here's How AI Fixes That" provides an in-depth analysis of how Artificial Intelligence (AI) can address the inherent neurological challenges of modern professional meetings, arguing that human working memory is insufficient for the demands of multi-tasking and note-taking. Authored by Jerry A. Smith, the text synthesizes neuroscience research and cognitive theory to establish that typical meeting behavior results in cognitive overload and poor information encoding, citing studies on working memory limits and the ineffectiveness of manual note-taking. The core argument examines the potential benefits of AI augmentation—such as liberating working memory and creating permanent institutional memory—while thoroughly exploring critical risks, including privacy concerns, the potential for cognitive dependency (the "Google effect"), and the creation of a cognitive class system due to unequal access to expensive technology. Ultimately, the piece calls for rigorous controlled studies and ethical policy frameworks to ensure AI augmentation systems are designed for human flourishing rather than corporate surveillance or increased inequality.