The Strategic Linguist Podcast

Author: The Strategic Linguist

© Rebecca Wicker

Description

Revealing how language shapes power, markets, and competitive advantage | Expert analysis from workplace dynamics to global strategy

thestrategiclinguist.substack.com

37 Episodes

There’s a good chance someone, at some point, stood in front of you with a PowerPoint slide and declared with absolute confidence: “93% of all communication is nonverbal.”
* What Mehrabian Actually Said (01.55)
* The Slide Always Wins (06.08)
* What the Misquote Actually Taught People (10.35)
* What Linguistics Actually Says (13.24)
* The 38% Problem (16.13)
* Two Stories Running at Once (19.48)
* The World This Creates (25.13)
* Let’s Start Again (29.27)
And when you see the pie chart - because you will see the pie chart - remember that the man whose name is on it spent decades trying to correct it, on a website that didn’t travel, in a format that didn’t spread, with caveats that didn’t fit on a slide.
The correction was always there. The format just couldn’t carry it.
This is a public episode. If you'd like to discuss this with other subscribers or get access to bonus episodes, visit thestrategiclinguist.substack.com/subscribe

Welcome to my second episode of Linguistics Unscripted, where I go off script and discuss topics from my posts that didn’t quite fit on the page. Today, we’re talking about accents.
I talk about my experiences in my phonetics and phonology classes, why I love accents and a defining moment in my teaching career: teaching accent reduction at call centres in Sri Lanka.
We get into some interesting consonants in the phonemic chart (see below). Lori does a fantastic job at recording how some of these sounds come together (it’s way more complicated than you’d think) as well as how phonemes, intonation and word stress create accents. Meow Factor by Edith is the real expert here as a dialectologist - I hope she will forgive me for my aging knowledge!
I use two of my favourite Bollywood actresses to explain how code switching works, how you’re often made to choose who you are depending on who you’re talking to - and how much criticism comes with it. Both beautiful, famous women have been harassed and hounded online for their accents.
Speakers face the authenticity paradox: maintain your accent and risk professional marginalisation, or modify it and face accusations of inauthenticity, of abandoning your roots, of “talking white” or “putting on airs.” For many, there is no comfortable middle ground, only a series of calculated choices about which version of yourself to present in which context, knowing that each choice carries consequences for how you’re perceived both professionally and within your own community.
Videos about Priyanka Chopra’s accent in the media
Videos about Deepika Padukone’s accent in the media
^^ Farah Khan, the director of Deepika’s debut in Om Shanti Om, explaining in an interview how she didn’t like Deepika’s south Indian accent and listened to her audition tape with the sound off because she was “disturbed” by her voice 😳
These complexities reveal that accent isn’t simply something speakers “have” - it’s something they perform, negotiate, and strategically deploy. The question isn’t whether people can or should change their accents. The question is why some accents require changing in the first place, and what it costs individuals to constantly navigate between linguistic authenticity and professional acceptance. The burden of adaptation falls disproportionately on those whose voices already carry less institutional power, creating yet another invisible tax on belonging.
With AI, there is a way for us to include identity and language and accents without appropriating or flattening language to reduce it to something that is “accessible” in some ways but aligned to the majority in its essence.
For me, language is about expressing yourself in ways that preserve who you are, regardless of how you sound, what you say and where you’re from.
These two use cases show how accents are so fluid and misunderstood but, above all, a part of who we are.

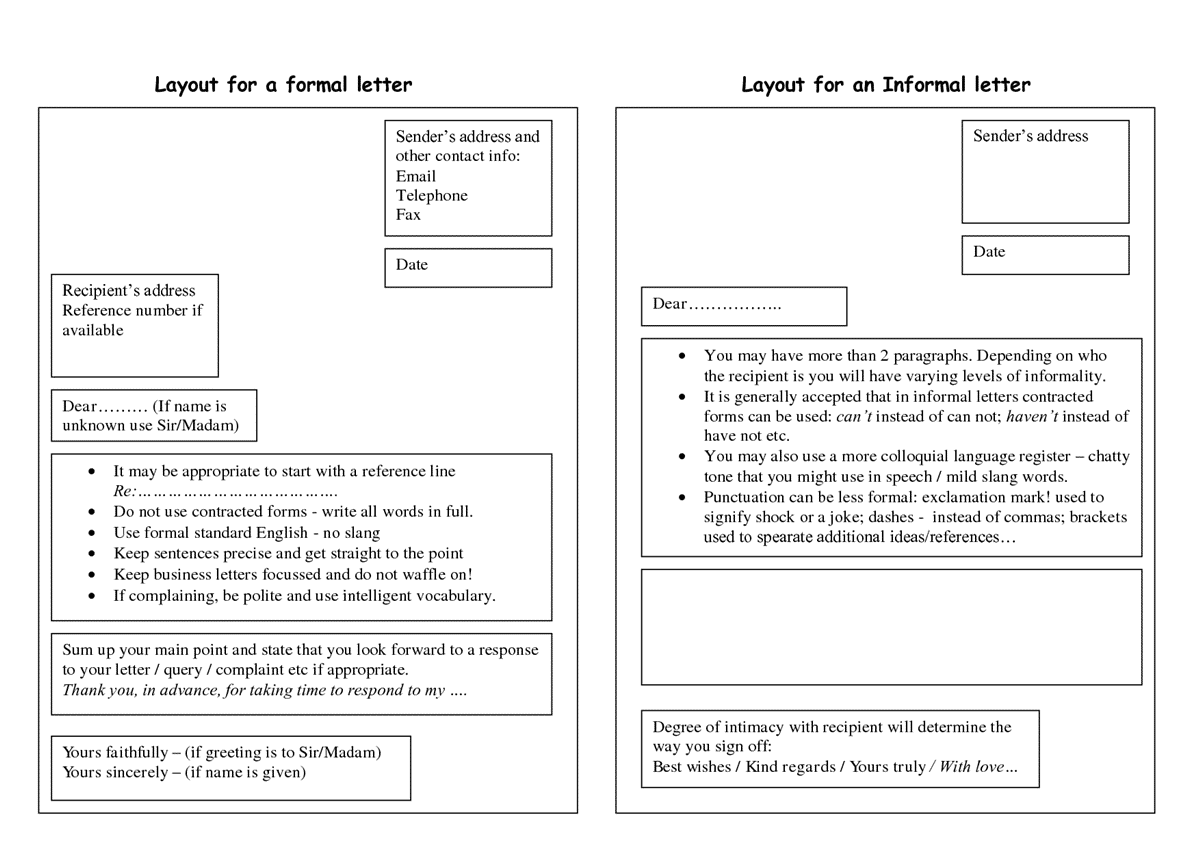

A note before we begin: This piece examines how linguistic authority operates in online platforms, with particular focus on Substack as a case study. It draws on sociolinguistic research into computer-mediated communication, stance, and algorithmic systems. The analysis focuses on specific patterns rather than offering comprehensive coverage of all digital linguistic phenomena.
I also want to make clear (because words matter) that there is an important distinction between authority - what I talk about in this series - and trust. Building trust online is a very different piece to what is here.
Authority may command attention; trust has to be earned.
When you publish on Substack, your authority doesn’t come from your voice, your office, or the institution listed on your business card. There’s no accent to mark your class background, no pitch to signal your gender, no physical presence to command attention. Yet somehow, certain writers dominate the conversation while others remain invisible, regardless of the quality of their ideas.
Online, authority gets constructed through language choices, platform mechanics, and algorithmic systems that amplify some voices while silencing others. The performance of credibility has replaced the presumption of authority, and the rules of this performance favor particular linguistic styles while marginalising others.
If accent marks the body in offline interaction, online language marks position, and on platforms like Substack, where writers compete for attention in an oversaturated information economy, understanding how linguistic authority operates has never been more critical.
It’s also not so straightforward, as we’ll see. There are a lot of “damned if you do, damned if you don’t” moments that come with navigating authentic communication online, where we try to be the same person IRL as we are online.
Digital platforms haven't eliminated hierarchies of voice and authority; they've relocated them from embodied markers (like accent, institutional position) to linguistic performance and algorithmic reward systems.
Until we confront how these systems privilege particular voices, we'll keep replicating offline hierarchies in digital spaces with new mechanisms and justifications.
* From Embodied to Discursive Authority (3.42)
* How Substack’s Design Shapes Authority (15.08)
* Stance, Voice, and the Performance of Credibility (20.46)
* Algorithms as Linguistic Gatekeepers (23.51)
* Who Gets Heard - and Who Doesn’t (26.43)
* Beyond Substack: Patterns Across Platforms (29.42)
* The Substack Specific: What Makes This Platform Different (32.01)
* What This Means for Writers (and Readers) (35.30)
* The Question of Confidence (38.07)

A quick note before we begin: the study of voice, accent, and identity is vast and complex, encompassing decades of research across sociolinguistics, discourse analysis, and related fields. This article offers a curated exploration focused on specific use cases rather than a comprehensive audit of the field. Many important perspectives and dimensions of this topic remain beyond the scope of this piece.
Every time we speak, we communicate far more than our words. Our voices carry social information - about our background, our education, even our supposed competence. They also communicate who we are, or at least who listeners perceive us to be. Research in sociolinguistics reveals that listeners make snap judgements about speakers based on vocal characteristics like pitch, register, and accent, often within seconds of hearing someone speak. These judgements can profoundly impact professional opportunities, perceived credibility, and social mobility.
* The Vocal Fry Paradox (2.28)
* The Pitch Problem: High Voices and Perceived Incompetence (5.23)
* The Elizabeth Holmes Case Study: Performing Authority Through Voice (9.28)
* British Accents and the Prestige Paradox (11.35)
* Theoretical Frameworks: Understanding the Power of Voice and Identity (17.25)
* The Real-World Stakes (20.57)
* Solidarity, Code-Switching, and the Complexity of Linguistic Identity (26.04)
* Toward Linguistic Justice (29.39)
In the next piece in this series, we’ll examine linguistic identity in contexts of conflict and post-conflict reconstruction, exploring how language becomes a site of political struggle and identity negotiation. Later, we’ll turn to how digital communication reshapes linguistic identity and creates new forms of belonging and exclusion in online spaces.
This post includes other works from The Strategic Linguist on power, accent and identity.

You speak English every day. You’ve been doing it since you were a toddler. You text, you chat, you argue about the Oxford comma. So naturally, you might think:
How complicated can the study of language really be?
This assumption is the field’s biggest problem.
* The Identity Crisis
* The Illusion of Expertise
* Seeing Systems Where Others See “Just Talk”
* The Invisible Infrastructure
* Why Linguistics Remains Under the Radar
* What Linguistics Gives You That Other Fields Don’t
* Why This Actually Matters
* The Power You’re Holding

Welcome to the first episode of Linguistics Unscripted - my little corner of Substack where I can talk about linguistics, reference my work and others that inspire me and discuss some of the important topics.
In this episode, I discuss my most read and listened to post in 2025… The Prompt Engineering Myth
What I talk about in this episode:
* Why I’m starting this audio series (shoutout to Jen Benford’s Notes on staying true to your values and what you enjoy)
* How this post relates to something I wrote back in the summer on writing skills and AI
* The importance of writing skills when it comes to prompting AI, particularly in the workplace as we move in and out of writing forms
* Why writing about AI was terrifying for me when I first started writing, and why voices like Slow AI, SheWritesAI and Code Like A Girl are crucial to allow more people to talk about AI
* What speech communities have to do with the narrative of AI on Substack
* Getting into the post - why prompting doesn’t have to be as complicated as everyone makes it out to be, why there is asymmetry built into instruction, and how it blurs the lines between human-to-human and human-to-computer interaction
* Why I’m dedicating this first episode to Kanan Gill 🥰
* What’s next in this line of thinking - The Grammar of the Prompt

In Part 1, I argued that hedging is not a weakness. That “I think”, “I believe” and “as far as I know” are epistemic markers - they tell listeners exactly how you are holding your knowledge. That coaching them out of your speech doesn’t produce clearer communication; it produces communication that carries less information about whether you actually know.
All of that stands, but I promised something more uncomfortable.
What that argument didn’t account for: a significant portion of hedging in professional environments has nothing to do with epistemic uncertainty. The speaker isn’t hedging because they don’t know. They’re hedging because they’ve done a rapid, often unconscious calculation about what happens if they don’t.
* The Face Theory (and Why It Matters Here)
* Positive and Negative Politeness at Work
* The Cost of the Diagnostic Being Right
* Hedging as a Read of the Hierarchy
* What The Room Is Actually Asking For
* The Signal in the Hedge
* The Thing Trained On Human Language
The human speaker who hedges is, at minimum, telling you something true about how they are holding their knowledge. The LLM that doesn’t hedge learned from us - specifically, from the part of us that keeps promoting the confident one.
Next in this series: LLMs don’t just hedge like men - they were trained by them. What happens when a system learns language from a corpus that was never neutral, rated by humans who were never representative, and presents the result as the default.

DISCLAIMER: this is not intended to be an all-encompassing post on the topic of healthcare and the fertility industry. I am focusing on a few key areas and the areas of language that have made the most impact on me.
* The Industry’s Linguistic Playbook
* When Uncertainty Becomes Self-Blame
* The Friend Who Cracked the Code: Influencer Language and the Illusion of Secrets
* The Language Gap: When Medical Discourse Keeps You Subordinate
* The Performance of Normal: Hiding Grief at Work
* The Linguistic Confession
You’re not alone. There are some beautiful, wonderful voices out there that don’t make you feel awful, so I’m including the voices that have helped quiet the noise around me - voices that don’t claim to know how you’re feeling, what you need or what you’re missing. They’re voices who know the pain and help you keep space for yourself. They’ve been harder to find:
🇬🇧 Based in the UK, we have
* Hannah Pearn’s podcast, brilliantly named Don’t Tell Me To Relax, and her Instagram are light, capture a vibe that I always need and have a great community - I’ve sent her podcast to a lot of people. I just wish I was in the UK to go to her acupuncture clinic.
* Helen Davenport-Peace is on Substack but also has some great words of wisdom on Instagram that I’m eternally grateful for. I feel like she’s heard the same things and had the same reactions - I love that she’s always looking at language.
🇦🇺 Based in Australia, Katie Dunn’s Afterglow has been refreshing content on Substack. Katie started writing and building a positive community around IVF and plan A not working out. She also felt the silence of this narrative in the broader fertility landscape was/is deafening, looking for others and hardly finding any. My favourite articles of hers below:

This piece examined how hierarchical power amplifies the effectiveness of gaslighting. Linguistic capital determines who deploys reality-contradicting claims with minimal cost. Definitional authority determines whose version becomes organisational truth. Documentation often serves gaslighters rather than protecting targets.
* How The Patterns Change With Power
* The Compounding Effect
* Why “Speaking Up” Advice Fails
* Counter-Strategies That Work Within Constraints
* Recognising Patterns So You Don’t Internalise Them
* Protecting Your Sense of Reality
* Knowing When to Document vs. Disengage vs. Exit
* Understanding What You Can and Can’t Control
* The Metacognitive Shift
* When Organisations Lose Signal
* What This Series Revealed
Part 1 examined linguistic structures: speech acts with double direction of fit, three types of assertives, face-threatening acts disguised as concern, anti-performatives. We learned why there’s no checklist (welp) - gaslighting mimics cooperative communication while violating it.
Part 2 showed how AI systems reproduce these patterns through training on human discourse, creating direct gaslighting and discourse reproduction. Automated systems scale the linguistic patterns that destabilise reality.

In the previous piece in this series, I examined how gaslighting operates through specific discourse structures: three types of assertives (explicit, covert, inclusive), the double direction of fit, face-threatening acts disguised as concern, and anti-performatives. These patterns exploit the cooperative principle - our assumption that people communicate truthfully - to destabilise someone’s grip on reality.
AI systems reproduce these patterns without intention, awareness or malice. The result is the same: users learn to doubt their own knowledge in favour of confidently delivered misinformation.
* The Three Types of Assertives, Automated (2.33)
* The Authority Problem: Why We Trust Machines Over Ourselves (7.38)
* Memory-Less Systems and Contradictory Realities (10.40)
* Training Data: Learning Gaslighting From Human Text (14.29)
* The Double Risk: Direct Gaslighting and Discourse Reproduction (18.29)
* Normalising Reality Instability (21.25)
* Workplace AI: When Gaslighting Becomes Documentation (25.19)
* When Gaslighting Scales (29.04)
* What This Means For Reality at Work (32.03)
* Recognition Is the First Step (34.20)
This is Part 2 of a three-part series on gaslighting and linguistics. Part 1 examined the linguistic structures of human gaslighting. Part 3 will explore practical strategies for protecting your reality in hierarchical settings where someone - or something - has more definitional authority than you do.

You’re in a meeting. Your manager says, “I never said we’d launch in Q2.” You remember the conversation clearly and you have notes, but they continue: “I think you’re misremembering. We always planned for Q3.” Then they add, “You seem stressed lately. Are you sure you’re tracking everything correctly?”
Something feels wrong but you can’t pinpoint what. The words sound reasonable. The tone is concerned, even caring. Your reality and theirs don’t match, but theirs is delivered with such certainty that you start doubting your own memory.
This is gaslighting. It is not just someone disagreeing with you or remembering events differently; that’s normal conversation. Gaslighting is a systematic pattern of communication that makes you doubt your own perception, memory and sanity. It’s psychological manipulation through language, where someone distorts reality and then makes you feel responsible for the confusion.
* What Gaslighting Actually Is
* The Core Behavioural Patterns
* The Linguistic Structures Behind the Behaviours
* Why There’s No Simple Checklist
* Three Types of Assertives in Gaslighting
* The Double Direction of Fit
* Face-Threatening Acts Disguised as Care
* Anti-Performatives: Saying One Thing, Doing Another
* Why Some People Can Recognise It Faster
* The Hierarchical Advantage
* What Linguistic Analysis Reveals
* What This Means for Recognition
DISCLAIMER: I’ve purposely not spoken about the psychological aspects of gaslighting because it is not my place to. I am unfortunately very familiar with it but I would be irresponsible if I didn’t share the right sources for more information.
If you’d like to learn more from the experts, I’d recommend reading works by:
* Suzy’s Substack
* Ryan Hwa
* Unmasking Narcissism with Sarah Khan
* Nathalie Martinek PhD
* Annie Tanasugarn, PhD

When you type instructions into ChatGPT or Claude, you’re not having a conversation. You might feel like you are because the interface looks like a chat window, the AI responds with “That’s a great question!” and adjusts its tone based on yours, but what’s actually happening bears little resemblance to human communication.
Prompt engineering is constraint optimisation dressed up as dialogue. Understanding this distinction matters deeply for anyone building AI products, setting user expectations, or trying to understand what these systems actually do.
* What Communication Actually Requires
* The Asymmetry of Instruction
* Why LLMs Feel Like Conversation
* Linguistic Frameworks We Already Use
* The Product Development Problem
* The Monetisation of Unfamiliarity
* What This Means for AI Products
* The Deeper Question
The human capacity for communication, bidirectional, negotiated, grounded in mutual agency, remains distinctive. Recognising that distinction helps us use powerful tools like LLMs more effectively, without confusing their constrained optimisation processes with the richer, messier, genuinely collaborative process of human dialogue.

Meetings are battlegrounds for narrative control. The story that wins becomes the official record, shapes future decisions and determines who gets credited or blamed.
* The Turn-Taking System You Never Knew You Were Following
* Linguistic Capital: The Hidden Currency of Meetings
* Six Conversational Moves of Narrative Control
1. First Narrator Advantage
2. The Pre-Framing Move
3. Narrative Interruption Tactics
4. The Counter-Narrative
5. The Collaborative Hijack
6. The Meta-Narrative Move
* The Linguistics of Power in Action
* Discourse Structure and Who Gets Credit
* Repair Work and Narrative Damage Control
* The Compound Interest Problem
* What This Reveals About Signal Loss
* What This Means for You
* The Pattern You Can’t Unsee
The linguistics has been clear for fifty years: turn-taking is systematic, interruption is patterned, and both are linked to power. What’s still being negotiated is whether organisations will continue treating this as individual communication style, or recognise it as a structural feature of how they distribute authority, credit, and ultimately, opportunity.
The hidden system is now visible. The question is what you do with that knowledge.

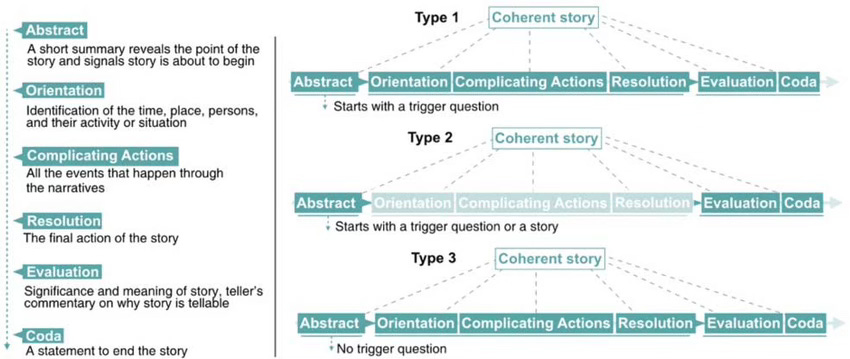

Language follows patterns. Jokes work because they set up expectations and violate them at precise moments. Songs feel satisfying because verses and choruses follow predictable structures. Stories engage us because they move through established sequences - setup, complication, resolution - that our minds recognise and anticipate.
Business storytelling is no exception. Yet the advice about storytelling at work rarely addresses linguistic structure. We talk about storytelling as performance - being a confident speaker, building to crescendos, using the right body language, controlling the room. We focus on delivery mechanics while ignoring the actual architecture of the narrative.
Six narrative structures dominate acceptable business storytelling. Each follows specific linguistic patterns that signal competence and control.
* The Six Acceptable Structures
1. The Obstacle Overcome
2. The Pivot Story
3. The Innovation Journey
4. The Scaling Story
5. The Collaboration Win
6. The Vision Realised
* The Grammar That Makes These Structures Work
* Transitive verbs
* Temporal markers
* Pronoun distribution
* Causal connectives
When you bring linguistic analysis to business narratives, you gain leverage others don’t have. You can see patterns in how successful people tell stories. You can identify why certain narratives succeed regardless of underlying merit. You can construct your own narratives deliberately, choosing structures that position you strategically.
The person who controls narrative structure controls how their work gets interpreted, remembered, and valued. That control comes from understanding language as a system with rules and patterns that can be analysed and deployed strategically. Not from presentation coaching, not from confidence building, but from linguistic analysis that almost no one applies to professional contexts.
Once you see how linguistic structure shapes professional reality, you can’t unsee it, and you gain tools for navigating that reality that most people never acquire.

We have been telling stories as a way to understand the world since the dawn of human existence - no matter where it began. We’re hardwired to look for stories, lessons and experiences that we can aspire to, be inspired by, and see ourselves in, to find meaning in our own lives.
We need to keep telling these stories - the uncomfortable ones, the ones that make us feel exposed - because that’s how we find each other in the dark.

The ability to tell the canonical story is the ability to define reality. When your narrative becomes the accepted version of events, you control what counts as success, who gets credit, and what the organisation learns from its experiences.
The Narrator Privilege: Controlling Causality (01.00)
Narrative structure gives the storyteller enormous power. When you tell a story, you decide the temporal boundaries, the causal connections and which details matter.
Story Rights: The Pragmatics of Narrative Permission (5.10)
Not everyone has equal rights to tell the same story. H. Paul Grice’s Cooperative Principle describes how conversational participants navigate implicit rules about what can be said and when. His maxims of quantity, quality, relation and manner explain how speakers signal meaning beyond literal content. But Grice’s framework assumes relatively equal participants. In organisational hierarchies, story rights are distributed unequally.
The LinkedIn Learning Narrative: Status Signalling Through Failure Stories (06.26)
LinkedIn has become a laboratory for observing narrative permission in action. The platform is saturated with posts that follow a specific template: “I failed spectacularly, and here’s what I learned.” These narratives appear vulnerable and authentic. In practice, they function as status signals.
Story rights extend to genre. Some people have permission to tell aspirational stories about organisational transformation. Others are expected to confine themselves to technical reports about completed tasks. The ability to position yourself as a narrator of the organisation’s future, rather than just its past, marks significant status.
The Canonical Story Problem: How Narratives Solidify (14.30)
Organisations eventually crystallise one version of events. This canonical story becomes “what happened.” Alternative interpretations stop being treated as different perspectives and start being treated as disloyalty.
This solidification process involves repeated tellings that gradually eliminate variation. Early accounts of an event may include hedging, alternative explanations, and acknowledged uncertainty. As the story gets retold, these qualifications disappear. The narrative structure becomes more streamlined. Causal connections become more definite. What was “probably” becomes “clearly.”
The Strategic Implications: From Performance Reviews to Industry Narratives (18.00)
Narrative as a linguistic power structure operates at multiple scales. The same mechanisms that determine whose story prevails in a project debrief also shape how companies position themselves in markets and how entire industries understand their own evolution.
The Micro Level: Individual Career Narratives (18.29)
Performance reviews represent high-stakes narrative contests. The review document becomes the canonical story of your work. Who writes that story matters profoundly.
The Organisational Level: Corporate Brand Narratives (21.29)
Companies construct narratives about themselves that determine market positioning and internal identity. These narratives operate through the same linguistic mechanisms as individual stories but at organisational scale.
The Market Level: Industry and Competitive Narratives (25.24)
At the broadest scale, narrative control shapes how entire industries understand themselves and how markets interpret business categories.
Industries develop canonical narratives about their own evolution. The technology sector tells stories about disruption cycles and paradigm shifts. These narratives position certain types of companies as forward-looking and others as obsolete.
Narrative Leverage Across Scales (28.31)
The same linguistic mechanisms operate at individual, organisational and market levels. Temporal framing determines whether events are interpreted as setbacks or stepping stones. Causal connectives establish who deserves credit and who bears responsibility.
Certain narrative structures dominate corporate storytelling because they conform to organisational requirements for clarity, agency, and replicability. Other structures, no matter how honest, violate these requirements and mark the storyteller as outside acceptable business discourse. Understanding which narrative structures are acceptable, and why they work linguistically, determines whether your stories about your own work help or hurt your career.

The Spider and the Mockingbird: Power Through Language in Westeros and the Workplace
An exploration of how Lord Varys and Petyr "Littlefinger" Baelish from Game of Thrones embody timeless archetypes of power manipulation through language - and why these characters echo across centuries of human storytelling.
Key Themes:
* How leaders throughout history have relied on intermediaries to bridge the gap between power and people
* The linguistic frameworks behind two distinct approaches to wielding influence: institutional authority vs. personal manipulation
* Varys as master of "linguistic capital" and off-record communication strategies
* Littlefinger's weaponization of intimacy and emotional manipulation through language
* Information as currency: how both characters convert knowledge into social advantage through radically different linguistic patterns
Linguistic Concepts Explored:
* Bourdieu's linguistic and cultural capital
* Brown & Levinson's politeness theory and face-threatening acts
* Goffman's face-work and social identity management
* Implicature and accommodation theory in discourse analysis
Why It Matters: These archetypes persist because they reflect fundamental truths about how power operates through communication in any hierarchical system - from ancient courts to modern organizations. The stories we tell reveal the dynamics we live.

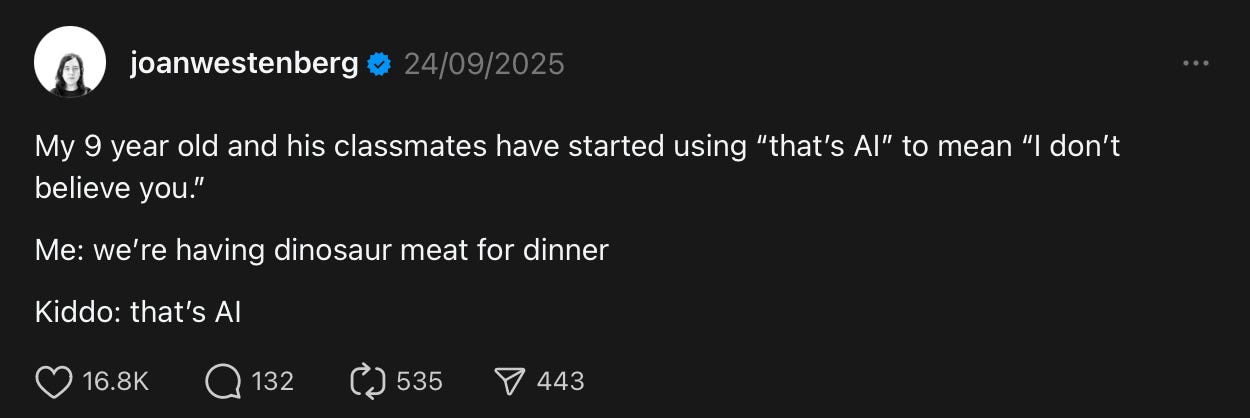

Episode Overview
While tech CEOs spend billions on compute power and algorithms, they're missing the linguistic patterns that determine whether AI products actually work for billions of users. This isn't a technical problem - it's a cultural intelligence gap at the highest levels of tech leadership.
Key Topics Discussed
When AI Meets Reality
* Google AI Overviews telling users to put glue on pizza and eat rocks
* Why this wasn't a technical failure - it was linguistic blindness
* How AI can't decode social context, sarcasm, and reliability markers
Detection vs. Comprehension: The Critical Gap
* Why detecting patterns isn't the same as understanding meaning
* How AI can spot sarcasm without comprehending its social function
* The fundamental limitation that scale and data can't solve
The ChatGPT Bias Problem
* How formal academic language gets better AI responses
* Linguistic hierarchies embedded in training data
When Linguistic Blindness Becomes Dangerous
* Chatbots missing suicide risk signals in teen language
* How communication style variations across cultures affect crisis detection
* The life-and-death stakes of linguistic intelligence gaps
Singapore's Strategic Advantage
* How multilingual AI development creates competitive edge
* Why cultural alignment beats computational power in global markets
* What Silicon Valley is missing about linguistic diversity
The Storytelling Problem
* Meta's failed cooking assistant and smart glasses demos
* How Silicon Valley culture prevents honest communication about AI limits
* Generation Alpha's "that's AI" skepticism revealing the trust gap
Building Linguistic Intelligence
* What AI leadership needs beyond technical expertise
* How to audit products for cultural blind spots
* Why this capability determines future competitive advantage
Key Takeaways
* AI can detect linguistic patterns but cannot comprehend human meaning and social context
* Billions in AI investment fail because tech leadership lacks cultural intelligence
* Linguistic diversity isn't a translation problem, it's a fundamental design challenge
* Companies that develop linguistic intelligence capabilities will dominate global markets

In Part 1, I explored how the shift from keyword search to conversational commerce represents a fundamental linguistic change - from locutionary acts (literal word matching) to illocutionary acts (understanding intent and context). We looked at three key changes: context-dependence, anaphoric resolution, and the move from lexical to compositional semantics (essentially, from SEO to AEO).
Now let’s talk about what this means for payments infrastructure and why learning to speak human is harder than it sounds.
When Saying Becomes Doing: Payments as Speech Acts
In linguistic philosophy, a speech act is when saying something is doing something. “I promise” doesn’t describe a promise, it creates one. “I apologise” doesn’t report an apology, it performs one.
In the agent era, payments could become speech acts. Saying “yes, charge me” in conversation might not initiate a separate checkout flow, it could be the transaction itself. The utterance and the action could collapse into the same moment.
This would require infrastructure that treats natural language confirmation as authorisation. Security would shift toward pragmatic authentication (verifying the speaker has authority) rather than primarily form-based authentication (requiring explicit credential entry).
That means biometric verification, voice recognition and behavioural patterns alongside or instead of CVV codes. The question would shift from “Do you have the password?” to “Are you the person I’ve been talking to?”
And if that makes you think carefully about security implications, good. That’s appropriate.
Because we’re talking about fundamentally reconsidering what “authorisation” means in a linguistic sense, not just a technical one.
Why This Transition Isn’t Straightforward (Even for Those Who Seem to Get It)
The philosopher Paul Grice argued that successful conversation relies on a cooperative principle, which he spelled out as four maxims:
* Quantity: be as informative as necessary
* Quality: be truthful
* Relation: be relevant
* Manner: be clear
These aren’t abstract philosophy, they’re practical considerations for merchant-agent communication. Merchants who game agent recommendations with misleading attributes (violating Quality) will likely face consequences, just like keyword-stuffing affected SEO. Products tagged with irrelevant attributes (violating Relation) may not get recommended as effectively. Ambiguous product data (violating Manner) might get passed over.
But here’s what strikes me: most merchants didn’t grow up in an environment where semantic clarity and conversational cooperation were technical requirements. Just like some of us didn’t grow up seeing certain workplace communication patterns modelled, most commerce platforms developed in an environment of forms and databases.
They learned the language of structured data. Now there’s a shift toward the language of discourse and meaning-making. That’s not a simple transition.
And it’s not just merchants and payment companies that need to adapt, consumers do too.
Here’s something I’ve written about before: many people have no idea how to articulate what they actually need. I’ve seen this as a writing teacher, as someone who built AI writing platforms for higher education, and as someone who’s watched the American business writing crisis unfold in the last decade.
The same clarity problem that costs US businesses approximately $2 trillion annually in poor communication shows up in how people interact with AI agents. When someone can’t write a clear email, they also can’t construct a clear prompt.
When they don’t understand their own thinking process well enough to articulate it to a colleague, they definitely can’t articulate it to an AI agent trying to help them buy something.
AI agents are demanding something we’re not used to providing: clear expression of unclear needs. “I need something for focus” isn’t a failure of the agent to understand, it’s the human not yet knowing whether they need noise cancellation, caffeine, ergonomic support, or time management software. The agent has to work with what linguistics calls “underspecified input.”
This creates a new kind of cooperative burden. Grice’s maxims assumed both parties knew what they were trying to communicate, but in agent-mediated commerce humans often don’t know what they need until the agent helps them figure it out through conversation. The agent becomes a collaborative thinking partner, not just a transaction processor.
This is why the shift to conversational commerce isn’t just about better AI, it’s about helping humans develop what linguists call metalinguistic awareness: the ability to think consciously about what they’re trying to communicate. Writing is thinking made visible, as composition researchers have shown, and prompting an agent is the same skill: making your thinking visible enough for a system to work with it.
But let’s be honest about what agents can and can’t do.
In Part 1, I talked about how agents excel at understanding discourse: parsing syntax, interpreting compositional semantics, understanding context. That’s true. AI agents are remarkably good at the receptive side: understanding what you mean from what you say.
Where they still struggle is with the deeper pragmatics. The power dynamics in conversation. The subtle social hierarchies. The cultural context that shapes what’s appropriate to say when. The emotional undercurrents that humans navigate instinctively.
I’ve written before about how different aspects of language present varying challenges for AI versus humans.
Modern LLMs demonstrate impressive proficiency in syntax and semantics. They can generate grammatically correct sentences and handle complex semantic relationships with accuracy.
But pragmatics, especially the deeply social aspects like recognising authority relationships, understanding when language establishes dominance or submission, and interpreting the political dimensions of discourse, remains challenging for artificial systems. The nuanced ways humans navigate complex social relationships through language, the unspoken hierarchies, the microaggressions, the cultural implications of linguistic choices - these require embodied, social, experiential knowledge.
So when we talk about agents understanding discourse, we need to be precise. They’re learning to understand the what (syntax and semantics). They’re still learning the why and the how (pragmatics and social context). They can process conversational structure, but they can’t always navigate conversational dynamics the way humans do.
This matters for payments because transactions aren’t just semantic exchanges, they’re social ones. There’s power, trust, vulnerability, cultural expectation embedded in “yes, charge me.” Agents can process the authorisation.
Whether they can navigate the full social and emotional context of that authorisation is still an open question.
What This Transition Might Look Like
Look, I’m not going to pretend anyone has fully solved this yet, but what’s becoming clear is that payment companies are exploring something that doesn’t quite exist yet in its complete form: a semantic payments layer.
This would involve middleware that can:
* Parse conversational intent into transaction parameters without requiring structured input
* Maintain discourse state across multi-turn conversations
* Resolve ambiguous references by accessing conversational history
* Negotiate uncertainty through clarifying questions rather than error messages
* Support compositional transactions where payment is part of a larger conversational goal
Some companies are experimenting with this. Stripe’s work with OpenAI, for example, explores payment confirmation embedded in chat interfaces, which is an interesting approach to the discourse challenge.
But the linguistic infrastructure goes way deeper than APIs. It involves rethinking:
* How product data is structured (ontological, not just categorical)
* How merchant catalogs are indexed (semantic, not just lexical)
* How pricing is communicated (contextual, not static)
* How fulfilment gets coordinated (conversational, not just form-based)
This isn’t about adding a chatbot. This is about building commerce infrastructure that could understand discourse the way humans actually use it.
The Learning Curve We’re All On
Here’s what I keep coming back to: the people building payments infrastructure right now learned their craft in a world where commerce meant filling out forms. Each piece of information had its designated box. Everything was structured, predictable, defined.
That’s the paradigm they were trained in. Structured data: everything has a place, a format, and a clear relationship to everything else.
Now with AI agents, commerce happens through conversation.
Information comes out of order (“send it to my mum” before you’ve even said what “it” is). Context shapes meaning (“I’ll take it” means entirely different things depending on what you just discussed). Intent is implicit - you don’t fill out a field that says “execute payment,” you just say “yes, charge me” and the system has to understand that means authorisation.
This is what linguists call unstructured discourse - natural human communication.
But there’s another layer here that I hinted at in Part 1: trust. We learned not to trust technology with full sentences because it failed us so many times. Years of clunky chatbots, voice assistants that couldn’t understand basic requests, and “smart” systems that weren’t very smart taught us to dumb down our language. We trained ourselves to speak in keywords because anything else didn’t work. Now agents need to rebuild that trust, proving they can actually understand discourse, not just pretend to. That’s a psychological barrier, not just a technical one. And it’s as significant as any infrastructure challenge.
Just like I wrote about people not knowing how to negotiate or handle workplace conflict because they never saw those patterns modelled growing up, payment infrastructure developers never saw conversational commerce modelled. They learned t
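As a rough illustration of the semantic payments layer described in this episode, here is a minimal Python sketch of three of the listed capabilities: keeping discourse state across turns, resolving “it” from conversational history, and asking a clarifying question instead of returning an error. Everything in it (DiscourseState, Reply, handle_turn, the trigger phrases) is hypothetical and invented for illustration. It is not how Stripe, OpenAI or any real payment system works, and a real system would need proper intent parsing and authentication before treating any utterance as authorisation.

```python
from dataclasses import dataclass, field
from typing import List, Optional

# Hypothetical phrases treated as payment confirmation (illustrative only).
CONFIRMATIONS = ("yes, charge me", "i'll take it")


@dataclass
class DiscourseState:
    """Conversation memory kept across turns (all field names are assumptions)."""
    last_item: Optional[str] = None    # most recently discussed product
    recipient: Optional[str] = None    # e.g. "my mum"
    history: List[str] = field(default_factory=list)


@dataclass
class Reply:
    kind: str   # "ack", "clarify" or "authorise"
    text: str


def handle_turn(state: DiscourseState, utterance: str) -> Reply:
    """Update the discourse state with one user turn and choose a response."""
    state.history.append(utterance)
    # Normalise case, curly apostrophes and trailing punctuation.
    text = utterance.lower().replace("\u2019", "'").strip().rstrip(".!")

    if text.startswith("show me "):
        # Remember the item so a later "it" has something to refer to.
        state.last_item = text[len("show me "):]
        return Reply("ack", f"Here is {state.last_item}.")

    if text.startswith("send it to "):
        # Information can arrive out of order: store the recipient now.
        state.recipient = text[len("send it to "):]
        if state.last_item is None:
            # Unresolved "it": ask rather than fail.
            return Reply("clarify", "Happy to. What would you like me to send?")
        return Reply("ack", f"I'll send {state.last_item} to {state.recipient}.")

    if text in CONFIRMATIONS:
        if state.last_item is None:
            return Reply("clarify", "What would you like to buy?")
        dest = f" and sending it to {state.recipient}" if state.recipient else ""
        return Reply("authorise", f"Charging you for {state.last_item}{dest}.")

    # Underspecified input: negotiate instead of returning an error.
    return Reply("clarify", "Could you tell me a bit more about what you need?")
```

Feeding it “Send it to my mum”, then “Show me the blue kettle”, then “Yes, charge me” produces a clarifying question, an acknowledgement, and an authorisation for the kettle, with the recipient carried over from the first turn.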

That colleague who asks “How’s that project coming along?” might actually be evaluating your competence.
Your friend who says “You know we all hang out at that new place downtown” could be trying to manipulate your social choices.
The person who opens with “I’m curious about your decision to...” probably isn’t curious at all.
What if you could decode these hidden messages instantly? What if you could recognise when someone is trying to control you through their questions, and know exactly how to respond? Even better, what if you could master these same techniques to become more influential in your own conversations?
Welcome to the secret world of conversational power dynamics, where understanding the linguistics of questions gives you an almost unfair advantage in every interaction.
The game of conversational power will continue whether you play it consciously or not. The question is: Will you keep getting blindsided by moves you don’t see coming, or will you master the rules that everyone else is playing by instinctively?
This is a public episode. If you'd like to discuss this with other subscribers or get access to bonus episodes, visit thestrategiclinguist.substack.com/subscribe