Compiling Ideas Podcast

Author: Patrick Koss

© Patrick Koss

Description

Deep dives on systems, software, and the strange beauty of engineering — compiled, not copy-pasted.

patrickkoss.substack.com

21 Episodes

Ever deleted a user that was never created? Or watched a payment process before money hit the account? Welcome to the wild world of distributed systems, where events happen everywhere at once and getting the order wrong can turn your database into a zombie apocalypse. This episode breaks down the theory and practice of keeping things straight when everything’s running in parallel.

Description

Distributed systems are messy. Events fire off on different servers, on different continents, all at the same time. Some of them need to happen in a specific order. Some don’t. Get it wrong and you’re deleting accounts that don’t exist or charging credit cards with a zero balance.

In this episode, we dig into the fundamentals of concurrency and causality. We start with Leslie Lamport’s happens-before relationship and work our way through the real-world systems that enforce order at scale. You’ll learn how consensus algorithms like Raft use leader terms (a form of epoch) to reject stale messages from old leaders; how linearizability gives you the illusion of a single timeline even when a hundred things are happening at once; and how Apache Kafka maintains ordering using partitions and keys, routing related events into the same ordered log while letting independent streams run wild in parallel.

This isn’t academic theory. This is the difference between a system that works and one that silently corrupts your data. Whether you’re debugging a race condition or designing cross-datacenter replication, the same principles apply. Know your events. Understand their relationships. Make sure your system respects causality.

By the end, you’ll see that parallelism and causality aren’t enemies. They’re two sides of the same coin. Master both and you can build systems that are fast and correct, doing many things at once without losing the plot of the story they’re telling.

Key Topics

The Theory
We start with what it means for events to be truly parallel versus sequential. When two events have no causal link between them, they’re concurrent, whether or not they happen at the same wall-clock moment: neither can affect the other. But when one event depends on another, that’s a happens-before relationship. Delete can only happen after create. Payment can only happen after deposit. Causality defines the arrows of time in your system.

Consensus and Raft
Distributed consensus algorithms like Raft exist to impose a single sequence on chaos. We explore how Raft uses a leader to order events, how term numbers act like epochs to reject messages from old leaders, and how majority agreement yields total order broadcast. Every node applies events in exactly the same sequence, even when they’re proposed in parallel.

Linearizability
The strongest consistency model for single-object operations. Linearizability makes a distributed system behave as if there’s one copy of the data and one timeline of operations. If an update finished before a read started in real time, the read will see it. No surprises. No stale data. We break down how consensus-based systems achieve this illusion and why it matters for correctness.

Kafka’s Pragmatic Approach
Apache Kafka shows you don’t always need total order on everything. Kafka splits topics into partitions and guarantees ordering only within each partition; the record key decides which partition an event lands in. All events with the same key land in the same partition, in sequence (as long as the partition count stays fixed). Events with different keys can be processed in parallel across partitions. It’s a compromise that gives high throughput while maintaining order where it counts.

Real-World Lessons
We tie it all together with the principle that matters most: identify what needs ordering and what can run in parallel. Group causally related events so they’re ordered within their group. Use techniques like logical clocks, consensus protocols, and careful partitioning to navigate the sea of concurrent events. The goal is to build systems that are both fast and correct, orchestrating parallelism without descending into chaos.

Get full access to Compiling Ideas at patrickkoss.substack.com/subscribe
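For readers who want to see three of this episode’s ideas in code, here is a minimal Python sketch under simplifying assumptions. `LamportClock` implements Lamport’s logical clock rule, so an event that happens-before another always gets a smaller timestamp. `Follower` is a hypothetical, stripped-down Raft follower that performs only the term check used to reject a stale leader (real Raft also checks log consistency). `partition_for` shows key-based routing in the spirit of Kafka, with MD5 as a stand-in hash. Kafka’s default partitioner actually uses murmur2, so the partition numbers here won’t match a real cluster.

```python
import hashlib
from dataclasses import dataclass, field
from typing import List


@dataclass
class LamportClock:
    """Logical clock: if event a happens-before event b, then time(a) < time(b)."""

    time: int = 0

    def tick(self) -> int:
        # Local event or message send: advance the clock by one.
        self.time += 1
        return self.time

    def receive(self, remote_time: int) -> int:
        # Message receipt: jump past the sender's timestamp, so the
        # receive is always ordered after the send that caused it.
        self.time = max(self.time, remote_time) + 1
        return self.time


@dataclass
class Follower:
    """Hypothetical, simplified Raft follower that only checks terms.

    Real Raft's AppendEntries also checks prevLogIndex/prevLogTerm;
    that is left out here to isolate the stale-leader check.
    """

    current_term: int = 0
    log: List[str] = field(default_factory=list)

    def append_entry(self, leader_term: int, entry: str) -> bool:
        if leader_term < self.current_term:
            return False  # message from a leader of an older term: reject
        self.current_term = leader_term  # adopt the newer (or same) term
        self.log.append(entry)
        return True


def partition_for(key: str, num_partitions: int) -> int:
    """Map a record key to a partition so equal keys share one ordered log.

    MD5 is only a stand-in; Kafka's default partitioner hashes keys with murmur2.
    """
    digest = hashlib.md5(key.encode("utf-8")).digest()
    return int.from_bytes(digest[:4], "big") % num_partitions


if __name__ == "__main__":
    node_a, node_b = LamportClock(), LamportClock()
    t_create = node_a.tick()      # node A creates user-42 and sends the event
    node_b.receive(t_create)      # node B receives the create...
    t_delete = node_b.tick()      # ...and only then deletes the user
    print(t_create < t_delete)    # True

    follower = Follower()
    print(follower.append_entry(2, "set x=1"))  # True: current leader
    print(follower.append_entry(1, "set x=0"))  # False: stale leader

    print(partition_for("user-42", 6) == partition_for("user-42", 6))  # True
```

The implication runs only one way: a smaller Lamport timestamp does not prove happens-before. Systems that need to detect concurrent events use vector clocks instead.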

Ever wonder why some websites load instantly while others make you wait? It’s not magic. It’s an invisible army of caches working together at five different layers, passing data along like a relay team. From DNS lookups to browser storage, from Redis to database buffers, every click you make triggers a cascade of caching decisions. And somewhere, a developer is losing sleep over whether to set a TTL of 60 seconds or 300.

Description

When you click a link, your request doesn’t just teleport to a server and back. It goes on a journey. And at every stop along the way, there’s a cache waiting to either hand you the answer immediately or pass you along to the next layer.

This episode walks through the entire lifecycle of a web request, meeting every cache along the way. We start with DNS resolution, where your system keeps an address book of websites to avoid repeated lookups. Then we hit the browser cache, which saves you from downloading the same logo 47 times. Modern web apps add their own caching layer on top, using LocalStorage, IndexedDB, and service workers to enable offline-first experiences.

On the backend, distributed caches like Redis shield databases from getting hammered into the ground. And databases themselves? They keep hot data in in-memory buffer pools so they don’t have to hit the disk every time someone asks for your user profile.

We also break down the four major caching strategies: cache-aside (lazy loading), read-through, write-through, and write-behind. Each has different trade-offs between speed, consistency, and complexity. Picking the right one can make your app feel instant instead of sluggish.

Sure, caching introduces complexity. Cache invalidation is famously one of the two hardest problems in computer science (along with naming things). But the performance gains are usually worth it: a well-cached system can often handle 10x or even 100x more traffic than an uncached one.

Next time you load a page and it feels instant, remember: there’s an invisible relay race happening behind the scenes. And it’s beautiful.

Key Topics
- DNS caching and TTL (Time to Live)
- Browser HTTP caching with Cache-Control, ETag, and Last-Modified headers
- Cache busting strategies with versioned filenames
- Frontend application caching with LocalStorage, IndexedDB, and service workers
- Progressive Web Apps (PWAs) and offline-first architecture
- Backend distributed caching with Redis and Memcached
- Cache-aside pattern (lazy loading)
- Read-through, write-through, and write-behind caching strategies
- Database buffer pools and query plan caching
- Cache invalidation trade-offs and TTL strategies
- Performance scaling through multi-layer caching
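Here is a minimal in-process sketch of two of the strategies above, cache-aside with a TTL and write-through. It assumes a plain dict plays the “database”. `TTLCache`, `cache_aside_get`, and `write_through` are hypothetical names for illustration. A real deployment would use Redis or Memcached and their own expiry mechanisms. The injectable `clock` exists only so expiry can be tested without sleeping.

```python
import time
from typing import Any, Callable, Dict, Hashable, Tuple

_MISS = object()  # sentinel, so a cached None still counts as a hit


class TTLCache:
    """In-process stand-in for a cache like Redis: entries expire after ttl seconds."""

    def __init__(self, ttl_seconds: float, clock: Callable[[], float] = time.monotonic):
        self.ttl = ttl_seconds
        self._clock = clock
        self._store: Dict[Hashable, Tuple[Any, float]] = {}

    def get(self, key: Hashable) -> Any:
        entry = self._store.get(key)
        if entry is None:
            return _MISS
        value, expires_at = entry
        if self._clock() >= expires_at:
            del self._store[key]  # expired: evict and treat as a miss
            return _MISS
        return value

    def set(self, key: Hashable, value: Any) -> None:
        self._store[key] = (value, self._clock() + self.ttl)


def cache_aside_get(cache: TTLCache, key: Hashable, load: Callable[[Hashable], Any]) -> Any:
    """Cache-aside (lazy loading): try the cache; on a miss, load from the DB and fill it."""
    value = cache.get(key)
    if value is _MISS:
        value = load(key)
        cache.set(key, value)
    return value


def write_through(cache: TTLCache, db: Dict[Hashable, Any], key: Hashable, value: Any) -> None:
    """Write-through: update the source of truth and the cache in the same call."""
    db[key] = value
    cache.set(key, value)
```

Write-through keeps the cache fresh on writes but pays for two writes every time. Cache-aside fills the cache only on reads, so the TTL bounds how long a stale value can survive when someone updates the database behind the cache’s back.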

Remember when slapping “.com” on your company name could triple your stock price overnight? Now we’re doing the same thing with “AI.” History doesn’t repeat, but it sure does rhyme. In this episode, we dig into four tech gold rushes, figure out who actually struck it rich, and try to answer the question: is AI the real deal, or are we all just panning for fool’s gold again?

Description

Every decade brings a new technology that makes everyone lose their minds. The internet boom turned garage startups into trillion-dollar empires (and vaporized thousands of others). Crypto promised to replace banks and minted Bitcoin millionaires out of random nerds. NFTs convinced people to pay millions for cartoon apes. And now? AI is the newest gold rush, with ChatGPT breaking the internet and VCs throwing $60 billion at anything with “AI” in the pitch deck.

This episode walks through the pattern. We start with the 1990s dot-com frenzy, when Pets.com spent millions on Super Bowl ads before figuring out how to make money. We watch Amazon go from a garage bookstore to a $570 billion juggernaut. We see Google turn targeted ads into a money-printing machine and Facebook bet that people are addicted to stalking their friends (spoiler: they were right).

Then we jump to crypto. Bitcoin went from worthless nerd money you could mine on your laptop to nearly $70,000 per coin. Ethereum introduced programmable money. Dogecoin started as a literal meme and somehow became worth billions because Elon tweeted about it. And NFTs? People paid $24 million at Sotheby’s for computer-generated ape pictures. The whole thing felt like tulip mania, except with pixels.

Now it’s AI’s turn. ChatGPT hit 100 million users in two months, the fastest growth of any consumer app up to that point. Cursor, an AI coding tool, raised $900 million at a $9 billion valuation just three years after its founding. OpenAI is being valued at $300 billion, more than McDonald’s or Nike. The hype is real, the money is insane, and everyone’s convinced this time is different.

But here’s the thing about gold rushes: they follow a pattern. New tech emerges. Early adopters get rich. Everyone else rushes in. The bubble pops. Most people lose money. A few winners reshape the world. We’ve seen this movie four times now. So where does AI fit in? Are we at the beginning of a transformative era, or are we about to watch another spectacular crash?

We break down the gold rush pattern, compare AI to previous booms, and try to figure out if you can actually strike gold in this wave (spoiler: yes, but probably not). We talk about the real opportunities, the real risks, and how to prospect wisely without losing your shirt.

Whether you’re a founder chasing the next unicorn, an engineer trying to stay relevant, or just someone wondering what all the fuss is about, this episode gives you the context to understand where we are in the hype cycle and what actually matters.

Grab your digital pan and start sifting. Just remember: for every prospector who found gold in California, hundreds went home broke. The difference? The winners knew when to dig, when to hold, and when to walk away.

Key Topics

The Internet Boom and Its Winners
How adding “.com” to your business plan could make you a billionaire. We explore the late-’90s frenzy, the spectacular 2000 crash, and how Amazon, Google, and Facebook actually found gold while thousands of startups went bust.

Crypto and the NFT Mania
From Bitcoin’s mysterious origins to Dogecoin memes to $24 million cartoon apes. We trace the crypto gold rush, the fortunes made and lost, and whether blockchain is the future or just an elaborate Ponzi scheme.

AI Breaks the Internet
ChatGPT hits 100 million users in 60 days. AI startups raise $60 billion in three months. Coding assistants get $9 billion valuations. We examine the current AI frenzy and compare it to previous tech booms.

The Gold Rush Pattern
New tech emerges. Early adopters strike it rich. Everyone piles in. The bubble pops. The tech changes the world anyway. We break down the five-stage pattern that repeats across every major tech wave.

Can You Actually Strike Gold in AI?
The honest answer: yes, but probably not. We discuss the real opportunities, the genuine risks, and how to participate in the AI wave without being stupid about it.

How to Prospect Wisely
Be enthusiastic, but don’t be an idiot. We share practical advice for navigating the AI gold rush, whether you’re building, investing, or just trying to upskill before your job gets automated.

Your career trajectory isn’t just about the code you ship. It’s about the story running in your head while you’re shipping it. This episode unpacks how choosing optimistic interpretations turns you into the engineer who stays calm in fires, attracts the best projects, and builds teams that actually want to work together. No fluff. Just the feedback loops that separate engineers who plateau from those who keep leveling up.

Description

It’s 8:57 a.m. and the database migration just nuked half the platform. Your heart should be racing, but instead you’re thinking “well, this’ll make a great post-mortem.” That split-second difference in your internal monologue? It decides everything that happens next.

We dive into the invisible circuit board of workplace optimism and trace how a single generous assumption cascades into better solutions, stronger relationships, and a career that looks suspiciously like an exponential curve. You’ll discover why two engineers staring at the same legacy mess see completely different realities, how positive feedback loops compound like interest, and why the most interesting projects always land on certain desks.

This isn’t motivational-poster philosophy. It’s basic cause and effect you can wire up like any other system. We break down the pseudo-code of mindset loops, explore why resilient minds treat rejection as latency instead of a fatal error, and reveal how emotional contagion spreads through teams faster than network packets.

Plus, you get three practical training protocols to flash your mindset firmware: the Interpretation Pause, the Small-Win Ledger, and the Language Linter. No affirmations in the mirror required. Just cognitive refactors as mundane as cleaning up imports.

Key Topics
- The Lens Effect: how interpretive bias acts like a compiler choosing default values for every uninitialized variable in your workday, and why those defaults become self-fulfilling prophecies
- Upward Spiral Engineering: breaking down the while-loop of positivity (assume generous intent, act collaboratively, observe supportive responses, reinforce positive beliefs, repeat)
- Failing Forward: why optimists use temporary, specific explanations for failure while pessimists go global and permanent, and how that difference affects learning speed at the biochemical level
- Emotional Wi-Fi: how mirror neurons create contagion effects in teams, why one upbeat engineer can reboot a whole room’s firmware, and what psychological safety actually means in practice
- Gravity-Defying Opportunities: the hidden network graph where optimism thickens relationship edges, and why managers allocate moonshot projects to engineers who keep the temperature down
- Firmware Flashing Protocols: three TDD-style practices for training your inner coach (the five-second interpretation pause, the daily small-win ledger, and the real-time language linter for pessimistic code smells)

It’s 3 a.m., you’re staring at a Terraform state lock that won’t release, and your deploy is blocked. State files lock you out. Monolithic applies slow you down. Drift happens and you only find out when you remember to run a plan. What if your infrastructure could be managed like your Kubernetes workloads? Always reconciling. Always watching. No state files to wrestle with. Enter Crossplane: the Kubernetes-native approach that might be the IaC evolution you didn’t know you needed.

Description

Terraform dominated Infrastructure as Code for a decade, and for good reason. It brought declarative configuration, multi-cloud support, and repeatability to infrastructure management. But as teams scaled up and infrastructure grew more complex, cracks started to show.

In this episode, we walk through the Terraform pain points that have become increasingly hard to ignore: the state file that locks out your entire team when someone runs a long apply; the monolithic plan that recalculates the world even when you only want to change one database parameter; the drift that only gets caught when someone remembers to run a plan manually; and the lack of continuous reconciliation.

We explore Pulumi’s attempt to solve some of these problems by letting you write infrastructure in real programming languages (Python, TypeScript, Go), which is genuinely nice. But Pulumi still follows the Terraform execution model: a one-shot CLI tool, a state backend, and no continuous drift correction. It’s “Terraform with a nicer language,” which is valuable, but it doesn’t fundamentally change the paradigm.

Then we dive into Crossplane: a Kubernetes-native control plane that runs continuously inside your cluster. Instead of a CLI tool you run occasionally, Crossplane extends Kubernetes with custom resources that represent cloud infrastructure. Controllers watch these resources and reconcile them against actual cloud state, just like Kubernetes reconciles Pods and Services.

What does that get you? Continuous reconciliation that detects and corrects drift in near-real-time. No external state file: the Kubernetes API server is your source of truth. Parallel, independent operations instead of monolithic applies. Native integration with Kubernetes RBAC, admission controllers for policy enforcement, and GitOps workflows. When someone tries to create a database without encryption, the admission controller rejects it before it ever reaches the cloud.

We also cover the architectural patterns for running Crossplane, from single clusters with namespaces to dedicated management clusters to a “control plane of control planes” for large organizations. And we’re honest about the trade-offs: you need Kubernetes skills, provider maturity isn’t quite at Terraform’s level yet, and you’re adding operational overhead by running another cluster.

But for teams already invested in Kubernetes, who care about continuous compliance, and who want infrastructure that reconciles itself without manual intervention, Crossplane offers a compelling alternative. The future of IaC is cloud-native, and Crossplane is leading the charge.

Key Topics
- Why Infrastructure as Code exists: version control, repeatability, and escaping snowflake servers
- Terraform’s decade of dominance: HCL, 1000+ providers, and the state file model
- Where Terraform starts to hurt: state file hell (50%+ of users encounter state issues), monolithic applies, drift detection gaps
- The operational pain: 3 a.m. state locks, waiting 10 minutes for plans that touch 47 resources just to change one thing
- Pulumi’s approach: real programming languages (Python, TypeScript, Go) but still a one-shot execution model
- Crossplane’s paradigm shift: Kubernetes as your infrastructure control plane with continuous reconciliation
- Continuous drift correction: controllers run in a loop, detecting and reverting manual changes on every reconcile cycle instead of waiting for someone to run a plan
- No external state file: Kubernetes API server (etcd) as source of truth, no locks, no corruption
- Parallel operations: independent resources reconcile simultaneously, targeted updates without global plans
- Policy enforcement via admission controllers: Kyverno or OPA/Gatekeeper rejecting non-compliant resources at the API level
- GitOps for infrastructure: store YAML in Git, use Argo CD or Flux for continuous application
- Tight integration with application workloads: Crossplane auto-publishes connection details as Kubernetes Secrets
- Architectural patterns: single cluster, dedicated management cluster, control plane of control planes
- The trade-offs: Kubernetes skills required, provider maturity still growing, operational overhead of running clusters
- Real-world adoption: CNCF graduated project used by Accenture, Deutsche Bahn, and others
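To make “continuous reconciliation” concrete, here is a minimal Python sketch of the observe, compare, act pass that a controller runs, plus an admission-style policy check. This is not Crossplane’s API. `DatabaseSpec`, `admit`, and `reconcile` are hypothetical, and a plain dict stands in for the cloud provider. A real controller would run `reconcile` repeatedly, triggered by watches or a poll interval, rather than once.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class DatabaseSpec:
    """Desired (or observed) state of a hypothetical managed database."""

    name: str
    size_gb: int
    encrypted: bool


class AdmissionError(Exception):
    """Raised when a resource violates policy before it reaches the cloud."""


def admit(spec: DatabaseSpec) -> DatabaseSpec:
    # Hypothetical policy, similar in spirit to a Kyverno or Gatekeeper rule.
    if not spec.encrypted:
        raise AdmissionError(f"{spec.name}: encryption is required")
    return spec


def reconcile(desired: Dict[str, DatabaseSpec], cloud: Dict[str, DatabaseSpec]) -> List[str]:
    """One observe-compare-act pass; returns the actions it took.

    `cloud` is a dict standing in for the provider API. Deletion is not
    handled in this sketch.
    """
    actions = []
    for name, spec in desired.items():
        observed = cloud.get(name)
        if observed is None:
            cloud[name] = spec
            actions.append(f"create {name}")
        elif observed != spec:
            cloud[name] = spec  # drift detected: restore the desired state
            actions.append(f"update {name}")
    return actions
```

Running the same pass twice with no changes is a no-op, which is what makes it safe to loop forever. A manual change in the “cloud” simply shows up as a diff on the next pass and gets reverted.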

Unsuccessful teams don’t fail because they lack smart engineers. They fail because of how they work: arguing about code behavior instead of writing tests, bikeshedding formatting instead of automating it, manually testing everything, optimizing for ego over outcomes. We break down eight patterns I’ve seen repeatedly in struggling teams and contrast them with what successful teams do differently. If you see your team here, it’s not an accusation; it’s a starting point.

Description

Every failing software team looks unique from the inside. Different products, different tech stacks, different company politics. But zoom out a bit, and the patterns repeat with almost embarrassing consistency.

In this episode, we walk through the most common anti-patterns I’ve seen in unsuccessful teams and contrast them with what successful teams do instead. This isn’t about abstract “best practices.” It’s about day-to-day behavior: pull requests, naming, tests, deployments, documentation, and culture.

We start with the PR debates that never end. Unsuccessful teams argue about code behavior in comments because there are no tests to prove anything. Successful teams write executable examples and let the tests settle the argument. Airbnb evolved from shipping mostly untested code to a culture where untested changes get flagged immediately. Netflix runs nearly a thousand functional tests per PR. They don’t argue about behavior. They prove it.

Then there’s bikeshedding: massive energy spent on snake_case vs camelCase, brace placement, and naming conventions. We have tools for this. Successful teams push formatting and style into automated tooling (black, ruff, gofmt, clippy) so code reviews can focus on design, correctness, and clarity instead of style tribunals.

We explore why manual testing kills velocity; how toxic team dynamics optimize for ego over outcomes (with Google’s Project Aristotle research showing psychological safety as the single most critical factor in team success); why inventing a new project structure in every repo creates chaos; and how the “hero engineer” with a bus factor of one is a structural problem, not an asset.

Documentation and reflection tie it all together. Unsuccessful teams rely on tribal knowledge passed through Slack threads and half-remembered meetings. Successful teams capture decisions in Architecture Decision Records, maintain runbooks, and document the things people repeatedly ask about. And they regularly reflect on whether their process is actually working.

The difference between unsuccessful and successful teams isn’t one big transformation. It’s a long series of small, deliberate corrections. This episode gives you a mirror. If you see your team here, pick one area and move it one step in the right direction.

Key Topics
- Arguing about behavior in PRs instead of proving it with tests (Airbnb and Netflix testing culture examples)
- Bikeshedding: how Parkinson’s Law of Triviality wastes energy on formatting instead of architecture
- The illusion of control in manual testing vs the reality of automated CI/CD pipelines (Netflix’s Spinnaker, Spotify’s 14-day-to-5-minute deployment transformation)
- Toxic team dynamics: proving you’re smart vs building something together (Google’s Project Aristotle findings on psychological safety)
- Why inventing a new structure in every repo creates cognitive overhead and slows reviews
- The bus factor of one: why hero engineers are single points of failure (research shows 10 of 25 popular GitHub projects had a bus factor of 1)
- Documentation as a product: Architecture Decision Records, runbooks, and capturing knowledge before people leave
- Never reflecting on how you work: why continuous improvement through retrospectives is critical (Spotify and Atlassian retro practices)
- From quiet failure to deliberate success: picking one area and making small, deliberate corrections
- Practical starting points: automate what can be automated, standardize what can be standardized, document what others will need, share knowledge instead of hoarding it
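The bus factor can be estimated from version-control history. The sketch below is a simplified, hypothetical estimator in the spirit of greedy truck-factor estimates. It keeps removing the developer who knows the most files until more than half of the files have nobody left who knows them. It assumes anyone who ever touched a file knows it, which overstates knowledge compared with authorship-weighted measures. The `file_authors` mapping is something you would build yourself from `git log` output.

```python
from collections import Counter
from typing import Dict, Set


def bus_factor(file_authors: Dict[str, Set[str]]) -> int:
    """Greedy bus-factor estimate from a {file: {authors}} mapping.

    Repeatedly removes the author who covers the most files until more
    than half of the files are orphaned; returns how many were removed.
    """
    remaining = {path: set(authors) for path, authors in file_authors.items()}  # copy: don't mutate input
    total = len(remaining)
    removed = 0
    while True:
        orphaned = sum(1 for authors in remaining.values() if not authors)
        if orphaned > total / 2:
            return removed
        counts = Counter(a for authors in remaining.values() for a in authors)
        if not counts:
            return removed  # nothing left to remove (e.g. an empty repo)
        top_author, _ = counts.most_common(1)[0]
        for authors in remaining.values():
            authors.discard(top_author)
        removed += 1
```

For example, a repo where `alice` is the only person who has touched `api.py` and `db.py` gets a bus factor of 1, even if `bob` has also worked on `ui.py`.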

LLMs generate code 12x faster than you can type, and they’re getting better every month. Some engineers call it slop. Others are shipping production features at breakneck speed. So which is it: a revolution, or really fast tech debt? The answer depends on something that has nothing to do with the AI: whether you actually know your patterns, your boundaries, and your architecture. Because code was never the bottleneck. And now that it’s basically free, that’s more true than ever.

Description

There’s a weird divide in software engineering right now. One group looks at AI-generated code and sees unusable garbage that’ll haunt codebases for years. Another group is absolutely blown away, shipping features faster than ever and wondering why anyone still types boilerplate by hand.

The reality? Both groups are right. And the difference comes down to two things: domain and structure.

In this episode, we break down why LLMs excel in well-documented domains like web development (where we used to copy from Stack Overflow anyway) but struggle in niche areas with sparse training data. We explore the dirty secret nobody talks about: code was never the hard part. Architecture was. Boundaries were. Maintainability was.

Now we have tools that can generate thousands of lines of code in an afternoon. That means you can create a tightly coupled mess at 12x speed. You can ship features that work today but will take three engineers two weeks to modify six months from now.

The engineers thriving in this new era aren’t the fastest typists or syntax memorizers. They’re the ones who know their patterns deeply: when to use microservices vs modular monoliths, how to define clean boundaries, and why TDD isn’t just a nice-to-have but a survival strategy. They understand that LLMs have the same context problem as junior developers: show them a tangled codebase where everything depends on everything else, and they’ll write code that compiles but breaks production at 3 a.m.

This is about the fundamental shift happening in software engineering. Your value isn’t in typing anymore. It’s in foresight. In knowing what happens when you scale. In designing systems that are maintainable not just by you, but by AI, by junior developers, by anyone who comes after you.

Because code is cheap now. It’s getting cheaper every month. But the ability to structure systems so they don’t collapse under their own weight? That’s getting more valuable.

Key Topics
- The speed gap: LLMs generate 1200 words per minute vs human typing at 100 wpm, and why this is only the baseline
- Why some engineers see gold and others see garbage: domain matters more than skill level
- The web development advantage: oceans of training data vs niche domains with sparse documentation
- The dirty secret: code was never the bottleneck; architecture, boundaries, and tech debt were (a Stripe study shows devs spend 1/3 of their time on tech debt, with a $3T global GDP impact)
- How LLMs are like incredibly productive junior developers: terrible at long-term planning
- Why you need to know your patterns: vertical vs horizontal slicing, domain models, event sourcing, when to use microservices vs monoliths
- Real examples: Shopify’s modular monolith with 2.8M lines of Ruby, Uber’s SOA transition struggles
- The context window problem: LLMs suffer from “lost in the middle” and need clean boundaries to succeed
- Test-driven development as a survival strategy: defining contracts and boundaries that make safe changes possible
- Your new job description: from feature factory to architect, from writing code to designing systems
- Why learning the basics deeply (the why, not just the names) is the only way to keep up
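One way to read “TDD as a survival strategy” in code is to define a boundary as an interface and pin its behavior with an executable contract. Every implementation must pass that contract, whether a human or an LLM wrote it. The names (`UserRepository`, `InMemoryUserRepository`, `check_user_repository_contract`) and the email rule are hypothetical. This is a sketch of the idea, not a prescribed structure.

```python
from typing import Dict, Optional, Protocol


class UserRepository(Protocol):
    """Boundary: callers depend on this contract, not on a concrete store."""

    def save(self, user_id: str, email: str) -> None: ...

    def find_email(self, user_id: str) -> Optional[str]: ...


class InMemoryUserRepository:
    """One implementation; a SQL-backed or generated one must pass the same contract."""

    def __init__(self) -> None:
        self._emails: Dict[str, str] = {}

    def save(self, user_id: str, email: str) -> None:
        if "@" not in email:
            raise ValueError(f"invalid email: {email!r}")
        self._emails[user_id] = email

    def find_email(self, user_id: str) -> Optional[str]:
        return self._emails.get(user_id)


def check_user_repository_contract(repo: UserRepository) -> None:
    """Executable contract: raises AssertionError if an implementation breaks it."""
    repo.save("u1", "ada@example.com")
    if repo.find_email("u1") != "ada@example.com":
        raise AssertionError("saved email must be readable")
    if repo.find_email("missing") is not None:
        raise AssertionError("unknown users must return None")
    try:
        repo.save("u2", "not-an-email")
    except ValueError:
        return  # rejected, as the contract requires
    raise AssertionError("invalid emails must be rejected")
```

Because callers depend only on the `Protocol`, the storage layer can be regenerated freely. The contract check, not a PR debate, decides whether the new version is acceptable.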

Forget LeetCode marathons and whiteboard coding for millions of imaginary users. Germany’s tech hiring process is completely different from the US playbook: more practical, more real-world, way more chill. But don’t confuse ‘different’ with ‘easy.’ We break down what companies actually test at every level, from juniors building their first CRUD app to staff engineers designing systems that don’t collapse under their own weight.

Description

After years on both sides of the interview table, I’ve noticed something: Germany’s software engineering hiring process operates on an entirely different wavelength than Silicon Valley’s algorithm-obsessed grind.

No five-hour LeetCode gauntlets. No designing Instagram for a billion users on a whiteboard. Instead, it’s practical, grounded in real problems, and focused on whether you can actually build and explain working software. But the bar is still high; it’s just high in different ways.

In this episode, we walk through how expectations evolve from junior to staff level. For juniors and interns, it’s about fundamentals: can you build a functional CRUD API and explain your decisions? We discuss how AI-powered resume inflation has made CVs look incredible while practical skills remain inconsistent, and why portfolio projects matter more than polished bullet points.

For mid-level and senior engineers, the task looks identical, but the questioning goes deep. We probe distributed systems, concurrency, HTTP semantics, and database tradeoffs. Small inaccuracies lead to rejections. Title inflation has made “senior” nearly meaningless across Europe, so we test for actual depth, not credentials.

At the staff and architect level, everything shifts. You’re not just coding anymore. You’re leading teams, designing resilient systems, and making judgment calls when there’s no obvious right answer. The interview becomes a technical discussion, not a performance. We want to learn something from you.

This is a candid look at what German tech companies actually care about, how to prepare without grinding algorithm puzzles, and why “we’re not hiring your resume; we’re hiring you” isn’t just a platitude.

Key Topics
- Why Germany’s hiring process prioritizes practical skills over algorithmic performance
- Junior/intern expectations: portfolio projects, take-home assignments, and the impact of AI resume inflation
- How we test juniors with simple CRUD tasks and why explanation matters as much as working code
- Mid/senior engineer interviews: same task, radically deeper questioning on fundamentals
- The title inflation crisis in Europe and why “senior” no longer means senior
- Real-world system design questions vs. abstract “design Instagram” nonsense
- The staff/architect shift: leadership, judgment, and why many can’t code anymore (but still need to)
- Why there’s no centralized playbook in Germany and what that means for interview prep
- Practical advice: focus on fundamentals, understand tradeoffs, and bring real experience to the table

A single line of Rust code took down Cloudflare and half the Internet. But blaming unwrap() misses the real story: a database permission tweak that rolled straight to production without ever touching staging. We break down what actually happened and how to build systems where config changes die in dev instead of becoming headlines.

Description

On November 18, 2025, Cloudflare experienced its worst outage since 2019. The narrative quickly became “Rust’s unwrap() broke the Internet,” but that’s dangerously incomplete.

In this episode, we dig past the clickbait to understand what really failed: a ClickHouse database permission change altered query behavior, generating a configuration file that violated a hard-coded 200-feature limit in the Bot Management module. That config rolled out globally without failing in lower environments first. When the module hit the “impossible” state, Rust did exactly what it promises: it panicked.

We explore why configuration deserves the same rigor as code, how staging environments need to actually mirror production (not just exist), and the defense-in-depth layers every critical system needs: pipeline validation, graceful degradation, and intentional error handling.

Whether you’re a staff engineer reviewing incident postmortems or building latency-sensitive systems with heavy config dependencies, this breakdown turns one outage into actionable lessons for your entire development lifecycle.

Key Topics

- The real cascade: database permissions → query behavior → config generation → production panic
- Why “config is code” and how to treat it with proper CI/CD rigor
- The three requirements for staging to actually catch these bugs (representative data, same codepaths, environment-aware rollouts)
- Defense in depth: config pipeline validation, service-level degradation, and code-level error handling
- When to use unwrap() vs Result in Rust, and why panic policies matter for blast radius
- Practical guidance: multi-stage config rollouts, canary deployments, and graceful failure modes
- How to build systems where misconfigurations die in dev instead of taking down the Internet

Get full access to Compiling Ideas at patrickkoss.substack.com/subscribe
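The defense-in-depth idea from this episode can be sketched in a few lines of Python: validate a generated config against the invariants its consumer relies on before it ships, and have the consuming service keep its last-known-good config instead of crashing if a bad one slips through anyway. This is a minimal illustration under stated assumptions, not Cloudflare's code; `FeatureConfig`, `validate`, and `ConfigHolder` are hypothetical names, and only the 200-feature limit comes from the description.

```python
from dataclasses import dataclass

# The only number taken from the episode: the Bot Management feature limit.
MAX_FEATURES = 200


class ConfigError(ValueError):
    """Raised when a generated config breaks an invariant the consumer relies on."""


@dataclass(frozen=True)
class FeatureConfig:
    features: tuple


def validate(raw_features):
    """Pipeline-stage check (hypothetical): fail in CI/staging, not in the hot path."""
    if not raw_features:
        raise ConfigError("config has no features")
    if len(raw_features) > MAX_FEATURES:
        raise ConfigError(
            f"{len(raw_features)} features exceeds limit of {MAX_FEATURES}"
        )
    return FeatureConfig(tuple(raw_features))


class ConfigHolder:
    """Service-side guard: keep serving the last-known-good config on bad input."""

    def __init__(self, initial):
        self.current = initial
        self.rejected = []  # error messages, e.g. to feed an alert

    def try_update(self, raw_features):
        try:
            candidate = validate(raw_features)
        except ConfigError as err:
            # Degrade gracefully: record the failure and keep the old config.
            self.rejected.append(str(err))
            return False
        self.current = candidate
        return True
```

A 150-feature update is accepted, while a 400-feature update is rejected and the 150-feature config stays live. In Rust, the same shape is a function returning `Result` whose `Err` branch keeps the old config, rather than calling `unwrap()` on it.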

Ever wonder why your beautifully trained machine learning model works perfectly in your Jupyter notebook but completely falls apart at 3 AM when it’s actually serving production traffic? You’re not alone. Most ML teams discover the hard way that the actual model code is only about 5% of building a real ML system. The other 95% is infrastructure, data pipelines, monitoring, and a thousand things that can break in spectacularly creative ways.

In this episode, we’re diving deep into what it actually takes to build a machine learning platform that doesn’t crumble under pressure. We’re not talking high-level fluff here. This is a technical walkthrough of how companies like Netflix, Uber, and Airbnb designed their ML infrastructure to handle billions of predictions without falling over.

We’ll break down the three critical pipelines every ML platform needs: data management, model training, and production deployment. You’ll learn why training-serving skew is one of the most insidious bugs in ML systems and how Google Play boosted its app install rate by 2% just by fixing it. We’ll explore why experiment tracking isn’t optional if you want any hope of reproducing your results, and how platforms like MLflow became the version control system for machine learning.

But here’s where it gets interesting.
For every component we discuss, we’re going to look at four approaches: the naive “bad” approach that everyone tries first, the “medium” approach that’s getting warmer, the “good” approach where things start working properly, and the “very good” approach you aim for when you need bulletproof systems.

We’ll cover the infrastructure nobody talks about until it breaks: how to orchestrate distributed training across GPU clusters, how hyperparameter tuning platforms like Kubeflow’s Katib can try hundreds of model configurations in parallel using Bayesian optimization, and why model registries are the bridge between your experimentation chaos and production reliability.

You’ll learn about canary deployments and how to roll out new models to 10% of traffic before betting the farm. We’ll talk about monitoring for data drift, because the world changes and yesterday’s perfect model becomes today’s garbage predictor. And we’ll discuss the fault tolerance patterns that let Netflix process trillions of events daily without the whole system collapsing when individual components fail.

This isn’t for people looking for a gentle introduction to machine learning. This is for engineers in the trenches who need to understand how to build ML infrastructure that scales, how to debug models that mysteriously underperform in production, and how to set up systems that won’t require you to manually babysit every training run at 2 AM.

Whether you’re building your first ML platform from scratch or trying to figure out why your current system keeps catching fire, this episode will give you the architectural patterns and war stories you need to build something that actually works.

Let’s get into it.
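Training-serving skew typically creeps in when the training pipeline and the serving path compute the same feature with two separate pieces of code. One common mitigation, sketched here in Python, is to route both paths through a single transform function and to compare features logged at serving time against what the training code recomputes for the same raw input. The feature names (`price`, `clicks_7d`) and all function names are hypothetical.

```python
import math
from typing import Mapping, Sequence


def transform(raw: Mapping[str, float]) -> list[float]:
    """Single source of truth for feature engineering (hypothetical features)."""
    price = max(raw.get("price", 0.0), 0.0)
    clicks = max(raw.get("clicks_7d", 0.0), 0.0)
    return [math.log1p(price), math.log1p(clicks), 1.0 if clicks > 0 else 0.0]


def build_training_rows(raw_rows: Sequence[Mapping[str, float]]) -> list[list[float]]:
    # Offline path: a batch job over historical raw events.
    return [transform(row) for row in raw_rows]


def features_for_request(raw: Mapping[str, float]) -> list[float]:
    # Online path: the same function, so the two paths cannot silently diverge.
    return transform(raw)


def skew_rate(logged: Sequence[Sequence[float]],
              recomputed: Sequence[Sequence[float]],
              tol: float = 1e-9) -> float:
    """Fraction of examples whose serving-time features differ from training features."""
    mismatches = sum(
        len(x) != len(y) or any(abs(a - b) > tol for a, b in zip(x, y))
        for x, y in zip(logged, recomputed)
    )
    return mismatches / max(len(logged), 1)
```

Sharing code removes one source of skew; the `skew_rate` check catches the rest (for example, a serving-side data source that differs from the warehouse) once features are logged at serving time.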
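A 10% canary needs a stable way to split traffic so that each user consistently sees the same model version. A minimal sketch, assuming string user IDs and a salted SHA-256 hash; the `salt` value and the `"candidate"`/`"stable"` labels are hypothetical.

```python
import hashlib


def in_canary(user_id: str, percent: float, salt: str = "model-v2-rollout") -> bool:
    """Deterministically place roughly `percent`% of users in the canary bucket."""
    digest = hashlib.sha256(f"{salt}:{user_id}".encode()).digest()
    bucket = int.from_bytes(digest[:8], "big") % 10_000  # 0..9999, 0.01% resolution
    return bucket < percent * 100


def pick_model(user_id: str, canary_percent: float = 10.0) -> str:
    """Route a request to the candidate model or the current stable one."""
    return "candidate" if in_canary(user_id, canary_percent) else "stable"
```

Because each user's bucket is fixed, raising the percentage from 10 to 50 only adds users to the canary; nobody who already saw the candidate flips back. Changing the salt reshuffles everyone, which is useful when starting the next rollout.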
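The description doesn't name a drift metric. One widely used option is the Population Stability Index (PSI), which compares how a feature's values fall into bins at training time versus in recent production traffic: PSI = Σ (aᵢ − eᵢ) · ln(aᵢ / eᵢ) over the bins. A minimal sketch follows; the bin edges, the `eps` floor for empty bins, and any alert threshold you put on top are assumptions to tune per feature.

```python
import math
from bisect import bisect_right
from typing import Sequence


def bin_shares(values: Sequence[float], edges: Sequence[float], eps: float) -> list[float]:
    """Fraction of values per bin; bins are (-inf, e0), [e0, e1), ..., [e_last, inf)."""
    counts = [0] * (len(edges) + 1)
    for v in values:
        counts[bisect_right(edges, v)] += 1
    total = len(values)
    # Floor empty bins at eps so the log term below stays finite.
    return [max(c / total, eps) for c in counts]


def psi(expected: Sequence[float], actual: Sequence[float],
        edges: Sequence[float], eps: float = 1e-6) -> float:
    """Population Stability Index between a reference sample and a recent sample."""
    e = bin_shares(expected, edges, eps)
    a = bin_shares(actual, edges, eps)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))
```

An identical distribution scores 0; the score grows as production values pile into bins that were rare at training time.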

The AI agent hype is real. AutoGPT, multi-agent frameworks, agent orchestrators with sci-fi names: they’re everywhere. But here’s what nobody’s saying: we’ve been solving these coordination problems for decades.

In this episode, we dissect the common AI agent orchestration patterns and trace them back to their software engineering roots. Sequential agents? That’s the Pipes and Filters pattern from Unix. Concurrent orchestration with voting? Welcome to MapReduce. Group chat managers? Meet the Mediator pattern from the Gang of Four book gathering dust on your shelf.

We walk through the fundamental patterns (sequential, concurrent, group chat, hierarchical, handoff, and magentic orchestration), showing exactly how each one maps to classic distributed systems and design patterns you already know. Then we predict what’s coming next: reflective QA loops, debate ensembles, market-based task allocation, blackboard architectures, and swarm intelligence.

The truth is, AI agents aren’t revolutionary; they’re evolutionary. What’s actually new is applying natural language understanding to coordination problems. Instead of hard-coded routing, you get agents that interpret context dynamically. That’s powerful, but the underlying mechanics are decades old.

And that’s a good thing. It means we have a playbook. If you understand design patterns and distributed systems, you already have the mental models to design robust multi-agent AI systems. The next time someone shows you their “revolutionary” AI agent framework, look under the hood.
You’ll probably find an old friend.

Key Topics

- Multi-agent orchestration patterns (sequential, concurrent, group chat, hierarchical, handoff, magentic)
- Mapping AI patterns to classic software engineering (Pipes and Filters, MapReduce, Mediator, Chain of Responsibility)
- Distributed systems wisdom applied to AI agents
- Emerging patterns: debate ensembles, blackboard architecture, swarm intelligence
- Why evolutionary > revolutionary in AI agent design
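The Pipes and Filters mapping for sequential agents can be made concrete with a few lines of Python: each agent is a function from text to text, and a sequential orchestrator simply composes them, the way a shell pipes one command's output into the next. The three agents below are hypothetical stand-ins; in a real system each would wrap a model call.

```python
from functools import reduce
from typing import Callable

Agent = Callable[[str], str]


def sequential(*agents: Agent) -> Agent:
    """Compose agents left to right, like `draft | edit | headline` in a shell."""
    def run(text: str) -> str:
        return reduce(lambda acc, agent: agent(acc), agents, text)
    return run


# Hypothetical stand-ins for LLM-backed agents.
def draft(topic: str) -> str:
    return f"draft about {topic}"


def edit(text: str) -> str:
    return text.replace("draft", "edited draft")


def headline(text: str) -> str:
    return text.upper()


writer = sequential(draft, edit, headline)
```

Calling `writer("caching")` yields `"EDITED DRAFT ABOUT CACHING"`. As with Unix filters, each stage only knows its input and output, so stages can be swapped or reordered independently.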
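Concurrent orchestration with voting follows the fan-out/fan-in shape that the episode compares to MapReduce: send the same prompt to several agents in parallel (map), then collapse their answers with a majority vote (reduce). A sketch using only the standard library; the agents are hypothetical callables, and breaking ties in favor of the answer seen first is an assumption, not a rule from any particular framework.

```python
from collections import Counter
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Sequence

Agent = Callable[[str], str]


def fan_out_vote(agents: Sequence[Agent], prompt: str) -> tuple[str, int, list[str]]:
    """Run every agent on the prompt concurrently, then majority-vote the answers."""
    if not agents:
        raise ValueError("need at least one agent")
    with ThreadPoolExecutor(max_workers=len(agents)) as pool:
        # Map: one call per agent; results come back in the agents' order.
        answers = list(pool.map(lambda agent: agent(prompt), agents))
    # Reduce: most_common keeps first-seen order among equal counts.
    winner, votes = Counter(answers).most_common(1)[0]
    return winner, votes, answers
```

Returning the raw answers alongside the winner keeps the dissenting outputs available for logging or for a follow-up debate round.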
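For the group chat manager, the Mediator pattern means participants never address each other directly; a manager owns the shared history and decides who speaks next. A minimal sketch, assuming round-robin speaker selection, a turn cap, and a hypothetical `DONE` suffix as the stop signal. A production manager might instead let a model choose the next speaker.

```python
from typing import Callable, Dict, List, Tuple

Message = Tuple[str, str]                     # (speaker, text)
Participant = Callable[[List[Message]], str]  # reads history, returns a reply


class GroupChatManager:
    """Mediator: owns the conversation and routes every turn."""

    def __init__(self, participants: Dict[str, Participant], max_turns: int = 10):
        self.participants = participants
        self.max_turns = max_turns

    def run(self, task: str) -> List[Message]:
        history: List[Message] = [("user", task)]
        names = list(self.participants)
        for turn in range(self.max_turns):
            speaker = names[turn % len(names)]  # round-robin policy (assumption)
            reply = self.participants[speaker](history)
            history.append((speaker, reply))
            if reply.endswith("DONE"):  # hypothetical stop marker
                break
        return history
```

Because only the manager touches the history, changing the turn-taking policy or adding a participant never requires changes to the other agents.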
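Handoff orchestration lines up with Chain of Responsibility: a request passes along a series of handlers until one of them claims it. The sketch below uses a list of handlers rather than the textbook linked chain (where each handler holds a reference to the next), and the billing and tech-support agents with their keyword checks are hypothetical placeholders for model-driven routing.

```python
from typing import Callable, Optional, Sequence

# A handler returns a reply if it owns the request, or None to pass it on.
Handler = Callable[[str], Optional[str]]


def billing_agent(request: str) -> Optional[str]:
    return "billing: refund started" if "refund" in request.lower() else None


def tech_agent(request: str) -> Optional[str]:
    return "tech: resetting your router" if "wifi" in request.lower() else None


def hand_off(request: str, handlers: Sequence[Handler],
             fallback: str = "escalated to a human") -> str:
    """Try each handler in order; the first non-None reply wins."""
    for handler in handlers:
        reply = handler(request)
        if reply is not None:
            return reply
    return fallback


desk = [billing_agent, tech_agent]
```

The explicit fallback matters as much as the handlers: it guarantees that a request nobody claims still ends somewhere visible instead of disappearing.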

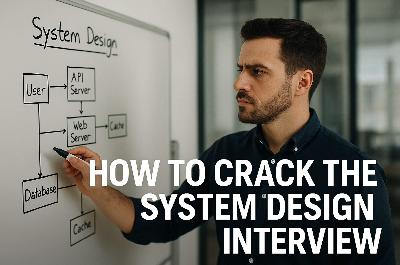

So you’ve made it to the system design interview, the “boss level” of tech interviews where your architectural skills are put to the ultimate test. The stakes are sky-high: ace this, and you’re on your way to that coveted staff engineer role; flub it, and it’s back to the drawing board. System design interviews have become an integral part of hiring at top tech companies and are notoriously difficult at places like Google, Amazon, Microsoft, Meta, and Netflix. Why? These companies operate some of the most complex systems on the planet, and they need engineers who can design scalable, reliable architectures to keep them competitive. If this format makes your palms sweat, you’re not alone: plenty of software engineers find system design interviews a major obstacle in their career progression.

But fear not! This guide will walk you through everything you need to know to crack the system design interview, even at the staff level. We’ll talk about the right mindset, common challenges (and how to tackle them), core concepts (explained with simple analogies), sneaky tricks to impress your interviewer, real-world examples from tech giants, and pitfalls to avoid.

If you like written articles, feel free to check out my Medium here: https://medium.com/@patrickkoss

Understanding the System Design Mindset

Before you jump into drawing boxes and arrows, step back and change your mindset. A system design interview isn’t like coding out a LeetCode solution with one correct answer; it’s about high-level thinking, trade-offs, and real-world engineering decisions. In other words, you need to think like an architect, not just a coder. Successful system design is about balancing competing goals (functionality, performance, maintainability) and making informed decisions in the face of ambiguity and scale.
Every design choice (SQL vs NoSQL, monolith vs microservices, consistency vs availability, etc.) has pros and cons, and interviewers want to see that you understand these trade-offs and can reason about them out loud.

Equally important is adopting a “real-world” perspective. Interviewers aren’t looking for a textbook answer; they want to know how you’d build a system that actually works in production. That means considering things like scale (millions of users), reliability (servers will fail; then what?), and evolution (requirements change; can your design adapt?). The best candidates approach the problem like they’re already the staff engineer on the job: they clarify what’s really needed, weigh options, and choose a design that addresses the requirements with sensible compromises. There’s rarely one “right” answer in system design; what matters is the reasoning behind your answer.

One pro-tip: always discuss trade-offs. If coding interviews are about getting the solution, system design interviews are about discussing alternative solutions and why you’d pick one over another. In fact, interviewers love it when you explicitly talk about the “why” behind your design decisions. As one senior engineer put it, hearing candidates discuss trade-offs is a huge green flag that they have working knowledge of designing systems (as opposed to just parroting a tutorial). For example, mention why you might choose a relational database (for consistency) versus a NoSQL store (for scalability) given the problem context, showing you understand the consequences of each choice. Adopting this mindset (thinking in trade-offs, focusing on real-world constraints, and abstracting away from nitty-gritty code) is the first step toward system design success.

And yes, it’s normal for system design questions to feel open-ended or ambiguous. Part of the mindset is embracing ambiguity.
Unlike a coding puzzle, a system design prompt might not spell out everything; it’s your job to ask questions and reduce the ambiguity. This is exactly what happens in real projects: requirements are fuzzy, and great engineers ask the right questions. So don’t be afraid to say, “Let me clarify the requirements first.” That’s not a weakness; that’s you demonstrating the system design mindset!

Common Problems and How to Solve Them

When designing any large system, you’ll encounter a few recurring big challenges. Interviewers love to probe how you handle these. Let’s break down the usual suspects, and strategies to tackle them like a pro:

* Scalability: Can your design handle 10× or 100× more users or data? Scalability comes in two flavors: vertical scaling (running on bigger machines) and horizontal scaling (adding more machines). Vertical scaling (scaling up) is straightforward: throw more CPU/RAM at the server. But it has limits and can get expensive. Horizontal scaling (scaling out) means distributing load across multiple servers. This approach is more elastic (in principle you can keep adding servers as load grows) but introduces complexity: you need to split data or traffic and deal with distributed systems issues.
* How to solve it: design stateless services (so you can run many clones behind a load balancer), consider database sharding (more on that later) for huge datasets, and use caching to reduce load on databases. Also, identify bottlenecks: if your database is the choke point, maybe you need to replicate it or use a different data store. Scalability is often about partitioning work: more servers, more database shards, more message queue consumers, etc., each handling a slice of the load.
* Consistency vs. Availability: In a distributed system, when the network partitions, you have to choose between keeping data consistent and keeping the system available. This is the famous CAP theorem.
According to CAP, a distributed system can only guarantee two out of three: Consistency, Availability, Partition Tolerance. Partition tolerance (handling network splits) is usually non-negotiable, because networks will have issues and your system must tolerate them. That forces a trade-off between consistency and availability whenever a partition occurs. Consistency means every read gets the latest write: no stale data. Availability means the system continues to operate (serve requests) even if some nodes are down or unreachable. You can’t have it all, so what do you choose? It depends on the product. For example, in a banking system, you must have strong consistency (your account balance should not differ between servers!) even if that means some waits or downtime. In contrast, for a social media feed or video streaming, availability is king: the system should keep serving content even if some data might be slightly stale.
* How to solve it: decide where you need strong consistency (and use databases or techniques that ensure it) versus where you can allow eventual consistency for the sake of uptime. Many modern systems use a mix, e.g., eventual consistency for non-critical data, meaning replicas may briefly disagree but converge as updates propagate, while the system keeps serving requests. (We’ll explain eventual consistency with a fun analogy in the next section!)
* Latency: Users hate waiting. Latency is the delay from when a user makes a request to when they get a response. At scale, latency can creep up due to network hops, database lookups, etc. If your design doesn’t account for latency, the user experience could suffer (nobody likes staring at a spinner or loading screen).
* How to solve it: The mantra is “move data closer to the user.” Caching is your best friend: store frequently accessed data in memory (RAM is far faster than disk or a network round trip) so that repeat requests are blazingly fast.
For example, cache popular web pages or API responses in a service like Redis or Memcached so you don’t hit the database each time. Similarly, use a Content Delivery Network (CDN) to cache static content (images, videos, scripts) on servers around the world, closer to users, to reduce round-trip time. If you need to fetch data from a distant server or run a complex computation, see if you can do it asynchronously or in parallel to hide the latency. Designing with asynchrony (e.g., queuing tasks) can also keep front-end latency low by doing heavy work in the background. In short, identify the latency-sensitive parts of the system (the main user request path) and put caches or faster pipelines there. Reserve slower batch processing for offline or less frequent tasks. The result? Your system feels snappy even under load.
* Fault Tolerance: Stuff breaks. Machines crash, networks go down, bugs happen. A robust system design needs to expect failures and handle them gracefully. Fault tolerance is about designing the system such that a failure in one component doesn’t bring the whole house down.
* How to solve it: Build in redundancy at every critical point. If one server dies, there should be another to take over (think multiple app servers behind a load balancer, multiple database replicas with failover). Avoid single points of failure: no single database instance or cache node should be the sole keeper of your data. Use replication for databases (with leader-follower setups) so that if the primary goes offline, a secondary can become the primary. In distributed systems, timeouts and retries are essential: don’t wait forever on a failed service; try again or route to a backup. Also consider graceful degradation: if a feature or component is down, the system should still serve something (maybe with limited functionality) instead of failing completely.
For instance, if the recommendation service in a video app fails, you can still stream videos (just without personalized recs). Bonus points if you mention techniques like circuit breakers, which stop calling a failing service for a while so it isn’t overloaded and callers fail fast instead of piling up. The pattern was popularized in microservices by Netflix’s Hystrix library (Netflix’s Chaos Monkey, by contrast, deliberately terminates production instances to verify that services survive such failures). At staff engineer level, you should show awareness that at scale, anything can fail, and your design accounts for it via redundancy, failovers, a
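For the “database sharding” point: the naive router `hash(key) % N` remaps most keys whenever N changes (going from 3 to 4 shards moves about three quarters of them), which hurts when you add a shard under load. Consistent hashing is one common alternative, though the text doesn't prescribe a scheme: shards sit at many points on a hash ring, and a key belongs to the first shard clockwise from its own hash. The shard names and the 100 virtual nodes per shard below are illustrative assumptions.

```python
import bisect
import hashlib
from typing import Iterable, List, Tuple


def _hash(value: str) -> int:
    # Stable across processes, unlike Python's built-in hash() for str.
    return int.from_bytes(hashlib.sha256(value.encode()).digest()[:8], "big")


class HashRing:
    def __init__(self, nodes: Iterable[str], vnodes: int = 100):
        # Each physical node appears `vnodes` times to even out the load.
        ring: List[Tuple[int, str]] = sorted(
            (_hash(f"{node}#{i}"), node) for node in nodes for i in range(vnodes)
        )
        self._points = [h for h, _ in ring]
        self._owners = [node for _, node in ring]

    def node_for(self, key: str) -> str:
        # First ring point clockwise from the key's hash; wrap around at the end.
        idx = bisect.bisect_right(self._points, _hash(key)) % len(self._points)
        return self._owners[idx]
```

Adding a fourth shard moves only the keys whose nearest ring point is now one of the new shard's points, roughly a quarter of them, and every moved key lands on the new shard.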
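The caching advice (check Redis or Memcached before hitting the database) is usually called the cache-aside pattern. Here is a minimal in-process sketch: a dict with per-entry expiry stands in for Redis/Memcached, `load_from_db` is a hypothetical loader, and the clock is injectable so expiry can be tested. The TTL bounds how stale a cached value can get, which ties back to the consistency trade-off discussed earlier.

```python
import time
from typing import Any, Callable, Dict, Hashable, Optional, Tuple


class TTLCache:
    """Tiny stand-in for Redis/Memcached: values expire `ttl_seconds` after being set."""

    def __init__(self, ttl_seconds: float, clock: Callable[[], float] = time.monotonic):
        self.ttl = ttl_seconds
        self.clock = clock  # injectable so tests can fake time
        self._store: Dict[Hashable, Tuple[Any, float]] = {}

    def get(self, key: Hashable) -> Optional[Any]:
        entry = self._store.get(key)
        if entry is None:
            return None
        value, expires_at = entry
        if self.clock() >= expires_at:
            del self._store[key]  # lazily evict stale entries
            return None
        return value

    def set(self, key: Hashable, value: Any) -> None:
        self._store[key] = (value, self.clock() + self.ttl)


def get_user(user_id: Hashable, cache: TTLCache,
             load_from_db: Callable[[Hashable], Any]) -> Any:
    """Cache-aside read: try the cache, fall back to the database, then populate the cache."""
    cached = cache.get(user_id)
    if cached is not None:
        return cached
    value = load_from_db(user_id)
    cache.set(user_id, value)
    return value
```

One caveat of this sketch: a loader that returns `None` is treated as a miss every time, so missing records always hit the database. A real deployment might cache a sentinel for negative results.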
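A circuit breaker is a small state machine: closed (calls pass through), open (calls fail fast without touching the dependency), and half-open (after a cool-down, a trial call decides whether to close again). The sketch below uses a consecutive-failure threshold and an injectable clock. The thresholds are assumptions, and it simplifies by letting every half-open call through; libraries such as Hystrix or resilience4j add sliding windows and limits on trial calls.

```python
import time
from typing import Any, Callable, Optional


class CircuitOpenError(RuntimeError):
    """Raised instead of calling the dependency while the circuit is open."""


class CircuitBreaker:
    def __init__(self, failure_threshold: int = 3, reset_timeout: float = 30.0,
                 clock: Callable[[], float] = time.monotonic):
        self.failure_threshold = failure_threshold
        self.reset_timeout = reset_timeout
        self.clock = clock
        self.failures = 0
        self.opened_at: Optional[float] = None

    @property
    def state(self) -> str:
        if self.opened_at is None:
            return "closed"
        if self.clock() - self.opened_at >= self.reset_timeout:
            return "half-open"  # cool-down elapsed: allow a trial call
        return "open"

    def call(self, fn: Callable[..., Any], *args: Any, **kwargs: Any) -> Any:
        if self.state == "open":
            raise CircuitOpenError("failing fast; dependency marked unhealthy")
        try:
            result = fn(*args, **kwargs)
        except Exception:
            self.failures += 1
            # A failed trial call, or too many failures in a row, (re)opens the circuit.
            if self.state == "half-open" or self.failures >= self.failure_threshold:
                self.opened_at = self.clock()
            raise
        # Any success closes the circuit and resets the failure count.
        self.failures = 0
        self.opened_at = None
        return result
```

Callers pair this with graceful degradation: catch `CircuitOpenError` and serve the fallback (for example, the non-personalized video list) instead of waiting on a dead recommendation service.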

Engineering docs don’t have to be boring. We’ve all written (and skipped reading) those 50-page design docs that are technically accurate but put you to sleep by page 3. This article explores when to lean into storytelling, when to stay technical, and how to find the sweet spot where your docs are both precise and actually readable. Spoiler: it’s not an either-or choice.

The Document Nobody Reads

Picture this: You’ve just spent three weeks writing the most comprehensive design document of your career. Every edge case covered. Every diagram perfect. Every API endpoint documented. You hit “publish” and wait for the feedback to roll in.

Instead, you get two comments. One is “LGTM” from someone who definitely didn’t read it. The other is “Can you add a summary at the top?”

Sound familiar?

Here’s the uncomfortable truth: technical documentation has a reading problem. Not a writing problem. A reading problem. Your 5,000-word architecture spec might be flawless, but if nobody makes it past the introduction, it might as well be blank. The document sitting in your wiki gathering digital dust isn’t failing because it lacks detail. It’s failing because it lacks a pulse. As one analysis notes, technical reports “provide specificity, expertise, and instruction” but “often lack in approachability and human perspective” [1].

The weird part? We know how to write stuff people actually want to read. We do it every day in Slack, in code review comments, in postmortem reports that people pass around saying “you have to read this one.” Those documents work because they tell a story. They have stakes. They have a beginning, middle, and end. They make you care.

So why do we abandon that when writing “official” documentation? Why does the API reference have to read like a legal contract? Why does the onboarding guide sound like it was written by a committee of robots?
After all, Wikipedia itself notes that “since the purpose of technical writing is practical rather than creative, its most important quality is clarity” [2]. But clarity and engagement aren’t mutually exclusive.

The answer isn’t to turn every doc into a novel. That would be ridiculous. But there’s a massive gray area between “50 Shades of Technical Specs” and “A Tale of Two Microservices” where most of our documentation could live. This article is about finding that zone.

Why Your Brain Hates Walls of Text (But Loves Stories)

Let me tell you about the time I inherited a codebase with 200 pages of documentation. Beautiful documentation. Tables of contents, diagrams, the works. Six months later, I still had no idea how anything worked. The docs were comprehensive, but they were also completely impossible to absorb.

Then I found a three-page “war story” document someone had written about a production incident. In twenty minutes of reading, I learned more about the system’s actual behavior than I had in months of reading the official docs. Why? Because the story gave me context. It showed me cause and effect. It walked me through a real scenario where decisions mattered and had consequences.

Your brain is wired for this. Thousands of years of evolution optimized us for narrative, not bullet points. When someone tells you a story, your brain lights up like a Christmas tree. Language processing, sure, but also emotion centers, sensory regions, even motor cortex areas that fire when you imagine doing the actions being described [1]. A story doesn’t just inform you. It simulates an experience.

Studies back this up. A massive 2021 meta-analysis covering over 33,000 participants found that stories are significantly easier to understand and recall than expository essays [3]. Narrative text gets read about twice as fast as expository text. It gets recalled twice as well too [4]. Not 10% better. Twice. That’s not a marginal improvement.
That’s a completely different league of effectiveness. And this isn’t just for non-technical topics. It holds true whether you’re explaining how cookies work or how Kubernetes schedules pods [4].

The magic happens because stories provide structure that matches how we think. We understand time. We understand causality (this happened, so that happened). We understand problems and solutions [5]. When you frame your technical content in that structure, comprehension becomes effortless. When you don’t, readers have to work overtime just to figure out what connects to what.

Here’s a quick test. Which of these would you rather read?

“We migrated from a monolith to microservices. We implemented a service mesh. We updated deployment pipelines.”

Or:

“Our API was dying under load. Every request took 3 seconds. We were hemorrhaging users. That’s when we decided to blow up the monolith and see if microservices could save us. Spoiler: it got worse before it got better.”

Same migration. Wildly different engagement. The second version makes you want to know what happened next. The first version makes you want to check Twitter. As one engineer notes, framing technical work as a problem with context and conflict makes the narrative “more compelling, and people will want to hear the results and lessons learned” [8].

The kicker? Adding that narrative structure doesn’t make your docs less accurate. It makes them more useful. Because a document nobody reads has an accuracy of zero.

When to Stay Dry (And When to Bring the Drama)

Not every document deserves a plot twist. I learned this the hard way when I tried to make our API reference “fun” by adding jokes and anecdotes. The feedback was… not positive.
Turns out when you’re frantically looking up which HTTP status code means “gateway timeout,” you don’t want to read a paragraph about that time the author’s microwave caught fire.

The secret is matching your style to the document’s job. Different docs serve different purposes. Some are meant to be scanned. Others are meant to be absorbed. Here’s how I think about it.

Reference docs and API specs are like dictionaries. Nobody sits down to read a dictionary cover to cover (okay, almost nobody). You look up the word you need, get the definition, and move on. These documents should be ruthlessly organized, searchable, and to the point. Tables, bullet lists, code samples. Zero narrative. Any attempt to be clever here just gets in the way. As the Diátaxis documentation framework notes, users consult reference material “for accurate information rather than reading it like a narrative” [6]. Keep it factual, structured, and searchable.

Tutorials and onboarding guides are like cooking shows. Ever watch Gordon Ramsay teach someone to cook? He doesn’t just list ingredients and steps. He walks you through it. “First we’re gonna sear this, see how it gets that crust? That’s what you want.” Tutorials benefit massively from that narrative approach. Set up a scenario. Walk through it step by step. Explain why each step matters. Make it feel like someone’s sitting next to you showing you the ropes. In fact, the Diátaxis framework explicitly designs tutorials as a form of storytelling, “providing a narrative that addresses a larger objective” [6]. These docs should absolutely tell a story because you’re taking someone on a journey from “I have no idea” to “I just built something.”

Design docs and architecture explanations live in the middle. They need technical precision, but they also need to convince people. I’ve seen brilliant designs shot down because the author couldn’t explain why anyone should care. Start with a story. “Here’s the problem we’re facing.
Here’s what happens if we do nothing. Here’s what we’re proposing.” Then dive into the technical details. Then bring it back to impact. Sandwich the dry stuff between layers of narrative context.

Postmortems are crime scene investigations. The best postmortems read like detective stories. “At 2:47 AM, service X started throwing 500s. At first we thought it was a deployment. Then we noticed the database was screaming. By 3:15, we realized…” A chronological narrative makes the incident memorable and helps everyone understand not just what broke, but how the failure cascaded. In fact, many postmortem templates explicitly require a timeline section that should “provide a narrative, essentially retelling the story from start to finish” [7]. These documents should absolutely be stories because that’s how humans process and learn from mistakes.

Engineering principles and culture docs need soul. Nobody remembers a list of values. They remember the story about the time someone stayed up all night to fix a bug before launch, or the meeting where someone said “this violates our principle of X” and everyone nodded because they got it. If you’re writing about culture or principles, ground every single one in a concrete example or anecdote. Otherwise it’s just corporate word salad.

The pattern here? If someone needs to find a specific fact quickly, keep it dry. If someone needs to understand, remember, or be convinced of something, add narrative. And for everything in between, use both. Start with a story to hook them and provide context. Then deliver the technical goods. Then circle back to impact and takeaways.

One more thing: even in the driest docs, examples are mini-stories. A code sample with a comment like “// User just logged in, now we need to fetch their profile” is more helpful than the same code with “// Fetch user profile.” Context is story. Use it everywhere.

The Anatomy of Engineering Storytelling

Let’s get practical.
What does “storytelling” actually mean in engineering docs? It’s not flowery language or creative writing. It’s structure. It’s showing instead of telling. It’s giving your reader a protagonist (even if that protagonist is a user, a system, or a bug).

Every good story has three ingredients: a character, a problem, and a resolution. In engineering docs, this maps perfectly to o