a16z AI Policy Brief

Author: a16z Policy

© a16z

Description

Your guide to AI public policy from the team at a16z. Each conversation bridges Washington and Little Tech, bringing together policy leaders, researchers, and builders to explore how the US stays ahead in AI.

a16zpolicy.substack.com

8 Episodes

One of the core pillars of a16z's roadmap for federal AI legislation makes clear AI should not excuse wrongdoing. When people or companies use AI to break the law, existing criminal, civil rights, consumer protection, and antitrust frameworks should still apply. Enforcement agencies should have the resources they need to enforce the law. If existing bodies of law fall short in accounting for certain AI use cases, any new laws should be evidence-based, clearly defining marginal risks and the optimal approach to target harms directly.

In this conversation, we go deeper on what that principle means in practice with Martin Casado, general partner at a16z where he leads the firm’s infrastructure practice and invests in advanced AI systems and foundational compute.

Martin joins Jai Ramaswamy and Matt Perault to discuss how decades of technology policy can inform addressing harmful uses of AI, defining marginal risk in AI, the importance of open source for long-term competitiveness, and more.

Topics Covered:
01:55: A brief history of recent debates about how to regulate AI
12:30: Regulating use vs. development: lessons from software and cybersecurity
15:47: An open question in AI policy today: defining marginal risk
18:33: Why social media is often the wrong analogy for AI regulation
20:50: Enforcement tools available for holding bad actors to account
24:11: Balancing many trade-offs in tech policy
27:33: The role open source models play in soft power, the future of AI, and global competitiveness
38:06: Implications of regulatory uncertainty
41:32: Lawmakers want to act; what can they do now to enact effective policy?

Resources:
Follow Matt Perault: https://x.com/MattPerault
Follow Jai Ramaswamy: https://twitter.com/jai_ramaswamy
Follow Martin Casado: https://twitter.com/martin_casado
Subscribe to the a16z AI Policy Brief: https://a16zpolicy.substack.com/

Please note that the content here is for informational purposes only; should NOT be taken as legal, business, tax, or investment advice or be used to evaluate any investment or security; and is not directed at any investors or potential investors in any a16z fund. a16z and its affiliates may maintain investments in the companies discussed. For more details please see a16z.com/disclosures.

This is a public episode. If you would like to discuss this with other subscribers or get access to bonus episodes, visit a16zpolicy.substack.com

Debates in Washington often frame AI governance as a series of false choices: they pit innovation against safety, progress against protection, federal leadership against the rights of states. But at a16z, we believe these are not binaries. In order for America to realize the full promise of artificial intelligence, we must both build great products and protect people from AI-related harms. Congress can and should design a federal AI framework that protects individuals and families, while also safeguarding innovation and competition. This approach will allow startups and entrepreneurs, who we call Little Tech, to power America’s future growth while still addressing real risks.

In this conversation, Jai Ramaswamy, chief legal and policy officer, Collin McCune, head of government affairs, and Matt Perault, head of AI policy at a16z discuss the current moment in AI policy along with a16z's AI policy agenda built on nine pillars that work to keep Americans safe while keeping the U.S. in the lead.

Topics Covered:
00:00: Intro
00:58: Recapping the current moment in AI policy: state proposals, EO, and preemption debates
09:17: Is Congress gridlocked on AI?
12:09: Are safety and innovation at odds
16:35: a16z’s policy agenda and 9-pillar roadmap to federal AI legislation
22:32: Protecting kids from AI-related harms
24:49: US AI leadership, China, and competition
29:04: Cybersecurity and national security risks
34:59: What’s next for federal AI legislation

Resources:
Follow Matt Perault: https://x.com/MattPerault
Follow Collin McCune: https://x.com/Collin_McCune
Follow Jai Ramaswamy: https://www.linkedin.com/in/jai-ramaswamy-85a77675

Stay updated:
Subscribe to the a16z AI Policy Brief: https://a16zpolicy.substack.com/

Please note that the content here is for informational purposes only; should NOT be taken as legal, business, tax, or investment advice or be used to evaluate any investment or security; and is not directed at any investors or potential investors in any a16z fund. a16z and its affiliates may maintain investments in the companies discussed. For more details please see a16z.com/disclosures.

This is a public episode. If you would like to discuss this with other subscribers or get access to bonus episodes, visit a16zpolicy.substack.com

If you squint at today’s AI policy debates, you may see the Telecommunications Act of 1996 in the distance.

In this conversation, Matt Perault, head of AI policy, a16z, sits down with Adam Thierer, resident senior fellow, technology and innovation, R Street Institute, and Blair Levin, policy analyst, New Street Research and non-resident senior associate, Center for Strategic and International Studies, to revisit their first-hand experience tackling a similarly significant moment in tech policy: a small number of incumbents with entrenched market power, a messy patchwork of federal and local rules, and misaligned governing authority. The result then was federal preemption coupled with a comprehensive national framework for telecommunications, all through a bipartisan deal.

Topics Covered:
00:00: The Telecom Act’s “big bargain”
02:05: Competition as the heart of the deal
04:26: Telecom’s regulatory thicket and move to a national framework
07:39: Preemption, ambiguity, and the FCC’s role
11:58: How the Telecom Act got done: politics, persuasion, and public opinion
17:39: Terminating access charges and “regulating on behalf of” the internet
21:13: Federal vs. state authority and lessons for AI
26:09: Leadership, vision, and a new “constitutional moment” for tech policy
34:57: Institutional capacity and the missing expert home for AI
39:55: What a “Telecom Act for AI” might look like

Resources:
Follow Matt Perault: https://x.com/MattPerault
Subscribe to the a16z AI Policy Brief: https://a16zpolicy.substack.com/

Please note that the content here is for informational purposes only; should NOT be taken as legal, business, tax, or investment advice or be used to evaluate any investment or security; and is not directed at any investors or potential investors in any a16z fund. a16z and its affiliates may maintain investments in the companies discussed. For more details please see a16z.com/disclosures.

This is a public episode. If you would like to discuss this with other subscribers or get access to bonus episodes, visit a16zpolicy.substack.com

Lawmakers are largely supportive of helping AI startups and challengers grow and thrive. They understand the need for the United States to compete and win in AI and generally support small businesses and entrepreneurship. Yet numerous state AI proposals, while intended to put safeguards in place for the biggest players, still risk sweeping in the startups at the forefront of AI innovation. The tools lawmakers reach for to carve out Little Tech, including compute and training-cost thresholds, aren’t built for the realities of how AI is made today.

In this conversation, Guido Appenzeller, investing partner, and Matt Perault, head of AI policy at a16z, discuss why thresholds based on either compute power or training costs fail to separate Little Tech from larger developers, and why revenue may be a more effective criterion for establishing what counts as an AI startup.

Topics covered:
01:33: Realities of startup teams building AI models
03:57: Challenges of defining frontier models by compute
06:46: Why competition at the frontier is key to US success
10:45: Practicalities of building and training AI models today
13:24: Why training-cost thresholds fail
16:47: When startups hit $100M in training spend
24:16: Revenue as an alternative metric to focus on use and market impact
28:09: Revenue as a clearer metric
31:48: Implications for startups
33:17: Loopholes to game thresholds
34:56: Closing thoughts

Resources:
Follow Matt Perault: https://x.com/MattPerault
Follow Guido Appenzeller: https://x.com/appenz

Stay updated:
Subscribe to the a16z AI Policy Brief: https://a16zpolicy.substack.com/

Please note that the content here is for informational purposes only; should NOT be taken as legal, business, tax, or investment advice or be used to evaluate any investment or security; and is not directed at any investors or potential investors in any a16z fund. a16z and its affiliates may maintain investments in the companies discussed. For more details please see a16z.com/disclosures.

This is a public episode. If you would like to discuss this with other subscribers or get access to bonus episodes, visit a16zpolicy.substack.com

As lawmakers consider requiring companies to make disclosures about their AI models, such as risk reports, impact assessments, or content warnings, questions arise about whether those mandates could run afoul of the First Amendment.

In part three of our AI Policy Legal Primer, leading appellate lawyers Allon Kedem, Paul Mezzina, and William Jay join Matt Perault, head of AI policy at a16z, to explore how the principles outlined in the First Amendment apply to AI. They discuss recent disclosure laws, the line between constitutional and unconstitutional compelled speech, and emerging questions about whether model developers’ design choices could themselves count as expressive acts protected under the First Amendment.

Stay updated:
Subscribe to the a16z AI Policy Brief on Substack: https://a16zpolicy.substack.com/

This is a public episode. If you would like to discuss this with other subscribers or get access to bonus episodes, visit a16zpolicy.substack.com

The dormant Commerce Clause has been anything but dormant in the last couple of weeks. With Congress and the administration actively debating the proper roles of the federal and state governments in regulating AI, the dormant Commerce Clause has emerged as an important topic of debate.

In part two of our AI Policy Legal Primer, leading appellate lawyers Allon Kedem, Paul Mezzina, and William Jay are back to explain the dormant Commerce Clause and how it intersects with AI policy today. They discuss how courts use principles like extraterritoriality and tests like Pike balancing to weigh challenges, and examine what those frameworks could mean for recently enacted or pending state AI laws, including California’s SB 53, Colorado’s SB 205, and New York’s RAISE Act.

If you missed part one, check out Preemption, Explained.

Stay updated:
Subscribe to the a16z AI Policy Brief on Substack: https://a16zpolicy.substack.com/

This is a public episode. If you would like to discuss this with other subscribers or get access to bonus episodes, visit a16zpolicy.substack.com

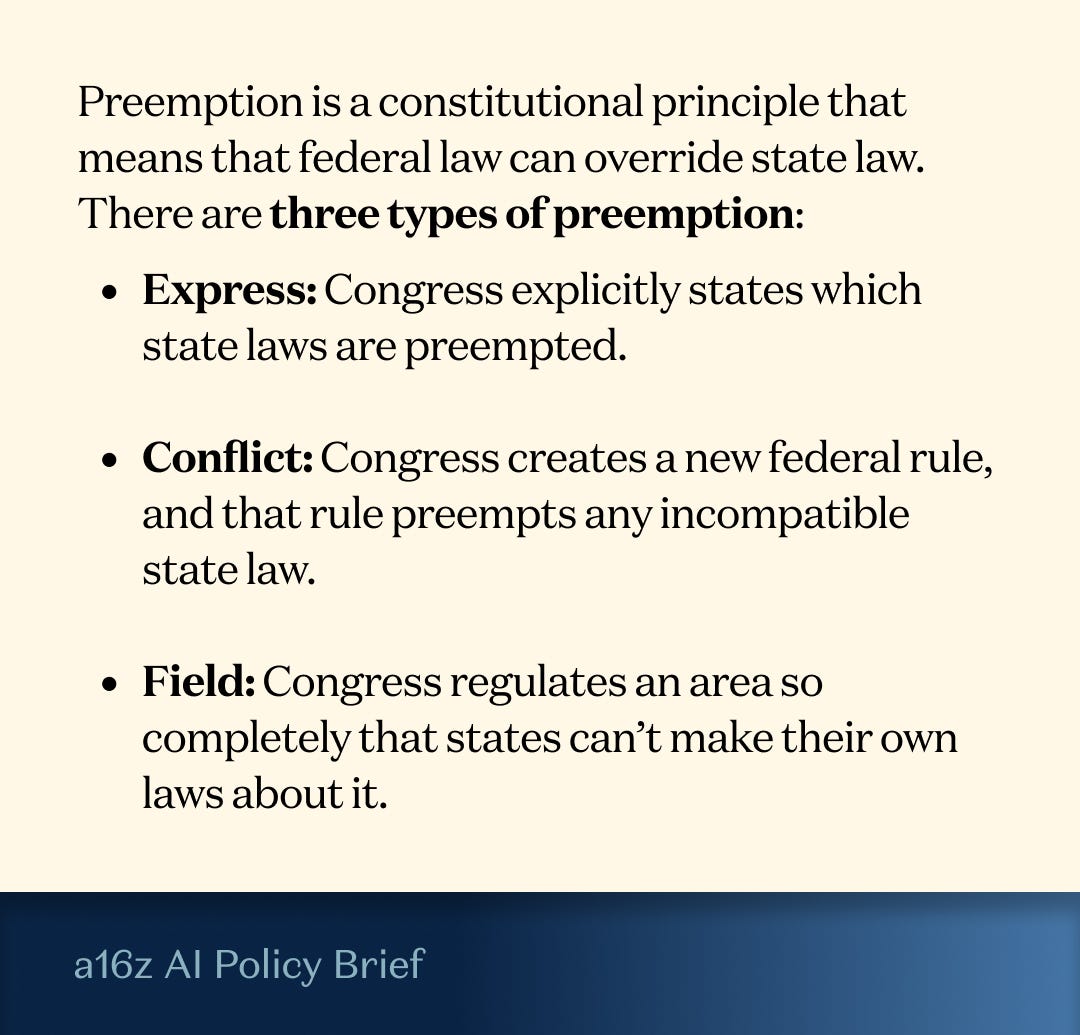

There may be no more important debate in AI policy right now than how power to regulate AI should be divided between the federal and state governments.

We first wrote about the respective roles of Congress and state governments in early 2025, as we saw states throughout the country introducing bills that would regulate how AI models are built. Now, with speculation about potential Congressional action to clarify the role of the federal government in regulating AI, the same questions proliferate: What can Congress regulate? What can states regulate? And where are the constitutional limits on their respective governing powers?

We asked a panel of leading appellate lawyers to explain the state of the law on these questions.

Allon Kedem, partner, Appellate and Supreme Court practice, Arnold & Porter, Paul Mezzina, partner, Appellate, Constitutional and Administrative Law practice, King & Spalding, and William Jay, partner, Appellate and Supreme Court Litigation practice, Goodwin, join Matt Perault, head of AI policy, a16z, to explain how federal preemption, the dormant Commerce Clause, and the First Amendment intersect with AI policy in this moment.

This is part one, focused on preemption. Stay tuned for upcoming conversations on the Commerce Clause and the First Amendment.

Stay updated:
Subscribe to the a16z AI Policy Brief: https://a16zpolicy.substack.com/

Please note that the content here is for informational purposes only; should NOT be taken as legal, business, tax, or investment advice or be used to evaluate any investment or security; and is not directed at any investors or potential investors in any a16z fund. a16z and its affiliates may maintain investments in the companies discussed. For more details please see a16z.com/disclosures.

This is a public episode. If you would like to discuss this with other subscribers or get access to bonus episodes, visit a16zpolicy.substack.com

With states introducing more than 1,000 AI-related bills this year alone, a fundamental question has taken center stage: what are the roles of the federal government and states in regulating AI?

In this episode of the a16z AI Policy Brief, Kevin Frazier, AI Innovation and Law Fellow at the University of Texas School of Law, joins Jai Ramaswamy, chief legal and policy officer, and Matt Perault, head of AI policy at a16z. Together, they unpack how the Constitution divides power between the federal and state governments, what doctrines like the Commerce Clause mean for modern AI governance, and why this balance of power could shape America’s competitiveness in AI for decades to come.

The conversation spans the founding era to the AI frontier, covering constitutional history, current state AI bills, impacts on Little Tech and entrepreneurs, and geopolitical stakes.

If you’ve ever found yourself in an animated dinner-party debate about the Articles of Confederation, this one’s for you.

Topics Covered:
00:00: Why the question of who regulates AI matters as much as how we regulate it
01:10: 1,100+ state AI bills and why lawmakers are rushing to act
04:05: State and federal authority in governing AI
06:35: The limits of state authority
09:27: The historical roots of federal power and the Commerce Clause
18:10: Preemption, the dormant Commerce Clause, and the implications of a patchwork of state AI laws
25:20: Extraterritoriality and how one state’s rules can affect the entire country
33:07: Where the founders got it right
37:12: How thoughtful AI policy can lower costs and improve everyday life
47:42: The global stakes: what fragmentation at home means for US competitiveness

Resources:
Follow Kevin Frazier: https://x.com/KevinTFrazier
Follow Matt Perault: https://x.com/MattPerault
Follow Jai Ramaswamy: https://www.linkedin.com/in/jai-ramaswamy-85a77675

Stay updated:
Subscribe to the a16z AI Policy Brief: https://a16zpolicy.substack.com/

Please note that the content here is for informational purposes only; should NOT be taken as legal, business, tax, or investment advice or be used to evaluate any investment or security; and is not directed at any investors or potential investors in any a16z fund. a16z and its affiliates may maintain investments in the companies discussed. For more details please see a16z.com/disclosures.

This is a public episode. If you would like to discuss this with other subscribers or get access to bonus episodes, visit a16zpolicy.substack.com