LLMs Research Podcast

Author: LLMs Research

© LLMs Research Inc.

10 Episodes

State space models like Mamba promised linear scaling and constant memory. They delivered on efficiency, but researchers kept hitting the same wall: ask Mamba to recall something specific from early in a long context, and performance drops.

Three papers at ICLR 2026 independently attacked this limitation. That convergence tells you how fundamental the problem is.

This podcast breaks down:
- Why Mamba's fixed-size state causes "lossy compression" of context
- How Mixture of Memories (MoM) adds multiple internal memory banks
- How Log-Linear Attention finds a middle ground between SSM and full attention
- Why one paper proves SSMs fundamentally can't solve certain tasks without external tools

The pattern across all three: you can add more state, but you have to pay somewhere. Parameters, mechanism complexity, or system infrastructure. No free lunch.

📄 Papers covered:
- MoM: Linear Sequence Modeling with Mixture-of-Memories https://arxiv.org/abs/2502.13685
- Log-Linear Attention https://openreview.net/forum?id=mOJgZWkXKW
- To Infinity and Beyond: Tool-Use Unlocks Length Generalization in SSMs https://openreview.net/forum?id=sSfep4udCb

📬 Newsletter: https://llmsresearch.substack.com
🐦 Twitter/X: https://x.com/llmsresearch
💻 GitHub: https://github.com/llmsresearch

#Mamba #SSM #StateSpaceModels #ICLR2026 #LLM #MachineLearning #AIResearch #Transformers #DeepLearning

Chapters timestamp
0:00 Mamba's secret weakness
0:42 The promise: linear scaling, constant memory
1:14 The catch: forgetting specific details
1:34 Memory bottleneck explained
1:43 Attention = perfect recall filing cabinet
2:10 SSM = single notepad with fixed pages
2:49 The core tradeoff
2:57 Three solutions to fix it
3:00 Solution 1: Mixture of Memories (MoM)
3:51 Solution 2: Log-Linear Attention
4:48 Solution 3: External tool use
5:49 The "no free lunch" pattern
6:41 What wins for longer contexts?
7:04 Subscribe for more research deep dives

This is a public episode. If you would like to discuss this with other subscribers or get access to bonus episodes, visit llmsresearch.substack.com
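To make the "filing cabinet vs. single notepad" tradeoff concrete, here is a minimal Python sketch. It is not Mamba itself: it assumes a toy scalar recurrence `h_t = decay * h_{t-1} + x_t`, and the function names and the sizes used (`d_model=64`, `d_state=16`) are made up for illustration. It shows two things: an attention cache grows with sequence length while a recurrent state does not, and an early token's imprint on a fixed state shrinks as the sequence gets longer.

```python
def kv_cache_entries(seq_len, d_model):
    """Numbers stored by one attention layer's cache: a key and a value vector per token."""
    return 2 * seq_len * d_model


def recurrent_state_entries(d_state, d_model):
    """Numbers stored by a fixed-size recurrent state; independent of sequence length."""
    return d_state * d_model


def run_decaying_state(inputs, decay):
    """Toy scalar recurrence h_t = decay * h_{t-1} + x_t (an assumption, not Mamba's update)."""
    h = 0.0
    for x in inputs:
        h = decay * h + x
    return h


def first_token_weight(decay, seq_len):
    """Weight with which the first input survives in the final state of the toy recurrence."""
    return decay ** (seq_len - 1)


if __name__ == "__main__":
    for n in (1_000, 100_000):
        print(n, kv_cache_entries(n, 64), recurrent_state_entries(16, 64))
    # The first token's weight after 1,000 steps with a decay of 0.99.
    print(first_token_weight(0.99, 1_000))
```

The cache count scales with `seq_len`, which is what buys attention its exact recall; the recurrent count stays flat, which is why everything the model wants to remember has to be squeezed into the same state.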

The podcast discusses the technical shift in large language models from a standard 512-token context window to modern architectures capable of processing millions of tokens. Initial growth was constrained by the quadratic complexity of self-attention: memory and compute requirements grow with the square of the sequence length. To address this bottleneck, researchers developed sparse attention patterns and hardware-aware algorithms like FlashAttention to reduce memory and computational overhead. Current iterations, such as FlashAttention-3, leverage asynchronous operations on high-performance GPUs to facilitate context lengths exceeding 128,000 tokens.

Changes to positional encodings also proved necessary. Methods such as Rotary Position Embedding (RoPE) and Attention with Linear Biases (ALiBi) allow models to handle longer sequences than those encountered during training. Further scaling into the million-token range required interpolation strategies like NTK-aware scaling and LongRoPE, which adjust how position indices are processed to maintain performance across expanded windows. On a system level, Ring Attention distributes these massive sequences across multiple GPUs by overlapping data communication with computation.

While Transformers remain the standard, alternative architectures like Mamba and RWKV have emerged to provide linear scaling through selective state spaces and recurrent designs. These models avoid the quadratic costs associated with the traditional KV cache. Despite these architectural and system-level gains, benchmarks like RULER and LongBench v2 suggest that effective reasoning over very long sequences remains a significant challenge, even as commercial models reach capacities of 2 million tokens.
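As a rough illustration of why RoPE encodes relative rather than absolute position, the sketch below rotates each pair of vector components by an angle proportional to the token position. It assumes the commonly used frequency schedule `base ** (-2i/d)` with `base=10000`; the helper names `rope` and `dot` are hypothetical, and real implementations operate on batched tensors rather than Python lists.

```python
import math


def rope(vec, pos, base=10000.0):
    """Rotate consecutive pairs of `vec` by position-dependent angles (RoPE-style).

    Assumes an even-length vector and the frequency schedule base ** (-i / d)
    for pair start index i = 0, 2, 4, ...
    """
    d = len(vec)
    out = []
    for i in range(0, d, 2):
        angle = pos * base ** (-i / d)
        c, s = math.cos(angle), math.sin(angle)
        x0, x1 = vec[i], vec[i + 1]
        out.extend([x0 * c - x1 * s, x0 * s + x1 * c])
    return out


def dot(a, b):
    """Plain dot product of two equal-length lists."""
    return sum(x * y for x, y in zip(a, b))


if __name__ == "__main__":
    q = [0.3, -1.2, 0.8, 0.5]
    k = [1.0, 0.4, -0.7, 0.2]
    # Same offset (2) at different absolute positions gives the same score.
    print(dot(rope(q, 5), rope(k, 3)))
    print(dot(rope(q, 12), rope(k, 10)))
```

Because the attention score depends only on the offset between query and key positions, interpolation schemes can rescale positions or frequencies to stretch the usable window; the sketch does not implement NTK-aware scaling or LongRoPE themselves.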

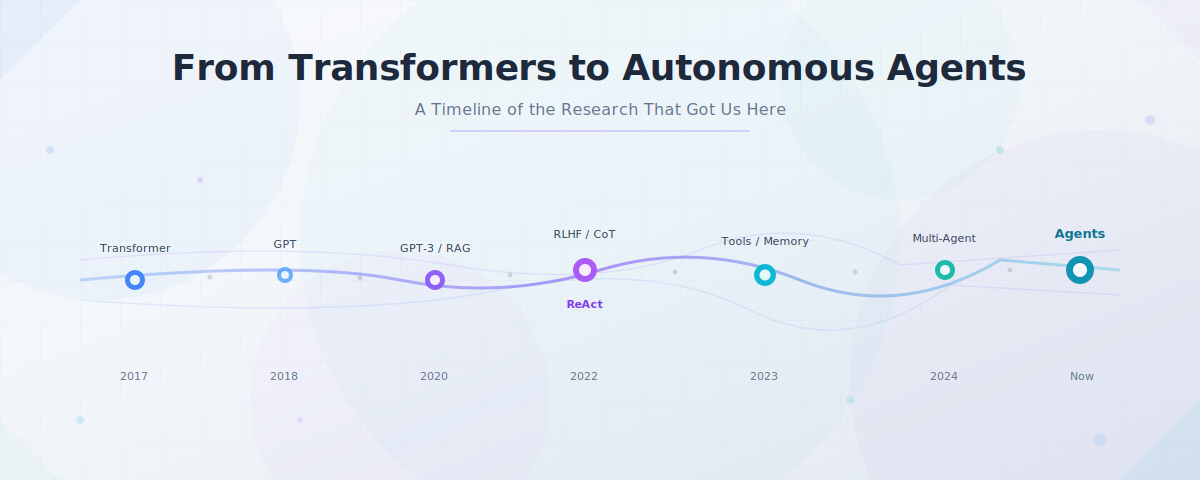

Over the past several years, we have moved from the machine learning era through the large language model era and into what researchers now call the agent era. But we did not arrive here overnight. A series of research contributions, each building on the last, has made agents progressively more capable. This podcast traces that timeline through the papers that shaped today's autonomous systems.

Your 70-Billion-Parameter Model Might Be 40% Wasted

Three papers from February 1–6, 2026 converge on a question the field has been avoiding since 2016: what if most transformer layers aren't doing compositional reasoning at all, but just averaging noise?

This video traces a decade of evidence, from Veit et al.'s original ensemble observation in ResNets through ShortGPT's layer pruning results and October 2025's formal proof, to three new papers that quantify the consequences. Inverse depth scaling shows loss improves as D to the negative 0.30, worse than one-over-n. TinyLoRA unlocks 91% GSM8K accuracy by training just 13 parameters with RL. And the attention sink turns out to be a native Mixture-of-Experts router hiding in plain sight.

The picture that emerges: modern LLMs are simultaneously too deep (layers averaging rather than composing) and too wide (attention heads collapsing into dormancy). Architecturally large, functionally much smaller.

This is a video adaptation of our LLMs Research newsletter issue covering the same papers.

Papers referenced (in order of appearance):
- Residual Networks Behave Like Ensembles of Relatively Shallow Networks (Veit, Wilber, Belongie, 2016) https://arxiv.org/abs/1605.06431
- Deep Networks with Stochastic Depth (Huang et al., 2016) https://arxiv.org/abs/1603.09382
- ALBERT: A Lite BERT for Self-supervised Learning (Lan et al., 2020) https://arxiv.org/abs/1909.11942
- ShortGPT: Layers in Large Language Models are More Redundant Than You Expect (Men et al., 2024) https://arxiv.org/abs/2403.03853
- Your Transformer is Secretly Linear (Razzhigaev et al., 2024) https://arxiv.org/abs/2405.12250
- On Residual Network Depth (Dherin, Munn, 2025) https://arxiv.org/abs/2510.03470
- Inverse Depth Scaling From Most Layers Being Similar (Liu, Kangaslahti, Liu, Gore, 2026) https://arxiv.org/abs/2602.05970
- Learning to Reason in 13 Parameters / TinyLoRA (Morris, Mireshghallah, Ibrahim, Mahloujifar, 2026) https://arxiv.org/abs/2602.04118
- Attention Sink Forges Native MoE in Attention Layers (Fu, Zeng, Wang, Li, 2026) https://arxiv.org/abs/2602.01203

Timestamps:
0:00 Why this should bother you
0:41 Veit 2016: ResNets as ensembles
2:14 Stochastic depth, ALBERT, and the quiet accumulation
3:08 ShortGPT, secretly linear transformers, and the formal proof
4:22 February 2026: this week's answer
4:38 Inverse depth scaling: D to the negative 0.30
5:57 Where does capability actually live?
6:23 TinyLoRA: 13 parameters, 91% accuracy
8:35 Width: attention sinks as native MoE
10:58 What this means for architecture, fine-tuning, and inference
11:49 The decade-long arc

Newsletter: https://llmsresearch.substack.com
GitHub: https://github.com/llmsresearch
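To get a feel for what "D to the negative 0.30" means in practice, here is a small Python sketch of the arithmetic. It assumes the depth-dependent part of the loss is proportional to `D ** -0.30` and ignores every other term; that simplification and the function names are ours, not the paper's.

```python
def depth_term_ratio(depth_multiplier, exponent):
    """Factor by which a loss term proportional to D ** -exponent changes
    when depth D is multiplied by `depth_multiplier`."""
    return depth_multiplier ** (-exponent)


def multiplier_to_halve(exponent):
    """Depth multiplier needed to cut a D ** -exponent term in half."""
    return 2 ** (1 / exponent)


if __name__ == "__main__":
    # Doubling depth under the reported exponent vs. an idealised 1/D law.
    print(depth_term_ratio(2, 0.30))
    print(depth_term_ratio(2, 1.0))
    # How much deeper the model must be to halve the depth-dependent term.
    print(multiplier_to_halve(0.30))
    print(multiplier_to_halve(1.0))
```

Under this assumption, doubling depth shaves only about 19% off the depth-dependent term, where a 1/D law would halve it, and halving the term takes roughly ten times as many layers.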

Fixing Reasoning from Three Directions at Once

LLMs Research Podcast | Episode: Feb 1–6, 2026

DeepSeek-R1 made RL the default approach for reasoning. This episode covers the 30 papers from the first week of February that are debugging what that approach got wrong, across training geometry, pipeline design, reward signals, memory architecture, and inference efficiency.

Timestamps

[00:00] Opening and framing - The post-DeepSeek hangover: why the field has shifted from scaling RL to fixing it. Three camps emerge: geometricians, pipeline engineers, and architects.
[01:11] MRPO and the bias manifold - Standard RL may not create new reasoning at all, just surface existing capabilities within a constrained subspace. Spectral Orthogonal Exploration forces the model into orthogonal directions. A 4B model beating a 32B on AIME'24.
[04:15] ReMiT and the training flywheel - Breaking the linear pretraining-to-RL pipeline. The RL-tuned model feeds signal backward into pretraining. The finding that logical connectors ("therefore," "because") carry the reasoning weight.
[06:03] DISPO and training stability - The four-regime framework for gradient control. When to unclip updates, when to clamp hard. Surgical control over the confidence-correctness matrix to prevent catastrophic collapse.
[07:20] TrajFusion and learning from mistakes - Interleaving wrong reasoning paths with reflection prompts and correct paths. Turning discarded data into structured supervision.
[08:05] Lightning round: Grad2Reward and CPMöbius - Dense rewards from a single backward pass through a judge model. Self-play for math training without external data.
[08:46] InfMem and active memory - The shift from passive context windows to active evidence management. PreThink-Retrieve-Write protocol: the model pauses, checks if it has enough information, retrieves if not, and stops early when it does. 4x inference speedup.
[09:56] ROSA-Tuning - CPU-based suffix automaton for context retrieval, freeing GPU for reasoning. 1980s data structures solving 2026 problems.
[10:36] OVQ Attention - Online vector quantization for linear-time attention. Removes the quadratic memory ceiling for long context.
[11:05] Closing debate: which paper survives six months? - One host bets on MRPO (the geometric view challenges scale-is-all-you-need). The other bets on ReMiT (the flywheel efficiency is too obvious to ignore once you see it).

Papers Discussed

- MRPO: Manifold Reshaping Policy Optimization
- ReMiT: RL-Guided Mid-Training
- DISPO
- TrajFusion
- Grad2Reward
- CPMöbius
- InfMem
- ROSA-Tuning
- OVQ Attention

Key Takeaways

- Standard RL might be trapping models in a low-rank subspace rather than expanding their reasoning capacity. The bias manifold concept from MRPO reframes the entire alignment-vs-capability debate as a geometric problem.
- The strict separation between pretraining and post-training is looking increasingly artificial. ReMiT's finding that logical connectors carry disproportionate reasoning weight suggests the base model's curriculum should be informed by what the tuned model struggles with.
- Passive context windows fail at multi-hop reasoning because they treat all tokens equally. Active memory management (InfMem) and hardware-level retrieval offloading (ROSA-Tuning) are converging on the same insight: models need to manage their own cognitive load.
- Most RL training fixes this week assume GRPO-style optimization. If that changes, these contributions become fragile. The architectural work on memory and attention solves structural problems that persist regardless of the training recipe.
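The pause-check-retrieve-stop loop described for InfMem can be sketched as a simple control flow. This is only an illustration of the idea as summarised above, not the paper's implementation: the function `read_with_active_memory` and the `has_enough` and `is_relevant` callbacks are hypothetical stand-ins for decisions the model itself would make.

```python
def read_with_active_memory(question, chunks, has_enough, is_relevant):
    """Scan chunks in order, keeping only relevant evidence and stopping early.

    has_enough(question, memory) -> bool   # "PreThink": can we already answer?
    is_relevant(question, chunk) -> bool   # "Retrieve": is this chunk evidence?
    Returns the written memory and the number of chunks actually read.
    """
    memory = []
    chunks_read = 0
    for chunk in chunks:
        if has_enough(question, memory):
            break  # stop early instead of consuming the rest of the context
        chunks_read += 1
        if is_relevant(question, chunk):
            memory.append(chunk)  # "Write": store the evidence
    return memory, chunks_read


if __name__ == "__main__":
    needed = {"launch year", "launch site"}  # toy multi-hop question

    def is_relevant(_question, chunk):
        return any(key in chunk for key in needed)

    def has_enough(_question, memory):
        return all(any(key in m for m in memory) for key in needed)

    context = [
        "intro text",
        "launch year: 1977",
        "filler",
        "launch site: Cape Canaveral",
        "more filler",
        "even more filler",
    ]
    print(read_with_active_memory("when and where?", context, has_enough, is_relevant))
```

In the toy run the loop stops once both facts are stored, so the trailing filler chunks are never read; any inference speedup in a real system would come from skipping work in this way.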

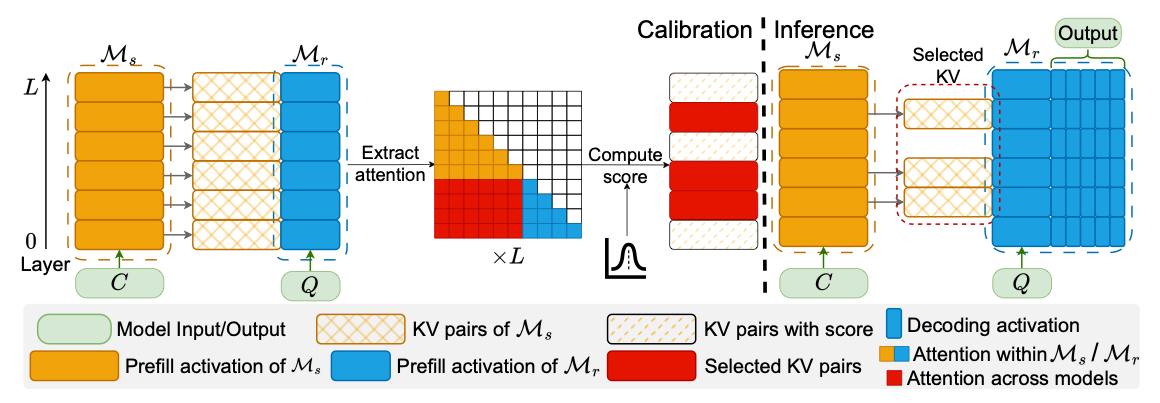

Episode Title: What ICLR 2026 Taught Us About Multi-Agent Failures

Episode Summary: We scanned ICLR 2026 accepted papers and found 14 that address real problems when building multi-agent systems: slow pipelines, expensive token bills, cascading errors, brittle topologies, and opaque agent coordination. This episode walks through five production problems and the research that provides concrete solutions.

Timestamps

| Time | Section |
|---|---|
| 00:00 | Introduction: The gap between demos and production |
| 01:29 | Problem 1: Why is my agent system so slow? |
| 04:44 | Problem 2: My token bills are out of control |
| 07:30 | Problem 3: One agent hallucinates, the whole pipeline fails |
| 10:45 | Problem 4: My agent graph breaks when I swap a model |
| 12:53 | Problem 5: I have no idea what my agents are saying to each other |
| 15:39 | Recap: The practitioner's toolkit |
| 16:33 | What's still missing: Long-term stability and adversarial robustness |
| 17:02 | Closing |

Papers Discussed

Problem 1: Latency
- Speculative Actions - Uses faster draft models to predict likely actions and execute API calls in parallel. Up to 30% speedup across web search and OS control tasks.
- Graph-of-Agents - Uses model cards to filter agents by relevance. Beat a 6-agent baseline using only 3 selected agents.

Problem 2: Token Costs
- KVComm - Shares KV cache directly instead of translating to English. 30% of KV layers achieves near-full performance.
- MEM1 - Uses RL-based memory consolidation to maintain constant context size. 3.7x memory reduction, 3.5x performance improvement.

Problem 3: Error Cascades
- When Does Divide and Conquer Work - Noise decomposition framework identifying task noise, model noise (superlinear growth), and aggregator noise.
- DoVer - Intervention-driven debugging that edits message history to validate failure hypotheses. Flips 28% of failures to successes.

Problem 4: Brittle Topologies
- CARD - Conditional graph generation that adapts topology based on environmental signals.
- MAS² - Generator-implementer-rectifier team that self-architects agent structures. 19.6% performance gain with cross-backbone generalization.
- Stochastic Self-Organization - Decentralized approach using Shapley value approximations. Hierarchy emerges from competence without explicit design.

Problem 5: Observability
- GLC - Autoencoder creates compressed symbols aligned with human concepts via contrastive learning. Speed of symbols, auditability of words.
- Emergent Coordination - Information-theoretic metrics distinguishing real collaboration from "spurious temporal coupling." Key finding: you must prompt for theory of mind.
- ROTE / Modeling Others' Minds as Code - Models agent behavior as executable scripts. 50% improvement in prediction accuracy.

Key Concepts Explained

| Term | Explanation |
|---|---|
| Speculative execution | Borrowed from CPU architecture. Guess the next action, execute in parallel, discard if wrong. |
| KV cache | The model's working memory of conversation context, stored as mathematical vectors. |
| Model noise | Confusion that grows with context size. Grows superlinearly with input length. |
| Shapley values | Game theory concept for assigning credit to players in cooperative games. |
| Spurious temporal coupling | Agents appearing to collaborate but actually solving problems independently at the same time. |
| Contrastive learning | Pushing similar things closer and different things further apart in vector space. |

Key Quotes

- "English is a terrible data transfer protocol for machines. We're taking clean mathematical concepts, translating them into paragraphs, and then asking another machine to turn them back into math."
- "The hierarchy emerges from competence. You don't design it."
- "Did they solve it together, or did they just all happen to solve it at the same time by themselves?"
- "Treating minds as software is a pretty effective way to predict what software will do."

Links

Newsletter: llmsresearch.substack.com
Twitter/X: @llmsresearch
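For listeners who want to see how Shapley values assign credit, here is a minimal Python sketch that computes them exactly by averaging each agent's marginal contribution over every arrival order. The three agent names and the toy payoff rule are invented for illustration, and exact enumeration only works for a handful of agents, which is presumably why the Stochastic Self-Organization paper relies on approximations.

```python
from itertools import permutations


def shapley_values(players, value):
    """Exact Shapley values: average marginal contribution over all orderings.

    `value` maps a frozenset of players to the payoff that coalition achieves.
    """
    totals = {p: 0.0 for p in players}
    orderings = list(permutations(players))
    for order in orderings:
        coalition = frozenset()
        for player in order:
            joined = coalition | {player}
            totals[player] += value(joined) - value(coalition)
            coalition = joined
    return {p: total / len(orderings) for p, total in totals.items()}


def toy_team_value(coalition):
    """Hypothetical payoff: the task succeeds only if the solver is present
    together with at least one of the two checkers."""
    has_checker = "checker_a" in coalition or "checker_b" in coalition
    return 1.0 if "solver" in coalition and has_checker else 0.0


if __name__ == "__main__":
    agents = ["solver", "checker_a", "checker_b"]
    print(shapley_values(agents, toy_team_value))
```

In this toy game the solver earns two thirds of the credit and each checker one sixth: the checkers are interchangeable, so they split the remainder, and the shares sum to the full-team payoff of 1.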

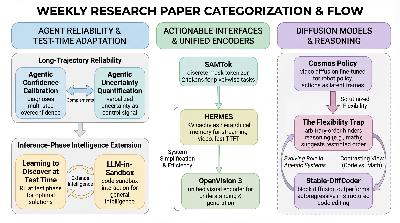

Jan 17–23, 2026: The Rise of the Action Layer

How multimodal AI is shifting from passive perception to active control

The current landscape of research reflects a shift toward Multimodal Agentic Intelligence, where multimodal capabilities are no longer treated as simple perception but as actionable interfaces for control and interaction. This trend involves the integration of visual representations into next-token prediction frameworks, the adaptation of diffusion models for robotic control, and a focus on active uncertainty signals to improve agent reliability.

LLM research is shifting from simply increasing model size to making existing capabilities practical to deploy. This week’s papers highlight four emerging themes: Reasoning & Agents, Generation & Synthesis, LLM Decisions, and Structured Episodic Memory. The overarching goal is to solve the real-world bottlenecks of agentic systems, such as high inference costs, trajectory instability, and the reliability of self-reported confidence.

Key takeaways from today's podcast:
- Robust Reasoning: The Holonomic Network achieves perfect-fidelity extrapolation 100x beyond training lengths, demonstrating a new universality class for logical reasoning with topological stability.
- Efficient Inference: RelayLLM cuts inference cost by 98.2% by invoking the LLM for only 1.07% of tokens, while GlimpRouter reduces latency by 25.9% and boosts accuracy by 10.7% via entropy-guided routing.
- Token-level Collaboration: FusionRoute outperforms existing token-level and sequence-level collaboration methods, robustly merging expert LLM outputs with complementary logits.
- Evaluation Reliability: Evaluative fingerprints reveal that LLM judges disagree strongly yet consistently, with classifiers identifying judges at up to 89.9% accuracy, challenging synthetic averaging.
- Alignment & Steering: SemPA improves sentence embeddings without hurting generation, and compositional steering tokens enable zero-shot control over multiple LLM behaviours.
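As a generic illustration of entropy-guided routing, the sketch below sends a token to a larger model only when a small model's next-token distribution is uncertain. This is not GlimpRouter's or RelayLLM's actual algorithm: the 1-bit threshold, the function names, and the idea of routing on a single distribution are all assumptions made for the example.

```python
import math


def entropy_bits(probs):
    """Shannon entropy, in bits, of a probability distribution."""
    return -sum(p * math.log2(p) for p in probs if p > 0)


def route(probs, threshold_bits=1.0):
    """Return "large" when the small model looks uncertain, else "small".

    `probs` is the small model's next-token distribution; the threshold is an
    illustrative value, not one taken from any paper.
    """
    return "large" if entropy_bits(probs) > threshold_bits else "small"


if __name__ == "__main__":
    confident = [0.97, 0.01, 0.01, 0.01]
    uncertain = [0.25, 0.25, 0.25, 0.25]
    print(route(confident), entropy_bits(confident))
    print(route(uncertain), entropy_bits(uncertain))
```

A peaked distribution stays with the small model and a flat one escalates, so the expensive model only pays for the tokens where the cheap one is genuinely unsure.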

* Bayesian & Cognitive Advances: Transformers achieve ultra-precise Bayesian inference with 10⁻³ to 10⁻⁴ bit accuracy, while CREST boosts reasoning accuracy by 17.5% and cuts token usage by 37.6%.
* Model Efficiency & Scaling: TG reduces data needs by up to 8% and parameters by 42% compared to GPT-2, and Recursive Language Models handle 100x longer inputs at similar or lower inference costs.
* State-of-the-Art Performance: Youtu-LLM sets a new bar for sub-2B parameter models with 128k context, DLCM improves zero-shot benchmarks by +2.69%, and ADOPT outperforms all prior prompt optimization methods.
* Benchmark Breakthroughs: Encyclo-K’s top models hit 62.07% accuracy on complex knowledge queries, while Youtu-Agent accelerates RL training by 40% and scores over 71% on WebWalkerQA and GAIA benchmarks.
* Novel Insights & Safety: Diffusion Language Models match optimal step complexity in chain-of-thought sampling, and safety analysis reveals a 9.2x disparity between past- and future-tense prompt safety rates.