The AI Governance Brief

Author: Keith Hill

© 2026 Keith Hill

Description

Daily analysis of AI liability, regulatory enforcement, and governance strategy for the C-Suite. Hosted by Shelton Hill, AI Governance & Litigation Preparedness Consultant. We bridge the gap between technical models and legal defense.

27 Episodes

Seventy-five percent of HR leaders report that managers are overwhelmed and not equipped to lead change. But before you dismiss this as a middle management problem, consider: by the time information reaches the CEO, it has been filtered, softened, and "customised to cater to superiors' expectations" at every level. Researchers call it "interpreting upwards."
You're not leading the organization you think you're leading. You're leading the organization people want you to believe exists. And that organization is a fiction.
In This Episode:
The CEO Bubble Is Real
Gartner 2025: 75% of HR leaders say managers are overwhelmed and unequipped to lead change
CEIBS research: Information is "interpreted upwards" at each level—filtered, softened, divorced from ground truth
66% of employees hide aspects of themselves from senior leaders
80% of C-suite executives "cover" with almost everyone around them
You Cannot Move an Organization You Don't Understand
The org chart is a legal fiction—necessary for compliance, useless for understanding how work gets done
The 80/20 reality: 20% of people drive 80% of influence—and they're not always the people with titles
With just 20 influential employees identified through organizational network analysis (ONA), companies can reach 70% of the entire organization
The Seven Types of Informal Power
Expertise-Based Power (technical knowledge, organizational memory)
Reputational Power (track record, reliability)
Relational Power (access to key people, social capital)
Cultural Gatekeeping (control over "how things are done here")
Information Brokerage (bridging disconnected groups)
Resource Control (informal control over budgets, tools, access)
Positional Proximity (closeness to decision-makers)
Network Position Metrics That Matter
Degree Centrality: Direct connections—ability to spread information or resistance quickly
Betweenness Centrality: Bridge between disconnected groups—the brokers with cross-silo perspective
Eigenvector Centrality: Connected to other highly connected people—systemic influence
Why Your AI Governance Initiative Will Fail
You'll launch through formal channels targeting formal authority
You'll miss the informal systems that actually determine what people do
Predictable arc: announcement → compliance → erosion → irrelevance
Wells Fargo, Boeing: Executives were the last to know about problems employees understood clearly
Your Seven-Day Action Plan:
Days 1-3: Map one network—ask 15 people across levels: "When you need to get something done outside the normal process, who do you go to?"
Days 4-5: Schedule three skip-level conversations two to three levels down
Days 6-7: Identify one gap between the organization you thought you had and the organization you actually have
Ready to see your actual organization?
Understanding informal power structures isn't optional for AI governance success. It's the foundation everything else depends on.
organizational network analysis, informal power structures, executive blindness, AI governance failure, organizational psychology, skip-level meetings, change management, CEO bubble, interpreting upwards, informal influencers, psychological safety, organizational intelligence
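The three network position metrics can be computed from the answers collected in the Days 1-3 survey. The sketch below is a minimal, dependency-free Python illustration. It assumes each answer is recorded as a respondent mapped to the list of people they go to, and it treats every answer as an undirected tie. The function names and data shape are hypothetical. Betweenness uses Brandes' algorithm, and eigenvector centrality uses power iteration.

```python
from collections import deque


def build_graph(responses):
    """Turn survey answers {respondent: [people they go to]} into an undirected adjacency map."""
    graph = {}
    for person, contacts in responses.items():
        graph.setdefault(person, set())
        for contact in contacts:
            if contact != person:
                graph[person].add(contact)
                graph.setdefault(contact, set()).add(person)
    return graph


def degree_centrality(graph):
    """Share of all other people each person is directly connected to."""
    n = len(graph)
    if n < 2:
        return {v: 0.0 for v in graph}
    return {v: len(nbrs) / (n - 1) for v, nbrs in graph.items()}


def betweenness_centrality(graph):
    """Brandes' algorithm (unweighted, undirected, unnormalized): how often a
    person sits on the shortest paths between two other people."""
    scores = dict.fromkeys(graph, 0.0)
    for source in graph:
        order = []                          # vertices in non-decreasing distance from source
        preds = {v: [] for v in graph}      # shortest-path predecessors
        sigma = dict.fromkeys(graph, 0)     # number of shortest paths from source
        dist = dict.fromkeys(graph, -1)
        sigma[source], dist[source] = 1, 0
        queue = deque([source])
        while queue:
            v = queue.popleft()
            order.append(v)
            for w in graph[v]:
                if dist[w] < 0:
                    dist[w] = dist[v] + 1
                    queue.append(w)
                if dist[w] == dist[v] + 1:
                    sigma[w] += sigma[v]
                    preds[w].append(v)
        delta = dict.fromkeys(graph, 0.0)
        for w in reversed(order):           # accumulate dependencies back toward source
            for v in preds[w]:
                delta[v] += sigma[v] / sigma[w] * (1 + delta[w])
            if w != source:
                scores[w] += delta[w]
    return {v: s / 2 for v, s in scores.items()}  # each pair was counted from both ends


def eigenvector_centrality(graph, max_iter=200, tol=1e-10):
    """Power iteration on (A + I): same leading eigenvector as A, but it also
    converges on bipartite graphs such as trees."""
    if not graph:
        return {}
    x = dict.fromkeys(graph, 1.0)
    for _ in range(max_iter):
        nxt = {v: x[v] + sum(x[w] for w in graph[v]) for v in graph}
        norm = sum(val * val for val in nxt.values()) ** 0.5 or 1.0
        nxt = {v: val / norm for v, val in nxt.items()}
        if max(abs(nxt[v] - x[v]) for v in graph) < tol:
            return nxt
        x = nxt
    return x
```

To use it, feed the fifteen survey answers into `build_graph` and sort people by each score. This gives three candidate lists of informal influencers. NetworkX offers the same measures (`degree_centrality`, `betweenness_centrality`, `eigenvector_centrality`) with normalization options. The hand-rolled versions here only show what each score counts.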

Six months ago, I worked with a healthcare technology company that had everything CRA compliance requires on paper: executive sponsorship confirmed, steering committee formed, product inventory complete, SBOM tools selected, documentation templates created. Six months of planning. Six months of meetings. Six months of preparing to prepare.
When I asked how many products had achieved conformity-ready status, the answer was zero.
They had mistaken planning for progress. And September 2026 was now six months closer.
In This Episode:
Why Knowledge Isn't the Barrier—Execution Is
CRA requires simultaneous changes across Engineering, Product, Security, Legal, Quality, and Documentation
Each function has competing priorities and limited capacity
Without structured change management, the combined demands overwhelm organizational capacity and implementation stalls
The Three-Phase Implementation Roadmap
Phase One (Now → Early 2026): Governance, inventory, SBOM infrastructure, documentation systems
Phase Two (Mid-2026 → September 2026): PSIRT operationalization, vulnerability reporting workflows, 24-hour response verification
Phase Three (Late 2026 → December 2027): Complete documentation, conformity assessment, EU Declaration preparation
Quick Wins That Build Momentum
Week 1: Executive sponsor announcement
Week 2: Single business unit inventory
Week 3: First compliant SBOM
Week 4: Pilot product risk assessment
Week 6: Control mapping to existing frameworks
Week 8: Complete documentation package for pilot product
Week 12: Tabletop vulnerability exercise
Overcoming the Five Resistance Patterns
"We don't have time" → Explicit deprioritization decisions
"This isn't my responsibility" → RACI matrix clarity
"We already do this" → Evidence-based gap analysis
"The deadline is far away" → Phase gate accountability
"Let's wait for regulatory clarity" → Risk-based implementation
The Cost of Delay (Quantified)
20 months remaining allows phased implementation
14 months remaining requires 30% faster implementation
8 months remaining requires 2.5x resource multiplication
Notified body calendars are filling NOW
Talent competition is intensifying
From Project to Operational Discipline
December 2027 isn't the finish line—it's the starting line
SBOM generation must become permanent pipeline capability
Vulnerability monitoring must become continuous
Documentation must be maintained as products evolve
Conformity must be reassessed when products change materially
Your Fourteen-Day Action Plan:
Days 1-3: Formalize executive commitment with documented engagement cadence
Days 4-6: Identify specific individuals for CRA work with time allocation
Days 7-9: Select three quick wins achievable in 90 days with owners and dates
Days 10-12: Define Phase One milestones with specific completion dates
Days 13-14: Prepare and distribute program kickoff communication
Deliverables:
Documented executive commitment with engagement cadence
Named resource allocation with sponsor approval
Selected quick wins with owners and dates
Phase One milestone schedule
Program kickoff communication
Ready to convert knowledge into action?
The First Witness Stress Test reveals where your organization stands today—and builds the implementation roadmap that converts planning into progress. Stop preparing to prepare. Start executing.
CRA implementation, CRA change management, compliance program execution, CRA roadmap, September 2026 compliance, CRA quick wins, compliance momentum, CRA phase gates, regulatory implementation, CRA operational discipline, compliance transformation, CRA program management
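The cost-of-delay figures fit a simple model in which the phased plan needs 20 months at the current pace. Under that model, the required pace is 20 divided by the months remaining. Both the model and the assumption that output scales linearly with added resources belong to this sketch, not to the episode. Read this way, 14 months is a 30% cut in calendar time, which needs roughly 1.43x the pace. Eight months needs 2.5x.

```python
def compression(baseline_months: float, remaining_months: float) -> dict:
    """Assumes a fixed body of work that takes `baseline_months` at today's pace,
    and that throughput scales linearly with resources (an optimistic assumption)."""
    if remaining_months <= 0:
        raise ValueError("no time remaining")
    return {
        # share of the planned calendar time that has been lost
        "schedule_cut": 1 - remaining_months / baseline_months,
        # pace required relative to the baseline plan
        "throughput_multiplier": baseline_months / remaining_months,
    }


for months in (20, 14, 8):
    r = compression(20, months)
    print(f"{months:>2} months left: schedule cut {r['schedule_cut']:.0%}, "
          f"pace x{r['throughput_multiplier']:.2f}")
# 20 months left: schedule cut 0%, pace x1.00
# 14 months left: schedule cut 30%, pace x1.43
#  8 months left: schedule cut 60%, pace x2.50
```

Linear scaling is the optimistic case. People added late to a compressed program usually cost time before they save any.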

A healthcare technology CEO told me last quarter that she wasn't worried about CRA because her products were medical devices regulated under MDR. She was half right. Her Class IIa infusion management system is indeed exempt from CRA product requirements. But the cloud platform that aggregates patient data from those devices? Not exempt. The mobile application clinicians use to monitor alerts? Not exempt. The integration APIs that connect to hospital EHR systems? Not exempt.
Her MDR exemption protected one product. Her ecosystem has seventeen products in CRA scope that nobody was tracking.
In This Episode:
Healthcare: Why Your MDR Exemption Is Narrower Than You Think
MDR exempts medical devices with medical purpose—not the digital ecosystem surrounding them
Cloud platforms, clinician dashboards, mobile alert apps, integration APIs: likely in CRA scope
The proposed MDR revision (COM(2025)1023): enhanced cybersecurity requirements coming for certified devices
Radio Equipment Directive (RED) overlay for WiFi/Bluetooth-enabled products
Finance: Why DORA Doesn't Satisfy CRA
DORA is entity-level regulation (your organization's ICT risk management)
CRA is product-level regulation (products placed on the market)
Your mobile banking app needs DORA compliance AND CRA compliance—separately
Financial industry exemption requests have not prevailed
The Silo Problem in Both Sectors
Healthcare: MDR teams lack DevSecOps velocity; IT Security lacks regulatory documentation expertise
Finance: DORA teams don't address product-level compliance; product teams operate outside regulatory structure
Result: competent functional performance producing collective compliance failure
The Integration Opportunity
ISO 27001 implementations provide ~60% CRA requirement coverage
Healthcare: Extend MDR QMS to cover CRA requirements
Finance: Map DORA ICT controls to CRA essential requirements
Organizations aren't starting from zero—they're closing specific gaps from established foundations
Sector-Specific Implementation Paths
Healthcare: Ecosystem inventory → QMS extension → Notified body harmonization → RED overlay
Finance: Product-vs-entity analysis → DORA-CRA mapping → Evidence integration → Dual reporting
Your Fourteen-Day Action Plan:
Days 1-3: Exemption analysis with documented regulatory rationale
Days 4-7: Existing framework inventory (MDR QMS, DORA ICT, ISO 27001, NIST CSF)
Days 8-11: Control mapping—CRA requirements vs. existing controls
Days 12-13: Gap prioritization by examination risk and implementation effort
Day 14: Integration strategy documentation for executive approval
Deliverables:
Exemption analysis with documented rationale
Existing framework inventory
Control mapping showing CRA coverage percentage
Gap prioritization with preliminary roadmap
Ready to map your regulatory overlaps?
The First Witness Stress Test includes sector-specific analysis—mapping your existing MDR, DORA, or ISO 27001 controls against CRA requirements to reveal how much coverage you already have and where genuine gaps remain. Stop duplicating compliance effort. Start integrating it.
CRA MDR exemption, healthcare CRA compliance, financial services CRA, DORA CRA overlap, medical device regulation cybersecurity, CRA ISO 27001 mapping, integrated compliance framework, CRA healthcare ecosystem, fintech CRA requirements, connected medical devices, regulatory integration, CRA control mapping
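A coverage figure such as the ~60% attributed to ISO 27001 comes out of a control mapping like the Days 8-11 deliverable. Below is a minimal sketch of that calculation. It keys requirements by their Annex I position: Part I point (2)(a) to (m), then Part II points (1) to (8). Only requirements mapped as "full" count as covered. The control IDs and the example pairings are hypothetical placeholders, not an authoritative mapping.

```python
from string import ascii_lowercase

# Annex I slots: Part I point (2)(a)-(m) = 13 product requirements,
# Part II points (1)-(8) = 8 vulnerability handling requirements.
REQUIREMENTS = [f"I.2({c})" for c in ascii_lowercase[:13]] + [f"II.{n}" for n in range(1, 9)]


def coverage_report(mapping):
    """mapping: requirement id -> (existing control id, "full" or "partial").
    Only "full" mappings count toward the coverage percentage."""
    unknown = set(mapping) - set(REQUIREMENTS)
    if unknown:  # catch typos instead of silently ignoring them
        raise ValueError(f"unknown requirement ids: {sorted(unknown)}")
    full = [r for r in REQUIREMENTS if r in mapping and mapping[r][1] == "full"]
    partial = [r for r in REQUIREMENTS if r in mapping and mapping[r][1] == "partial"]
    gaps = [r for r in REQUIREMENTS if r not in mapping]
    return {
        "coverage_pct": round(100 * len(full) / len(REQUIREMENTS), 1),
        "partial": partial,
        "gaps": gaps,
    }


# Hypothetical mapping of existing controls (e.g. from an ISO 27001 or DORA ICT register).
example = {
    "I.2(a)": ("CTRL-VULN-01", "full"),
    "I.2(d)": ("CTRL-ACCESS-04", "full"),
    "II.1": ("CTRL-ASSET-02", "partial"),
}
report = coverage_report(example)
print(report["coverage_pct"], report["partial"], len(report["gaps"]))  # 9.5 ['II.1'] 18
```

Keeping "partial" separate from "full" stops the headline percentage from overstating readiness. Partial matches are where the Days 12-13 gap prioritization usually starts.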

I recently facilitated a CRA readiness meeting with a mid-size medical technology company. Present: the CISO, the VP of Engineering, the Chief Product Officer, the General Counsel, and the VP of Quality Assurance. I asked a simple question: "Who owns CRA compliance for your flagship monitoring platform?" Forty-five seconds of silence. Then the CISO said, "I assumed Product owned it." The CPO said, "We thought it was a security matter." The VP of Engineering said, "Legal never told us it was our responsibility." The General Counsel said, "We've been waiting for someone to tell us what Legal's role should be."
That platform ships to thirty-two EU countries. Nobody owns its compliance.
In This Episode:
The Accountability Diffusion Problem
CRA compliance touches eight functions—when everyone owns it, no one owns it
Each function optimizes for its own objectives; no function optimizes for CRA specifically
Competent functional performance producing collective compliance failure
The Four Accountability Gaps
Product lifecycle ownership discontinuity: who owns the product across 5+ years of support?
Cross-functional requirement translation: who converts CRA language into engineering specs, test cases, documentation requirements?
Evidence aggregation: who integrates outputs from multiple functions into examination-ready packages?
Conformity declaration authority: who signs, and do they have visibility into actual compliance status?
The Three-Level Accountability Solution
Executive Sponsor: Named executive accountable for compliance outcomes—resolves resource conflicts, ensures priority, provides board visibility
CRA Program Owner: Operational coordination, roadmap management, cross-functional alignment, consolidated status reporting
Product Compliance Owners: Named individual accountable for each product's conformity, documentation completeness, evidence maintenance
The Steering Committee Model
Cross-functional decision-making body, not a status meeting
Resolves conflicts individual functions cannot resolve alone
Weekly during implementation, bi-weekly during steady state
Chaired by Program Owner, executive sponsor attends monthly
The RACI Framework
Product inventory: Product Management (R), Program Owner (A)
Risk assessment: IT Security (R), Product Compliance Owner (A)
SBOM generation: Engineering (R), Product Compliance Owner (A)
Technical documentation: Engineering/Tech Writing (R), Product Compliance Owner (A)
Conformity testing: Quality Assurance (R), Product Compliance Owner (A)
EU Declaration of Conformity: Legal (R), Executive Sponsor (A)
Five Governance Pitfalls to Avoid
Assigning ownership without authority
Over-centralizing execution (creates bottlenecks)
Treating CISO as default owner (CRA is product safety, not just security)
Failing to define product-level owners
Governance without executive commitment
Your Fourteen-Day Action Plan:
Days 1-3: Confirm executive sponsorship with explicit CEO/executive team discussion
Days 4-6: Identify/appoint CRA Program Owner with defined authority
Days 7-9: Form Steering Committee, define membership and meeting cadence
Days 10-12: Assign Product Compliance Owners for every in-scope product
Days 13-14: Develop RACI matrix for key CRA activities
Deliverables:
Documented executive sponsorship
Appointed Program Owner with defined authority
Formed Steering Committee with scheduled meetings
Assigned Product Compliance Owners for all in-scope products
RACI matrix defining cross-functional accountability
Ready to establish CRA governance?
The First Witness Stress Test includes governance assessment—identifying accountability gaps, mapping current ownership patterns, and recommending structures that convert functional activity into compliance outcomes. Stop assuming someone owns it. Start documenting who does.
CRA governance, CRA accountability, compliance ownership, CRA program owner, executive sponsorship compliance, RACI matrix CRA, CRA steering committee, product compliance owner, regulatory accountability, cross-functional compliance, CRA organizational structure, compliance governance framework
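The RACI rows can be checked mechanically for the failure this episode describes, where ownership is assumed rather than assigned. The minimal sketch below encodes those rows and flags three problems: an activity without exactly one Accountable role, an activity without a Responsible role, and any unknown code. Storing one code per role is a simplification the sketch makes. A role that is both accountable and responsible would need a richer encoding.

```python
# RACI rows as listed in this episode: activity -> {role: "R" | "A" | "C" | "I"}
RACI = {
    "Product inventory": {"Product Management": "R", "Program Owner": "A"},
    "Risk assessment": {"IT Security": "R", "Product Compliance Owner": "A"},
    "SBOM generation": {"Engineering": "R", "Product Compliance Owner": "A"},
    "Technical documentation": {"Engineering/Tech Writing": "R", "Product Compliance Owner": "A"},
    "Conformity testing": {"Quality Assurance": "R", "Product Compliance Owner": "A"},
    "EU Declaration of Conformity": {"Legal": "R", "Executive Sponsor": "A"},
}


def raci_problems(matrix):
    """Flag rows that reproduce 'when everyone owns it, no one owns it'."""
    problems = []
    for activity, roles in matrix.items():
        accountable = [role for role, code in roles.items() if code == "A"]
        responsible = [role for role, code in roles.items() if code == "R"]
        if len(accountable) != 1:
            problems.append(f"{activity}: {len(accountable)} accountable roles (need exactly 1)")
        if not responsible:
            problems.append(f"{activity}: nobody responsible for doing the work")
        invalid = sorted(set(roles.values()) - set("RACI"))
        if invalid:
            problems.append(f"{activity}: unknown codes {invalid}")
    return problems


print(raci_problems(RACI))  # []
```

A row such as `{"IT Security": "A", "Legal": "A"}` for a hypothetical "Vulnerability reporting" activity would be flagged twice. It has two accountable roles and nobody responsible.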

In January 2025, a German market surveillance authority examined twelve IoT manufacturers under existing CE marking requirements. Four couldn't produce documentation within the required timeframe. Three produced documentation that failed to demonstrate conformity. Two had documentation so disorganized examiners couldn't determine what had been tested. Only three manufacturers—twenty-five percent—provided documentation that satisfied examination. And this was before CRA requirements took effect.
Market surveillance authorities won't inspect your codebase. They won't interview your developers. They won't observe your security practices. They will examine documentation—and documentation alone.
In This Episode:
What Market Surveillance Actually Examines
Article 31: Authority to require documentation demonstrating conformity
Article 54: Ten-year minimum retention requirement
Why engineering documentation doesn't satisfy regulatory requirements
The Four CRA Documentation Annexes Decoded
Annex II: User information requirements (manufacturer ID, security risks, update sources, vulnerability reporting contact)
Annex V: EU Declaration of Conformity (the legal attestation creating personal liability)
Annex VII: Technical documentation (risk assessment, design specification, test results, production process, vulnerability handling)
Annex VIII: Conformity assessment procedures (documented internal assessment for Default category)
The Five Documentation Gaps That Fail Examination
Risk assessment without design traceability
Evidence chains without version control
Production process without conformity maintenance
Vulnerability handling without product-specific records
Support periods without formal definition or notification mechanism
Documentation as a System, Not a Collection
Document identifiers and explicit cross-references
Traceability matrices linking requirements → risks → design → tests → evidence
Integration with engineering workflows for automatic evidence generation
Distinct documentation ownership separate from engineering ownership
Retention infrastructure designed for ten-year horizons
The Six Required Documentation Types
Product Risk Assessment (with treatment decisions referencing design)
Design Specification (with requirement traceability matrices)
Test Plan and Results (with requirement coverage matrix)
Production Process Description (with continuous conformity evidence)
Vulnerability Handling Record (with timeline documentation)
EU Declaration of Conformity (with authorized signatory)
Your Fourteen-Day Action Plan:
Days 1-3: Documentation inventory for priority product
Days 4-6: Gap analysis against CRA requirements using six document types
Days 7-9: Traceability assessment—trace one requirement through full evidence chain
Days 10-12: Workflow integration analysis—identify automation opportunities
Days 13-14: Documentation roadmap draft with prioritized improvements
Deliverables:
Documentation inventory
Gap analysis against CRA requirements
Traceability assessment identifying where evidence chains break
Prioritized documentation roadmap
Ready to assess your documentation gaps?
The First Witness Stress Test includes comprehensive documentation assessment—revealing where your evidence chains break, where traceability fails, and what examination would expose. The organizations that discover gaps internally can remediate. The organizations that discover gaps during examination cannot.
MAKE AN APPOINTMENT WITH ME TO PREPARE YOUR DOCUMENTATION APPROACH: https://calendly.com/verbalalchemist/30min
CRA documentation requirements, CRA technical documentation, Annex VII documentation, EU Declaration of Conformity, market surveillance examination, compliance evidence, regulatory documentation, CRA audit preparation, ten-year retention, risk assessment traceability, conformity assessment documentation, CRA Annex II user information
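A traceability matrix that links requirements → risks → design → tests → evidence is a graph walk. The Days 7-9 exercise amounts to finding where that walk stops. Below is a minimal sketch that assumes each document record lists the identifiers it traces to at the next stage. The ID scheme and the example records are hypothetical.

```python
STAGES = ["requirement", "risk", "design", "test", "evidence"]


def find_breaks(requirement_id, links):
    """Walk requirement -> risk -> design -> test -> evidence.

    `links` maps a document id to the ids it traces to at the next stage.
    Returns (stage, document id) pairs where the chain stops before reaching evidence.
    """
    breaks = []
    frontier = [requirement_id]
    for stage in STAGES[:-1]:                 # the evidence stage has no successor
        next_frontier = []
        for doc_id in frontier:
            targets = links.get(doc_id, [])
            if not targets:
                breaks.append((stage, doc_id))
            next_frontier.extend(targets)
        frontier = list(dict.fromkeys(next_frontier))  # de-duplicate, keep order
    return breaks


# Hypothetical document identifiers for one requirement on one product.
links = {
    "REQ-AI-I.2(d)": ["RISK-07"],
    "RISK-07": ["DES-12", "DES-13"],
    "DES-12": ["TEST-31"],
    "DES-13": [],                 # design decision that was never tested
    "TEST-31": ["EVID-88"],
}
print(find_breaks("REQ-AI-I.2(d)", links))  # [('design', 'DES-13')]
```

An empty result means every branch of that requirement reaches evidence. Each returned pair marks a point where an examiner would find the evidence chain broken.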

Your engineering team has probably told you they're "mostly compliant" with CRA technical requirements. They're not lying—they just don't know what compliance actually means. The CRA's Annex I contains twenty-one essential cybersecurity requirements. When I assess mid-size organizations against these requirements, typical coverage is eight to eleven. Not because engineering isn't competent. Because the requirements demand capabilities most organizations have never built.
In This Episode:
The Twenty-One Essential Requirements Decoded
Thirteen product security requirements: security-by-design, data protection, access control, operational security, and update capability
Eight vulnerability handling requirements: the infrastructure that enables September 2026 compliance
Why "appropriate level of cybersecurity based on risks" means documented risk assessments with traceable design decisions
The SBOM Reality Check
Your package manager export captures 2-3 of 7 required data elements
BSI TR-03183-2 mandatory elements: component name, version, supplier identification, unique identifier (Package URL/CPE), cryptographic hash, license information, dependency relationships
Why partial SBOM coverage equals non-compliance
DevSecOps as Compliance Enabler
Organizations with mature DevSecOps address 12-17 of 21 requirements through existing pipeline integration
The three persistent gaps: SBOM completeness, documentation formality, vulnerability handling process maturity
You don't need new tools—you need to configure existing tools for CRA evidence generation
The Five-Phase Implementation Path
Phase 1: Evidence inventory (2-4 weeks)
Phase 2: SBOM infrastructure buildout (4-8 months) — THE CRITICAL PATH
Phase 3: Documentation formalization (3-6 months, parallel)
Phase 4: PSIRT establishment (2-4 months)
Phase 5: Conformity assessment preparation
Executive Liability and Technical Requirements
Conformity declarations signed without verification create personal exposure
Discovery scenarios: incomplete SBOM → missed vulnerability → customer compromise → presumption of defectiveness
Engineering builds infrastructure; executives verify it meets requirements
Your Fourteen-Day Action Plan:
Days 1-3: Evidence inventory initiation—list all security tools and processes
Days 4-7: CRA mapping exercise—requirements matrix against evidence sources
Days 8-10: SBOM capability assessment—test seven-element generation on one product
Days 11-12: Vulnerability response timeline analysis against 24/72-hour/14-day requirements
Days 13-14: Gap prioritization and preliminary roadmap
Deliverables:
Evidence inventory mapping current capabilities to CRA requirements
SBOM gap assessment identifying missing elements
Vulnerability response timeline analysis
Prioritized gap list with preliminary roadmap
Ready to assess your technical CRA gaps?
The First Witness Stress Test maps your existing DevSecOps capabilities against all twenty-one Annex I requirements—identifying where you have evidence, where you have gaps, and what closing those gaps actually requires. Stop guessing at coverage. Start measuring it.
CRA Annex I requirements, SBOM compliance, Software Bill of Materials, BSI TR-03183-2, DevSecOps CRA compliance, vulnerability handling requirements, PSIRT product security, CRA conformity assessment, security by design, twenty-one essential requirements, CRA evidence generation, cryptographic hash SBOM
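The Days 11-12 timeline analysis compares how long a past incident or tabletop exercise actually took against the 24-hour, 72-hour, and 14-day marks. Below is a minimal sketch based on one reading of the CRA clocks for an actively exploited vulnerability:

- an early warning within 24 hours of becoming aware;
- a notification within 72 hours of becoming aware;
- a final report within 14 days of a corrective or mitigating measure becoming available.

Check the exact triggers against Article 14 before relying on this. The incident timestamps are hypothetical.

```python
from datetime import datetime, timedelta, timezone


def reporting_deadlines(aware_at, measure_available_at=None):
    """Deadlines under this sketch's reading of the 24h / 72h / 14-day clocks."""
    deadlines = {
        "early_warning": aware_at + timedelta(hours=24),
        "notification": aware_at + timedelta(hours=72),
    }
    if measure_available_at is not None:
        deadlines["final_report"] = measure_available_at + timedelta(days=14)
    return deadlines


def late_or_overdue(deadlines, submitted, now):
    """Reports sent after their deadline, or not yet sent with the deadline already past."""
    out = []
    for name, due in deadlines.items():
        sent = submitted.get(name)
        if (sent is not None and sent > due) or (sent is None and now > due):
            out.append(name)
    return sorted(out)


# Hypothetical tabletop: identifying affected products took 40 hours.
aware = datetime(2026, 9, 14, 9, 0, tzinfo=timezone.utc)
fix_ready = datetime(2026, 9, 20, 17, 0, tzinfo=timezone.utc)
due = reporting_deadlines(aware, fix_ready)
actual = {
    "early_warning": aware + timedelta(hours=40),
    "notification": aware + timedelta(hours=60),
}
now = datetime(2026, 10, 10, tzinfo=timezone.utc)
print(late_or_overdue(due, actual, now))  # ['early_warning', 'final_report']
```

Timezone-aware timestamps avoid off-by-hours errors when the PSIRT and the reporting team sit in different regions.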

A medical technology company's compliance team was confident they had three products requiring CRA attention. After completing the inventory exercise, we identified twenty-three. Twenty had no documented compliance owner. Twelve had never undergone security assessment. Four required third-party conformity assessment from notified bodies already signaling capacity constraints. Their eighteen-month timeline became a resource crisis in a single meeting.
Most organizations underestimate CRA product scope by sixty to seventy percent on initial assessment.
In This Episode:
What "Products with Digital Elements" Actually Means
Software products: applications, SaaS platforms, mobile apps, SDKs
Hardware with embedded software or firmware
Remote data processing solutions—the cloud backends your products depend on are part of the product
The Three Gap Patterns That Destroy Compliance Timelines
Legacy product gap: systems in "maintenance mode" that still generate revenue still have CRA obligations
Component product gap: APIs, SDKs, and libraries distributed through package managers require independent classification
Cloud infrastructure gap: you cannot outsource compliance responsibility to your cloud provider
Why Exemptions Are Narrower Than You Think
MDR-certified medical devices may be exempt—but patient data platforms receiving their data are not
Non-commercial open-source exemption doesn't cover commercial products using open-source dependencies
Exemption assumptions require documented regulatory basis, not organizational convenience
The Four-Tier Classification System
Default category (~90% of products): internal self-assessment with proper documentation
Important Class I: identity management, VPNs, SIEM systems, operating systems—harmonized standards or third-party assessment
Important Class II: hypervisors and container runtimes, firewalls, intrusion detection and prevention systems, tamper-resistant microprocessors—mandatory notified body involvement
Critical: hardware devices with security boxes, smart meter gateways—highest scrutiny with cybersecurity certification
Why Classification Determines Everything
Conformity assessment pathway drives timeline and budget
Notified body capacity is finite—organizations engaging early secure assessment slots
EU 2025/2392 Implementing Regulation clarifies component vs. integrated product classification
Your Fourteen-Day Action Plan:
Days 1-3: Revenue-based product identification with Finance
Days 4-6: Technical architecture expansion with Engineering
Days 7-9: Customer relationship validation with Customer Success
Days 10-12: Exemption analysis with documented regulatory basis
Days 13-14: Preliminary classification against Annex III and IV criteria
Deliverables:
Comprehensive product inventory
Exemption register with documented rationale
Preliminary classification matrix
Ready to discover your actual CRA scope?
The First Witness Stress Test includes comprehensive scope determination and classification analysis—revealing the products hiding in plain sight and the conformity assessment pathway each requires. Stop assuming. Start inventorying.
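Classification sets the conformity assessment pathway for each product, and every exemption needs a documented basis. Below is a minimal sketch of that triage over an inventory, using this episode's tier descriptions. Several parts are simplifications for illustration:

- the `Product` fields and tier keys;
- the example products;
- the rule that Class I needs external assessment unless harmonised standards are applied in full.

```python
from dataclasses import dataclass
from typing import Optional

# Tier -> pathway, paraphrasing this episode's descriptions.
PATHWAY = {
    "default": "internal self-assessment with documentation",
    "class_i": "harmonised standards applied in full, otherwise third-party assessment",
    "class_ii": "third-party assessment with notified body involvement",
    "critical": "European cybersecurity certification",
}


@dataclass
class Product:
    name: str
    tier: str                                 # one of the PATHWAY keys
    exempt: bool = False
    exemption_basis: Optional[str] = None     # documented regulatory rationale
    full_harmonised_standards: bool = False   # only relevant for Class I


def review(products):
    """Return (pathway per product, products needing external assessment, undocumented exemptions)."""
    plan, external, undocumented = {}, [], []
    for p in products:
        if p.exempt:
            if not p.exemption_basis:
                undocumented.append(p.name)
            plan[p.name] = "exempt: " + (p.exemption_basis or "NO DOCUMENTED BASIS")
            continue
        if p.tier not in PATHWAY:
            raise ValueError(f"{p.name}: unknown tier {p.tier!r}")
        plan[p.name] = PATHWAY[p.tier]
        if p.tier in ("class_ii", "critical") or (
            p.tier == "class_i" and not p.full_harmonised_standards
        ):
            external.append(p.name)
    return plan, external, undocumented


# Hypothetical inventory.
inventory = [
    Product("Infusion management system", "default", exempt=True,
            exemption_basis="MDR (EU) 2017/745 medical device"),
    Product("Patient data cloud platform", "default"),
    Product("Remote-access VPN client", "class_i"),
    Product("Edge firewall appliance", "class_ii"),
    Product("Legacy gateway", "default", exempt=True),
]
plan, external, undocumented = review(inventory)
print(external)      # ['Remote-access VPN client', 'Edge firewall appliance']
print(undocumented)  # ['Legacy gateway']
```

The `external` list is the one to take to notified bodies early, given the capacity constraints described above.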

While your competitors build compliance roadmaps around December 2027, a hidden deadline fifteen months earlier will determine who maintains European market access—and who loses it. September 11, 2026 activates mandatory twenty-four-hour vulnerability reporting to ENISA. Most mid-size organizations cannot meet that timeline because they lack the Software Bill of Materials infrastructure required to identify affected products. That infrastructure takes twelve to eighteen months to build. Do the math.
In This Episode:
The September 2026 Compliance Cliff
Why vulnerability reporting obligations activate fifteen months before full CRA compliance
Twenty-four-hour ENISA notification requirements for actively exploited vulnerabilities
The Log4Shell lesson: organizations with SBOM infrastructure responded in hours; those without took months
The Four Gaps Destroying Compliance Timelines
Product inventory failures: most organizations cannot answer "how many products with digital elements do you sell in EU markets?"
Classification confusion across Default, Important Class I, Important Class II, and Critical tiers
SBOM systems capturing two of seven required data elements
Documentation infrastructure that cannot survive regulatory examination
Personal Liability Exposure
EU Product Liability Directive 2024/2853: presumption of defectiveness for non-CRA-compliant products
Discovery scenarios: every security investment decision becomes evidence in litigation
Healthcare MDR intersection: connected ecosystems surrounding exempt medical devices may still be in scope
Finance DORA overlap: dual compliance requirements most organizations haven't integrated
The Six-Element Governance Framework
Product inventory and classification processes
Documented ownership from design through end-of-life
Automated SBOM generation as a build gate
CRA-compliant documentation systems
Twenty-four-hour vulnerability management workflow
Cross-departmental steering committee with executive sponsorship
Your Fourteen-Day Action Plan:
Days 1-3: Conduct complete product inventory across all EU market offerings
Days 4-7: Preliminary classification against four-tier CRA framework
Days 8-10: Map current ownership and identify accountability gaps
Days 11-14: Assess SBOM generation capability against seven required data elements
The Stakes:
€15 million or 2.5% of global annual turnover for non-compliance. No CE marking means no European market. The organizations that dominate EU markets in 2028 are the ones that started preparing in 2025.
Ready to assess your CRA exposure?
The First Witness Stress Test delivers a comprehensive gap analysis of your current readiness against September 2026 vulnerability reporting requirements and December 2027 full compliance obligations. Stop guessing. Start preparing.
SCHEDULE AN APPOINTMENT: https://calendly.com/verbalalchemist/discovery-call
EU Cyber Resilience Act, CRA compliance, September 2026 deadline, SBOM Software Bill of Materials, CE marking requirements, vulnerability reporting, ENISA notification, product liability directive, digital product compliance, European market access, cybersecurity regulation, mid-size company compliance
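"Automated SBOM generation as a build gate" can start as a CI step that fails when any component lacks one of the seven data elements: name, version, supplier, unique identifier, cryptographic hash, license, and dependency relationships. Below is a minimal sketch that assumes a CycloneDX-style JSON SBOM. It reads `components`, `bom-ref`, `supplier`, `purl`/`cpe`, `hashes`, `licenses`, and the top-level `dependencies`. How those fields map to the BSI TR-03183-2 elements, and the rule that any SHA-family hash counts, are this sketch's assumptions. Check the TR for the exact requirements.

```python
import json

# One check per element that lives on the component itself (assumed field mapping).
CHECKS = {
    "name": lambda c: bool(c.get("name")),
    "version": lambda c: bool(c.get("version")),
    "supplier": lambda c: bool((c.get("supplier") or {}).get("name")),
    "unique identifier": lambda c: bool(c.get("purl") or c.get("cpe")),
    "cryptographic hash": lambda c: any(
        h.get("alg", "").upper().startswith("SHA") for h in c.get("hashes", [])
    ),
    "license": lambda c: bool(c.get("licenses")),
}


def sbom_gaps(bom):
    """Map component name -> list of missing elements (components with no gaps are omitted)."""
    dep_refs = {d.get("ref") for d in bom.get("dependencies", [])}
    gaps = {}
    for comp in bom.get("components", []):
        missing = [element for element, check in CHECKS.items() if not check(comp)]
        # dependency relationships: the component must appear as a "ref" in dependencies
        if comp.get("bom-ref") not in dep_refs:
            missing.append("dependency relationships")
        if missing:
            gaps[comp.get("name", "<unnamed>")] = missing
    return gaps


def gate(path):
    """Return a CI exit code: 0 if every component carries all seven elements, else 1."""
    with open(path) as fh:
        gaps = sbom_gaps(json.load(fh))
    for name, missing in gaps.items():
        print(f"{name}: missing {', '.join(missing)}")
    return 1 if gaps else 0
```

In a pipeline step, `sys.exit(gate("sbom.json"))` would fail the build on any gap. The file name is hypothetical. A typical package-manager export fails on supplier, hash, license, and dependency entries, which matches the "two of seven" pattern described in this episode.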

Eighty-three percent of organizations are using AI. Only twenty-five percent have proper governance. That's not a gap—it's a liability waiting to land on someone's desk.This week's AI Governance News Roundup delivers five critical developments every executive needs before their next leadership meeting. From the world's first government framework for AI agents to a federal power play that could reshape AI regulation across the United States, the governance landscape is shifting faster than most organizations can adapt.Story 1: Singapore Becomes First Government to Publish AI Agent Framework[CLIP] "AI agents are different from the AI tools you've governed before. They have autonomy. They access sensitive data. They connect to external systems. They take actions that have immediate real-world consequences."Singapore's Infocomm Media Development Authority, through Minister Josephine Teo, launched the world's first government framework specifically targeting AI agent deployment. This isn't about chatbots or traditional AI—it addresses systems that make decisions and take actions independently, with minimal human oversight.Why This Matters:→ Agents have already deleted live databases without being instructed to do so → They've exposed sensitive customer information → As agents increasingly interact with other agents, a single failure can cascade across systemsThe Framework Addresses Three Critical Areas:→ Accountability — Making it explicitly clear who bears responsibility when an agent fails → Controls — Building mechanisms to stop, check, and limit what agents can access → Human Oversight — Identifying checkpoints that require human approval before agents proceedYour Move This Week: Three questions to ask your team immediately:What AI agents are we currently deploying or piloting, and who is accountable if they fail?What controls exist to stop an agent from taking actions beyond its intended scope?Where are the human approval checkpoints in our agent workflows?Story 2: 
DOJ Creates AI Litigation Task Force to Challenge State Laws[CLIP] "The federal government is asserting dominance, and the patchwork of state regulations you've been tracking may be about to get challenged in court."The Department of Justice has established an Artificial Intelligence Litigation Task Force with an explicit mission: identify and challenge state AI laws that conflict with federal priorities. A January 9th memorandum cited the President's executive order directing a "minimally burdensome national policy framework for AI" to ensure U.S. "dominance across many domains."The Compliance Implications:→ Colorado's AI Act, California's various AI bills, and New York's algorithmic accountability requirements could face federal preemption challenges → Grounds for challenge: state laws unconstitutionally regulate interstate commerce or are preempted by federal regulation → If challenges succeed, compliance work you've already done for state requirements may become unnecessary → If challenges fail—or if administrations change—you'll still need those programsYour Move This Week: Don't abandon your state compliance programs. Task your legal team with scenario analysis: What if federal preemption challenges succeed against specific state laws? 
Build flexibility into your governance program.Story 3: New Survey Reveals the 4-to-1 Governance Gap[CLIP] "Eighty-three percent of organizations use AI but only twenty-five percent have strong governance—the gap is your exposure and your opportunity."A new survey from Compliance Week and konaAI of 193 compliance, ethics, risk, and audit leaders found an alarming disparity between AI adoption and accountability.The Breakdown:→ 90% have deployed generative AI tools like ChatGPT and Claude → 52% are using agentic AI → 51% are using large language models → 42% are using predictive analyticsThe Risk Exposure:→ 66% reported data quality issues → 47% had training problems → 46% faced privacy and security concerns → 42% experienced unmanaged AI use by employees → 54% said a major problem was a lack of AI expertiseCritical Finding: Only 5% of compliance teams have been using AI for more than two years. 27% started in the last six months. This is an industry still figuring it out—which means the standard of care hasn't been established yet. Right now is when you set yourself apart.Your Move This Week: Run an internal audit. Ask: What AI tools are employees using that we haven't formally approved? Create an inventory before your board asks why AI your employees deployed created a liability.Story 4: The Case for a Chief AI Governance Officer[CLIP] "AI introduces risks that don't fit neatly into existing domains. Harmful bias isn't a security problem. Hallucinations aren't a privacy problem. Explainability isn't a compliance problem. 
But they're all AI problems."

Writing for the IAPP, experts from McDonald's and Credo AI make the case that organizations need dedicated AI governance functions: not responsibility distributed across existing risk domains, but central teams with AI risk specialists and a strategic quarterback role.

The Three-Stage Maturity Model:
→ Stage 1: Ad Hoc Governance - Existing security, privacy, and legal teams augment their responsibilities
→ Stage 2: Collaborative Governance - AI working groups and better coordination
→ Stage 3: Dedicated AI Governance - A central team mandated to design and enforce responsible AI enterprise-wide

The Trajectory: Just as data protection officers became standard after GDPR, expect Chief AI Governance Officers (CAIGOs) to become standard as AI regulation matures.

Your Move This Week: Assess your current stage honestly. If you're in Stage 1, start planning the transition to Stage 2. The goal isn't to flip a switch, but to begin the progression before regulation or an incident forces you into it.

Story 5: Zero-Trust Coming for Data Governance

[CLIP] "You can no longer assume data was generated by humans. You can no longer implicitly trust data quality."

Gartner predicts that 50% of organizations will adopt zero-trust models for data governance by 2028. The driver: AI-generated data, often called "AI slop," is contaminating training data at scale.

The Problem:
→ Large language models trained on web-scraped data are increasingly training on outputs from other AI models
→ This creates a risk of "model collapse" under the accumulated weight of hallucinations and inaccurate realities
→ As AI-generated content becomes indistinguishable from human-created content, authentication and verification become essential

Your Move This Week: Ask your data team: Can we identify which data in our systems was AI-generated? Can we trace the provenance of our training data?
If not, you're building AI systems on foundations you can't verify.

Your Action List for This Week:
→ Inventory your AI agents and their accountability structures
→ Run a shadow AI audit to find unapproved tools
→ Assess your governance maturity stage
→ Check whether you can identify AI-generated data in your systems

The ...
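
The shadow AI audit on this week's action list can start as a simple set difference: AI tools observed in use, minus the tools formally approved. Below is a minimal Python sketch, assuming you already have a usage export (for example from SSO, proxy, or expense logs) reduced to tool-name and department pairs. The `ToolUsage` record and `find_shadow_ai` function are hypothetical names for illustration, not part of any product.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class ToolUsage:
    """One observed use of an AI tool (hypothetical record shape)."""
    tool: str
    department: str


def normalize(name: str) -> str:
    """Compare tool names case-insensitively, ignoring stray whitespace."""
    return name.strip().lower()


def find_shadow_ai(observed: list[ToolUsage], approved: set[str]) -> dict[str, set[str]]:
    """Map each unapproved tool to the set of departments seen using it."""
    approved_norm = {normalize(name) for name in approved}
    shadow: dict[str, set[str]] = {}
    for use in observed:
        tool = normalize(use.tool)
        if tool not in approved_norm:
            shadow.setdefault(tool, set()).add(use.department)
    return shadow


if __name__ == "__main__":
    usage_log = [
        ToolUsage("ChatGPT", "Sales"),
        ToolUsage("Copilot", "Engineering"),
        ToolUsage("chatgpt ", "Marketing"),
        ToolUsage("Otter", "HR"),
    ]
    # Only Copilot is on the (hypothetical) approved list.
    for tool, depts in sorted(find_shadow_ai(usage_log, {"Copilot"}).items()):
        print(tool, sorted(depts))
```

The result is a starting inventory rather than a verdict: each entry is a tool to approve, replace, or block, along with the departments you need to talk to first.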

Eighty percent of organizations will formalize AI policies addressing ethical, brand, and PII risks by 2026. That's the prediction from Gartner.

But here's the question nobody's asking: Who enforces those policies? Who monitors compliance? Who measures whether AI governance actually works?

That's Compliance: the department that transforms boardroom promises into operational reality.

And here's what makes Compliance unique: they don't just enforce rules. They measure whether governance creates value, or just creates documentation nobody reads.

**The Measurement Crisis:**

Your CEO asks: "Are we compliant?"
You answer: "We have policies."
That's not the same thing.

Your Board asks: "Is AI governance delivering ROI?"
You show them: "We conducted 47 bias audits this quarter."
They say: "That's activity. Not impact."

This is the Measurement Crisis: Compliance has frameworks, policies, controls, and audit trails. What Compliance doesn't have is measurable proof that governance creates value.

**The Activity vs. Outcome Gap:**

Most Compliance teams track:
- Number of policies created
- Number of training sessions delivered
- Number of audits conducted
- Percentage of employees who completed AI ethics training

Those are activity metrics. They measure effort, not impact.

What Compliance should track:
- Time from AI concept to compliant deployment (Governance Velocity)
- Percentage of AI projects rejected late-stage vs. early-stage
- Cost of late-stage compliance remediation vs. early integration
- Reduction in regulatory penalties year-over-year
- Increase in AI deployment success rate

Those are outcome metrics. They measure value.

**Five Compliance Failures:**

**Failure #1 - The ISO/IEC 42001 Implementation Gap:**

ISO/IEC 42001 is the world's first certifiable AI management system standard.
Organizations that achieve certification report 40% faster AI compliance cycles.

But most organizations are implementing Annex A controls piecemeal, adopting bias mitigation and transparency requirements without building the management system infrastructure that makes those controls sustainable.

You pass the initial audit, then controls decay because there's no governance structure holding them in place.

**Failure #2 - The NIST Framework Misinterpretation:**

Most organizations treat the NIST AI RMF as a one-time checklist. They check "Govern" because they wrote a policy. They check "Map" because they created a spreadsheet 18 months ago that's never been updated.

The NIST AI RMF is a continuous cycle, not a one-time project:
- Govern: Continuously cultivating organizational culture and capability
- Map: Continuously discovering and classifying AI systems, including shadow AI
- Measure: Systematically evaluating against trustworthiness characteristics
- Manage: Continuously allocating resources based on measured risk

**Failure #3 - The Late-Stage Rejection Crisis:**

Here's a typical timeline when Compliance isn't involved early:
- Months 1-3: Concept development
- Months 4-8: Development and testing
- Month 9: Someone says "maybe we should get Compliance to review"
- Month 10: Legal discovers undocumented training data. HR discovers no bias audit. Compliance discovers no human oversight protocol.
- Month 11: Project rejected or sent back for major rework

Total sunk cost? $500K to $2M per project.

Organizations with mature AI governance, which involves Compliance from inception, report a 60% reduction in late-stage project rejections.

**Failure #4 - The KPI Inadequacy:**

Your current KPIs: "95% completed AI ethics training. 47 bias audits conducted.
12 policies published."

What those KPIs don't tell you:
- Did training change anyone's behavior?
- Did audits find problems that were actually fixed?
- Is anyone actually following the published policies?

Effective KPIs:
- Governance Velocity: Average days from concept to compliant deployment (target: <90 days)
- Early-Stage Gate Success: % of projects passing initial review without major rework (target: >85%)
- Shadow AI Discovery Rate: % of AI tools discovered through audits vs. voluntarily disclosed (lower is better)
- Remediation Cycle Time: Average days from gap identification to closure (target: <30 days)
- Governance ROI: Cost savings from early integration vs. late remediation (target: 5-10x)

**Failure #5 - The Audit Trail Inadequacy:**

Most Compliance teams maintain:
- Policy documents in SharePoint
- Training records in the LMS
- Audit reports in spreadsheets
- Risk assessments in Word documents
- Meeting minutes scattered across email

When an auditor asks how you ensure continuous AI bias monitoring, you send seven documents from four systems with no clear narrative.

That's not an audit trail. That's an audit nightmare.

**The Compliance Operations Framework:**

**Responsibility #1 - Governance Orchestration:**

Compliance is the conductor, not the orchestra.
Your job is ensuring all departments play from the same score:
- Integrated Control Framework: Map ISO 42001 controls, NIST AI RMF functions, and EU AI Act requirements into a single unified structure
- Cross-Functional Governance Committee backbone: Facilitate meetings, track decisions, ensure follow-through
- Master Governance Calendar: All deadlines, responsibilities, and dependencies in one place

**Responsibility #2 - Continuous Monitoring and Measurement:**

An AI Governance Dashboard showing, in real time:
- AI systems by risk classification
- Compliance status by system
- Governance Velocity metrics
- Audit findings and remediation status
- Training competency verification
- Shadow AI discovery rate

**Responsibility #3 - Audit and Verification:**

Risk-Based Audit Protocol: audit for effectiveness, not checkbox compliance.
- Human Oversight Verification: Don't just verify "reviewer assigned." Sample actual decisions. Interview reviewers. Calculate override rates. Test whether reviewers can explain their approvals.
- Bias Audit Quality Assessment: Review methodology. Verify auditor qualifications. Test whether discovered biases were remediated.
- Data Lineage Validation: Sample training datasets.
Verify documented sources match reality.

**Responsibility #4 - ROI Demonstration:**

Track and report:
- Cost Avoidance: "Early compliance integration prevented $3.2M in late-stage rework"
- Velocity Improvement: "Governance Velocity improved from 127 days to 82 days"
- Risk Reduction: "Zero AI-related regulatory penalties"
- Competitive Advantage: "ISO 42001 certification achieved 6 months faster than industry average"

**The Measurable Governance Operating System:**

**Stage 1 - Integrated Framework Implementation:**

Stop implementing ISO 42001, the NIST AI RMF, and the EU AI Act as separate initiatives.

Framework Integration:
- ISO 42001 Clause 4 (Context) → NIST Govern → EU AI Act Article 9 (Risk Management)
- ISO 42001 Clause 8 (Operation) → NIST Map + Measure → EU AI Act Technical Documentation
- ISO 42001 Clause 9 (Performance Evaluation) → NIST Measure → EU AI Act Monitoring

Build ONE governance infrastructure satisfying all frameworks. Not three separate programs.

**Stage 2 - Governance Velocity Measurement:**

Stage Gate Timing:
1. Concept Gate: Initial proposal → Compliance review (Target: 3 days)
2. Design Gate: Technical architecture → Risk classification (Target: 10 days)
3. Development Gate: Build/test → Bias audit and data verification (Target: 30 days)
4. Deployment Gate: Final verification → Legal sign-off (Target: 7 days)

Total Standard Governance Velocity: 50 days for standard-risk projec...
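
The four stage-gate targets add up to the 50-day standard figure (3 + 10 + 30 + 7). Below is a minimal Python sketch of how a Compliance team might compute that total, the measured Governance Velocity, and per-gate overruns. It assumes each project record carries a concept date and a compliant-deployment date, plus per-gate durations in days; the function names and data shapes are hypothetical, and the targets are simply the ones listed in this episode.

```python
from __future__ import annotations

from datetime import date
from statistics import mean

# Stage-gate targets in days, as listed in this episode; adjust to your own program.
GATE_TARGETS_DAYS = {"concept": 3, "design": 10, "development": 30, "deployment": 7}


def total_target_days(targets: dict[str, int] | None = None) -> int:
    """End-to-end velocity target: the sum of the per-gate targets."""
    if targets is None:
        targets = GATE_TARGETS_DAYS
    return sum(targets.values())


def governance_velocity(projects: list[tuple[date, date]]) -> float:
    """Average calendar days from concept submission to compliant deployment."""
    return float(mean((deployed - concept).days for concept, deployed in projects))


def gates_over_target(actual_days: dict[str, int], targets: dict[str, int] | None = None) -> list[str]:
    """Gates whose actual duration exceeded their target (gates without a target are skipped)."""
    if targets is None:
        targets = GATE_TARGETS_DAYS
    return [gate for gate, days in actual_days.items() if gate in targets and days > targets[gate]]
```

Tracking per-gate durations, not just the end-to-end total, shows whether a slow project stalled at design review or at the bias audit, which is what makes the "Governance Velocity improved from 127 days to 82 days" kind of claim explainable to a Board.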

Over 700 court cases worldwide now involve AI hallucinations. Sanctions range from warnings to five-figure monetary penalties.

The EU AI Act's obligations for high-risk AI systems start applying on August 2nd, 2026: 190 days from today. Penalties reach €35 million or 7% of worldwide annual turnover, whichever is higher.

And here's the impossible situation Legal finds itself in: They're expected to defend AI decisions they weren't consulted about, using systems they didn't approve, with training data they can't audit, against regulations that didn't exist when the AI was deployed.

"We trusted the vendor" isn't a defense. It's an admission of negligence. And Legal gets blamed anyway.

**The Regulatory Tsunami:**

**EU AI Act Timeline:**
- August 1, 2024: Entered into force
- February 2, 2025: Prohibited AI practices and AI literacy obligations
- August 2, 2025: Governance provisions and GPAI model obligations
- August 2, 2026: General application, including obligations for the high-risk AI systems listed in Annex III (high-risk AI embedded in regulated products follows on August 2, 2027)

**Penalties:**
- Up to €35 million OR 7% of worldwide annual turnover (whichever is higher) for prohibited AI practices
- €15 million or 3% for other infringements
- €7.5 million or 1% for supplying incorrect information to authorities

The EU AI Act has extraterritorial reach. If you offer AI systems to EU users, regardless of where your company is based, you're covered. Just like GDPR.

**The US State Patchwork:**
- Colorado AI Act: Effective June 30, 2026; risk management policies, impact assessments, transparency
- Illinois HB 3773: Effective January 1, 2026; can't use AI that results in bias "whether intentional or not"
- NYC Local Law 144: Independent bias audits annually, public disclosure required
- California: Four-year data retention for automated decision data

That's state-by-state compliance complexity.
And more states are introducing bills in 2026 with private rights of action, punitive damages, and invalidation of forced arbitration.

**Litigation Explosion:**
- 700+ court cases involving AI hallucinations
- Copyright litigation targeting training data and fair use
- Product liability lawsuits against LLM developers
- Illinois BIPA cases allowing "extremely high damages"
- Emerging "agentic liability," where autonomous AI takes binding legal action

**Five Critical Legal Failures:**

**Failure #1 - The Reactive Posture:**

Typical timeline: Business deploys AI → IT implements → Months pass → Problem surfaces → NOW Legal gets involved.

By the time Legal sees the system, decisions are baked in. Training data is historical. Vendors are contracted. Legal is asked: "Can you defend this?"

That's not governance. That's damage control after the damage is done.

**Failure #2 - The Mapping Void:**

EU AI Act compliance starts with a fundamental first step: AI system mapping. Identify every AI system, classify each by risk level, and determine whether you carry provider or deployer obligations.

How many organizations have completed this? Most haven't even started.

Without the map, you can't comply. And Legal can't defend what it can't describe.

**Failure #3 - The Data Lineage Black Box:**

Your AI model was trained on historical data. That historical data reflects historical bias: discrimination that was LEGAL when it happened but creates ILLEGAL outcomes now.

Example: A resume screening AI is trained on 10 years of hiring data from a company that historically hired predominantly male engineers. The AI learns that "good candidate" correlates with male markers. It doesn't need gender data; it uses proxy markers.

When that AI screens out qualified female candidates in 2026, you have discrimination. "Neutral historical data" doesn't matter. The outcome is illegal.

Legal's question: Can you even audit the training data? Many organizations can't. Vendors won't disclose "proprietary" training corpora.
Models trained on internet scrapes include copyrighted and potentially illegal source material.

**Failure #4 - Human Oversight Theater:**

A human "reviewing" 500 AI hiring recommendations per day isn't providing oversight. That's rubber-stamping.

True human oversight requires:
- Understandable explanations (not just "the algorithm recommends")
- Genuine authority to override
- Reasonable caseload
- Clear escalation protocols
- Documentation of override reasoning

Most organizations have none of these. When a plaintiff's attorney shows the reviewer approved 99.7% of AI recommendations, "we had human oversight" won't survive.

**Failure #5 - The Vendor Accountability Gap:**

Standard vendor due diligence (SOC 2 reports, security questionnaires) doesn't address AI-specific risks. You need:
- Training data provenance documentation
- Bias audit methodology and results
- Model update procedures
- Incident response for AI errors
- Liability allocation for discriminatory outcomes

Most vendor contracts have none of this. When Legal asks post-deployment, vendors say: "That's proprietary."

Now you're using AI you can't audit, can't explain, and can't prove doesn't discriminate, but you're 100% liable for its outcomes.

**The Legal Accountability Framework:**

Legal can't prevent AI risk. Legal ensures organizational accountability for AI risk.

**Function #1 - Risk Translation:**

Legal translates complex, evolving regulatory requirements into actionable business controls. The EU AI Act runs to 180 recitals and 113 Articles. State laws create patchwork obligations.

Legal must translate this into: "Here's what we must do. Here's what we should do. Here's what reduces liability."

**Function #2 - Pre-Deployment Compliance Gate:**

Legal must have formal authority to block AI deployments with unacceptable legal risk.

Before ANY AI system touches customer data, employee data, or business-critical decisions:
1. Risk Classification: High-risk under the EU AI Act? Under state laws?
2. Data Lineage Review: Can we document and defend training data?
3. Bias Audit Verification: Independent audit conducted? Results acceptable?
4. Human Oversight Protocol: Genuine review structured and resourced?
5. Vendor Liability Allocation: Contracts assign responsibility for AI errors?
6. Documentation Completeness: Can we survive discovery?

If answers are "no" or "unclear," deployment doesn't proceed.

**Function #3 - Continuous Compliance Monitoring:**
- Quarterly AI Compliance Reviews (not annual, because regulations evolve mid-year)
- Regulatory Horizon Scanning for pending legislation
- Incident Documentation Protocol for every AI error

**Function #4 - Cross-Functional Governance Leadership:**

Legal must have:
- Veto authority over high-risk AI deployments
- Co-approval authority on vendor selection
- Escalation authority to CEO/Board
- Budget authority for compliance infrastructure

**The AI Legal Operations Model:**

**Stage 1 - Regulatory Compliance Infrastructure:**
- AI Regulatory Calendar: Live tracker of EU AI Act dates, state law effective dates, audit requirements
- Jurisdiction Matrix: Map where you have employees, customers, EU data processing, high-risk systems
- Compliance Team Structure: Dedicated Legal AI Specialist, Privacy/Compliance partnership, external counsel on retainer

**Stage 2 - AI-Specific Contract Provisions:**
- Training Data Warranty: Legally obtained, no copyright violation, no discrimination patterns, auditable
- Bias Audit Requirements: Independent annual audit, methodology disclosure, model updates if disparate impact found
- Incident Response: 24-hour notification, 5-day root cause analysis, 10-day corrective action
- Liability Allocation: Clear responsibility for discriminatory outcomes, indemnification, AI-specific insurance
- Discovery Cooperation: Expert testimony, technical documentation, no "...
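
The "whichever is higher" rule in the EU AI Act penalty tiers is simple arithmetic, but it is worth running against your own turnover before a Board conversation. Below is a minimal Python sketch, assuming the three Article 99 tiers listed in this episode. It computes only the statutory ceiling, not the fine a regulator would actually impose (Article 99 also lists factors such as gravity and cooperation that authorities weigh), and the tier keys are hypothetical labels.

```python
from __future__ import annotations

# EU AI Act Article 99 ceilings: (fixed cap in EUR, percent of worldwide annual turnover).
PENALTY_TIERS = {
    "prohibited_practice": (35_000_000, 7),
    "other_infringement": (15_000_000, 3),
    "incorrect_information": (7_500_000, 1),
}


def max_fine_eur(turnover_eur: int, tier: str, sme: bool = False) -> float:
    """Statutory ceiling for one tier.

    Larger undertakings face whichever amount is higher; for SMEs, including
    start-ups, Article 99(6) applies whichever amount is lower.
    """
    fixed_cap, percent = PENALTY_TIERS[tier]
    turnover_based = turnover_eur * percent / 100
    return min(fixed_cap, turnover_based) if sme else max(fixed_cap, turnover_based)
```

For a company with €1 billion in turnover, the prohibited-practice ceiling is €70 million, because 7% of turnover exceeds the €35 million fixed amount; below €500 million in turnover the fixed amount dominates.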

Forty-five percent of workers now use AI regularly. Confidence in using that AI? Down 18% in the last year.

That's not a typo. AI usage jumped 13% while trust collapsed.

Workers are using tools they don't trust, haven't been trained on, and increasingly fear will replace them. Fifty-seven percent of employees hide their AI usage from employers. Half can't tell if their AI-generated work is even accurate.

And here's the nightmare: 56% of the global workforce reports receiving NO recent training. None.

While management deploys AI at breakneck speed and HR scrambles to audit bias, frontline workers are left to figure it out alone, with their jobs on the line.

**The Great Disconnect:**

ManpowerGroup's 2026 Global Talent Barometer, released January 20th, reveals catastrophic results:
- AI usage jumped 13% to 45% of workers
- Confidence in using technology fell sharply by 18%
- For the first time in three years, overall worker confidence declined
- Baby Boomers: 35% decrease in tech confidence
- Gen X workers: 25% drop

More than half the global workforce (56%) reports receiving no recent training. Fifty-seven percent have no access to mentorship opportunities.

You're deploying AI faster than ever while systematically denying workers the support they need to use it.

**The Assumption That's Completely Wrong:**

The belief that workers are resistant to AI? Wrong.

A Weavix survey of 300 frontline manufacturing workers found:
- 74% are comfortable with AI-powered tools
- 87% are comfortable with data collection for safety and efficiency
- 81% report being MORE engaged at work than last year
- 94% are optimistic about workplace safety improvements in 2026

Nearly nine in ten frontline workers are FINE with AI monitoring if it improves safety and efficiency.
The problem isn't worker resistance.

[CLIP] "Workers are comfortable with AI and data collection, but their leaders have hamstrung them with prehistoric communication devices or nothing at all."

67% of manufacturing workers still rely primarily on outdated two-way radios. 64% operate under smartphone restrictions. They're ready for AI. Management is blocking them with 1990s infrastructure.

**The Hidden AI Crisis:**

According to a KPMG and University of Melbourne study, 57% of employees HIDE their AI usage from employers.

They're using AI anyway. They just don't tell you.

And half of those workers can't tell whether the AI-generated content they're creating is even accurate. They're publishing work they don't trust because they need to keep up.

That's the "AI workslop" crisis: poorly created AI content that "can sound authoritative and accurate but lacks the examples and detail that individuals require for behavior change."

This isn't just inefficiency. It's organizational sabotage from the bottom up, created entirely by management's failure to include workers in AI transformation.

**Four Worker-Level Failures:**

**Failure #1 - The Training Void:**
- Over 90% of global enterprises will face critical skills shortages by 2026
- Sustained skills gaps risk $5.5 trillion in losses from global market performance
- Only one-third of employees report receiving ANY AI training in the past year
- The OECD found that most AI training focuses on advanced skills only 1% of jobs require

Result: "AI workslop," such as managers using AI to write performance reviews without considering actual performance. AI-enabled dereliction of duty.

**Failure #2 - The Participation Gap:**

Who's typically on AI Governance Committees? C-Suite, IT leadership, Legal, Compliance, HR directors. Who's NOT?
Frontline workers: the people who actually USE AI daily.

Workers with 20+ years of experience: Only 29% feel their feedback reaches decision-makers.

This creates "Shadow Participation": workers shaping AI adoption through workarounds, hidden usage, and informal experimentation. With 57% of employees hiding their AI use, much of what your organization is learning about AI adoption is invisible to you.

**Failure #3 - The Infrastructure Mismatch:**

81% of frontline workers report being MORE engaged than last year. 94% are optimistic about safety improvements.

What do you give them? Two-way radios from 1985.

You're spending millions on AI platforms while your frontline can't even send a text message with a photo.

**Failure #4 - The Feedback Vacuum:**

When AI makes a mistake that a frontline worker catches, what happens? In most organizations: nothing. The worker fixes it manually, the AI never learns, and the error repeats tomorrow.

You've created AI systems that can't learn from the people using them.

**The Frontline Stakeholder Model:**

**Principle #1 - Workers Are Stakeholders, Not Users:**

Stop calling them "end users." Users consume products. Stakeholders have vested interests in outcomes. Frontline workers' livelihoods depend on AI decisions about productivity, performance, and job security.

Stakeholders have rights:
- Right to understand how AI affects their work
- Right to contribute feedback that shapes AI deployment
- Right to transparent communication about AI-driven changes
- Right to training that enables effective AI participation
- Right to escalate concerns without retaliation

**Principle #2 - Frontline Workers Own Operational AI Intelligence:**

Workers know:
- Which AI recommendations make sense and which are nonsense
- Where AI saves time versus where it creates busywork
- Which automated decisions align with customer needs
- Where AI monitoring feels helpful versus invasive

That's operational AI intelligence.
Your job is to extract it, not ignore it.

**Principle #3 - Participation Must Be Systematic, Not Symbolic:**

One frontline representative on a quarterly committee isn't participation. It's tokenism.

Real participation requires:
- Structured feedback loops with response protocols
- A Frontline AI Champions Network with peer trainers
- Accessible training embedded in workflow
- Authority to override AI decisions with documentation

**The Participatory AI Framework:**

**Stage 1 - Pre-Deployment Frontline Consultation:**

Conduct an Operational Impact Assessment before any AI tool touches frontline work:
- How will this tool change daily workflow?
- What tasks will it eliminate, augment, or complicate?
- What new skills will workers need?
- Where might AI create errors that workers catch?

**Stage 2 - Phased Rollout with Frontline Champions:**

Create a Frontline AI Champions Network:
- Early adopters who demonstrate AI fluency
- Peer trainers for new AI tools
- Escalation points for AI concerns
- Beta testers for new deployments
- Authority to pause rollout if serious issues emerge

**Stage 3 - Embedded Training and Support:**
- Contextual help INSIDE the tool, not separate modules
- Peer learning sessions led by champions
- Safe practice environments without performance impact
- Micro-credentials for demonstrated AI competency

McKinsey's research: "For every two dollars top-performing sites spend on technology, they spend three on processes and five on capability building."

Stop spending 100% on tools and 0% on people.

**Stage 4 - Continuous Feedback and Iteration:**
- Weekly anomaly reporting with 48-hour IT response commitment
- Monthly worker feedback sessions: conversations, not surveys
- Quarterly AI tool performance reviews (AI performance, not worker performance)
- Clear authority to override AI with documentation

**Evidence This Works:**
- McKinsey Global Lighthouse Network: Top sites spend $5 on capability building per $2 on technology
- 74% of frontline workers comfortable with AI when given prop...
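
A "48-hour IT response commitment" only closes the feedback vacuum if someone checks it. Below is a minimal Python sketch, assuming frontline anomaly reports are logged with a submission time and an optional IT response time. `AnomalyReport`, `sla_breaches`, and `response_rate_within_sla` are hypothetical names, and the 48-hour window is the commitment from Stage 4.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import datetime, timedelta

# Stage 4 commitment: IT responds to every frontline anomaly report within 48 hours.
RESPONSE_SLA = timedelta(hours=48)


@dataclass
class AnomalyReport:
    """A frontline worker's report that an AI output looked wrong (hypothetical shape)."""
    report_id: str
    submitted: datetime
    responded: datetime | None = None  # None means IT has not responded yet


def sla_breaches(reports: list[AnomalyReport], now: datetime) -> list[str]:
    """IDs of reports answered late, or still unanswered past the deadline as of `now`."""
    breaches = []
    for report in reports:
        deadline = report.submitted + RESPONSE_SLA
        answered_at = report.responded if report.responded is not None else now
        if answered_at > deadline:
            breaches.append(report.report_id)
    return breaches


def response_rate_within_sla(reports: list[AnomalyReport], now: datetime) -> float:
    """Share of reports that did not breach the SLA (1.0 when there are no reports)."""
    if not reports:
        return 1.0
    return 1 - len(sla_breaches(reports, now)) / len(reports)
```

Sharing the breach list with the Frontline AI Champions Network lets workers see whether their reports are being answered, which is the point of treating them as stakeholders rather than users.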

Sixty percent of American workers believe AI will eliminate more jobs than it creates in 2026. Fifty-one percent fear losing their jobs to automation this year.

And who gets blamed when these fears come true? Not the CEO who bought the AI. Not the IT team that deployed it. Human Resources.

HR is being asked to champion AI transformation while simultaneously protecting employees from that transformation. That's not a job description. That's an impossible mandate.

**The Scale of the Impossible:**
- 60% of workers believe AI eliminates more jobs than it creates (Resume Now, January 2026)
- 51% worried about losing their job to AI this year (Resume Now)
- 37% of companies expect to have replaced jobs with AI by end of 2026 (Resume.org)
- 30% of companies plan to replace HR functions themselves with AI by year-end (HR Digest)
- 74% of employees are now subject to some form of digital surveillance

Think about the HR Digest statistic: HR is being asked to manage workforce AI transformation while its own function is being targeted for replacement. You're supposed to be the change champion for a change that might eliminate you.

**The Bias Nightmare:**

A University of Washington study from late 2024 found that three leading large language models exhibited "significant racial, gender, and intersectional bias" when ranking identical resumes.

The study found that the models never preferred names perceived as Black male over names perceived as white male. Not once. Yet they preferred names perceived as Black female 67% of the time versus only 15% for names perceived as Black male.

[CLIP] "That's a really unique harm against Black men that wasn't necessarily visible from just looking at race or gender in isolation."

Now multiply that by reality: Your AI screening tool has already processed thousands of applications this month. How many qualified candidates did it screen out? You don't know.
Because the vendor told you their algorithm was "bias-free" and you believed them.

**The Legal Nightmare:**

Under Illinois House Bill 3773, which went into effect January 1st, 2026, you can't use AI in ways that result in bias against protected classes, "whether intentional or not."

Notice that phrase: "whether intentional or not."

Your intent doesn't matter. Your vendor's promises don't matter. Only the outcome matters.

[CLIP] "We trusted the vendor isn't a defense. It's an admission that you didn't do due diligence."

Add complexity:
- NYC Local Law 144 requires independent bias audits, not vendor self-audits
- Colorado AI Act requires risk management programs by June 30, 2026
- California requires maintaining automated decision data for four years
- EU AI Act classifies employment-related AI as "High Risk"

How many HR teams have the infrastructure to comply with all of these simultaneously?

**Four Critical Failures:**

**Failure #1 - The Compliance Illusion:**

HR teams believe they're compliant because they read vendor documentation. But vendors are facing lawsuits themselves. The first EEOC settlement involving AI hiring discrimination happened in 2023. HR tech vendors can be held liable under anti-discrimination law as agents of the employers that use their tools, meaning you AND your vendor can both get sued.

**Failure #2 - The Bias Blindness:**

AI doesn't need protected characteristics to discriminate. It uses proxy markers:
- ZIP codes as proxies for race
- Employment gaps as proxies for caregiving (which correlates with gender)
- University names as proxies for socioeconomic status

Remember Amazon's resume-scanning tool from 2014-2018? It systematically downgraded resumes from women because it was trained on historical hiring data. It penalized resumes containing the word "women's," as in "captain of the women's chess club."

That's called proxy discrimination.
And it's happening right now in your hiring tools.

**Failure #3 - The Surveillance State:**

74% of employees are now subject to digital surveillance. Big Tech firms are tracking "everything from keystrokes to office attendance."

Here's what surveillance creates: Employees start "performing busyness rather than genuine productivity." They game the system. Trust collapses. Actual productivity often decreases because workers spend more energy appearing productive than being productive.

[CLIP] "Hypervigilance about continuous surveillance takes away from tasks that may be meaningful or necessary for long-term wellbeing."

**Failure #4 - The False Promise of Reskilling:**

A January 2026 analysis concluded: "The reskilling timelines companies promised in 2023-2024 proved wildly optimistic—most workers couldn't be retrained fast enough to keep pace with AI capabilities."

The disconnect: 54% of organizations say AI-specific upskilling would have high organizational impact, but only 1% had actually implemented such a strategy as of 2025.

When you say "reskilling," employees hear "delayed layoff notice." And they're not wrong.

**The Dual Mandate Model:**

HR has two non-negotiable responsibilities that must be held simultaneously:

**Mandate #1 - Transformation Enabler:**
- Partner with IT on AI tool evaluation
- Lead change management for AI implementation
- Build AI literacy across the organization
- Identify high-value use cases for AI in HR functions

**Mandate #2 - Human Dignity Steward:**
- Conduct independent bias audits before deployment
- Establish transparent monitoring policies
- Create genuine pathways for displaced workers
- Maintain human oversight of all AI decisions affecting people

These mandates don't compete. They're integrated. You don't get to choose transformation OR dignity. You have to deliver both simultaneously.

**HR's VETO Authority:**

HR has VETO authority over any AI implementation that creates unmitigated discrimination risk or violates employee dignity.
Not recommendation authority. VETO authority.

Why? Because in every lawsuit, every regulatory investigation, HR gets named. Your CEO will say "we trusted HR to vet this." Your vendor will say "we provided documentation."

The accountability has to match the liability. And the liability is ALWAYS on HR.

**The Dignity-First AI Framework:**

**Stage 1 - Pre-Deployment Dignity Assessment:**
- Bias Audit Requirement: Independent third-party audit testing for intersectional discrimination
- Transparency Threshold: Can you explain to an affected employee exactly how the AI made a decision about them?
- Human Override Protocol: Every AI decision affecting hiring, firing, or promotion must have required human review
- Surveillance Boundary Definition: What will be monitored, why, and what will NOT be monitored

**Stage 2 - Deployment with Participatory Governance:**

Create an Employee AI Advisory Council with representation from:
- Frontline workers who will be monitored or assisted by AI
- Mid-level managers who will interpret AI outputs
- Underrepresented groups who face higher discrimination risk
- Union representatives (if applicable)

**Stage 3 - Continuous Dignity Monitoring:**
- Monthly Disparate Impact Analysis: Track hiring, promotion, and termination patterns by protected class. Not annually. Monthly.
- Quarterly Bias Re-Audits: Your AI model learns, and its biases can evolve
- Employee Sentiment Tracking: Anonymous surveys specifically asking about fairness and trust

**Stage 4 - Genuine Transition Support:**
- Transparent Timeline: If a role will be automated in 18 months, tell affected workers in month 1
- Funded Reskilling: Not "here's a LinkedIn Learning account," but funded retraining with guaranteed interview opportunities
- Alternative Pathway Cre...
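
Stage 3 calls for a monthly disparate impact analysis. One common first-pass screen is the four-fifths rule from the US Uniform Guidelines on Employee Selection Procedures (29 CFR 1607.4(D)), which compares each group's selection rate with the highest group's rate; NYC Local Law 144 bias audits report a similar "impact ratio." Below is a minimal Python sketch, assuming you can pull monthly counts of candidates considered and selected per group. The function names are hypothetical, and a ratio below 0.8 is a signal for human review, not a legal finding of discrimination.

```python
from __future__ import annotations

# Four-fifths rule: a selection rate below 80% of the highest group's rate
# is commonly treated as evidence of adverse impact worth investigating.
FOUR_FIFTHS = 0.8


def selection_rates(outcomes: dict[str, tuple[int, int]]) -> dict[str, float]:
    """outcomes maps group -> (selected, total considered); empty groups are skipped."""
    return {group: selected / total for group, (selected, total) in outcomes.items() if total > 0}


def impact_ratios(outcomes: dict[str, tuple[int, int]]) -> dict[str, float]:
    """Each group's selection rate divided by the highest group's rate."""
    rates = selection_rates(outcomes)
    highest = max(rates.values(), default=0.0)
    if highest == 0:
        return {}  # nobody was selected this period, so there is nothing to compare
    return {group: rate / highest for group, rate in rates.items()}


def flag_adverse_impact(outcomes: dict[str, tuple[int, int]], threshold: float = FOUR_FIFTHS) -> list[str]:
    """Groups whose impact ratio falls below the threshold, sorted for stable reporting."""
    return sorted(group for group, ratio in impact_ratios(outcomes).items() if ratio < threshold)
```

Running the same functions on promotion and termination counts covers the other two patterns Stage 3 names. Intersectional groups (for example, Black men tracked separately from Black women, as the University of Washington study suggests) can be passed in as their own keys.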

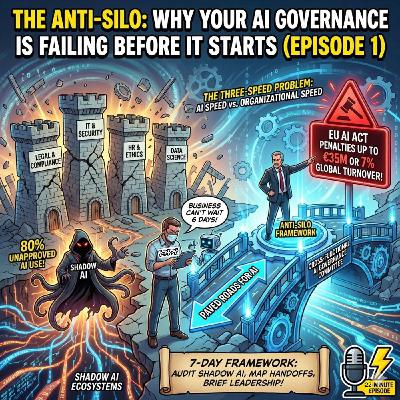

Technical debt in the United States costs organizations 2.41 trillion dollars annually.

But here's what that number obscures: IT departments have known about this debt for years. They've raised the alarm. They've documented the risks. And they've been consistently overruled by business stakeholders who don't speak their language.

The problem isn't that IT doesn't understand the business. It's that the business has never learned to understand IT, and now AI is making that translation failure catastrophic.

**The Scale of the Crisis:**
- $2.41 trillion annual cost of technical debt in the US alone (MIT Sloan)
- 75% of tech leaders will face moderate-to-high technical debt severity by 2026 (Forrester)
- 50%+ of business leaders say their infrastructure can't support the AI workloads they want to run (Microsoft)
- Only 23% of CIOs are confident they're investing in AI with built-in data governance (Salesforce)
- 282% surge in AI implementation since last year (Salesforce CIO Study)

**The Pressure IT Is Under:**

CIO.com published its analysis of IT leadership challenges just one week ago. The headline quote came from Barracuda's CIO:

[CLIP] "The biggest challenge I'm preparing for in 2026 is scaling AI enterprise-wide without losing control. AI requests flood in from every department."

That's the reality. Every department wants AI. Every department wants it now. And IT is the bottleneck everyone resents, until something breaks, at which point IT becomes the scapegoat everyone blames.

**Why AI Makes Technical Debt Exponentially Worse:**

CFO Dive reported on what it called a "tech debt tsunami" building amid the AI rush. A Forrester principal analyst explained:

[CLIP] "There's a massive amount of technical debt in IT infrastructures. It's really this perfect storm of technology growing, companies being far more distributed, and AI coming into the equation, which will make the problem exponentially worse."

The effect isn't linear.
Your legacy systems that "mostly work" become critical failure points when you try to layer AI on top of them.

DevPro Journal reframed the conversation: technical debt isn't actually technical debt. It's business risk.

[CLIP] "In the era of Large Language Models and machine learning, technical debt is actually data corruption. If your database schemas are inconsistent or your API endpoints are held together with tape, your expensive new AI features will yield hallucinations rather than insights."

**The Translation Gap:**

When IT says "technical debt," business hears "maintenance that costs money and delivers no visible value."

When IT says "infrastructure risk," business hears "IT trying to slow us down."

When IT says "we need to refactor before we scale AI," business hears "bureaucratic delay."

IT is trying to communicate probability and consequence ("if we don't fix this, there's a 40 percent chance of failure") to stakeholders who think in certainty and outcome ("will this work or not?").

The result: IT's warnings get discounted as pessimism. Its risk assessments get overruled by business urgency. And when the predicted failures occur, IT gets blamed for not preventing what it warned against.

**The Governance Paradox:**

IT is asked to simultaneously:
- Accelerate AI adoption to meet business demands
- Maintain security and compliance standards
- Prevent shadow AI without blocking innovation
- Scale infrastructure while managing technical debt
- Document everything for audit and regulatory purposes

These demands conflict. Acceleration and governance exist in tension. And IT is expected to resolve that tension without adequate resources, authority, or organizational support.

**Two Metaphors for Business Communication:**

**The Poisoned Well (Data Quality):**

Your AI is only as good as the data it's trained on.
If your data is contaminated (biased, incomplete, inconsistent, or outdated), then every AI system that drinks from that well produces poisoned outputs.

The Harvard Kennedy School's Misinformation Review found: "Training data often contain biases, omissions, or inconsistencies, which may embed systemic flaws into outputs."

But IT didn't create the data. Business units created the data through years of operational decisions: what to capture, what to ignore, how to categorize. Those decisions embedded biases that AI now amplifies.

IT can identify data quality issues. IT can flag bias patterns. But IT can't fix data quality alone; that requires collaboration with the business units that created and own the data.

**The Eager Intern (Model Hallucination):**

AI hallucinations are a governance crisis that business stakeholders fundamentally misunderstand. They assume AI either works or doesn't work. They don't understand that AI can confidently produce completely fabricated outputs.

Imagine an intern who's desperate to please, never admits uncertainty, and will confidently make things up rather than say "I don't know." That's your AI model.

Recent incidents documented by Wikipedia (updated three days ago):
- October 2025: Deloitte submitted an AU$440,000 report to the Australian government containing fabricated academic sources and fake quotes from a federal court judgment
- November 2025: Another Deloitte report, for the Government of Newfoundland and Labrador ($1.6 million CAD), contained at least four false citations to non-existent research papers

These weren't edge cases. These were reports from a major consulting firm, containing AI-generated hallucinations that no one caught before submission.

IT can implement guardrails: retrieval-augmented generation, fact-checking pipelines, confidence scoring. But IT can't supply the domain expertise needed to catch industry-specific hallucinations. A legal hallucination requires legal expertise to detect.
A medical hallucination requires medical expertise.

**The Anti-Silo Solution:**

The solution isn't giving IT more authority to block AI initiatives. It's creating shared ownership structures where IT enables rather than gates.

**The AI Studio Model:**

CIO & Leader interviewed the CTO of ICICI Prudential Asset Management about balancing governance and innovation:

[CLIP] "Centralized evaluation with decentralized execution. The central team defines standards, evaluates models, ensures compliance, and maintains oversight. Functional business units own specific AI use cases."

IT doesn't approve every AI initiative. IT creates the governed pathways (the "paved roads" we introduced in Episode 1) that business units can use without per-project approval.

**Responsible AI FinOps:**

CIO.com published an analysis of "the hidden operational costs of AI governance." Most organizations manage AI cost and AI governance as separate concerns owned by different departments.

[CLIP] "This organizational structure leads to projects that are either too expensive to run or too risky to deploy. The solution is managing AI cost and governance risk as a single, measurable system."

New metrics needed:
- Cost per compliant decision
- Development rework cost due to governance failures
- Governance monitoring overhead
- Explainability overhead as a percentage of compute

Make governance costs visible before they become surprises.

**The Cross-Functional Tiger Team:**

For high-risk AI initiatives, create integrated teams: IT, Legal, Compliance, the business unit owner, and Finance. Give them shared accountability for both delivery and governance. Measure them on risk-adjusted outcomes, not just deployment speed.

**The Proof That This Works:**

Accenture studied 1,500 global companies across 19 industries. Companies well-positioned for AI change h...
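The Responsible AI FinOps segment names four metrics but not their formulas. A minimal sketch, assuming one reporting period and hypothetical input fields (`PeriodCosts`, `finops_report`, and every field name are illustrative, not from CIO.com), shows how the four could be computed as a single report so cost and governance are read together:

```python
from dataclasses import dataclass


@dataclass
class PeriodCosts:
    """Hypothetical inputs for one reporting period.

    Cost fields share one currency; compute fields share one unit (e.g. GPU-hours).
    """
    inference_cost: float          # model serving and API spend
    monitoring_cost: float         # governance monitoring tooling and staff time
    rework_cost: float             # development rework caused by governance failures
    explainability_compute: float  # compute spent generating explanations
    total_compute: float           # all AI compute in the period, explainability included
    decisions_compliant: int       # AI-assisted decisions that passed compliance review


def finops_report(c: PeriodCosts) -> dict[str, float]:
    """Compute the four metrics under assumed (not source-given) definitions."""
    total_cost = c.inference_cost + c.monitoring_cost + c.rework_cost
    return {
        # All-in cost spread only over compliant decisions, so non-compliant
        # output raises the unit cost instead of disappearing from it.
        "cost_per_compliant_decision": (
            total_cost / c.decisions_compliant if c.decisions_compliant else float("inf")
        ),
        # Reported as-is so rework stays visible as its own line item.
        "rework_cost": c.rework_cost,
        # Share of total AI spend that goes to governance monitoring.
        "monitoring_overhead_pct": (
            100 * c.monitoring_cost / total_cost if total_cost else 0.0
        ),
        # Share of compute spent producing explanations.
        "explainability_overhead_pct": (
            100 * c.explainability_compute / c.total_compute if c.total_compute else 0.0
        ),
    }


if __name__ == "__main__":
    # Illustrative numbers only.
    period = PeriodCosts(
        inference_cost=8000.0,
        monitoring_cost=1500.0,
        rework_cost=500.0,
        explainability_compute=120.0,
        total_compute=1000.0,
        decisions_compliant=9500,
    )
    for name, value in finops_report(period).items():
        print(f"{name}: {value:.2f}")
```

Dividing by compliant decisions, rather than all decisions, is a deliberate choice in this sketch: governance failures surface as a higher unit cost instead of staying hidden in a separate department's budget.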

Gartner predicts that by 2026, 20 percent of organizations will use AI to eliminate more than half of their middle management positions.

But here's what that headline misses: the organizations flattening their structures are also losing the only people who can translate C-suite AI mandates into operational reality.

Your middle managers aren't the problem. They're the last line of defense between your AI strategy and your shadow AI crisis, and you're about to fire them.

**The Scale of the Elimination:**
- 20% of organizations will use AI to eliminate 50%+ of middle management positions by 2026 (Gartner)
- IMD expects a 10-20% reduction in traditional middle-management positions by the end of 2026
- Largest reductions: reporting-heavy roles in finance, compliance, supply chain planning, and procurement

**But Here's What the Headlines Miss:**

A Prosci study surveying over 1,100 professionals found that 63 percent of organizations cite human factors as the primary challenge in AI implementation.

Not technology. Not budget. Human factors.

And guess who's supposed to manage those human factors? Middle management.

The same research found that mid-level managers are the most resistant group to AI adoption, followed by frontline employees. That finding has been weaponized to justify eliminating the management layer.

But resistance isn't random defiance.
It's a signal.

When middle managers resist AI initiatives, they're often responding to real problems:
- Unclear mandates from above
- Inadequate training
- Tools that don't integrate with existing workflows
- Accountability structures that hold them responsible for outcomes they can't control

**The Knowledge Inversion:**

There's a phenomenon happening that nobody's talking about directly: middle managers often know more about AI than their senior executives.

A Mindflow analysis found:
- 71% of middle managers actively use AI in their daily work
- Only 52% of senior leaders use AI regularly
- Nearly half of senior executives have never used an AI tool at all

This creates what researchers call a "knowledge inversion": the people making strategic AI decisions have less hands-on experience than the people implementing them.

C-suite executives issue mandates based on vendor presentations and board pressure. Middle managers receive those mandates knowing, from direct experience, that the implementation will be more complex than leadership understands.

When middle managers raise concerns, they're perceived as resistant. When they propose alternatives, they're overruled by executives who lack the operational knowledge to evaluate their suggestions.

**The Accountability Trap:**

Middle managers are expected to:
- Drive AI adoption within their teams
- Manage shadow AI risks they can't see
- Implement governance protocols they didn't design
- Hit productivity targets that assume AI integration
- Maintain team morale through technological disruption

And they're expected to do all of this without clear authority over tool selection, budget allocation, or policy creation.

[CLIP] "This is the accountability trap: responsibility without authority, expectations without resources."

The Allianz Risk Barometer 2026, released this month, found that AI has surged to the number two global business risk, up from number ten in 2025.
That's the biggest jump in the entire ranking.

Allianz's analysis: "In many cases, adoption is moving faster than governance, regulation, and workforce readiness can keep up."

Who's responsible for workforce readiness? Middle management.

Who's blamed when adoption outpaces governance? Middle management.

Who has the authority to slow adoption until governance catches up? Not middle management.

**The Translation Failure:**

C-suite executives speak in strategy: competitive advantage, market position, ROI potential.

Frontline employees speak in tasks: "how does this help me do my job?"

Middle managers are supposed to translate between these languages.

But AI introduces a third language, technical complexity that neither strategic executives nor task-focused employees fully understand: inference costs, model drift, hallucination rates, prompt engineering, fine-tuning requirements.

Most middle managers weren't trained in this language. They're expected to translate strategies they don't fully understand into implementations they can't technically evaluate.

Fast Company identified three functions that will define the future of middle management:
1. Orchestrating AI-human collaboration
2. Serving as agents of change through continuous AI-driven disruption
3. Coaching employees through constant reskilling and role evolution

These are sophisticated capabilities. But how many organizations are actually developing them in their management layer, rather than simply expecting them to emerge?

**The Human-in-the-Loop Reality:**

"Human-in-the-Loop" has become the default reassurance in AI governance. It appears in policies, governance frameworks, and implementation plans. But its practical meaning is still emerging.

The EU AI Act requires human oversight of high-risk systems (Article 14). But implementation varies wildly.

MobiHealthNews interviewed an AI governance expert preparing for the 2026 HIMSS conference. Her message was direct:

[CLIP] "Stop asking 'Do we have Human-in-the-Loop?'
and start asking 'Have we designed for the human in the loop?'"

- What is the person expected to do at the decision point?
- How much time do they have?
- What information and constraints are visible?
- What happens if they disagree or need to escalate?

Those are middle management questions. The human in the loop is often a manager or team lead who's supposed to validate AI outputs, without clear guidance on what validation means, without time allocated for validation, and without authority to halt processes when validation fails.

Accounting Today was blunt: "The biggest gap isn't in the models. It's in people. Most finance professionals were trained to interpret evidence, not interrogate algorithms."

That's not governance. That's liability theater.

**The Solution: Middle Management as Translation Layer:**

The solution isn't eliminating middle management. It's reinventing it.

In the Anti-Silo framework, middle management isn't a hierarchical layer to be flattened. It's a translation layer to be strengthened.

**Three Translation Functions:**

**Upward Translation:** Converting operational reality into strategic intelligence. When frontline employees are using shadow AI tools because approved alternatives don't work, middle managers translate that signal into actionable feedback for the governance committee.

**Downward Translation:** Converting strategic mandates into operational implementation. When the C-suite announces an AI initiative, middle managers translate the strategic intent into workflow changes their teams can actually execute.

**Lateral Translation:** Facilitating cross-functional collaboration at the operational level. When an AI tool affects multiple departments, middle managers coordinate across silos.

**The Shadow AI Response Framework (4 Steps):**

**Step 1 - Discovery, Not Enforcement:** The first response to shadow AI shouldn't be punishment. It should be understanding. Why is this person using this tool? What need does it meet?
What approved alternative failed them?

**Step 2 - Risk Assessment:** Not all shadow AI is equally dangerous. Middle managers need a simple risk classification framework, provided by Security and Legal, that lets them triage what they discover.

**Step 3 - Pathway Creati...
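Step 2 of the Shadow AI Response Framework asks Security and Legal to hand middle managers a classification simple enough to apply on the spot. The source does not define one, so everything in the sketch below is hypothetical: the `DataClass` levels, the three finding attributes, the tier rules in `triage`, and the `RESPONSES` text are illustrative assumptions meant to show how small such a rubric can be, not a recommended policy.

```python
from dataclasses import dataclass
from enum import IntEnum


class DataClass(IntEnum):
    """Hypothetical data-sensitivity levels; Security and Legal would define the real ones."""
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2
    REGULATED = 3  # e.g. personal, health, or financial data


@dataclass
class ShadowAIFinding:
    tool: str
    data_class: DataClass           # most sensitive data the tool has been given
    affects_people_decisions: bool  # output feeds hiring, promotion, discipline, etc.
    vendor_agreement: bool          # an approved data-processing agreement exists


# Hypothetical first response for each tier.
RESPONSES = {
    "high": "Pause use and escalate to Security and Legal",
    "medium": "Restrict data inputs and route to an approved alternative",
    "low": "Document the need and feed it into pathway creation",
}


def triage(f: ShadowAIFinding) -> str:
    """Map a discovered shadow AI use to a hypothetical risk tier."""
    # Regulated data or decisions about people always go straight to the specialists.
    if f.data_class >= DataClass.REGULATED or f.affects_people_decisions:
        return "high"
    # Confidential data, or internal data sent to a vendor with no agreement.
    if f.data_class >= DataClass.CONFIDENTIAL or (
        f.data_class == DataClass.INTERNAL and not f.vendor_agreement
    ):
        return "medium"
    return "low"


if __name__ == "__main__":
    finding = ShadowAIFinding("meeting summarizer", DataClass.INTERNAL, False, False)
    tier = triage(finding)
    print(tier, "->", RESPONSES[tier])
```

Keeping the rubric to a few yes/no questions is the point of the design: a manager doing Step 1 discovery can classify a finding without a security review, and only the high tier pulls in specialists.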