Statistical Methods & Thinking

Author: Weijing Wang @ NYCU

© Weijing Wang @ NYCU

Description

The materials in this podcast are generated by NotebookLM based on the lecture notes of the course Applied Statistical Methods, offered at NYCU and taught by Weijing Wang.

The podcast covers core methods for analyzing associations in data, including correlation analysis, simple and multiple linear regression (estimation, testing, and model checking), and discussions on association versus causation. It also introduces methods for categorical data analysis such as contingency tables, chi-square tests, logistic regression, and the generalized linear model framework.

13 Episodes

In this episode, we introduce the core ideas behind analyzing time-to-event data: situations where the outcome isn’t just “what happened,” but when it happened. A key challenge is that some participants haven’t experienced the event yet by the end of follow-up (or they drop out), so the data are only partially observed. We build the intuition for describing how risk changes over time, then walk through three practical tools: how to estimate a survival curve from one group, how to compare two groups fairly over the whole follow-up, and how to study the role of multiple predictors while keeping the time dimension front and center.
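To make "estimating a survival curve from one group" concrete, here is a minimal sketch in plain Python. The episode description does not name the estimator; the sketch assumes the Kaplan-Meier (product-limit) estimator, which is the usual choice when some follow-up times are censored. The function name `kaplan_meier` and the toy data are illustrative assumptions, not course material.

```python
def kaplan_meier(times, events):
    """Return [(event_time, survival_probability), ...].

    times  : follow-up time for each subject
    events : 1 if the event was observed at that time, 0 if censored
    """
    data = sorted(zip(times, events))
    at_risk = len(data)      # subjects still under observation
    survival = 1.0
    curve = []
    i = 0
    while i < len(data):
        t = data[i][0]
        observed = 0         # events occurring exactly at time t
        leaving = 0          # everyone leaving the risk set at time t
        while i < len(data) and data[i][0] == t:
            observed += data[i][1]
            leaving += 1
            i += 1
        if observed > 0:
            # Multiply by the conditional probability of surviving past t.
            survival *= 1 - observed / at_risk
            curve.append((t, survival))
        at_risk -= leaving
    return curve


# Made-up data: five subjects, two of them censored (event flag 0).
times = [2, 3, 3, 5, 8]
events = [1, 1, 0, 1, 0]
for t, s in kaplan_meier(times, events):
    print(f"t = {t}: S(t) = {s:.2f}")
```

Censored subjects never lower the curve directly; they only shrink the risk set for later event times, which is how partially observed data still contribute information.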

In this episode, we step into multivariate thinking and ask a practical question: when do data points naturally form “groups,” and how can we use those groups to make decisions? We walk through how grouping methods decide what’s “close” or “similar,” then compare two main approaches: building clusters step by step versus forming clusters all at once. You’ll also hear how tree-like visual summaries help us see structure in messy data, and how the same multivariate ideas can be flipped into classification, where the goal is to assign a new case to the most likely group.

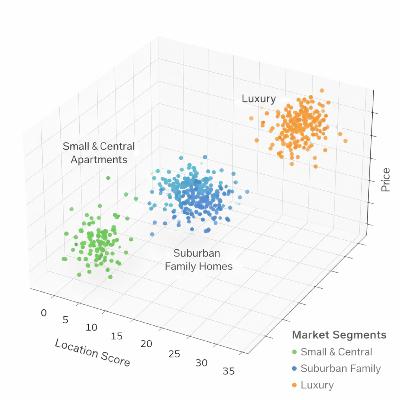

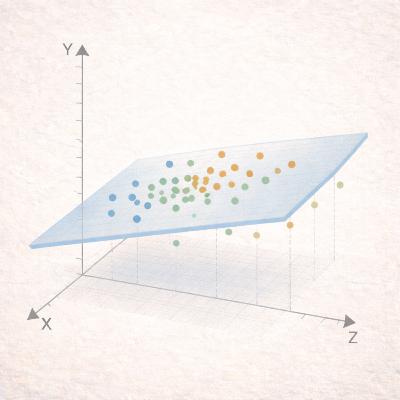

This episode is about what to do when your data has many variables at once. We start with the basic idea of how variables “move together” (correlation and covariance), and why that matters for understanding patterns in real datasets. Then we introduce dimension reduction: ways to compress lots of information into a few summary features, so you can see the main structure without getting lost in details. We explain how these methods find the directions where the data varies most, and how a simple “rotation” can make the results easier to interpret. We wrap up with practical rules of thumb for deciding how many components to keep, and a quick preview of how these ideas connect to grouping similar observations and classifying new cases.

This episode is about working with categorical outcomes—questions where results fall into categories rather than a numeric scale. We learn how to check whether two variables are related, how to model the chance of a “yes/no” outcome using multiple predictors, and how to compare different modeling choices. We finish with simple ways to judge how well a model fits and whether a simpler or more detailed model is the better choice.

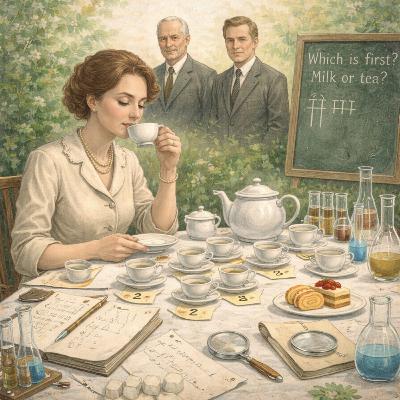

In this episode, we start with Fisher’s “Lady Tasting Tea,” a classic reminder that good questions need good experimental design. Then we shift from continuous outcomes to categorical data: how a simple 2×2 table turns test results into sensitivity/specificity, and study results into association measures like relative risk and odds ratios. Next, we unpack Simpson’s paradox: how the headline conclusion can flip once you stratify by a key factor. We wrap up with practical inference tools, including Fisher’s exact test and the chi-square test, plus a quick nod to ROC/AUC for evaluating classifiers.
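As a rough illustration of how a 2×2 table turns into the measures mentioned above, the following plain-Python sketch computes sensitivity and specificity for a diagnostic test, and relative risk and odds ratio for an exposure study. The function names, the cell layout, and all counts are made up for this example and are not taken from the episode.

```python
def diagnostic_measures(tp, fn, fp, tn):
    """Sensitivity and specificity from a test-vs-truth 2x2 table.

    tp, fn : diseased subjects who test positive / negative
    fp, tn : healthy subjects who test positive / negative
    """
    sensitivity = tp / (tp + fn)   # P(test positive | diseased)
    specificity = tn / (tn + fp)   # P(test negative | healthy)
    return sensitivity, specificity


def association_measures(a, b, c, d):
    """Relative risk and odds ratio from an exposure-vs-outcome 2x2 table.

    a, b : exposed subjects with / without the outcome
    c, d : unexposed subjects with / without the outcome
    """
    risk_exposed = a / (a + b)
    risk_unexposed = c / (c + d)
    relative_risk = risk_exposed / risk_unexposed
    odds_ratio = (a * d) / (b * c)
    return relative_risk, odds_ratio


# Hypothetical counts, chosen only to keep the arithmetic easy to follow.
sens, spec = diagnostic_measures(tp=90, fn=10, fp=30, tn=170)
rr, odds = association_measures(a=20, b=80, c=10, d=90)
print(f"sensitivity = {sens:.2f}, specificity = {spec:.2f}")
print(f"relative risk = {rr:.2f}, odds ratio = {odds:.2f}")
```

With these counts the risk is 20% among the exposed and 10% among the unexposed, so the relative risk is 2 while the odds ratio is 2.25; the two measures agree closely only when the outcome is rare.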

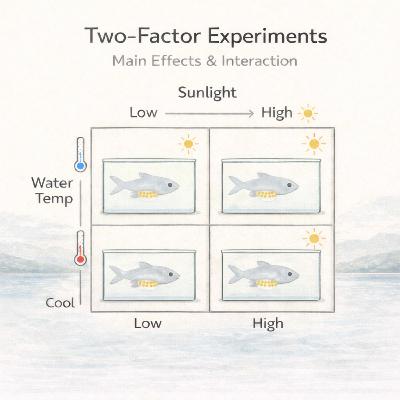

This episode moves from one-way ANOVA to two-factor randomized experiments, focusing on how to test main effects and, more importantly, interactions—when the effect of one factor depends on the level of the other. Using examples like printer sales and a fish reproduction index, we show how ANOVA partitions variation and supports hypothesis testing. We also give a quick tour of extensions including random-effects and mixed-effects models, plus ANCOVA for adjusting with covariates. In the second half, we shift to study design in biomedicine—contrasting prospective vs. retrospective data collection—and close with a short introduction to categorical data analysis and basic concepts in clinical trials.

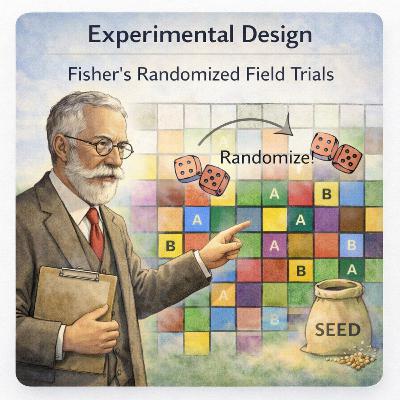

This episode introduces the core logic of experimental design and ANOVA: what we mean by causality, factors, and confounders—and why randomization, replication, and blocking are the practical tools that make comparisons fair. We build the one-way ANOVA model, run the hypothesis test in R, and discuss multiple comparisons and how to control Type I error. We also connect ANOVA to regression, highlight R.A. Fisher’s role in modern statistics, and close with randomized block designs to improve precision by accounting for nuisance variation.

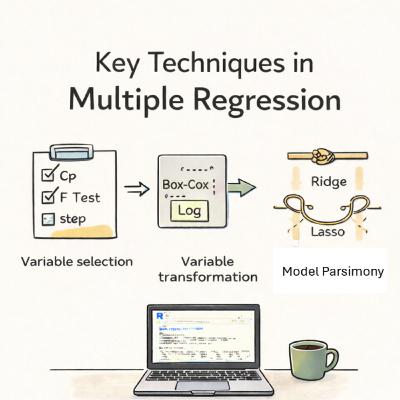

Episode 6 is about making multiple regression work in real life: how to choose predictors without overfitting, when to transform variables to fix messy variance or nonlinearity, and what to do when predictors are strongly correlated. We’ll walk through tools like Mallows’ Cp, partial F tests, and stepwise selection, then wrap up with ridge and lasso as practical fixes for multicollinearity—with quick examples along the way.

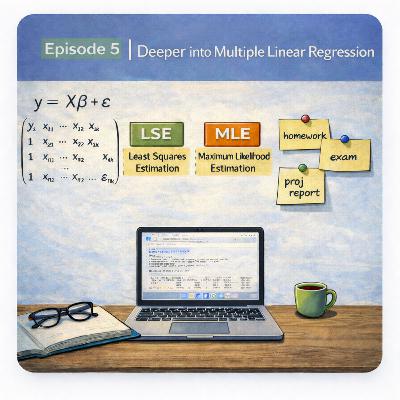

Episode 5 connects the “big picture” of multiple linear regression: the matrix form of the model, how least squares and maximum likelihood lead to the same estimates under standard assumptions, and what the ANOVA table is really decomposing. We compare R-squared vs. adjusted R-squared, review t-tests for individual predictors and the F-test for overall model validity, and finish with practical model selection (AIC and partial F-tests) plus examples on diagnosing outliers and interpreting results.

Episode 4 introduces multiple linear regression: how to model an outcome using several predictors at once, and how to interpret each effect while holding the others constant. We cover dummy variables for categorical data, and interaction terms (e.g., how experience and gender together can change salary patterns). We also compare regression with the two-sample mean test, showing how they’re related but regression is more flexible. We end with a practical note: p-values aren’t the whole story, and conclusions should rely on context and assumptions, with nonparametric options available when data don’t fit normality well.

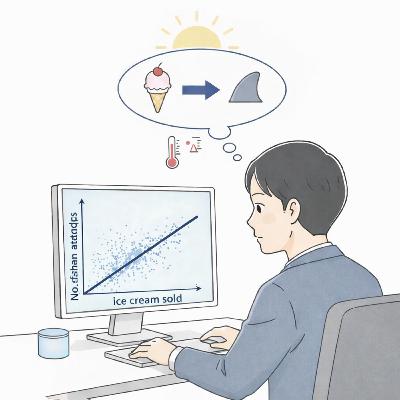

This episode builds on simple linear regression by focusing on statistical inference: how we move from a fitted line to meaningful conclusions. We review the intuition behind least squares and explain why switching the roles of the two variables can lead to different fitted lines. We then discuss how to interpret regression results in practice, including point estimates, uncertainty, and the difference between statements about an average outcome versus predictions for an individual. Using simple examples and R-based illustrations, the episode highlights how confidence intervals and prediction intervals answer different questions. Finally, we return to a key warning in applied data analysis: association is not causation. Through classic real-world examples (such as ice cream sales and shark attacks), we explain how hidden variables can create misleading relationships, and why a strong regression fit does not automatically justify a causal claim.

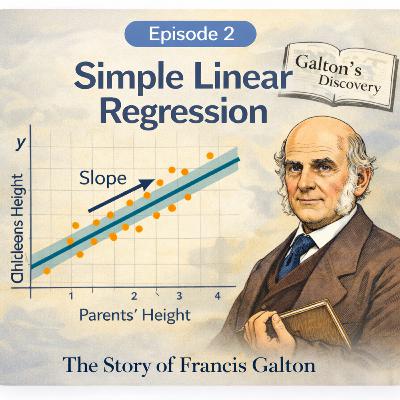

This episode introduces simple linear regression as a tool for understanding trends and making predictions from data. We begin with the historical insight of Francis Galton, whose study of the relationship between parents’ and children’s heights helped popularize the idea of describing association with a straight line. Building on this intuition, we explain the meaning of the slope and intercept in practical terms, and how they connect to interpretation and prediction. We then emphasize why it is important to check whether a linear model is appropriate before trusting it, using scatter plots and residual plots to detect nonlinearity, unequal variability, and unusual observations. Finally, we discuss how to judge whether the model is actually useful: how much it explains the variation in the data, what to look for in common regression output, and how these ideas translate into practice through simple examples.

This episode is based on the course syllabus and the first lecture of Applied Statistical Methods. The primary goal is to introduce students to applied data analysis using statistical tools. The lecture focuses on the analysis of association in data, with particular emphasis on graphical methods for describing bivariate relationships. Scatter plots are introduced as a fundamental tool for visualizing data and guiding statistical interpretation. Different measures of correlation, including Pearson, Kendall, and Spearman coefficients, are discussed and compared in terms of their assumptions, strengths, and robustness. Through these comparisons, the episode highlights how outliers and nonlinear relationships can affect statistical conclusions. The episode also provides an overview of how statistical methods are applied in practice, drawing on examples from past student projects in fields such as medicine, social sciences, and business analytics.
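The point about outliers affecting the three correlation coefficients differently can be sketched in plain Python. This is an illustration under assumptions rather than the lecture's own example: the data are invented, Spearman is computed as Pearson on average ranks, and Kendall is the simple tau-a form that does not correct for ties.

```python
import math


def pearson(x, y):
    """Pearson correlation: strength of the linear relationship."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def ranks(values):
    """Ranks starting at 1; tied values share their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        average_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            result[order[k]] = average_rank
        i = j + 1
    return result


def spearman(x, y):
    """Spearman correlation: Pearson correlation of the ranks."""
    return pearson(ranks(x), ranks(y))


def kendall_tau_a(x, y):
    """Kendall tau-a: (concordant - discordant) pairs over all pairs."""
    n = len(x)
    score = 0
    for i in range(n):
        for j in range(i + 1, n):
            product = (x[i] - x[j]) * (y[i] - y[j])
            if product > 0:
                score += 1
            elif product < 0:
                score -= 1
    return score / (n * (n - 1) / 2)


# Invented data: y rises steadily with x, except for one extreme last value.
x = [1, 2, 3, 4, 5, 6, 7, 8]
y = [1.1, 2.3, 2.9, 4.2, 5.1, 5.8, 7.2, 40.0]
print(f"Pearson  = {pearson(x, y):.3f}")
print(f"Spearman = {spearman(x, y):.3f}")
print(f"Kendall  = {kendall_tau_a(x, y):.3f}")
```

Because the extreme value still preserves the ordering of the points, the two rank-based coefficients stay at 1, while Pearson drops well below 1. An outlier that breaks the ordering would lower all three, though typically Pearson the most.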