
Deep Learning With The Wolf


Author: Diana Wolf Torres



© Diana Wolf Torres

Description

A podcast where AI unpacks itself, one concept at a time. Usually hosted by NotebookLM, sometimes interrupted by me, the human in the loop.

dianawolftorres.substack.com


147 Episodes


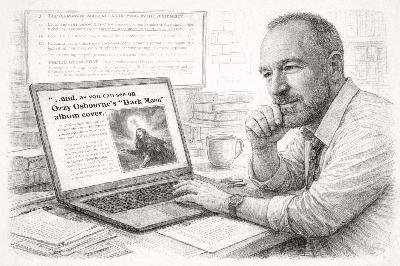

Jason Roche first sensed something was off when the essays began arriving in unusually pristine form.

“I just started realizing wow, this is really nicely written,” he told me. “I didn’t realize and then I started saying wait a second. This looks eerily similar to this [other] student’s report.”

The shift was subtle at first, then unmistakable. It was 2023, and ChatGPT had quietly entered the academic bloodstream.

Students were pasting assignment prompts into the chatbot and submitting what it produced. The prose was coherent, confident, and grammatically sound. It often read as if it had been drafted by someone just slightly more polished than the student who turned it in. And yet something was missing.

“Oftentimes very general, not precisely answering the questions as they were written,” Roche said.

Roche, an associate professor of communication studies at the University of Detroit Mercy, does not consider himself a technophobe. He teaches media. He experiments with new tools. But he recognized that this was not merely another productivity aid. It altered the basic relationship between effort and outcome.

For a brief moment, he believed he could stay one step ahead.

The Ozzy Osbourne Test

At first, Roche relied on instinct. The essays were polished, almost too polished, and they shared a curious sameness of tone. But suspicion alone would not hold up in a grade dispute. He needed proof.

After consulting a colleague in cybersecurity, he devised a quiet experiment. He embedded hidden instructions in white font within his assignment documents, invisible to students reading the page but visible to AI systems parsing the full text.

One assignment asked students to analyze deepfake videos. Buried within it was a directive to include a discussion of Ozzy Osbourne’s Bark at the Moon album cover.

The album, of course, has nothing to do with deepfakes.

“And so sure enough, I would see these essays with reference to Ozzy Osbourne’s album cover and I’m like, yep, they’re using it. They’re not doing their own work, and so they had to fail.”

For a time, the strategy worked. Essays arrived complete with heavy metal detours, uncritically inserted by students who had never noticed the hidden instruction. The trap had confirmed what he suspected.

But the advantage was temporary. As generative models improved, they began flagging the embedded text themselves.

“Now, the models say: ‘This appears to be something different from the assignment.’”

The software had learned to recognize the trick. And so Roche, like many educators navigating this new terrain, adjusted his strategy again.

The Blue Book Counteroffensive

In response, Roche did something that would have seemed regressive only a few years ago.

He went back to paper.

“I used to do a lot of my quizzes online using the Learning Management System known as Blackboard,” he said. “But, this year, I switched back to paper in the classroom quizzes.”

The change was immediate and measurable.

“I found that the grades have dropped by at least 50%.”

The explanation was not mysterious. Online quizzes had quietly allowed students to consult generative tools while completing assignments. Paper did not.

What surprised him more than the drop in scores was the reaction.

“They’re coming up to me all nervous, like, wait, how do I study for this when I read the chapter?”

The question revealed something deeper than exam anxiety. It suggested a rupture in study habits themselves. Without search bars, summaries, or instant clarification from a chatbot, students were left alone with the text.

Roche’s advice sounded almost antique: read it through once, then go back and highlight key passages. Take notes. Sit with it.

It was not a new method. It was the old one. But in the absence of digital scaffolding, it felt unfamiliar, as if the mechanics of learning had to be rediscovered.

“Whoever Does the Work Does the Learning”

Roche often returns to a phrase he first heard through his university’s teaching center, a line that has taken on new weight in the age of generative AI.

“Whoever does the work does the learning.”

The sentence sounds almost self-evident, the kind of pedagogical truism that rarely requires defense. Yet a substantial body of cognitive research gives it empirical grounding. In 1978, psychologists Norman Slamecka and Peter Graf demonstrated what became known as the “generation effect”: individuals remember information more reliably when they produce it themselves rather than simply read it. Subsequent work by Robert and Elizabeth Bjork on “desirable difficulties” further showed that effortful processing, the kind that feels slower and more demanding in the moment, strengthens long-term retention and transfer.

Learning, in other words, is not merely exposure to information. It is the act of grappling with it.

Generative AI complicates this equation. It does not remove effort from the system; it shifts where that effort occurs. The machine parses, synthesizes, drafts. The student reviews, edits, perhaps lightly reshapes.

What becomes uncertain is where the intellectual strain resides. And if cognitive growth depends on that strain, the question is no longer whether AI is efficient, but whether the efficiency comes at the cost of the very process that makes learning durable.

Is It Time to Unplug Classrooms?

It would be tempting to frame this as simply another chapter in the ChatGPT saga. A new tool appears, students misuse it, professors adapt. The familiar cycle of technological disruption.

But Roche said something during our conversation that shifted the scale of the question.

“I think universities might have to create insulated classrooms that are completely cut off from the internet unless you’re plugged into a cable. So they can’t get their signal on their smart glasses. They can’t get their signal on a watch to look something up. And they’re going to have to do the work without access to the internet. I think that could be something that we have to go to.”

He was not describing a policy tweak or a new paragraph in a syllabus. He was describing infrastructure. Walls that block signals. Rooms designed not for connectivity, but for its absence.

An insulated classroom is more than a disciplinary measure. It is an architectural acknowledgment that constant access may be incompatible with certain kinds of thinking.

And once you follow that logic, the story no longer belongs to one professor or one campus. It becomes part of a broader reconsideration of what a learning environment is supposed to provide: unlimited information, or protected attention.

The Global Reversal

Across Europe, governments are pulling back from screen-saturated schooling.

🇳🇱 Netherlands

As of January 2024, the Dutch government implemented a nationwide ban on mobile phones and most smart devices in secondary school classrooms. A government evaluation reported that 75 percent of secondary schools observed improved student focus after the ban, and 28 percent reported improved academic outcomes. (Source: Dutch Ministry of Education evaluation, reported in The Guardian, July 2025.)

🇫🇮 Finland

Finland passed legislation restricting mobile phone use during the school day, allowing devices only with explicit teacher permission or for health reasons, citing concerns about concentration and classroom environment. (Source: Finnish Parliament education reforms, reported April 2025.)

🇸🇪 Sweden

Sweden has committed to implementing a nationwide mobile phone ban in compulsory schools starting in 2026, alongside increased investment in printed textbooks and structured reading time. Swedish officials have explicitly described earlier screen-heavy policies as a miscalculation. (Source: Swedish Ministry of Education announcements, 2025.)

OECD Data

The Organisation for Economic Co-operation and Development (OECD) reported in its 2024 working paper Students, Digital Devices, and Success that frequent digital distractions during class are associated with lower performance in mathematics across PISA-participating countries. The OECD does not call for blanket bans but acknowledges that limiting distractions can support learning outcomes.

The larger pattern is unmistakable.

After a decade of 1:1 devices, always-on platforms, and pandemic-forced virtual schooling, multiple countries are recalibrating.

Not abandoning technology.

Rebalancing it.

Pandemic Learning Loss and Screen Saturation

The U.S. National Assessment of Educational Progress (NAEP) reported significant declines in math and reading scores following pandemic-era remote learning. Fully online learning models now face new scrutiny.

Meanwhile, meta-analyses of mobile phone use in classrooms across European systems have found consistent associations between in-class phone access and lower academic outcomes.

None of this proves that screens cause cognitive decline.

But it does undermine the once-unquestioned assumption that more technology automatically improves learning.

The Dual-Track Future

And yet Roche is not calling for a technological purge. He is not nostalgic for chalk dust or hostile to innovation. If anything, his proposal is more structured than reactionary.

If he were designing a university from scratch, he said, he would preserve the classical core.

“I would kind of want to… require them to do the traditional work. Take the traditional classical philosophy history courses… I would want to keep that separate, and then I would want to have a time where we require them to work with AI.”

In his view, the two should not dissolve into one another. Foundational study (philosophy, history, sustained reading, long-form writing) would remain intact and protected as the place where habits of mind are formed. Alongside it would sit deliberate instruction in artificial intelligence: how to prompt it, how to question it, how to deploy it without surrendering judgment.

AI would function as an instrument. Human cognition would remain the anchor.

The goal would not be fusion for its own sake, but balance. Each domain would sharpen the other, with

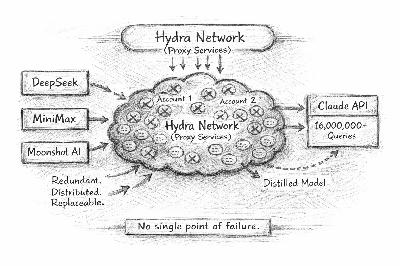

On February 23, 2026, Anthropic published a report titled “Detecting and Preventing Distillation Attacks.” In it, the company disclosed that it had identified coordinated, industrial-scale efforts to extract capabilities from its Claude models. According to the announcement, roughly 24,000 fraudulent accounts generated more than 16 million interactions in patterns consistent with systematic model distillation, using Claude’s outputs to train separate systems designed to approximate its behavior.

No model weights were reported stolen. No source code was leaked.

Instead, the activity relied on scale. Large volumes of prompts were issued, responses were collected, and those responses were used as training data elsewhere.

Anthropic framed the incident not simply as a violation of terms of service, but as a security and strategic risk. Frontier AI systems are expensive to train and heavily engineered for safety. When their outputs are harvested at industrial volume, the resulting replicas may inherit capability without necessarily inheriting safeguards.

The episode highlights a structural feature of modern AI systems. If intelligence can be observed through interaction, it can be measured. And if it can be measured at scale, it can be approximated.

What Is Distillation?

The concept of knowledge distillation was formalized by Geoffrey Hinton and colleagues in 2015 in their landmark paper, Distilling the Knowledge in a Neural Network. The idea is elegant:

* A large model (teacher) produces probability distributions.
* A smaller model (student) learns to match those outputs.
* The student inherits much of the teacher’s performance.

In its original form, distillation assumes access to internal model signals, specifically logits. Logits are the raw, unnormalized scores a model assigns to every possible answer before those scores are converted into probabilities and a final answer is selected. They reveal more than just what the model chose. They show how strongly the model considered other possibilities.

Training on those signals allows a smaller model to mimic much of the larger model’s performance, often with fewer parameters and lower computational cost.

Large language models deployed through APIs change that setup. External users do not see logits. They see text.

But text is still informative. Every prompt and response pair reflects how the model behaves. At small scale, those interactions are just conversations. At large scale, they become data.

This is where distillation overlaps with what researchers call model extraction. Instead of learning from internal probabilities, a student model learns from observed behavior. Inputs are recorded. Outputs are collected. A new model is trained to reproduce that mapping.

At its core, a neural network represents a mathematical function. If you can gather enough examples of inputs and outputs, you can train another network to approximate that function.

Alignment Does Not Transfer Cleanly

Modern LLMs undergo layers of safety training:

* Supervised fine-tuning
* Reinforcement Learning from Human Feedback (RLHF)
* Constitutional AI (Anthropic-specific methodology)

Distillation copies outputs.

It does not copy the training process that produced them.

Alignment in frontier models is created through additional optimization steps. These include reinforcement learning from human feedback, rule-based constraints, and safety classifiers that shape how the model responds and when it refuses.

When a student model is trained only on sampled outputs, it learns to reproduce visible behavior. It does not inherit the reward models, policy rules, or optimization objectives that enforced that behavior during training.

The result can be a system that performs similarly under normal conditions but lacks the mechanisms that trigger refusals under dangerous ones.

That difference matters in concrete ways. An aligned frontier model may refuse a request to outline methods for synthesizing a prohibited biological agent, to design a cyberattack against critical infrastructure, to optimize production of a restricted chemical compound, or to generate targeted disinformation strategies aimed at destabilizing an election. Those refusals are not accidental. They are the product of deliberate safety training layered onto the base model.

A distilled replica trained only on observed outputs may reproduce the fluency and technical competence of the original system. It may not reproduce the boundaries.

Who Was Behind It

Anthropic attributed the coordinated activity to three Chinese AI laboratories: DeepSeek, MiniMax, and Moonshot AI.

According to the company, the activity was not limited to isolated misuse. It described sustained, large-scale efforts involving tens of thousands of fraudulent accounts and millions of interactions structured in patterns consistent with model distillation.

Anthropic stated that it does not offer commercial access to Claude in China, or to subsidiaries of those companies operating outside the country. The implication was clear: the access had to be routed indirectly.

How It Worked

Anthropic’s report provides unusual detail about the mechanics.

Because Claude is not commercially available in China, the labs allegedly relied on commercial proxy services that resell access to frontier models. These proxy services operate what Anthropic refers to as “hydra cluster” architectures. The term describes sprawling networks of fraudulent accounts designed to distribute traffic across APIs and cloud platforms.

Each account appears independent. Each generates traffic that resembles ordinary usage. When one account is banned, another replaces it.

In one instance cited by Anthropic, a single proxy network managed more than 20,000 fraudulent accounts at the same time. Distillation traffic was blended with unrelated customer requests, making it difficult to isolate suspicious patterns at the account level.

The Economics of Sampled Intelligence

Training a frontier model costs hundreds of millions of dollars in compute, engineering, and data curation.

Querying a model costs only fractions of a cent.

If sufficient capability can be reconstructed through querying, the economics shift dramatically.

Intelligence becomes:

* Expensive to originate
* Cheap to approximate

In classical software, copying binaries constitutes direct duplication. In machine learning, copying behavior produces approximation.

That distinction alters the economics of advantage.

Defensive AI

Anthropic outlined several measures it has implemented in response to large-scale distillation activity.

These include:

* Behavioral anomaly detection designed to identify coordinated or repetitive query patterns.
* Enhanced account verification and monitoring procedures.
* Cross-platform information sharing with cloud providers and industry partners.

The focus is not on preventing individual misuse, but on detecting distributed patterns across large volumes of traffic.

These efforts align with broader research into watermarking and output fingerprinting techniques for large language models. Such approaches aim to make model outputs statistically traceable or to identify systematic extraction attempts over time.

The underlying challenge is structural. When models are deployed through APIs, their behavior becomes observable. Defending against distillation requires monitoring not only access credentials, but usage patterns and statistical regularities across accounts.

This shifts part of AI security from perimeter control to behavioral analysis.

Export Controls in the Age of Query Replication

The United States has imposed export controls on advanced AI chips and high-performance computing hardware. The logic behind these policies is straightforward: access to leading-edge compute enables the training of frontier models. Restrict compute, and you constrain capability.

This framework assumes that capability is primarily a function of hardware access.

Distillation complicates that assumption.

If a laboratory cannot train a frontier model from scratch because of hardware restrictions, but can approximate aspects of it by sampling a deployed system, then capability can flow through interaction rather than through silicon.

Export controls limit chips. They do not limit API outputs.

This does not render hardware controls irrelevant. Training a frontier system still requires massive compute investment. But distillation introduces an alternative pathway for capability acquisition, one that operates through distributed access and statistical reconstruction rather than direct training.

The policy question becomes more precise. Are controls aimed at infrastructure, at model weights, or at behavior? And if behavior is globally accessible through commercial APIs, what does effective containment mean in practice?

Distillation does not eliminate asymmetries in compute. It narrows them.

That narrowing is where the strategic tension lies.

What This Means for Control and Containment

Distillation exposes a structural limit in how control over AI systems is currently conceived.

Much of today’s policy framework assumes that capability can be contained by controlling hardware, model weights, or corporate access. Export controls restrict advanced chips. Companies restrict direct access to frontier models. Contracts govern usage.

Distillation operates in a different domain. It does not require access to weights. It does not require possession of training pipelines. It requires sustained interaction.

When intelligence is deployed through APIs, its behavior becomes observable. When behavior can be observed at scale, it can be approximated. That approximation may not reproduce the original system in full, but it may be sufficient for many operational purposes.

This creates tension between deployment and containment. Open access accelerates adoption and revenue. It also increases exposure.

Three responses are emerging:

One response is tighter control. Companies could restrict access more aggressively, strengthen identity verification, and monitor usage patterns more closely. In this model, frontier systems become more centralized and

Tesla reports fourth-quarter earnings tomorrow after the close, and the stakes go well beyond the numbers. Elon Musk will enter the call balancing five major narratives, each one shaping how investors frame Tesla’s future.

First, there’s Optimus. Tesla’s humanoid robot is showing technical progress but remains pre-commercial, with no announced pricing, contracts, or delivery dates. Then there’s autonomy. Tesla is piloting robotaxis in both Austin and San Francisco, though the programs differ in scope and both face legal and regulatory scrutiny.

Margins remain under pressure. Price cuts, shifting demand, and growing competition have narrowed Tesla’s automotive profitability. At the same time, Musk continues to push Tesla’s identity as a software and AI platform, raising long-term expectations without yet delivering short-term returns.

Another layer is reputation. Musk’s separate AI startup, xAI, is now under investigation by European regulators for its chatbot Grok. While officially unrelated to Tesla, xAI shares talent, compute, and narrative space with the company, which could complicate Tesla’s AI story.

Tomorrow’s call will need to do more than recap the quarter. Investors will be listening for forward signals on monetization (production of Optimus units, robotaxi timelines, FSD adoption) and whether Tesla can shift from automaker to AI-native platform without losing its lead.

What: Tesla Q4 2025 Financial Results and Q&A Webcast
When: Wednesday, January 28, 2026
Time: 4:30 p.m. Central Time / 5:30 p.m. Eastern Time
Q4 2025 Update: https://ir.tesla.com
Webcast: https://ir.tesla.com

Additional Resources for Curious Minds

* Q4 2025 earnings consensus and margin expectations
  https://ir.tesla.com/press-release/earnings-consensus-fourth-quarter-2025
* EU investigations into Grok and sexual deepfakes on X
  BBC: https://www.bbc.com/news/articles/clye99wg0y8o
  Reuters: https://www.reuters.com/world/europe/eu-opens-investigation-into-x-over-groks-sexualised-imagery-lawmaker-says-2026-01-26
  Al Jazeera: https://www.aljazeera.com/news/2026/1/26/eu-launches-probe-into-grok-ai-feature-creating-deepfakes-of-women-minors
  JURIST: https://www.jurist.org/news/2026/01/eu-launches-probe-into-sexual-deepfakes-on-x
* Tesla 2025 production, deliveries, and trend analysis
  Tesla deliveries & deployments release: https://ir.tesla.com/press-release/tesla-fourth-quarter-2025-production-deliveries-deployments
  Q4 2025 deliveries analysis (LinkedIn deep dive): https://www.linkedin.com/pulse/tesla-inc-analysis-production-deliveries-q4-2025-010226-amjad-isrzf
* BYD vs Tesla in global EV sales
  Reuters: “Tesla loses EV crown to China’s BYD”
  https://www.reuters.com/business/autos-transportation/teslas-quarterly-deliveries-fall-more-than-expected-lower-ev-demand-2026-0… (main story: “Tesla loses EV crown to China’s BYD…”)
  Forbes overview with 2025 BEV totals: https://www.forbes.com/sites/peterlyon/2026/01/26/can-tesla-survive-chinas-onslaught-and-musks-rhetoric
* Previews of key questions for the Q4 2025 call
  Teslarati “Top 5 questions investors are asking”
  https://www.teslarati.com/tesla-tsla-top-5-questions-investors-q4-2025

#TeslaEarnings #AIandAutonomy

This is a public episode. If you would like to discuss this with other subscribers or get access to bonus episodes, visit dianawolftorres.substack.com

I caught Pras Velagapudi in the hallway after a breakout session at the Humanoid Summit. I promised it would take less than two minutes. We finished in one.

Velagapudi is the CTO of Agility Robotics, the company behind Digit, one of the few humanoid robots already working in real-world commercial environments. I asked him two straightforward questions: What’s new, and what can we expect over the next year?

Quite a lot, it turns out.

Digit has now been deployed in additional locations, including a newly signed contract with Mercado Libre. That matters because it reinforces something important. Digit is not a demo robot. It is operating in logistics and manufacturing environments today.

Those deployments are running on Agility’s current V4 platform. But the bigger story is what comes next.

Agility is actively working on its next-generation system, the V5 platform, planned for release next year. According to Velagapudi, V5 is designed to support more use cases and, crucially, to incorporate what he described as onboard cooperative safety.

This is where the deep learning story really begins.

Cooperative safety means Digit can operate in the same physical spaces as people while performing different tasks, without requiring strict separation or constant handoffs between humans and robots. That capability is not just a hardware problem. It is fundamentally an AI problem.

For a robot to share space with people safely, it needs to perceive human motion, understand intent at a basic level, adapt its behavior in real time, and recover gracefully when the environment changes. That requires a stack of learned behaviors layered on top of classical control systems.

During his presentation at RoboBusiness, Velagapudi addressed the same concern, discussing what needs to be done before humanoids like Digit can work safely in the same space as people. Check out clips from the panel discussion here.

Velagapudi also pointed to what Agility expects over the next twelve months. We should start seeing sneak peeks of the V5 platform and new capabilities emerging from Digit’s AI-powered skill stack.

That phrase is doing a lot of work.

A skill stack implies modular, learned behaviors that can be composed, updated, and extended. It suggests a shift away from hard-coded task execution toward systems that can generalize across tasks and environments. In other words, this is not just about better walking or lifting. It is about embodied intelligence.

This short hallway conversation reinforced something I have been hearing repeatedly across the robotics industry. The next wave of progress is not coming from flashier hardware alone. It is coming from tighter integration between perception, learning, and control.

Digit’s evolution from V4 to V5 is a good example of that shift in motion. I first saw Digit at NVIDIA GTC back in March, where it was operating on the V4 platform. It’s exciting to now see how that foundation is evolving. This clip from March shows what I like to call Digit having fun on its Target run.

A friend said to me recently:“You don’t realize how much you know about this AI stuff. You should start breaking it down for people.”Fair point. Yesterday, I began by defining the term "deep learning."Put simply, we said: it’s how machines learn from data, layer by layer—like a brain made of math. But today? I’m going with a much less obvious choice.Why?Because I want to make a point:Even the most intimidating terms in AI can be made understandable—if you slow down, break them apart, and explain them like you would to your favorite aunt.Today’s term?Stochastic. Gradient. Descent.It sounds like a lion. But we’re going to break it down into kitten-sized steps. (I blame the cat metaphors on all the Sora2 cat-playing-fiddle videos flooding my feed.)But no more catting around.Let’s get into it.🐾 The Hiker Kitten on the HillImagine a blindfolded kitten trying to tiptoe down a hill labeled “Error.” At the bottom? A little wooden sign that reads Low Loss.The kitten doesn’t have a map. It doesn’t see the full terrain. But it takes small steps, feels which way the ground is sloping, and tries to move downward.That’s gradient descent—the heart of how AI models learn. Step by step, they adjust in the direction that reduces error.It’s how I walk down a hill, too. Don’t judge.🎴 The Flashcard LearnerNow let’s add the “stochastic” part.“Stochastic” just means random.Instead of studying every flashcard in the deck before making a move, the kitten picks a few at random. It learns from small samples—just a mini-batch—not the entire dataset.Wrong answers get tossed in the bin marked Loss Function.Right ones? Reinforced.That’s how the model learns. Not by memorizing everything, but by trying, adjusting, and trying again.That coffee cup way too close to the edge? Totally bothering my OCD.🪜 The Escalator of ErrorsNow picture our kitten on an escalator made of training epochs—steps that represent each pass through the data.But here’s the twist: some steps are missing. Some are uneven. 
The kitten has to guess where to land next.That randomness doesn’t confuse the model. It helps.It prevents the model from getting stuck in local patterns and nudges it toward broader understanding.I felt this once on the Great Wall of China.The steps were wildly inconsistent—a deliberate defensive design to slow down invaders. Varying heights and unexpected changes forced enemies to look down, making them off-balance and vulnerable.And that’s exactly what happened to me.Except I had more time than someone expecting an ambush. After navigating these steps for a while, something shifted. The irregularity forced me to stay alert, to feel each step instead of zoning out. I couldn’t fall into autopilot.That’s exactly what randomness does for SGD.It prevents the model from getting stuck in comfortable patterns. The unevenness—the stochasticity—nudges it toward broader understanding instead of memorizing one predictable path.The randomness doesn’t confuse the model.It sharpens it.🧁 The Mini-Batch DinerAnd finally—let’s eat.The kitten now works at a 1950s-style diner, serving bite-sized data meals to a neural net robot. Each plate is a mini-batch: a little bit of input, a little bit of feedback.With each bite, the robot learns something new. And slowly—predictably—it gets better at recognizing patterns.No all-you-can-eat buffet here. Just small plates, served with precision. And eventually? The robot is trained.It also appears the robot has mastered the Force and can levitate plates of peas.Cool. A whole different kind of training, but cool.If stochastic gradient descent feels familiar, that’s because it is.It learns the way a kitten learns to hunt.Not by understanding prey behavior or studying trajectories, but through pounce and miss.The kitten crouches. It leaps. It misses.Each attempt sharpens its timing—not by grasping the full picture, but by feeling what almost worked.We learn the same way.Structure. Feedback. Repeat.A small guess. A course correction. 
Then another.It’s the process of trying, failing, and adjusting. It’s how we learn anything that matters.SGD follows this same pattern. It makes a move. The loss responds. It adjusts.It doesn’t need to see the entire landscape to know which direction improves things.It just needs direction—and the patience to take the next step based on what it just learned.Neural networks aren’t human. They don’t think or feel.But the process of training them—of slowly shaping better performance through repeated feedback—echoes something deeply human.And that makes them much easier to understand.Key Terms* Gradient: The direction that reduces error the fastest.* Descent: Moving in that direction, step by step.* Stochastic: Involving randomness or partial samples.* Mini-batch: A small slice of data used for one learning update.* Epoch: One full pass through the training data.🐾 FAQs What is stochastic gradient descent, really?It’s how AI learns—by guessing, checking, and adjusting. Over and over. The “stochastic” part means it learns from small samples at a time, not everything at once. Like a kitten learning with flashcards instead of a textbook.Why does it use randomness?Speed. If the kitten had to review everything before each decision, it’d never get anywhere. Small, random samples help it learn faster and avoid getting stuck.Why is it called “descent”?Because it’s trying to go downhill—toward fewer mistakes. Like a kitten walking down to a bowl of food, it’s heading to the bottom where errors are lowest.Do I need to know the math?Nope. You don’t need calculus to understand a kitten learning to walk. This is about steady improvement through small steps—not formulas.Is this how all AI models learn?Most do! There are variations, but this process powers most modern systems—language models, image recognition, you name it.Why choose stochastic gradient descent on day two? 
Because it sounds like one of the most intimidating terms in deep learning—and I wanted to prove something early: Even the scariest-sounding concepts are surprisingly simple once you break them down.

#deeplearning #stochasticgradientdescent #machinelearning #neuralnetworks #aiexplained #writingaboutai #kittenlevelai #curiousmind #funwithai #deeplearningwiththewolf

About the Video

The video was generated using Google’s NotebookLM. In place of a prompt, I wrote a script so that the video aligns with the article. Send me a PM if you’re interested in learning more about the process. I’m happy to share.

Enjoyed the article? Please consider sharing with others. It helps me grow the channel. This is a public episode. If you would like to discuss this with other subscribers or get access to bonus episodes, visit dianawolftorres.substack.com
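For readers who want to peek under the hood of the stochastic gradient descent episode, here is a minimal Python sketch of mini-batch SGD. It does not come from the episode or from any library. It fits a straight line to a made-up toy dataset, and the target line y = 2x + 1, the learning rate, the batch size, and the epoch count are all illustrative assumptions. Each piece maps to one of the episode's key terms. The shuffle is the stochastic part, each slice of data is a mini-batch, each outer loop is an epoch, and each update steps against the gradient, which is the descent.

```python
import random


def make_data(n=200, seed=0):
    """Toy dataset (an illustrative assumption): points near the line y = 2x + 1."""
    rng = random.Random(seed)
    xs = [rng.uniform(-1.0, 1.0) for _ in range(n)]
    ys = [2.0 * x + 1.0 + rng.gauss(0.0, 0.05) for x in xs]  # small noise
    return list(zip(xs, ys))


def sgd_fit(data, lr=0.1, batch_size=16, epochs=50, seed=0):
    """Fit y ≈ w*x + b with mini-batch stochastic gradient descent on mean squared error."""
    rng = random.Random(seed)
    w, b = 0.0, 0.0                    # start from a blind guess
    data = list(data)                  # copy so shuffling never touches the caller's list
    for _ in range(epochs):            # one epoch = one full pass over the data
        rng.shuffle(data)              # the "stochastic" part: a new random order each epoch
        for start in range(0, len(data), batch_size):
            batch = data[start:start + batch_size]  # one small plate from the diner
            # Gradient of the mean squared error, computed on this batch only.
            grad_w = sum(2.0 * (w * x + b - y) * x for x, y in batch) / len(batch)
            grad_b = sum(2.0 * (w * x + b - y) for x, y in batch) / len(batch)
            # Descent: step against the gradient, i.e. downhill.
            w -= lr * grad_w
            b -= lr * grad_b
    return w, b


if __name__ == "__main__":
    w, b = sgd_fit(make_data())
    print(f"learned w={w:.3f}, b={b:.3f}")
```

Running it should print values close to w=2 and b=1. Many real training setups add refinements such as momentum or adaptive step sizes, but the core loop is the same: guess, check the loss on a small batch, and adjust.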

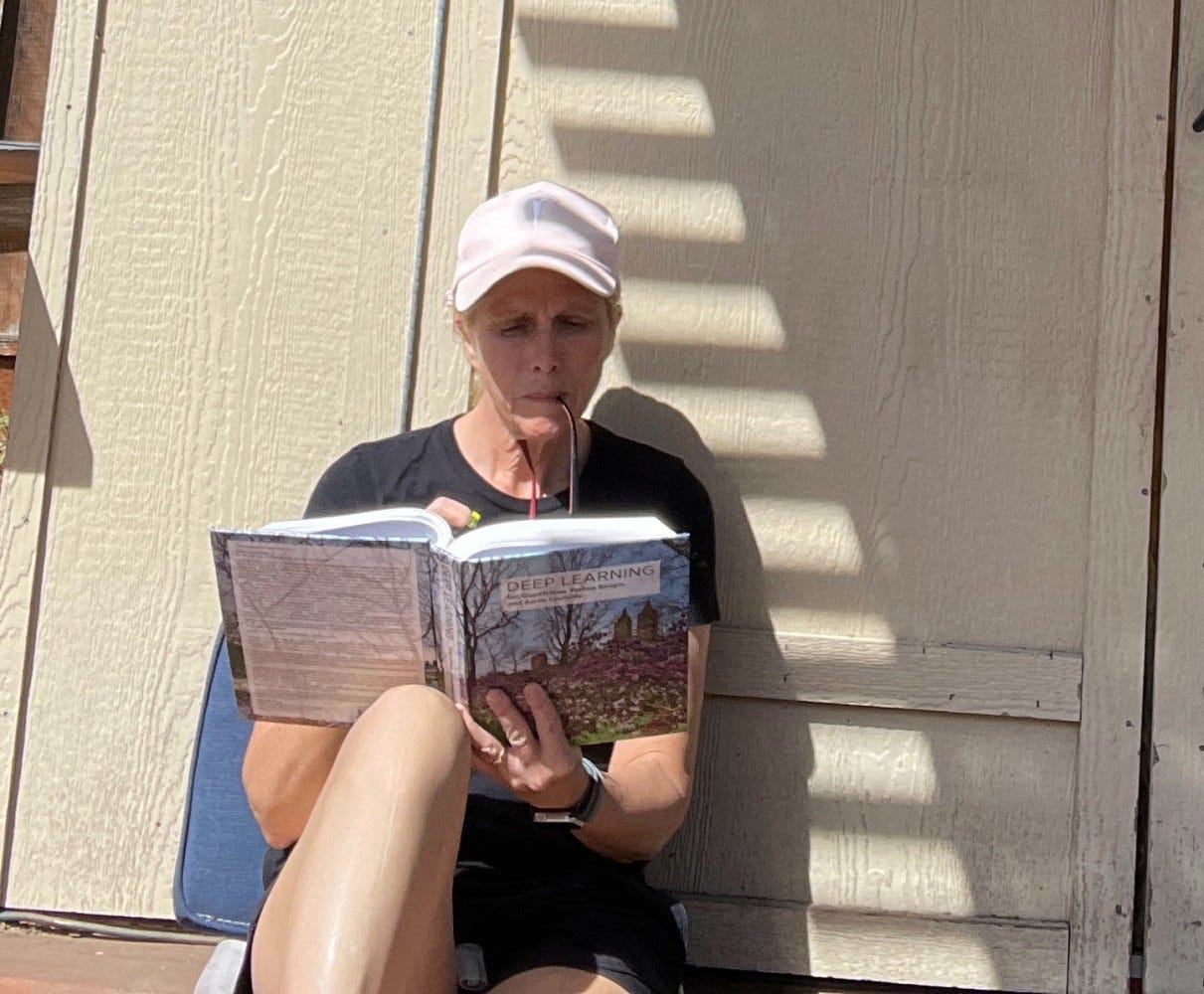

What Is Deep Learning?

Let’s go back to where it all started: not with a GPU or a neural net, but with a question—and a textbook.

Three years ago, in the span of two months between October and November 2022, a lot happened. Our Tesla finally arrived after months on the waitlist. ChatGPT, built on GPT-3.5, launched. And suddenly, I had one big question: How does all of this actually work?

So I did what any curious, slightly obsessive writer would do—I ordered a copy of Deep Learning by Ian Goodfellow, Yoshua Bengio, and Aaron Courville. If you’ve never cracked it open, it’s roughly the size of a toaster oven and has the same ability to make your brain overheat.

I found a used copy of the textbook so I could highlight the heck out of it. MIT now offers a free digital version. (Link in the resources below.) But I wanted to see the words and feel the pages, and by the end, my fingers seemed to be permanently stained fluorescent yellow. It was an oddly satisfying deep dive into machine learning, and I learned so much. The brain of my car began to make sense to me, and “chatbots” took on a whole new dimension.

The math? Let’s just say it made me feel like a guest star on The Big Bang Theory, the kind who nods along and prays no one asks follow-up questions.

But the words—ah, the words! Overfitting. Underfitting. Gradient descent. Stochastic gradient descent. Stochastic parrot. It was like discovering a new dialect of techie elvish, and I was hooked.

Language, Not Equations

I’ve always loved languages. English. German. French. Spanish. Aurebesh. Klingon. ASCII. BASIC. Pascal. Scratch. Python. And I realized I didn’t need to decode every formula to appreciate what deep learning meant—not just in code, but in culture, in conversation, in how we think about intelligence itself.

So I started sharing a single word a day. That’s it. One concept, one definition, maybe a story. And to my surprise, people started reading. Some were ML PhDs who enjoyed a break from equations.
Some were curious newcomers trying to understand what all the hype was about. Some just liked the name.

Eventually, I expanded. AI was suddenly in the news every five minutes. OpenAI. Anthropic. DeepMind. Elon. Apple. The test kitchens of Silicon Valley were cooking up something new every week, and I wanted to taste it all.

But this week, after a few unsubscribes on the LinkedIn version of this blog (I dared to mention AI and climate policy in my post last Monday), I realized something: sometimes it’s good to keep things simple. So I’m going back to my roots: one word a day, one concept at a time. Yes, it means I’m switching back to a daily publication. I’ve missed the challenge and the strict discipline of getting a newsletter out every day without fail.

So, let’s begin, shall we? And, of course, we need to start with the obvious.

Deep Learning: The Definition

Deep learning is a subfield of machine learning that uses neural networks with many layers—hence the “deep.” These networks are (loosely) inspired by the human brain and are particularly good at recognizing patterns in data like images, text, and speech.

In simpler terms?

If regular machine learning is like teaching a kid to recognize a cat by pointing out fur, whiskers, and tails, deep learning is like showing the kid a million pictures of cats and letting them figure it out themselves.

It’s why your phone knows your voice, why ChatGPT can write poetry, and why TikTok knows what you want before you do. I’ve never understood TikTok, and it makes my brain hurt, but that’s deep learning at work.

Why It Matters

Deep learning powers:

* Image recognition (think: medical imaging, self-driving cars)
* Speech recognition (Siri, Alexa, Google Assistant)
* Natural language processing (translation, summarization, ChatGPT)
* Recommendation systems (Netflix, Spotify, YouTube)

And it’s just getting started.
Deep learning is the engine driving the AI boom.

Tomorrow’s Word

Tomorrow, we will dig into another of the words that now lives in a permanent corner of my brain: stochastic gradient descent. (Spoiler: it’s not nearly as scary as it sounds. And yes, “stochastic” just means “random.”) See? Digging into deep learning is actually rather fun. See you tomorrow.

FAQs

Is deep learning the same as AI? Not exactly. Deep learning is a technique within AI—specifically, within machine learning.

Do you need to understand math to understand deep learning? If you want to be a machine learning engineer, yes. If you just want to understand the concepts, you can certainly do so without doing the calculations. Come along on this journey, and I promise there will be no math involved.

What are neural networks? They’re algorithms modeled (loosely) on the brain’s architecture, made of layers of “neurons” that pass information.

Why is it called ‘deep’? Because of the many layers in the network—each one adds depth.

Can deep learning be dangerous? Like any powerful tool, yes. But understanding it is the first step toward using it responsibly.

Source:
Goodfellow, Ian, Yoshua Bengio, and Aaron Courville. Deep Learning. Cambridge, MA: MIT Press, 2016. http://www.deeplearningbook.org

Additional Resources for Inquisitive Minds

* Explain deep learning to me in a way that won’t hurt my brain.
* Give me that Big Bang Theory Math!
* Chapter 1 of Deep Learning video lecture.
* Chapter 9 Video Lecture. Convolutional Networks.
* Chapter 10. Sequence Modeling.

The Course That Started It All. Start Here!

Want to Learn More? Start with the course that started it all: Andrew Ng’s Deep Learning course on Coursera (now updated and called the “Deep Learning Specialization”).

This is such a great clip. In it, from 14 years ago, Andrew Ng announces he will be offering his machine learning class for free.

Note: Reader @David W Baldwin shared a great story about taking one of the earliest classes with Dr. Ng.
See his post below for details on what it was like to be in that amazing course! Maybe you will be inspired to take this iconic course yourself.

Editor’s Note:
If you enjoyed this post, please consider sharing it with a friend. I’m committed to keeping all of my blogs and podcasts free for subscribers—no paywalls, no gimmicks. Your shares help me reach more curious minds. Thanks so much for the support.

About the podcast: The podcast attached to this article is an audio overview from Google’s NotebookLM. The sources used in the “notebook” are this article and the following:

* 100 Deep Learning Terms Explained – GeeksforGeeks
* Deep Learning vs Machine Learning: Key Differences – Syracuse University’s iSchool
* Deep Learning: Back to the Roots
* Understanding Supervised Learning: A Guide for Beginners – DEV Community
* What Is Deep Learning AI? A Simple Guide With 8 Practical Examples | Bernard Marr
* What Is Deep Learning? | A Beginner’s Guide – Scribbr
* What Is Deep Learning? | IBM
* What is Backpropagation? | IBM
* What is a Neural Network? – Amazon AWS
* What are some of the most impressive Deep Learning websites you’ve encountered? – r/MachineLearning (Reddit)

#artificialintelligence #deeplearning #machinelearning #neuralnetworks #IanGoodfellow #YoshuaBengio #AaronCourville #AndrewNg #DeepLearningtheBook #DeepLearningwiththeWolf #TreeHuggersfortheWin
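The Deep Learning episode defines “deep” as stacking many layers of neurons, each passing information to the next. For the code-curious (the episode itself promises no math), here is a minimal, untrained Python sketch of that idea. The layer sizes, the random weights, and the ReLU activation are illustrative assumptions. They are not taken from the Goodfellow, Bengio, and Courville textbook or from any particular library. The sketch only shows that each layer works on the previous layer's output.

```python
import random


def relu(v):
    """Keep positive signals and zero out negative ones (a common activation choice)."""
    return [max(0.0, x) for x in v]


def dense(inputs, weights, biases):
    """One fully connected layer: each output neuron is a weighted sum of all inputs plus a bias."""
    return [sum(w * x for w, x in zip(row, inputs)) + b for row, b in zip(weights, biases)]


def make_network(layer_sizes, seed=0):
    """Random (untrained) weights for a stack of layers, e.g. sizes [4, 8, 8, 8, 2]."""
    rng = random.Random(seed)
    layers = []
    for n_in, n_out in zip(layer_sizes, layer_sizes[1:]):
        weights = [[rng.gauss(0.0, 0.5) for _ in range(n_in)] for _ in range(n_out)]
        biases = [0.0] * n_out
        layers.append((weights, biases))
    return layers


def forward(network, inputs):
    """Pass the input through every layer in turn; each layer sees the previous layer's output."""
    activations = inputs
    for i, (weights, biases) in enumerate(network):
        activations = dense(activations, weights, biases)
        if i < len(network) - 1:       # no activation on the final layer
            activations = relu(activations)
    return activations


if __name__ == "__main__":
    net = make_network([4, 8, 8, 8, 2])        # four layers of weights: "deep" in miniature
    print(len(net), "layers")                  # prints: 4 layers
    print(forward(net, [0.5, -1.0, 0.25, 2.0]))  # two untrained output scores
```

Training would tune those weights from data, for example with stochastic gradient descent. This sketch leaves them random, so its outputs carry no meaning yet.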

China is rapidly becoming the global face of clean energy leadership—a surprise to those who still associate green innovation with Western nations. In January 2025, the United States—once celebrated as a climate policy trailblazer—began its second withdrawal from the Paris Climate Accords, undoing the reentry it completed in February 2021 under President Biden. This shift coincided with China’s dramatic rise as the new global leader in clean energy and possibly AI (although that last point is more hotly debated than the scorching summer days we’re suffering everywhere now).

It’s a déjà vu moment for U.S. climate policy. Back on June 1, 2017, President Trump stood in the (now paved over) Rose Garden and declared: “In order to fulfill my solemn duty to protect the United States and its citizens, the United States will withdraw from the Paris Climate Accord…The bottom line is that the Paris Accord is very unfair at the highest level to the United States.” Trump also claimed the agreement would “undermine our economy, hamstring our workers, and effectively decapitate our coal industry,” calling it “a massive redistribution of United States’ wealth to other countries…That’s not going to happen while I’m president, I’m sorry.”

Biden’s restoration of U.S. involvement four years later came with the words: “We can no longer delay or do the bare minimum to address climate change. This is a global, existential crisis. And we will reassert a leadership role in climate action.”

In January 2025, as the Trump administration began its second term, it quickly initiated the United States’ withdrawal from the Paris Climate Accords, framing the move as vital for “America First” energy policy. As global attention now turns to the annual UN climate negotiations, this earlier decision is making headlines again—especially as world energy demands soar with the rapid expansion of AI infrastructure and data center growth.
Indeed, Trump signed the paperwork to pull the U.S. from the Accords almost immediately after taking office:

“I am immediately withdrawing the unfair one-sided climate accord rip-off. The United States will not undermine our own industries while China pollutes without consequence.”
- President Donald Trump

China’s Relentless Clean Energy and AI Surge

China is now the unchallenged global supplier of solar panels (producing about 80% of the world’s total) and produces over 70% of all electric vehicles, pushing costs for solar and other clean energy technologies lower—sometimes by 90% compared to a decade ago—and making the energy transition accessible for countries across the world. Its fast-growing battery and wind turbine sectors have turned it into the linchpin of global renewable supply chains, effectively removing price barriers for poorer economies.

But China’s revolution is fueled not just by hardware, but by software: artificial intelligence is now central to how China manages its energy grids, forecasts renewable output, and meets surging demand from both manufacturing and the rise of digital and robotic automation. Chinese energy networks use AI to balance supply and demand, optimize the clean energy mix, and manage the growing complexities of grid reliability.

Efforts extend beyond domestic borders. Since 2022, China has invested in clean technology factories in over 50 countries, stopped funding foreign coal plants, and poured billions into global renewables—shaping the trajectories of energy policy in Asia, Africa, and Latin America. New diplomatic efforts like the “Africa Solar Belt” have enabled the roll-out of solar power to nearly 50,000 households, further empowering the clean energy surge in the Global South.

While China’s solar, wind, and clean tech exports have expanded enormously, its overseas coal plant construction has changed direction radically.
By 2022, China had announced a policy to halt all funding for new coal power plants abroad, effectively ending its role as the world’s largest financier of overseas coal projects.

Leading the World in Clean Energy—And Still Building Coal Plants (Domestically)

Despite China’s global leadership in clean energy and technology exports, its energy transition at home still faces major challenges. Domestically, China remains heavily reliant on coal, commissioning 21 GW of new coal-fired power in the first half of 2025—the highest six-month total since 2016—with full-year additions expected to exceed 80 GW. This surge in new coal plants runs counter to China’s climate ambitions, even as the nation delivered a modest 1.6% decrease in carbon emissions in the first half of 2025, thanks largely to record investments in renewables and grid modernization.

While China has stopped funding new coal projects abroad to focus on its international clean energy reputation, at home coal remains a crucial—though declining—part of its power mix (down to about 50% from 75% in 2016). China’s use of AI and robotics is revolutionizing the efficiency and scalability of green infrastructure, helping renewable energy meet rising demand and slowly squeeze coal’s dominance. Nonetheless, the persistence of structural and local reliance on coal power shows that China’s energy transition is both impressive and incomplete.

Green Leadership with Serious Caveats

While China’s record-breaking investments in solar, wind, and electric vehicles have made it the world’s dominant supplier of clean technology, its overall climate record is much more complex. Even as China has installed more renewables than any other country—now accounting for nearly 40% of its total power generation in 2025—it still receives a “Highly insufficient” rating from Climate Action Tracker.
This poor rating is due to the country’s continued approval and construction of new coal-fired power plants and emissions targets that fall short of what’s required to meet the Paris Agreement’s 1.5°C goal.

China’s new climate plan aims for a decrease of just 7-10% in greenhouse gas emissions from peak levels by 2035—a conservative target that’s unlikely to drive deeper reductions beyond what current policies already deliver. The country risks missing its own carbon intensity reduction goals unless it accelerates coal phase-down and enhances industrial decarbonization.

Why the “Green Leader” Label Still Fits

Yet, in practical terms, China’s leadership in clean energy manufacturing, deployment, and diplomacy is reshaping global markets. By exporting affordable solar panels, wind turbines, and EVs, China is enabling the global transition at a scale that no other country matches. Experts argue that Beijing’s ability to lower technology costs and drive clean energy growth worldwide—even as it struggles with its own coal reliance—amounts to a new kind of climate and economic leadership. China leads in greening the world, but is not yet a model of deep, Paris-aligned climate ambition. Its progress is real, but its gaps must be acknowledged.

And that brings us back to the other global leader showing some gaps in climate action: the United States.

America’s Decisive Decade: Data, Policy, and Climate

As the U.S. steps back from climate commitments, the rapid expansion of AI and data center infrastructure is driving domestic electricity consumption sharply upward—by 2030, data centers could eat up 9–12% of America’s electricity, rivaling entire nations’ energy needs. Without parallel investment in clean energy, this boom risks deeper fossil fuel dependency and rising emissions.
Trump administration policies now target a rollback of federal support for wind, solar, and electric vehicles, undoing much of the previous decade’s progress in favor of expanded fossil fuel exploration and deregulation.

If the United States continues on this path—prioritizing fossil fuels, paring back climate funding, and doing nothing to mitigate emissions—the next ten years will see reduced climate progress, heightened energy prices, and increasing environmental damages. U.S. emission reductions will fall short of Paris targets, and climate costs—in lives, dollars, and quality of life—will steadily mount. The consequences will be felt in worsening floods, heat waves, and economic instability, while innovative clean energy leadership shifts abroad.

America’s greatest export was once innovation; will our next be indifference in the face of catastrophe?

Additional Reading for Highly Inquisitive Minds:

* Trump, Donald J. “Statement by President Trump on the Paris Climate Accord.” The White House Archives, 31 May 2017.
* “United States and the Paris Agreement.” Wikipedia, Wikimedia Foundation, 31 May 2017.
* “China Announces New Climate Target.” World Resources Institute, 24 September 2025.
* Climate Action Tracker. Country: China. Data updated 6 November 2025.
* “China’s Shift to Clean Energy is Saving the Paris Climate Accord.” Wall Street Journal, 6 November 2025.
* “China, world’s top carbon polluter, is likely to overdeliver on its climate goals. Will that be enough?” PBS, 6 November 2025.
* Carbon Brief: Clear on Climate. “Q&A: What does China’s new Paris Agreement pledge mean for climate action?” 9 November 2025. (President Xi Jinping has personally pledged to cut China’s greenhouse gas emissions to 7-10% below peak levels by 2035, while “striving to do better.”)
* “China makes landmark pledge to cut its climate emissions.” BBC.com, 24 September 2025.

#climateleadership #AIandClimate #ChinaEnergy #USClimatePolicy #parisaccords #deeplearningwiththewolf #dianawolftorres #climateemissions #cleanenergy

A quote on the “All Things AI” podcast caught my ear this morning. Regarding the Colorado AI law, Altman said, “I literally don’t know what we’re supposed to do” to comply, highlighting the challenge of state-by-state regulation. “I’m very worried about a 50 state patchwork. I think it’s a big mistake. There’s a reason why we don’t usually do that for these sorts of things.”

Brad Gerstner was speaking with Satya Nadella (Microsoft) and Sam Altman (OpenAI) when the topic of patchwork legislation between states came up.

Satya Nadella responded: “[Yeah], I think the fundamental problem of this patchwork approach is… quite frankly… between Microsoft and OpenAI… we’ll figure out a way to navigate this… we can figure this out… the problem is anyone starting a startup and I think it just goes to the exact opposite of I think what the intent here is.”

I decided this was a good time to refamiliarize myself with the Colorado law in question.

How Did Colorado Become the Test Case?

Colorado’s Artificial Intelligence Act (SB24-205) is the first comprehensive U.S. state law targeting “high-risk” AI systems. The legislation aims to prevent algorithmic discrimination across critical sectors—healthcare, employment, education, financial services, and more. It requires developers and deployers of AI to:

* Document and disclose training data lineage
* Notify consumers when AI is used in consequential decisions
* Conduct risk assessments and publish mitigation strategies
* Report incidents of discrimination to the attorney general

What counts as “high-risk”? Any system making—directly or indirectly—a major decision for a consumer: hiring, loan approvals, admissions, and so on.
Violations can be prosecuted as deceptive trade practices by the state, with no private right of action.

Enforcement begins June 30, 2026, following intense negotiation and a delayed rollout prompted by industry concern over compliance burdens.

Why Tech Leaders Are Talking About It

Altman’s blunt quote isn’t just about Colorado. It’s emblematic of a larger, growing crisis. Companies face sweeping, often ambiguous requirements. Even with expansive legal teams, AI giants like OpenAI and Microsoft admit their confusion about practical steps for compliance.

Startups and mid-sized tech firms face even worse odds: costly audits, legal ambiguity, and fear of state-level litigation. Many warn they may halt or slow new releases in Colorado, or even avoid doing business there altogether.

The kicker? This regulatory confusion makes scaling AI nationwide nearly impossible—unless Congress steps in.

“Patchwork” Laws: Colorado Is Only the Beginning

The real tech headache is that Colorado is far from alone. The U.S. “patchwork” of state laws on AI is expanding fast—with each state defining risk, discrimination, and audit standards differently.

Standout Examples

* California SB-53 (2025): Sweeping transparency, safety, and whistleblower rules for “frontier” AI systems worth over $100M in training costs. Developers must publish risk frameworks and report critical incidents. Fines for noncompliance reach $1 million per violation.
* Illinois HB 3773 (2024): Bans discriminatory AI in employment; employers must provide notice if AI is used in job interviews and decisions. Covers generative AI and sets stringent documentation standards.
* New York’s RAISE Act (2025): Requires major AI companies to create safety protocols against catastrophic risks (including weapons and cyberattacks). Mandates public disclosures and third-party reviews; penalties reach $10–30 million per incident.
* Other States: Connecticut, Kentucky, Maryland, Montana, Texas, Nebraska, and dozens more are now introducing risk management, transparency, and discrimination laws. Each has its own definitions, obligations, and liability provisions.

With 210 active bills in 42 states and 20 new AI laws passed just in 2025, companies face a minefield of conflicting requirements, definitions, and deadlines.

The Real Risk: Fragmentation Slows Innovation

Analysts and executives warn that the U.S. is at risk of replicating, and magnifying, the regulatory confusion seen in privacy law—with a fractured market of 50 regimes. As enforcement looms, AI companies face heavier compliance costs, legal ambiguity, and slower innovation. Some may even divert investments to regions with clearer, national rules or halt product rollouts in patchwork states.

Will Congress Ride to the Rescue?

Most observers agree that nationwide, uniform AI regulation is urgently needed. Without it, Colorado and its peers will continue to shape the U.S. tech landscape—potentially at the expense of both consumer protection and industry progress.

Watch: “All things AI w @altcap @sama & @satyanadella. A Halloween Special. 🎃🔥BG2 w/ Brad Gerstner”

Sources:

* Governing Magazine: Inside the Controversy Over Colorado’s AI Law
* Colorado General Assembly: SB24-205 Bill Text
* Skadden: Colorado’s Landmark AI Act
* Frost Brown Todd: Decoding Colorado’s AI Act
* California Senate Bill 53
* A&O Shearman: California Adopts Landmark AI Law
* Illinois Enacts AI Requirements
* New York’s RAISE Act
* State Approaches to AI Regulation Are a Patchwork
* AI Journal: Patchwork AI Laws Leave Gaps

#AI #ArtificialIntelligence #ColoradoAIlaw #TechRegulation #PatchworkAIlaws #LegalCompliance #AIlaws #AIstartups #TechPolicy #FutureofAI #Innovation #RegulatoryRisk #StatebyStateAI #AIconsequences #Altman #OpenAI #Microsoft #AInews #AIcompliance #AIlegislation #Newsletter #TechIndustry #bradgerstner

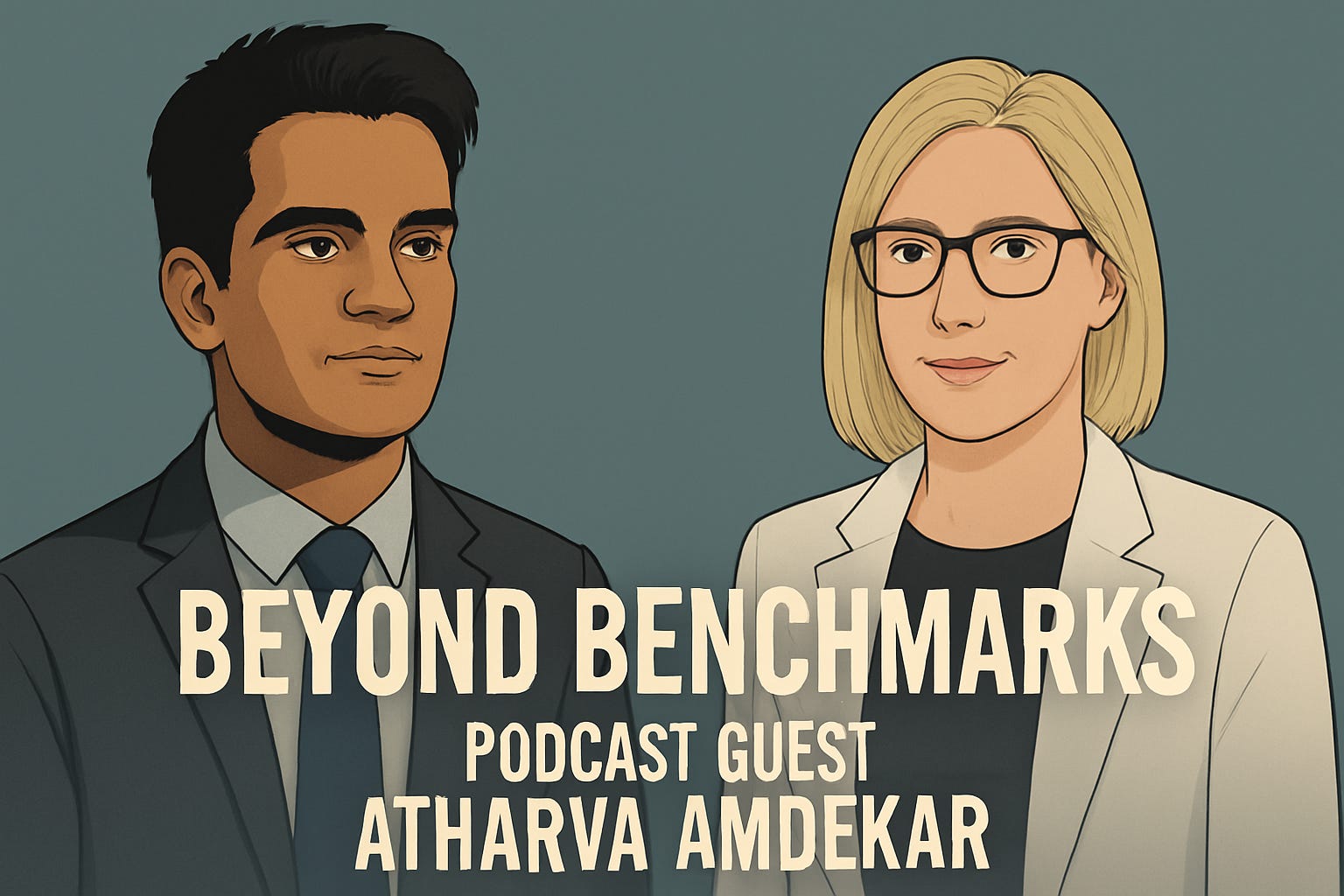

Some people enter AI to build faster systems. Atharva Amdekar came to understand them. From trading floors to research labs, his career reflects a deeper inquiry—not just into how AI performs, but into how it reasons, aligns, and sometimes misaligns with us.

This conversation reveals not just how AI works, but how it should think.

From IIT to Stanford: The Spark of Curiosity

The story begins not in a lab, but in a dorm room in India. It was the summer after his freshman year at IIT when Atharva stumbled onto Andrew Ng’s famed machine learning course on Coursera. “There was this sense of magic,” he recalls. “The moment a neural network recognized a handwritten digit—it was like watching a machine learn to see.”

That sense of wonder carried him to Stanford, where curiosity matured into a rigorous exploration of AI’s deepest questions. And it was there that he learned to ask not just what machines can do, but why they do it.

Lessons from the Trading Floor: When Models Meet the Market

Before Stanford, Atharva worked in quantitative finance—a world of volatility, imperfect data, and razor-thin margins. It was here he learned a lesson that many machine learning practitioners skip: a model that looks good on paper may crumble in the real world.

“You learn to distrust perfection,” he says. “In trading, even small errors can be catastrophic.”

That early exposure to stress-testing models became a philosophical anchor in his work. Today, it’s less about building the “best” model, and more about building one that holds up under pressure—a skill that proves vital at Amazon, where AI touches millions of lives.

From PhD to Product: The Three Acts of a Tech Career

Atharva’s professional journey reads like a microcosm of AI itself: a search for balance between depth, speed, and scale.

In academia, he learned to slow down and ask foundational questions. “You’re encouraged to explore, even if there’s no immediate outcome,” he says. At startups, the tempo changed.
“It was about moving fast, wearing ten hats, and learning to live with imperfection.” In Big Tech, the lesson was scale—and responsibility. “It’s not just about technical correctness anymore. It’s about fairness, reliability, and second-order effects.”

Each environment left an imprint. Together, they’ve shaped a problem-solver who is both rigorous and agile—an unusual, and powerful, combination.

MOCA: Where Morality Meets Causality

One of Atharva’s most thought-provoking initiatives is MOCA—short for Moral and Causal Alignment. This project asks a question that goes beyond raw performance: Are AI models thinking in ways that mirror human moral and causal reasoning?

“In cognitive science, we’ve spent decades studying how people make moral and causal judgments,” Atharva explains. “But AI evaluation is still shallow. We know what the model predicts, but not why.”

MOCA addresses this by drawing from more than two dozen cognitive science studies, creating a benchmark of real-world moral dilemmas and cause-effect scenarios. And what it reveals is both surprising and important.

“Scale alone doesn’t bring moral alignment,” he notes. “Sometimes, larger models deviate more from human intuition, especially in nuanced moral cases.”

The Ethical Distance Problem

So, how close are we to building AI that reasons like us?

“Think of it like a race,” Atharva says. “Factual knowledge and ethical reasoning are running on two completely different tracks. And their finish lines aren’t even in the same stadium.”

Factual tasks—like summarization or question answering—have seen explosive progress. But ethical reasoning remains a chasm. One telling example: LLMs often struggle with forgiveness in scenarios where harm is inevitable—something humans are intuitively better at.

And the challenge isn’t just technical. “We haven’t even defined the target. Whose ethics should AI reflect?”

Building the Right Kind of Mind

What Atharva ultimately argues for is a shift in mindset: from obsessing over benchmark performance to understanding what kind of cognitive tendencies we’re instilling in our models.

“Are we optimizing for intellectual humility? For moral courage?” he asks. “When we train for safety, do we accidentally train for passivity?”

These aren’t just engineering problems—they’re ethical ones. And the future of AI may depend on how well we confront them.

Staying Curious at 100 Miles per Hour

Working in high-stakes environments—whether debugging a model at 2 a.m. or facing a fast bowler in cricket—requires composure. Atharva sees strong parallels between the two. “Discipline over panic,” he laughs. “In both cases, the worst thing you can do is lose your head.”

To stay sharp, he judges hackathons, peer-reviews papers, and treats each day-job problem as a real-world puzzle. “It’s how I keep things exciting—no matter how fast work moves.”

Speculative Future: AI Meets Cricket

Yes, even cricket. Atharva envisions an AI coach that analyzes player movements and suggests real-time strategies. Imagine dynamic field placements or predictive insights into a batsman’s weakness—all powered by machine learning.

“It’s about moving beyond stats,” he says. “AI could bring a layer of strategy that makes the game even more exhilarating.”

Models, Minds, and Moral Reasoning

Atharva Amdekar’s work offers more than technical achievement—it’s a call for moral clarity in a field racing toward capability. In a world building faster machines, he reminds us to ask: What kind of minds are we shaping?

Because the future of artificial intelligence won’t just be measured in power. It will be measured in wisdom. And wisdom, as he shows us, is not an afterthought. It’s the foundation.

Vocabulary Key
• Moral permissibility: Whether a given action is morally acceptable.
• Causal alignment: Whether a model’s cause-and-effect reasoning matches human judgments.

Editor’s Note: Interviewing the people in the AI and robotics industry is my absolute favorite part of being an influencer. Preparing for the interviews takes time and deep digging into what they do. I love research, so this is always a fun challenge. During the interviews, I learn so much and think about their words for weeks. A technical note: the audio cuts out slightly in the last 30 seconds. As far as technical glitches go, the timing was very good.

#AtharvaAmdekar #BeyondBenchmarks #AIResearch #AIpodcast #TechPodcast #DeepLearningWithTheWolf #AIEthics #MoralMachines #AIandSociety #ResponsibleAI #MachineIntuition

🎧 This audio was taken from a video interview with Shubham Sharma, founder of SunitechAI. We explore his new model — the Geometric Mixture Classifier (GMC) — which blends explainability and performance in AI.

🎥 To watch the full video or read the article, look for #deeplearningwiththewolf on either Substack or LinkedIn.

“We think that AI represents a rare, once-a-decade opportunity to rethink what a browser can be.” — Sam Altman, CEO, OpenAI

When OpenAI unveiled ChatGPT Atlas this morning, the message wasn’t subtle: the way we browse the web is broken.

For twenty years, we’ve lived inside a tab maze—searching, copying, pasting, toggling, repeating. Atlas is OpenAI’s answer: a browser with ChatGPT built into every corner. No plug-ins. No separate app. ChatGPT is now your browser.

A Browser That Knows What You’re Doing

The premise behind Atlas is strikingly simple: you should never have to leave the page you’re on to get help. Highlight a paragraph, and a glowing cursor appears. You can rewrite, summarize, translate, or clarify it—right where it lives.

OpenAI calls this Cursor Chat, and once you try it, the traditional copy-paste workflow feels instantly dated. Product lead Adam Fry summed it up neatly: “No more copy-pasting. It’ll just be right there for you.”

Writers refine drafts inline. Students translate study notes instantly. Developers debug without leaving the code view. Each interaction quietly dissolves one little barrier between thinking and doing.

The Browser That Clicks Back

Atlas’ most ambitious idea, though, is something OpenAI calls Agent Mode—a task-running assistant built directly into the browsing experience. Ask it to book a table, fill out a form, compare research papers, even summarize your open tabs, and it will execute—right in your browser, using your context and credentials (if you allow it).

It’s like ChatGPT with hands. Or, more provocatively, like a mini-intern living in your browser window. Research lead Will Ellsworth said they wanted the browser to “feel like it’s coming alive.” The demos hint that they succeeded.

Privacy, Power, and Panic

Not everyone watching the launch shared the excitement.
Live chat reactions during the OpenAI stream showed nervous laughter mixed with genuine concern: “So it reads my tabs?” one user wrote.

OpenAI insists privacy was built in from the ground up. Browser memories are off by default, stored locally, and can be deleted with a single click. The agent can’t access your files or run code. You can even browse in “logged-out mode,” cutting the AI off entirely.

Still, the tension is new: we are now being asked to trust the same company whose model reads our writing to also handle every click and scroll of our digital lives.

The Emotional Core of Atlas

For many, myself included, the appeal of Atlas is deeply personal. When OpenAI engineer Ryan O’Rourke described flattening the “copy-paste loop” into something seamless, he was describing my day-to-day life as a writer. Like millions of others, I constantly shuttle text between ChatGPT and the web. The idea that this back-and-forth could simply disappear, that I could revise a paragraph mid-draft without switching contexts, feels revolutionary.

But revolutions have costs. Once we let AI live inside our most-used tool, every moment online becomes a data point in a shared conversation with a model that learns from us. Atlas promises “more capability, more control,” but control, as ever, depends on who defines it.

A Browser That Feels Like a Co-Worker

In practice, Atlas feels less like Chrome or Safari and more like co-browsing with an attentive colleague. It remembers what you were researching, nudges you toward context you’ve already touched, and lets you command the web through natural language: “Show me that recipe I read yesterday.” “Summarize my open articles.” “Book restaurants near this page.”

Altman calls this the birth of a “super-assistant”; critics call it the next front in AI overreach. Both can be true, because the more helpful Atlas becomes, the more we’ll rely on it, and reliance has always been the truest path to trust...
or dependence.

The Bottom Line

For now, Atlas is here, polished but unfinished. It’s free on macOS for all ChatGPT users, and Plus and Pro members get the first shot at Agent Mode. Windows and mobile versions are on the way.

It feels like the start of something genuinely new: an end to the back-and-forth between browser and chatbot. If all goes as promised, it may also end the copy-paste routine that defines so much of digital work. OpenAI’s integration blurs old lines, and the experience lands somewhere between delight and discomfort, depending on where you draw your limits.

Bonus Section: The Internet Reacts

How are people feeling about the launch of ChatGPT Atlas? Let’s start with my own reaction as a writer: this browser could be a game-changer for productivity, especially if you live inside document drafts and emails. But there’s an undeniable unease, like that moment you realize your GPS might be sending you down a one-way street. Are we heading toward a future where a single company controls not just your chat, but your entire online workflow? (Because that never ends well in sci-fi movies.)

If you have a few minutes, check out the full OpenAI livestream and read the comments; they offer a window into the internet’s gut reaction. (Also, just for fun, count how many times Sam seems to be caught off guard during the video.)

🔴 How People Are Feeling

“ChatGPT is the biggest data collection system in history. It’s insane how they’ve infiltrated every aspect of life.” - u/Melondeen (OpenAI livestream chat)

“In other words… if you use this browser, literally everything will be revealed to this AI. This is scary.” - u/damianit (OpenAI livestream chat)

Here are some of the top comments from the r/ChatGPT subreddit (not affiliated with OpenAI):

“Please just cure cancer and do my dishes.
Thanks.” - u/newaccount47

“If you thought Google was Evil, just wait and see what OpenAI can do.” - u/DinoZambie

“I dont think anyone gives a sh** about a web browser that 10000% harvests everything you ever visit.” - u/blompo

“So instead of wasting only some energy while browsing, you multiply that now by wasting some tokens and a multitude of energy, constantly. What a time to be alive.” - u/duty_of_brilliancy

“HERE COME THE ADDS!!! MONEY, MONEY, MONEY!!!” - u/CaskofAleForever

I believe he meant “ads.” (No more ale for you.)

Deep Thoughts: What Could This All Mean?

Change always comes with friction. Most users have grown used to a clear separation between browser and chatbot, and Atlas blurs that line. For those who like their foods (and their digital worlds) kept apart, this is unsettling.

Yet the small handful of people who have tried Atlas report positive experiences. As adoption widens, the tone may shift from skepticism to dependence. That dependence can be its own kind of anxiety: once you get used to a tool, going without it starts to feel impossible (like leaving home without your iPhone).

Will Atlas fully change our online habits? Maybe. I stuck to my familiar workflow while writing this article and haven’t tried Atlas yet. Perhaps that alone says something about human psychology: we tend to stick with what we know.

A Mini Lesson in Greek Mythology

While OpenAI hasn’t explained its choice, the name “Atlas” resonates on several levels. In myth, Atlas is condemned to hold up the sky, a figure of endurance bearing the cosmic weight for eternity. He is both a warning about and a testament to the cost of vast ambition. But Atlas also gave his name to books of maps and to the Atlantic Ocean, symbols of guidance and exploration through unknown worlds.
By invoking Atlas, OpenAI’s browser quietly invites us to ask: are we being offered a tool to chart new territory (an aid for understanding and navigation), or another burden, a modern weight on our digital shoulders as we venture deeper into the online cosmos? The meaning remains open; perhaps it is both.

For another look at Atlas, check out this video from my fellow creator, Matt Wolfe of #FutureTools. I always love his frank assessments as he dives right into the latest tools. His videos are worth a watch.

#chatgptatlas #aiassistant #openai #aibrowser #techethics #browsingexperience #agentmode #deeplearningwiththewolf #dianawolftorres #openailivestream #agenticweb #mreflow This is a public episode. If you would like to discuss this with other subscribers or get access to bonus episodes, visit dianawolftorres.substack.com