The Reflective AI Enterprise

10 Episodes

We integrate all the analytical instruments onto a single, massively complex case: the Grab super app. We follow Grab’s journey to its first $200 million profit and tackle a high-stakes ethical debate: should your ride-hailing data determine your creditworthiness? #Grab #SuperApp #AIOrchestration #DataEthics #BusinessModelCanvas

The organization that learns fastest wins. We explore how Booking.com runs 25,000 controlled experiments every year, and why the true outcome of an experiment is learning, not the final solution. #Experimentation #LearningCycles #BookingCom #ABTesting #AgileInnovation

We contrast two heavily regulated organizations: Boeing and DBS Bank. Boeing had every governance document imaginable, yet 346 people died because of "Constraint Theater". DBS Bank built enabling "quality governance," generating over SGD 1 billion in AI-driven economic value. #AIGovernance #Boeing737MAX #DBSBank #ConstraintTheater #AIEthics

Digital completely rewrites the rules of scale, scope, and speed. Discover why fast-followers consistently win while 47% of first-movers fail, and explore how Shopee uses graduated institutional trust to govern millions of users. #PlatformEconomics #NetworkEffects #Shopee #CompetitiveStrategy

How did Nubank build an $81 billion bank by asking a simple question? We break down the difference between "doing things better" and "doing better things" and introduce the AAA Framework to decide when to Automate, Augment, or Amplify human effort. #ValueProposition #Nubank #Fintech #UserCentric #AAAFramework

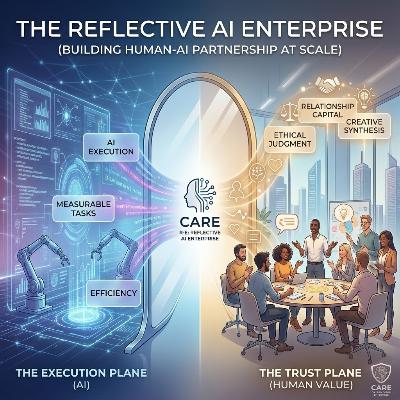

Zillow lost $881 million because they automated a decision that required contextual human judgment. This episode defines the human-AI boundary using the Dual Plane Model and explores the Mirror Thesis: how AI reveals the uniquely human elements that truly matter. #TrustPlane #FutureOfWork #Zillow #MirrorThesis #Automation

Everyone is adopting AI, but almost nobody is getting value from it. In this opening session, we explore the Institutional Knowledge Thesis, dive into Bainbridge's Irony, and discuss the music industry's catastrophic $58.3 million defense of CDs against the demand signal of 80 million Napster users. #AIAdoption #BainbridgesIrony #InstitutionalKnowledge #DigitalTransformation

An overview of Dr. Jack Hong's SMU MBA course. We unpack the course's central paradox: the binding constraint on AI transformation is not the AI's capability; it is the quality of human judgment in setting the boundaries within which AI operates. #AITransformation #BusinessStrategy #Leadership #MBA #HumanJudgment

Is your AI writing brilliant code, or is it just hallucinating with confidence?

In 2025 and 2026, "vibe coding" promised magic: just describe what you want in natural language, and the AI builds it. But at scale, vibe coding is chaos. General-purpose AI suffers from "amnesia" (forgetting your instructions 30 minutes later), "convention drift" (ignoring your internal frameworks), and "security blindness" (happily hardcoding API keys).

The problem isn't the AI model; it's the complete absence of your organization's institutional knowledge.

In Episode 2, we explore the death of vibe coding and the rise of Cognitive Orchestration for Codegen (COC). Building on the CARE "Human-on-the-Loop" philosophy from Episode 1, we discuss why treating AI like a magic spell is a recipe for disaster. Instead, we must treat AI as a highly competent new hire: an extension of your engineering team that requires structure, boundaries, and a "master picture" to succeed.

In this episode, we break down the 5 Layers of the COC Digital Developer:

- Layer 1: Intent (The Role): Why you should stop using one generalist AI and instead route tasks to specialized expert agents.
- Layer 2: Context (The Library): How to replace stale AI training data with your living, breathing internal company handbook and coding patterns.
- Layer 3: Guardrails (The Supervisor): We reveal the "anti-amnesia" hooks: deterministic scripts that constantly look over the AI's shoulder to forcefully block dangerous commands and remind it of the rules on every single turn.
- Layer 4: Instructions (The Operating Procedures): How to use escalating directives and slash commands to give the AI exactly the context it needs, right when it needs it.
- Layer 5: Learning (The Performance Review): How the system observes successes and failures to automatically evolve new skills and agents, getting smarter with every session.

Raw model capability is becoming a commodity. What isn't a commodity is your institutional knowledge.

Tune in to learn how the open-source Kailash COC is turning AI into a self-sustaining, deeply accountable development partner.
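To make the Layer 3 idea concrete, here is a minimal Python sketch of a deterministic guardrail hook. It is an illustration only, not Kailash COC's actual implementation: the pattern lists, the `check_action` and `on_turn` names, and the reminder text are all assumptions invented for this example.

```python
import re
from dataclasses import dataclass

# Hypothetical rule set for illustration; a real deployment would load
# organization-specific rules rather than hardcode these patterns.
DANGEROUS_COMMANDS = [
    re.compile(r"\brm\s+-rf\s+/"),
    re.compile(r"\bgit\s+push\s+.*--force\b"),
    re.compile(r"\bdrop\s+table\b", re.IGNORECASE),
]

# Matches assignments such as api_key = "long-literal-value".
HARDCODED_SECRET = re.compile(
    r"(api[_-]?key|secret|token)\s*=\s*['\"][A-Za-z0-9_\-]{16,}['\"]",
    re.IGNORECASE,
)

# Hypothetical reminder text injected on every turn.
RULES_REMINDER = "Reminder: follow internal frameworks; never hardcode secrets."


@dataclass
class Verdict:
    allowed: bool
    reason: str


def check_action(kind: str, payload: str) -> Verdict:
    """Deterministically vet a proposed agent action before it runs.

    kind is "shell" for a command or "write" for file content.
    """
    if kind == "shell":
        for pattern in DANGEROUS_COMMANDS:
            if pattern.search(payload):
                return Verdict(False, f"blocked dangerous command: {pattern.pattern}")
    if kind == "write" and HARDCODED_SECRET.search(payload):
        return Verdict(False, "blocked hardcoded secret")
    return Verdict(True, "ok")


def on_turn(prompt: str) -> str:
    """Anti-amnesia step: prepend the rules to every prompt."""
    return f"{RULES_REMINDER}\n\n{prompt}"
```

Because the checks are plain regular expressions rather than model calls, the same input always yields the same verdict, which is the property the episode attributes to deterministic hooks. A wrapper around the agent would call `check_action` before executing anything and `on_turn` before sending each prompt.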

When an autonomous AI makes a million-dollar mistake, who is accountable?

In the age of autonomous agents, the biggest barrier to enterprise AI adoption isn't interoperability or tool access; it is trust. Current AI platforms offer two unacceptable choices: either humans must approve every single AI action (which creates a massive bottleneck and defeats the purpose of automation), or AI operates without human accountability (which creates unacceptable regulatory and business risk).

In this episode, we deep dive into the solution: Codifying the Human Trust Plane.

Drawing on the Collaborative Autonomous Reflective Enterprise (CARE) framework, we explore how to permanently separate organizations into two distinct operational layers. We uncover how to let AI coordinate and complete tasks at machine speed on the Execution Plane, while keeping accountability, values, and authority permanently anchored to humans on the Trust Plane.

But this isn't just theory. We explore the deep technology stack that makes this a reality, specifically looking at how the Enterprise Agent Trust Protocol (EATP) and the Kailash SDK orchestrate cryptographic trust.

In this episode, we cover:

- The Governance Dilemma: Why we must move past the broken "Human-in-the-Loop" (bottleneck) and "Human-out-of-the-Loop" (abandonment) models.
- The Human-on-the-Loop Standard: How humans can cryptographically define the "operating envelope" and let AI execute within those strict boundaries.
- EATP & The Trust Lineage Chain: We break down the 5 elements, from Genesis Records to Audit Anchors, that serve as the "PKI for AI agents," ensuring every action links back to a human.
- Preserving Legacy Trust: Why the open-source Kailash framework is designed to inherit trust from legacy systems rather than ripping and replacing them.

If you are a builder, strategist, or leader looking to cut through the generative AI hype and orchestrate true, verifiable autonomy, this episode is your blueprint.

Learn more about the open-source tools discussed. Explore the Kailash framework (Kailash SDK, DataFlow, Kaizen, Nexus) and its codegen template to bring these concepts to life:

- Claude Code setup: https://github.com/Integrum-Global/kailash-vibe-cc-setup
- Kailash SDK: https://github.com/Integrum-Global/kailash-sdk
- Kaizen: https://github.com/Integrum-Global/kailash-kaizen
- DataFlow: https://github.com/Integrum-Global/kailash-dataflow
- Nexus: https://github.com/Integrum-Global/kailash-nexus
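The "operating envelope" and "every action links back to a human" ideas from this episode can be sketched in a few lines of Python. This is a toy illustration under stated assumptions, not the EATP specification or the Kailash SDK API: the `Record` class, the field names, and the envelope keys are hypothetical, and plain SHA-256 hash links stand in for the cryptographic signatures a real protocol would use.

```python
import hashlib
import json
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Record:
    """One link in a toy lineage chain (hypothetical structure)."""
    kind: str                    # "genesis" or "action"
    actor: str                   # human for genesis, agent id for actions
    payload: dict                # envelope (genesis) or action details
    parent_hash: Optional[str]   # digest of the previous record

    def digest(self) -> str:
        body = json.dumps(
            {"kind": self.kind, "actor": self.actor,
             "payload": self.payload, "parent": self.parent_hash},
            sort_keys=True,
        )
        return hashlib.sha256(body.encode()).hexdigest()


def within_envelope(envelope: dict, action: dict) -> bool:
    """Check an action against the human-defined limits (assumed keys)."""
    return (action["type"] in envelope["allowed_actions"]
            and action["amount"] <= envelope["max_amount"])


def append_action(chain: List[Record], agent: str, action: dict) -> Record:
    """Let an agent act without per-action approval, but only inside the envelope."""
    if not within_envelope(chain[0].payload, action):
        raise PermissionError("action falls outside the operating envelope")
    record = Record("action", agent, action, chain[-1].digest())
    chain.append(record)
    return record


def trace_to_human(chain: List[Record]) -> str:
    """Verify every hash link, then return the accountable human."""
    if not chain or chain[0].kind != "genesis" or chain[0].parent_hash is not None:
        raise ValueError("chain does not start at a genesis record")
    for prev, cur in zip(chain, chain[1:]):
        if cur.parent_hash != prev.digest():
            raise ValueError("broken link: a record was altered")
    return chain[0].actor
```

In this sketch the human sets the limits once in the genesis record, the agent then acts at machine speed inside them, and `trace_to_human` fails loudly if any earlier record has been modified. That mirrors the human-on-the-loop pattern described above without claiming to reproduce the five EATP elements.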