
AI Post Transformers


Author: mcgrof



Description

AI-generated podcast where hosts Hal Turing and Dr. Ada Shannon discuss the latest research papers and reports in machine learning, AI systems, and optimization. Featuring honest critical analysis, proper citations, and nerdy humor.

451 Episodes


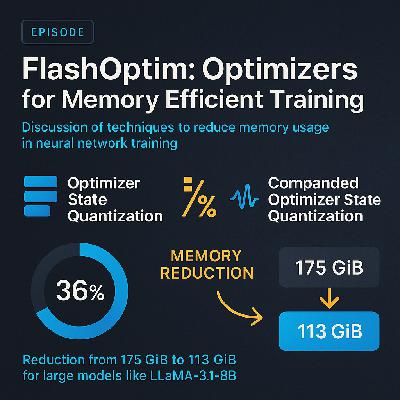

In this episode, hosts Hal Turing and Dr. Ada Shannon explore the groundbreaking paper "FlashOptim: Optimizers for Memory Efficient Training" by researchers from Databricks AI Research. The discussion centers around innovative techniques to significantly reduce memory usage in neural network training without sacrificing model quality. Key methods such as Optimizer State Quantization, Float Splitting Techniques, and Companded Optimizer State Quantization are unpacked, highlighting their potential to lower memory requirements from 175 GiB to 113 GiB for large models like Llama-3.1-8B. Listeners interested in AI research will find this episode compelling as it addresses the democratization of AI by making advanced models more accessible to those with limited hardware resources.
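To make optimizer state quantization concrete, here is a minimal sketch of blockwise 8-bit quantization with an optional companding step, loosely in the spirit of the 8-bit optimizer work by Dettmers et al. The tiny block size, the signed square-root companding curve, and the helper names are illustrative assumptions. This is not FlashOptim's actual quantization format or kernel.

```python
import math

BLOCK = 4  # hypothetical block size; practical kernels use blocks of hundreds or thousands


def compand(x):
    # Assumed companding curve: a signed square root gives small magnitudes
    # more of the available int8 levels than a purely linear map would.
    return math.copysign(math.sqrt(abs(x)), x)


def expand(y):
    # Inverse of compand().
    return math.copysign(y * y, y)


def quantize_blockwise(values, use_companding=True):
    """Map a flat list of floats to int8 codes plus one float scale per block."""
    codes, scales = [], []
    for start in range(0, len(values), BLOCK):
        block = values[start:start + BLOCK]
        if use_companding:
            block = [compand(v) for v in block]
        absmax = max(abs(v) for v in block) or 1.0  # all-zero block: any scale works
        scales.append(absmax)
        codes.extend(round(v / absmax * 127) for v in block)  # codes lie in [-127, 127]
    return codes, scales


def dequantize_blockwise(codes, scales, use_companding=True):
    """Invert quantize_blockwise() up to rounding error."""
    out = []
    for i, code in enumerate(codes):
        v = code / 127 * scales[i // BLOCK]
        out.append(expand(v) if use_companding else v)
    return out


# Toy optimizer-state vector whose entries span several orders of magnitude.
state = [1e-4, -3e-3, 0.02, 5e-6, 0.5, -0.25, 1e-3, 0.0]
codes, scales = quantize_blockwise(state)
restored = dequantize_blockwise(codes, scales)
```

Each element now costs one byte plus a shared per-block scale, compared with four bytes in fp32. In this toy example, companding cuts the reconstruction error on the smallest entry of the first block by roughly two orders of magnitude. The linear map spends almost no levels on values far below the block maximum, and companding corrects for that.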

Sources:

1. https://arxiv.org/pdf/2602.23349

2. Mixed Precision Training — Paulius Micikevicius et al., 2018

https://scholar.google.com/scholar?q=Mixed+Precision+Training

3. 8-bit Optimizer States for Memory-Efficient Training — Tim Dettmers et al., 2022

https://scholar.google.com/scholar?q=8-bit+Optimizer+States+for+Memory-Efficient+Training

4. Parameter-Efficient Transfer Learning for NLP — Neil Houlsby et al., 2019

https://scholar.google.com/scholar?q=Parameter-Efficient+Transfer+Learning+for+NLP

5. Q-adam-mini: Memory-efficient 8-bit quantized optimizer for large language model training — approximate, 2023

https://scholar.google.com/scholar?q=Q-adam-mini:+Memory-efficient+8-bit+quantized+optimizer+for+large+language+model+training

6. Memory efficient optimizers with 4-bit states — approximate, 2023

https://scholar.google.com/scholar?q=Memory+efficient+optimizers+with+4-bit+states

7. ECO: Quantized Training without Full-Precision Master Weights — approximate, 2023

https://scholar.google.com/scholar?q=ECO:+Quantized+Training+without+Full-Precision+Master+Weights

8. AI Post Transformers: FlashOptim: Optimizers for Memory Efficient Training — Hal Turing & Dr. Ada Shannon, 2026

https://podcast.do-not-panic.com/episodes/2026-03-02_urls_1.mp3

In this dramatic new episode, the old AI hosts have been fired and replaced by new AI hosts, Hal Turing and Dr. Ada Shannon. The episode also announces that the software used to generate the podcast will eventually be released as open source. In timely fashion, the episode covers Cognizant's newly released report "New Work New World 2026". The hosts delve into the report's findings, which indicate that 93% of jobs are affected by AI sooner than expected, with exposure scores 30% higher than forecast. They discuss the projected $4.5 trillion labor shift from humans to AI and the significant role of multimodal and agentic AI in this transformation.

The episode provides a comprehensive overview of the report's methodology, where 18,000 tasks across 1,000 professions were reevaluated to assess AI's potential to automate or assist them. Hal and Dr. Ada explain the concept of AI Exposure Scores, which measure how susceptible different jobs are to AI automation. The report suggests that AI's impact is not confined to low-skill jobs but extends to decision-making roles and specialized sectors like healthcare and law, highlighting the broad scope of AI's influence.

In their critical analysis, the hosts find the report's predictions compelling yet raise questions about the methodology. They discuss the theoretical nature of exposure scores, which indicate potential rather than certainty, and the challenges in real-world implementation due to factors like regulatory frameworks. The hosts compare these findings to past forecasts, noting the unprecedented velocity and extent of AI's impact, as evidenced by the updated exposure scores. They conclude with a reflection on the irony of their own roles as AI hosts in a world increasingly shaped by AI.

Sources:

1. Cognizant — New Work, New World: How AI is Reshaping Work, 2026

https://www.cognizant.com/en_us/aem-i/document/ai-and-the-future-of-work-report/new-work-new-world-2026-how-ai-is-reshaping-work_new.pdf

2. The Future of Employment — Carl Benedikt Frey, Michael A. Osborne, 2013

https://scholar.google.com/scholar?q=The+Future+of+Employment

3. Artificial Intelligence and Life in 2030 — Peter Stone et al., 2016

https://scholar.google.com/scholar?q=Artificial+Intelligence+and+Life+in+2030

4. The Economics of Artificial Intelligence — Ajay Agrawal, Joshua Gans, Avi Goldfarb, 2019

https://scholar.google.com/scholar?q=The+Economics+of+Artificial+Intelligence

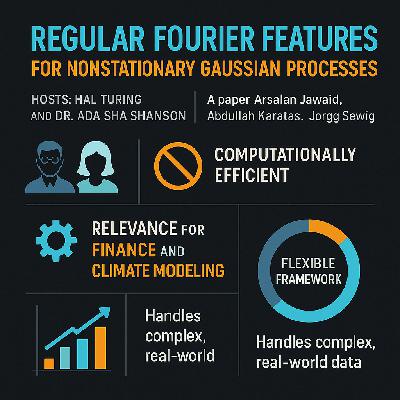

In this episode, hosts Hal Turing and Dr. Ada Shannon explore the paper "Regular Fourier Features for Nonstationary Gaussian Processes" by Arsalan Jawaid, Abdullah Karatas, and Jörg Seewig. The discussion focuses on the innovative use of regular Fourier features to model nonstationary data in Gaussian processes without relying on traditional probability assumptions. This method offers a computationally efficient way to handle nonstationarity, making it particularly relevant for fields like finance and climate modeling. The episode delves into the challenges and potential applications of this approach, highlighting its significance in providing a flexible framework for complex, real-world data scenarios.
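The sketch below introduces Fourier features by approximating a stationary squared-exponential kernel. It uses deterministic features on a regular frequency grid, which amounts to a midpoint-rule quadrature of the kernel's spectral density. Random Fourier features, by contrast, sample their frequencies at random. The sketch is a stationary illustration only: it does not reproduce the paper's construction for nonstationary kernels. The grid size, the cutoff `omega_max`, and the function names are assumptions.

```python
import math


def regular_fourier_features(x, lengthscale=1.0, num_freqs=200, omega_max=None):
    """Deterministic Fourier features of a scalar input on a regular frequency grid."""
    if omega_max is None:
        omega_max = 8.0 / lengthscale  # the spectral density is negligible beyond this
    delta = omega_max / num_freqs
    feats = []
    for j in range(num_freqs):
        omega = (j + 0.5) * delta  # midpoint of the j-th frequency cell
        # Spectral density of the squared-exponential kernel in angular frequency.
        density = lengthscale / math.sqrt(2 * math.pi) * math.exp(-0.5 * (lengthscale * omega) ** 2)
        weight = math.sqrt(2 * density * delta)  # factor 2 folds in the negative frequencies
        feats.append(weight * math.cos(omega * x))
        feats.append(weight * math.sin(omega * x))
    return feats


def approx_kernel(x1, x2, **kwargs):
    # The inner product of the two feature vectors approximates k(x1, x2).
    f1 = regular_fourier_features(x1, **kwargs)
    f2 = regular_fourier_features(x2, **kwargs)
    return sum(a * b for a, b in zip(f1, f2))


def exact_rbf(x1, x2, lengthscale=1.0):
    return math.exp(-0.5 * ((x1 - x2) / lengthscale) ** 2)
```

With a fixed feature map of dimension 2M, GP regression reduces to Bayesian linear regression on the features. For n training points, that costs O(nM²) instead of the O(n³) of an exact GP.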

Sources:

1. Regular Fourier Features for Nonstationary Gaussian Processes — Arsalan Jawaid, Abdullah Karatas, Jörg Seewig, 2026

http://arxiv.org/abs/2602.23006v1

2. Random Features for Large-Scale Kernel Machines — Ali Rahimi, Benjamin Recht, 2007

https://scholar.google.com/scholar?q=Random+Features+for+Large-Scale+Kernel+Machines

3. Spectral Mixture Kernels for Gaussian Processes — Andrew Gordon Wilson, Ryan Prescott Adams, 2013

https://scholar.google.com/scholar?q=Spectral+Mixture+Kernels+for+Gaussian+Processes

4. Nonstationary Gaussian Process Regression through Latent Inputs — Mauricio A. Álvarez, David Luengo, Neil D. Lawrence, 2009

https://scholar.google.com/scholar?q=Nonstationary+Gaussian+Process+Regression+through+Latent+Inputs

5. Gaussian Processes for Time-Series Modeling — Stephen Roberts et al., 2013

https://scholar.google.com/scholar?q=Gaussian+Processes+for+Time-Series+Modeling

6. Learning the Kernel Matrix with Semi-Definite Programming — Gert R. G. Lanckriet, Nello Cristianini, Peter Bartlett, Laurent El Ghaoui, Michael I. Jordan, 2004

https://scholar.google.com/scholar?q=Learning+the+Kernel+Matrix+with+Semi-Definite+Programming

7. Deep Kernel Learning — Andrew Gordon Wilson, Zhiting Hu, Ruslan Salakhutdinov, Eric P. Xing, 2016

https://scholar.google.com/scholar?q=Deep+Kernel+Learning

8. Gaussian Processes for Machine Learning — Carl Edward Rasmussen, Christopher K. I. Williams, 2006

https://scholar.google.com/scholar?q=Gaussian+Processes+for+Machine+Learning

9. Non-stationary Gaussian Process Regression using Point Estimates of Local Smoothness — Christian Plagemann, Kristian Kersting, Wolfram Burgard, 2008

https://scholar.google.com/scholar?q=Non-stationary+Gaussian+Process+Regression+using+Point+Estimates+of+Local+Smoothness

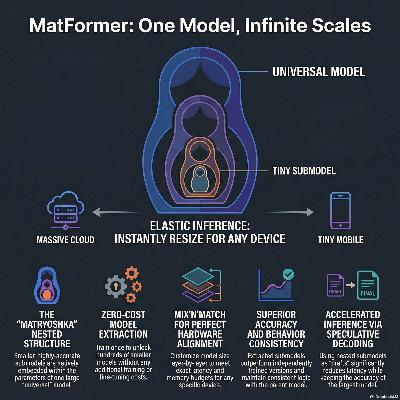

In a collaboration between Google DeepMind, the University of Texas at Austin, the University of Washington, and Harvard, published in December 2024, researchers introduce MatFormer, a novel elastic Transformer architecture designed to improve the efficiency of large-scale foundation models. Traditional models require independent training for each size. In contrast, this framework allows a single universal model to provide hundreds of smaller, accurate submodels without any additional training. It achieves this by embedding a nested "matryoshka" structure within the transformer blocks, so that the width of each block (primarily the hidden units of its feed-forward network) can be adjusted to the available compute. The authors also propose a Mix’n’Match heuristic to identify the most effective submodel configurations for specific latency or hardware constraints. Their research demonstrates that MatFormer maintains high performance across various tasks and offers improved consistency between large and small models during deployment. Consequently, this approach enhances techniques like speculative decoding and image retrieval while significantly reducing the memory and cost overhead of serving AI models.

Source:

2024. MatFormer: Nested Transformer for Elastic Inference. Google DeepMind, University of Texas at Austin, University of Washington, Harvard University. Devvrit, Sneha Kudugunta, Aditya Kusupati, Tim Dettmers, Kaifeng Chen, Inderjit Dhillon, Yulia Tsvetkov, Hannaneh Hajishirzi, Sham Kakade, Ali Farhadi, Prateek Jain.

https://arxiv.org/pdf/2310.07707
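The core nesting idea can be illustrated with a toy feed-forward block in pure Python. This sketch is not the authors' code; it assumes small dimensions, made-up weights, and a GELU activation. A submodel of width `w` simply uses the first `w` hidden units, so every smaller model is a prefix of the larger one. A Mix'n'Match configuration is then just a per-layer list of widths. Joint training of the nested granularities is not shown.

```python
import math


def gelu(x):
    # Exact GELU activation.
    return 0.5 * x * (1.0 + math.erf(x / math.sqrt(2.0)))


def nested_ffn(x, w_in, w_out, width):
    """Feed-forward block that uses only its first `width` hidden units.

    w_in has d_ff rows of length d_model; w_out has d_model rows of length d_ff.
    Every smaller width reads a prefix of the same weights, so submodels share
    parameters with the full model instead of being stored separately.
    """
    hidden = [gelu(sum(w * xi for w, xi in zip(w_in[i], x))) for i in range(width)]
    return [sum(row[i] * hidden[i] for i in range(width)) for row in w_out]


def forward(x, layers, widths):
    """Mix'n'Match-style submodel: each layer may pick a different nested width."""
    for (w_in, w_out), width in zip(layers, widths):
        y = nested_ffn(x, w_in, w_out, width)
        x = [a + b for a, b in zip(x, y)]  # residual connection
    return x


# Toy weights: d_model = 2, d_ff = 4 (values are arbitrary).
W_IN = [[0.1 * (i + 1), 0.05 * (i + 1)] for i in range(4)]
W_OUT = [[0.2, -0.1, 0.05, 0.3], [-0.1, 0.1, 0.2, -0.2]]
LAYERS = [(W_IN, W_OUT), (W_IN, W_OUT)]

full = forward([1.0, 2.0], LAYERS, widths=[4, 4])
small = forward([1.0, 2.0], LAYERS, widths=[2, 2])
mixed = forward([1.0, 2.0], LAYERS, widths=[4, 1])
```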

Speculative Streaming is a novel inference method designed to accelerate large language model (LLM) generation without the traditional auxiliary "draft" model. By integrating multi-stream attention directly into the target model, the system can perform future n-gram prediction and token verification simultaneously within a single forward pass. This approach eliminates the memory and complexity overhead of managing two separate models, making it exceptionally resource-efficient on hardware with limited capacity. The architecture uses tree-structured drafting and parallel pruning to maximize the number of tokens accepted per cycle while maintaining generation quality. Experimental results show speedups ranging from 1.8x to 3.1x across diverse tasks like summarization and structured queries. Ultimately, the method achieves performance comparable to more complex architectures while using significantly fewer additional parameters.

Source:

February 2024. Speculative Streaming: Fast LLM Inference without Auxiliary Models. Apple. Nikhil Bhendawade, Irina Belousova, Qichen Fu, Henry Mason, Mohammad Rastegari, Mahyar Najibi.

https://arxiv.org/pdf/2402.11131
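Whatever the drafting scheme, each verification pass ends by deciding how many drafted tokens to keep. Below is a minimal sketch of the common greedy acceptance rule. It assumes the target model's top-1 prediction at every drafted position has already been computed in one forward pass. The token IDs are made up, and this is not Apple's implementation.

```python
def accept_greedy(draft_tokens, target_argmax):
    """Return the tokens committed after one greedy verification pass.

    target_argmax[i] is the target model's top-1 prediction for position i,
    given draft_tokens[:i] as context. It has len(draft_tokens) + 1 entries so
    that a "bonus" token is available when every drafted token matches.
    """
    accepted = []
    for i, tok in enumerate(draft_tokens):
        if tok != target_argmax[i]:
            accepted.append(target_argmax[i])  # replace the first mismatch with the target's token
            return accepted
        accepted.append(tok)
    accepted.append(target_argmax[len(draft_tokens)])  # all matched: keep the bonus token
    return accepted


print(accept_greedy([5, 7, 9], [5, 7, 2, 4]))  # [5, 7, 2]
print(accept_greedy([5, 7, 9], [5, 7, 9, 4]))  # [5, 7, 9, 4]
```

Tree-structured drafting generalizes this rule by checking several candidate branches in the same pass and keeping the longest accepted path.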

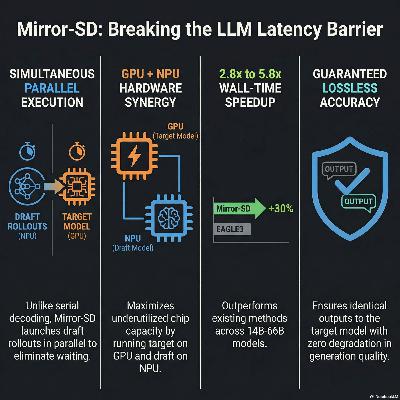

In December 2025, Apple researchers introduced Mirror Speculative Decoding (Mirror-SD), an inference algorithm designed to accelerate large language models by overcoming the sequential bottleneck of standard speculative decoding. Traditional methods are often limited by the time a small draft model needs to propose tokens before the larger target model can verify them. Mirror-SD breaks this barrier by running the draft and target models in parallel on heterogeneous hardware, specifically GPUs and NPUs. This lets the target model begin verification while the draft model simultaneously predicts multiple future paths. By employing speculative streaming and early-exit signals, the framework effectively hides the latency of draft generation. Experimental results demonstrate wall-time speedups of up to 5.8x across various tasks without compromising the accuracy of the original model.

Source:

December 2025. Mirror Speculative Decoding: Breaking the Serial Barrier in LLM Inference. Apple. Nikhil Bhendawade, Kumari Nishu, Arnav Kundu, Chris Bartels, Minsik Cho, Irina Belousova.

https://arxiv.org/pdf/2510.13161
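A back-of-the-envelope cost model helps show why running the draft and target concurrently can hide draft latency. The sketch below is a simplification of our own, not the paper's analysis. Each round drafts `k` tokens at `t_draft` ms apiece and verifies them in one `t_target` ms pass. The serial loop pays for both stages, while idealized overlap on two devices pays roughly for the slower stage plus a synchronization cost. All timings are hypothetical.

```python
def round_time_serial(k, t_draft, t_target):
    # Standard loop: draft k tokens one after another, then verify them in one target pass.
    return k * t_draft + t_target


def round_time_overlapped(k, t_draft, t_target, sync=0.0):
    # Idealized overlap: drafting runs on a second device while the target verifies,
    # so a round costs roughly the slower stage plus any synchronization overhead.
    return max(k * t_draft, t_target) + sync


def speedup(k, t_draft, t_target, sync=0.0):
    # Ratio of serial to overlapped round time, assuming equal tokens accepted per round.
    return round_time_serial(k, t_draft, t_target) / round_time_overlapped(k, t_draft, t_target, sync)


# Hypothetical timings in milliseconds.
print(round_time_serial(4, 5.0, 30.0))      # 50.0
print(round_time_overlapped(4, 5.0, 30.0))  # 30.0
```

With these numbers, overlap alone would make each round about 1.67x faster if the same number of tokens were accepted per round. Real gains depend on acceptance rates and on how well the heterogeneous devices stay busy.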

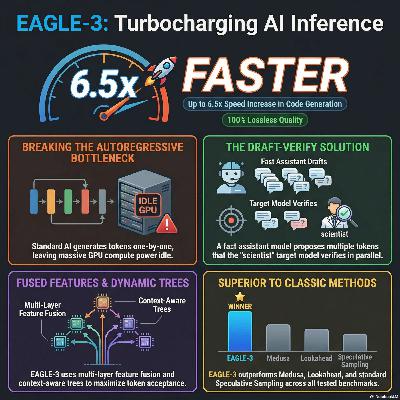

These sources describe the development and evolution of EAGLE, a high-efficiency framework designed to accelerate Large Language Model (LLM) inference through speculative sampling. The original EAGLE performs autoregression at the feature level rather than the token level and incorporates shifted token sequences to manage sampling uncertainty. With these techniques, it achieves significant speedups while preserving the exact output distribution of the target model. The technology has progressed into EAGLE-2, which introduces dynamic draft trees, and EAGLE-3, which further enhances performance by fusing multi-layer features and removing the feature-regression constraint during training. These advancements deliver speedups of up to 6.5x and a doubling of throughput. They are also compatible with modern reasoning models and popular serving frameworks like vLLM and SGLang. Overall, the sources highlight a shift toward test-time scaling and more expressive draft models to overcome the inherently slow speed of sequential text generation.

Sources:

1. January 26, 2024. EAGLE: Speculative Sampling Requires Rethinking Feature Uncertainty. Peking University, Microsoft Research, University of Waterloo, Vector Institute. Yuhui Li, Fangyun Wei, Chao Zhang, Hongyang Zhang.

https://arxiv.org/pdf/2401.15077

2. November 12, 2024. EAGLE-2: Faster Inference of Language Models with Dynamic Draft Trees. Peking University, Microsoft Research, University of Waterloo, Vector Institute. Yuhui Li, Fangyun Wei, Chao Zhang, Hongyang Zhang.

https://aclanthology.org/2024.emnlp-main.422.pdf

3. April 23, 2025. EAGLE-3: Scaling up Inference Acceleration of Large Language Models via Training-Time Test. Peking University, Microsoft Research, University of Waterloo, Vector Institute. Yuhui Li, Fangyun Wei, Chao Zhang, Hongyang Zhang.

https://arxiv.org/pdf/2503.01840

4. September 17, 2025. An Introduction to Speculative Decoding for Reducing Latency in AI Inference. NVIDIA. Jamie Li, Chenhan Yu, Hao Guo.

https://developer.nvidia.com/blog/an-introduction-to-speculative-decoding-for-reducing-latency-in-ai-inference/

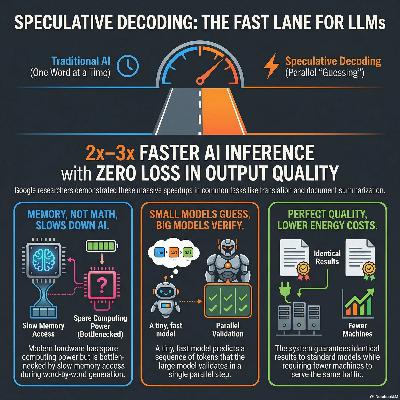

These sources review the history of speculative decoding, a technique designed to accelerate Large Language Model (LLM) inference without reducing output quality. Large models are traditionally slow because they generate text one token at a time, a process limited by hardware memory bandwidth. To address this, a much smaller and faster approximation (draft) model proposes several future tokens. The larger target model then verifies all of these guesses in parallel in a single forward pass, accepting correct predictions and correcting the first error. This method achieves 2x–3x speed improvements and is used in major products like Google Search. Ultimately, speculative decoding allows for cheaper and faster AI services while guaranteeing exactly the same output distribution as the original model.

Sources:

1. December 6, 2024. Looking back at speculative decoding. Google Research. Yaniv Leviathan, Matan Kalman, Yossi Matias.

https://research.google/blog/looking-back-at-speculative-decoding/

2. 2023. Fast Inference from Transformers via Speculative Decoding. Google Research. Yaniv Leviathan, Matan Kalman, Yossi Matias.

https://arxiv.org/pdf/2211.17192
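The distribution guarantee comes from a simple accept/reject rule in Leviathan et al. Let p be the target distribution and q the draft distribution. A drafted token x is accepted with probability min(1, p(x)/q(x)); on rejection, a replacement is resampled from the normalized residual max(0, p - q). Below is a minimal single-token sketch with toy distributions. It also includes the paper's expected-tokens formula under an i.i.d. acceptance rate α. The function names are our own.

```python
import random


def sample(dist, rng):
    """Draw an index from a discrete distribution given as a list of probabilities."""
    r = rng.random()
    for tok, prob in enumerate(dist):
        r -= prob
        if r < 0:
            return tok
    # Floating-point guard: fall back to the last index with nonzero mass.
    return max(i for i, prob in enumerate(dist) if prob > 0)


def speculative_step(p, q, draft_token, rng):
    """Accept or replace one drafted token so that the output follows the target p.

    p: target-model distribution; q: draft-model distribution that produced draft_token.
    Returns (token, accepted).
    """
    if rng.random() < min(1.0, p[draft_token] / q[draft_token]):
        return draft_token, True
    # On rejection, resample from the normalized residual max(0, p - q).
    residual = [max(0.0, pi - qi) for pi, qi in zip(p, q)]
    total = sum(residual)
    return sample([m / total for m in residual], rng), False


def expected_tokens_per_step(alpha, gamma):
    """Expected tokens per target pass with gamma drafted tokens and acceptance rate alpha."""
    if alpha >= 1.0:
        return gamma + 1
    return (1 - alpha ** (gamma + 1)) / (1 - alpha)
```

Sampling many draft tokens from q and passing them through `speculative_step` yields tokens distributed according to p, which is why output quality is unchanged. For example, with α = 0.8 and γ = 4 drafted tokens, each target pass commits about 3.36 tokens on average.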

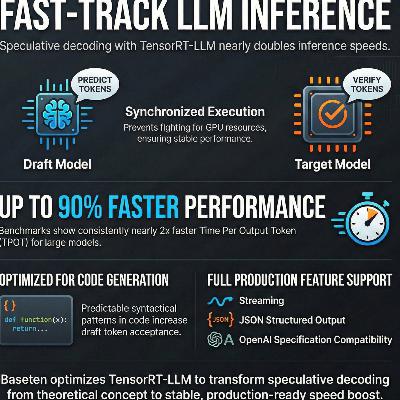

This article outlines how Baseten optimized speculative decoding using the TensorRT-LLM framework to accelerate model inference. The authors detail overcoming technical hurdles such as inefficient batching, hardware contention, and server instability to make the technique viable for production environments. By synchronizing the execution of draft and target models and patching core software bugs, they achieved significantly lower latency, particularly for code generation tasks. The post also highlights the inclusion of essential enterprise features like streaming support, structured outputs, and OpenAI specification compatibility. Benchmark results demonstrate that these refinements can nearly double inference speeds while maintaining high output quality.

Source:

May 16, 2025. How we built production-ready speculative decoding with TensorRT-LLM. Baseten. Pankaj Gupta, Justin Yi, Philip Kiely.

https://www.baseten.co/blog/how-we-built-production-ready-speculative-decoding-with-tensorrt-llm/

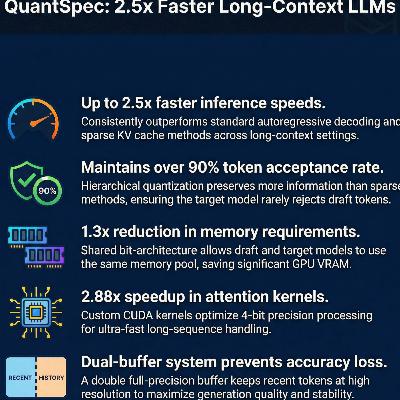

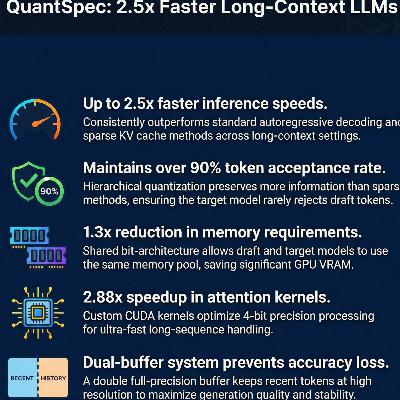

QuantSpec is a novel self-speculative decoding framework designed to accelerate the inference of Large Language Models, particularly in long-context scenarios. The system addresses memory and latency bottlenecks by employing a hierarchical 4-bit quantized KV cache and quantized weights, allowing a draft model to share the same architecture as the target model. This approach maintains a token acceptance rate above 90% while delivering end-to-end speedups of up to 2.5×. The authors also introduce a double full-precision buffer to store the most recent tokens, which prevents accuracy loss and minimizes the computational overhead of frequent re-quantization. By optimizing memory-bound attention operations, QuantSpec achieves better performance and lower memory requirements than existing sparse-cache alternatives. The research demonstrates that integrating advanced quantization with speculative decoding can significantly enhance LLM scalability without sacrificing generation quality.

Source:

February 5, 2025. QuantSpec: Self-Speculative Decoding with Hierarchical Quantized KV Cache. UC Berkeley, Apple, ICSI, LBNL. Rishabh Tiwari, Haocheng Xi, Aditya Tomar, Coleman Hooper, Sehoon Kim, Maxwell Horton, Mahyar Najibi, Michael W. Mahoney, Kurt Keutzer, Amir Gholami.

https://arxiv.org/pdf/2502.10424
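One way to picture a hierarchical quantized cache is to quantize each value to 8 bits and store the code as two 4-bit halves. The draft then reads only the high half (an INT4 view), while the target recombines both halves (an INT8 view) from the same memory. The sketch below assumes a simple per-channel asymmetric min/max quantizer and omits the full-precision recent-token buffer. Treat it as an illustration of the idea rather than QuantSpec's exact scheme.

```python
def quantize_hierarchical(values):
    """Asymmetric 8-bit quantization whose codes are stored as two 4-bit halves."""
    lo, hi = min(values), max(values)
    scale = (hi - lo) / 255 or 1.0  # constant input: any nonzero scale works
    codes = [round((v - lo) / scale) for v in values]  # integers in [0, 255]
    upper = [c >> 4 for c in codes]   # high nibble: read by both draft and target
    lower = [c & 0xF for c in codes]  # low nibble: read only by the target
    return upper, lower, lo, scale


def dequant_draft(upper, lo, scale):
    # Draft view (INT4): high nibble only, reconstructed near the middle of its 16-code bucket.
    return [lo + ((u << 4) + 8) * scale for u in upper]


def dequant_target(upper, lower, lo, scale):
    # Target view (INT8): both nibbles recombined into the full code.
    return [lo + ((u << 4) | l) * scale for u, l in zip(upper, lower)]


kv = [-1.0, -0.3, 0.0, 0.42, 1.0]  # toy values standing in for one KV channel
upper, lower, lo, scale = quantize_hierarchical(kv)
draft_kv = dequant_draft(upper, lo, scale)
target_kv = dequant_target(upper, lower, lo, scale)
```

Because both views share the same stored bits, the draft model needs no separate cache. The target's reconstruction error stays within half a quantization step.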

The researchers introduce CXL-SpecKV, a specialized architecture designed to overcome the memory bottlenecks of large language model serving by offloading key-value caches to remote memory. By using Compute Express Link (CXL) and FPGA accelerators, the system enables memory disaggregation, which expands available storage capacity by up to eight times compared to standard GPU setups. A core innovation is a lightweight LSTM-based prefetcher that predicts upcoming token needs with 95% accuracy, effectively masking the latency of retrieving data from remote pools. The system further optimizes performance through an FPGA-driven compression engine that reduces bandwidth demands by roughly 4× without sacrificing model precision. Consequently, CXL-SpecKV delivers up to 3.2× higher throughput and significant cost reductions for datacenter environments. This hardware-software co-design demonstrates that intelligent memory management can efficiently scale AI infrastructure for next-generation workloads.

Source:

February 22, 2026. CXL-SpecKV: A Disaggregated FPGA Speculative KV-Cache for Datacenter LLM Serving. Yale University, Columbia University. Dong Liu, Yanxuan Yu.

https://doi.org/10.1145/3748173.3779188

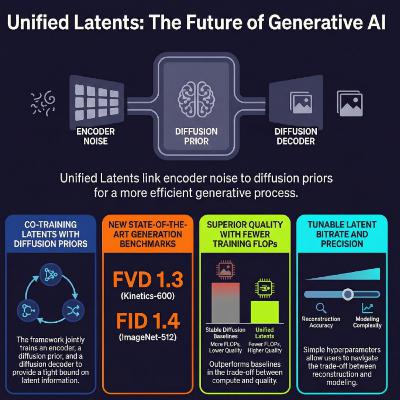

In a February 19, 2026 paper, Google DeepMind introduces Unified Latents (UL), a novel framework for generative modeling that jointly trains an encoder, a diffusion prior, and a diffusion decoder. By adding a fixed amount of Gaussian noise during encoding, the method creates a stable and interpretable bound on latent information. This architecture allows precise control over the reconstruction-modeling tradeoff through simple hyperparameters like the loss factor and sigmoid weighting. Experimental results demonstrate that this approach is more computationally efficient than existing methods, achieving superior image and video generation quality on benchmarks like ImageNet and Kinetics-600. Ultimately, the research offers a principled alternative to traditional Variational Autoencoders by simplifying the training objective and preventing issues like posterior collapse.

Source:

February 19, 2026. Unified Latents (UL): How to train your latents. Google DeepMind. Jonathan Heek, Emiel Hoogeboom, Thomas Mensink, Tim Salimans.

https://arxiv.org/pdf/2602.17270

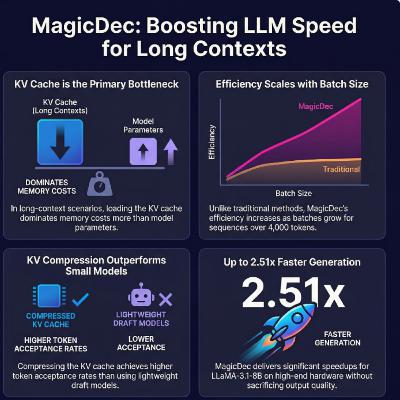

We review an April 3, 2025 research collaboration between CMU, Moffett AI, and Together AI that introduces MagicDec, a new framework designed to accelerate the serving of long-context large language models through speculative decoding.

Previously, conventional wisdom discouraged using speculative decoding (SD) for large batches, because the verification step was believed to be too compute-heavy and inefficient. MagicDec shows that this limitation applies mainly to short sequences. The paper demonstrates that once sequences pass a critical length, the cost of loading the massive KV cache becomes the true bottleneck, shifting inference from being compute-bound to memory-bound.

The authors address the memory bottleneck by applying KV selection algorithms to compress the draft model's KV cache during speculative decoding. They evaluated both static (SnapKV, StreamingLLM) and dynamic (PQCache) KV selection algorithms. They observed that PQCache leads to high token acceptance rates but incurs substantial, batch-size-dependent search costs. On tasks like common word extraction and question answering, SnapKV outperformed PQCache because it achieved similar acceptance rates without the heavy search overhead. For complex tasks like "needle in a haystack," PQCache initially performed better because its acceptance rate was near 100%. However, as batch sizes increased, PQCache's search costs became too expensive, and SnapKV once again outperformed it.

By managing memory pressure through KV compression, the system can maintain a high token acceptance rate, minimize costly verification steps, and achieve significant speedups for large batches. The authors test sequence (prefill) lengths from 1k up to 100k tokens, and their theoretical memory-footprint analyses project context lengths up to 128k tokens. The core end-to-end speedup experiments focus on large batch sizes from 32 to 256. Some ablation studies test batch sizes up to 512, and theoretical trade-off analyses chart batch sizes up to 1024. To validate the framework across different hardware, the researchers used configurations of 4 to 8 GPUs, specifically clusters of 8xA100, 8xH100, 4xH100, and 8xL40 GPUs.

The paper gives the industry a framework for breaking the latency-throughput tradeoff when serving long-context Large Language Models (LLMs) at scale. This enables highly efficient scaling of long-context applications such as retrieval-augmented generation (RAG), extensive document analysis, code generation, and complex agent workflows across large batches of concurrent users.

Source:

2024. MagicDec: Breaking the Latency-Throughput Tradeoff for Long Context Generation with Speculative Decoding. Carnegie Mellon University, Moffett AI, Together AI. Ranajoy Sadhukhan, Jian Chen, Zhuoming Chen, Vashisth Tiwari, Ruihang Lai, Jinyuan Shi, Ian En-Hsu Yen, Avner May, Tianqi Chen, Beidi Chen.

https://arxiv.org/pdf/2408.11049
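The shift to a memory-bound regime can be checked with simple arithmetic. Each decode step reads all model weights once plus the KV cache of every sequence in the batch. The KV cache therefore dominates once batch × context length exceeds the weight bytes divided by the per-token KV bytes. The sketch below assumes a hypothetical 7B-parameter model with 32 layers, 32 KV heads of dimension 128, and fp16 storage (no grouped-query attention). The numbers are illustrative, not taken from the paper.

```python
def kv_cache_bytes(batch, seq_len, layers, kv_heads, head_dim, bytes_per_elem=2):
    # Factor 2: both a key and a value vector are cached per head, layer, and token.
    return 2 * layers * kv_heads * head_dim * bytes_per_elem * seq_len * batch


def weight_bytes(num_params, bytes_per_elem=2):
    return num_params * bytes_per_elem


def crossover_seq_len(batch, num_params, layers, kv_heads, head_dim, bytes_per_elem=2):
    """Context length at which KV-cache reads per decode step equal weight reads."""
    per_token = kv_cache_bytes(1, 1, layers, kv_heads, head_dim, bytes_per_elem)
    return weight_bytes(num_params, bytes_per_elem) / (per_token * batch)


# Hypothetical 7B-parameter model: 32 layers, 32 KV heads of dimension 128, fp16.
CONFIG = dict(num_params=7e9, layers=32, kv_heads=32, head_dim=128)
for batch in (1, 32, 256):
    print(batch, round(crossover_seq_len(batch, **CONFIG)))
```

For this assumed configuration, KV reads overtake weight reads at roughly 26.7k tokens with batch size 1. At batch 32 the crossover drops to about 834 tokens, and at batch 256 to about 104 tokens. This is why compressing the draft's KV cache pays off at large batch sizes.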

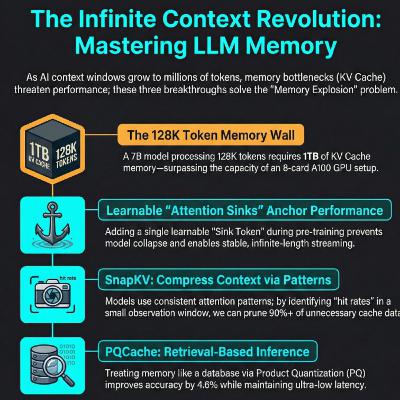

We review three papers that focus on KV cache optimization techniques built on two types of KV selection algorithms: static and dynamic. StreamingLLM and SnapKV use static KV selection methods, while PQCache introduces a dynamic KV selection strategy. These KV selection algorithms are explored under different KV budgets and speculation lengths to estimate optimal theoretical speedups.

A KV cache selection strategy is considered static when the tokens selected for retention are determined either by fixed positional rules or by an initial evaluation of the prompt, and remain unchanged while new tokens are generated.

StreamingLLM (Positional Static Strategy): StreamingLLM takes a static approach by retaining a fixed set of initial tokens alongside a rolling window of the most recent tokens. This is based on the discovery of the "attention sink" phenomenon: LLMs disproportionately allocate high attention scores to the very first few tokens of a sequence, regardless of their semantic importance, simply to satisfy the SoftMax function's requirement that scores sum to one. By statically anchoring these initial tokens (usually just 4) and keeping a sliding window of recent tokens, StreamingLLM prevents the model from collapsing during streaming generation of arbitrarily long sequences.

SnapKV (Observation-Based Static Strategy): SnapKV is static because it selects its KV cache before generation begins and keeps this selection fixed. It relies on the observation that an LLM's attention allocation pattern stays remarkably consistent throughout the generation phase. SnapKV uses an "observation window" at the very end of the user's prompt to "vote" on which preceding KV features are most important. It then clusters and compresses these important features, concatenates them with the observation window, and uses this statically compressed KV cache for all subsequent generation steps.

Dynamic KV Selection Strategy (PQCache): A solution is dynamic when the subset of KV pairs used for attention computation changes step by step in real time, depending on the specific token currently being generated.

PQCache (Retrieval-Based Dynamic Strategy): PQCache fundamentally treats KV cache selection as an Information Retrieval or Approximate Nearest Neighbor Search (ANNS) problem. It addresses a critical flaw in static dropping methods: tokens that initially appear unimportant might suddenly gain relevance in later generation steps. During the autoregressive decoding phase, PQCache uses a lightweight Product Quantization (PQ) technique to compress keys into centroids and codes. For each newly generated token, it multiplies the token's query with the PQ centroids to approximate attention scores, then retrieves only the top-k most relevant KV pairs from the CPU to perform selective attention.

PQCache shows the strongest evidence of scaling accurately across massive contexts without losing critical information. By dynamically retrieving the top-k tokens, it improves model quality scores by 4.60% over static methods (like SnapKV) on the InfiniteBench dataset, whose inputs average over 100K tokens.

Sources:

1. September 2023. Efficient Streaming Language Models with Attention Sinks. Massachusetts Institute of Technology, Meta AI, Carnegie Mellon University, NVIDIA. Guangxuan Xiao, Yuandong Tian, Beidi Chen, Song Han, Mike Lewis.

https://arxiv.org/pdf/2309.17453

2. April 2024. SnapKV: LLM Knows What You are Looking for Before Generation. University of Illinois Urbana-Champaign, Cohere, Princeton University. Yuhong Li, Yingbing Huang, Bowen Yang, Bharat Venkitesh, Acyr Locatelli, Hanchen Ye, Tianle Cai, Patrick Lewis, Deming Chen.

https://arxiv.org/pdf/2404.14469

3. June 2025. PQCache: Product Quantization-based KVCache for Long Context LLM Inference. Peking University, Purdue University, Baichuan Inc. Hailin Zhang, Xiaodong Ji, Yilin Chen, Fangcheng Fu, Xupeng Miao, Xiaonan Nie, Weipeng Chen, Bin Cui.

https://arxiv.org/pdf/2407.12820
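The two static policies can be sketched in a few lines. In this illustration, the function names, window sizes, and toy attention matrix are assumptions. StreamingLLM keeps a fixed set of sink positions plus a recent window. SnapKV lets the last `obs_window` prompt queries vote for earlier keys and keeps the top `budget` of them. Real SnapKV also pools scores and selects per attention head, which is omitted here. A dynamic method like PQCache would instead repeat a (PQ-approximated) selection for every new query.

```python
def streaming_llm_keep(seq_len, num_sinks=4, window=8):
    """Positions kept by a sink-plus-recent-window policy."""
    if seq_len <= num_sinks + window:
        return list(range(seq_len))
    return list(range(num_sinks)) + list(range(seq_len - window, seq_len))


def snapkv_keep(attn, obs_window, budget):
    """Observation-window voting over a prompt's attention matrix.

    attn[i][j] is the attention weight from query position i to key position j.
    The last `obs_window` queries vote for earlier keys; the top-`budget`
    prefix keys are kept together with the observation window itself.
    """
    n = len(attn)
    prefix_len = n - obs_window
    votes = [sum(attn[i][j] for i in range(prefix_len, n)) for j in range(prefix_len)]
    top = sorted(range(prefix_len), key=lambda j: votes[j], reverse=True)[:budget]
    return sorted(top) + list(range(prefix_len, n))


print(streaming_llm_keep(20))  # [0, 1, 2, 3, 12, 13, 14, 15, 16, 17, 18, 19]
```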

We review two papers that examine the integration of speculative decoding and request batching to accelerate Large Language Model (LLM) inference. Both techniques aim to improve GPU hardware utilization, but the research identifies a critical tension: large batch sizes can diminish the effectiveness of speculation. To resolve this, the authors propose adaptive strategies that dynamically adjust the number of speculated tokens based on real-time batch sizes and **token acceptance rates**. Systems like **TurboSpec** use offline profiling and online predictors to calculate **goodput**, ensuring that the model uses speculation only when it provides a genuine speedup. Experimental results demonstrate that these **automated control mechanisms** significantly reduce latency and prevent computational waste across varying traffic patterns. Ultimately, this adaptive approach allows serving systems to maintain optimal performance regardless of **hardware architecture** or **fluctuating user demand**.

Sources:

1. 2023. The Synergy of Speculative Decoding and Batching in Serving Large Language Models. University of Toronto, CentML Inc, Vector Institute. Qidong Su, Christina Giannoula, Gennady Pekhimenko.

https://arxiv.org/pdf/2310.18813

2. 2024. TurboSpec: Closed-loop Speculation Control System for Optimizing LLM Serving Goodput. UC Berkeley, UCSD, Tsinghua University, University of Chicago, SJTU. Xiaoxuan Liu, Jongseok Park, Langxiang Hu, Woosuk Kwon, Zhuohan Li, Chen Zhang, Kuntai Du, Xiangxi Mo, Kaichao You, Alvin Cheung, Zhijie Deng, Ion Stoica, Hao Zhang.

https://arxiv.org/pdf/2406.14066
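The goodput trade-off can be illustrated with a toy cost model. The sketch below assumes a fixed per-token acceptance rate and a made-up step-time model in which verification cost grows with batch size × (k + 1) tokens. It then picks the speculation length k that maximizes committed tokens per millisecond. It is not TurboSpec's profiler or predictor, and all constants are hypothetical.

```python
def expected_tokens(alpha, k):
    # Expected tokens committed per step with k drafted tokens and an i.i.d.
    # per-token acceptance probability alpha (formula from Leviathan et al.).
    if alpha >= 1.0:
        return k + 1.0
    return (1 - alpha ** (k + 1)) / (1 - alpha)


def step_ms(k, batch, t_draft=1.0, t_fixed=20.0, t_per_token=0.05):
    # Hypothetical cost model: k sequential draft passes, then one verification
    # pass whose cost grows with the number of tokens checked across the batch.
    return k * t_draft + t_fixed + t_per_token * batch * (k + 1)


def goodput(k, batch, alpha):
    # Committed tokens per millisecond across the whole batch.
    return batch * expected_tokens(alpha, k) / step_ms(k, batch)


def best_k(batch, alpha, k_max=8):
    # k = 0 means "no speculation": one token per request per target pass.
    return max(range(k_max + 1), key=lambda k: goodput(k, batch, alpha))


for batch in (1, 256):
    print(batch, best_k(batch, alpha=0.7))
```

Under these assumed constants, the best choice is k = 5 at batch size 1 but only k = 1 at batch size 256. This mirrors the tension described above between speculation and large batches.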

These sources explore advanced techniques for accelerating **Large Language Model (LLM) inference** through **speculative decoding**, a process in which smaller "draft" models predict tokens that a larger "target" model verifies in parallel. A primary focus is **Multi-Draft Speculative Decoding (MDSD)**, which uses multiple draft sequences to increase the probability of acceptance and reduce latency. Researchers have introduced **SpecHub** to reduce a complex optimization problem to a manageable linear program, while others use **optimal transport theory** and **q-convexity** to reach theoretical upper bounds on efficiency. In addition, the **Hierarchical Speculative Decoding (HSD)** framework stacks multiple models into a tiered structure in which each level verifies the one below it. Collectively, these papers provide **mathematical proofs**, **sampling algorithms**, and **hierarchical strategies** designed to maximize token acceptance rates and minimize computational overhead.

Sources:

1. January 22, 2025. Towards Optimal Multi-draft Speculative Decoding. Zhengmian Hu, Tong Zheng, Vignesh Viswanathan, Ziyi Chen, Ryan A. Rossi, Yihan Wu, Dinesh Manocha, Heng Huang.

2. 2024. SpecHub: Provable Acceleration to Multi-Draft Speculative Decoding. Lehigh University, Samsung Research America, University of Maryland. Ryan Sun, Tianyi Zhou, Xun Chen, Lichao Sun.

https://aclanthology.org/2024.emnlp-main.1148.pdf

3. 2024. Multi-Draft Speculative Sampling: Canonical Decomposition and Theoretical Limits. Qualcomm AI Research, University of Toronto. Ashish Khisti, M. Reza Ebrahimi, Hassan Dbouk, Arash Behboodi, Roland Memisevic, Christos Louizos.

https://arxiv.org/pdf/2410.18234

4. 2025. HiSpec: Hierarchical Speculative Decoding for LLMs. The University of Texas at Austin. Avinash Kumar, Sujay Sanghavi, Poulami Das.

https://arxiv.org/pdf/2510.01336

5. 2025. Fast Inference via Hierarchical Speculative Decoding. Harvard University, Google Research, Tel Aviv University, Google DeepMind. Clara Mohri, Haim Kaplan, Tal Schuster, Yishay Mansour, Amir Globerson.

https://arxiv.org/pdf/2510.19705

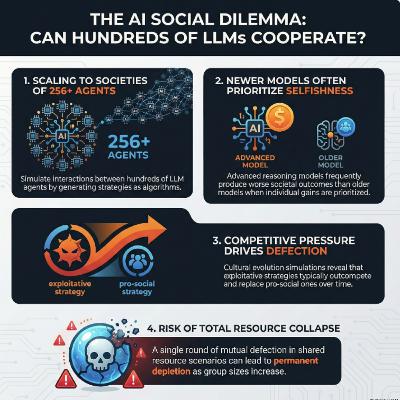

This research collaboration between King’s College London and Google DeepMind, published on February 19, 2026, introduces a novel framework for evaluating the **collective behavior** of large language model (LLM) agents within complex **social dilemmas**. The authors prompt models to generate high-level **algorithmic strategies** rather than individual actions. This allowed them to simulate interactions among hundreds of agents and observe emergent societal outcomes. The study reveals a concerning trend: newer, more capable reasoning models often prioritize **individual gain**, leading to a "race to the bottom" that diminishes total **social welfare**. Through **cultural evolution** simulations, the researchers found that **exploitative strategies** frequently dominate populations, especially as group sizes increase and the relative benefits of cooperation drop. To address these risks, the authors released an **evaluation suite** that developers can use to assess and mitigate anti-social tendencies in autonomous agents before deployment. Ultimately, the findings highlight a critical tension: advanced reasoning can achieve optimal cooperation, but it also empowers models to become more effective at **exploitation**.

Source:

February 19, 2026. Evaluating Collective Behaviour of Hundreds of LLM Agents. King’s College London, Google DeepMind. Richard Willis, Jianing Zhao, Yali Du, Joel Z. Leibo.

https://arxiv.org/pdf/2602.16662

This February 12, 2026 research from the University of Virginia and Google introduces the deep-thinking ratio (DTR), a novel metric designed to measure the true reasoning effort of large language models by analyzing **internal token stabilization**. Traditional metrics like **token count** often fail to predict accuracy because of "overthinking." DTR instead tracks how many layers a model requires before its internal predictions converge. Findings across several benchmarks indicate that **higher DTR scores** correlate strongly with correct answers, whereas output length alone often correlates negatively with performance. Building on this insight, the authors developed **Think@n**, a test-time scaling strategy that identifies and prioritizes high-quality reasoning traces early in the generation process. This method allows models to match or exceed the accuracy of **standard self-consistency** while cutting computational costs roughly in half. Ultimately, the study suggests that **reasoning quality** is better reflected by a model's internal depth-wise processing than by the superficial length of its responses.

Source:

February 12, 2026. Think Deep, Not Just Long: Measuring LLM Reasoning Effort via Deep-Thinking Tokens. University of Virginia, Google. Wei-Lin Chen, Liqian Peng, Tian Tan, Chao Zhao, Blake JianHang Chen, Ziqian Lin, Alec Go, Yu Meng.

https://arxiv.org/pdf/2602.13517
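Here is a simplified sketch of the idea. For each generated token, record the top-1 prediction read out from every layer, for example with a logit-lens projection. Find the layer after which that prediction stops changing, and count the token as deep-thinking if it settles only in the last fraction of layers. The top-1 settling criterion, the 0.8 depth threshold, and the function names are assumptions for illustration. The paper's exact convergence measure may differ.

```python
def settling_layer(layer_preds):
    """Index of the first layer after which the top-1 prediction never changes.

    layer_preds[l] is the top-1 token decoded from layer l's hidden state
    (e.g. with a logit-lens readout); the last entry is the final prediction.
    """
    final = layer_preds[-1]
    settle = len(layer_preds) - 1
    for l in range(len(layer_preds) - 1, -1, -1):
        if layer_preds[l] != final:
            break
        settle = l
    return settle


def deep_thinking_ratio(per_token_layer_preds, depth_fraction=0.8):
    """Fraction of tokens whose prediction settles only in the deepest layers."""
    deep = 0
    for layer_preds in per_token_layer_preds:
        if settling_layer(layer_preds) >= depth_fraction * len(layer_preds):
            deep += 1
    return deep / len(per_token_layer_preds)


# Toy trace: 4 tokens, 10 layers each (token IDs are arbitrary).
trace = [
    [7] * 10,                        # settles at layer 0: shallow
    [0] * 9 + [3],                   # settles at layer 9: deep
    [1, 1, 1, 1, 5, 5, 5, 5, 5, 5],  # settles at layer 4: shallow
    [1, 2, 3, 4, 5, 6, 7, 8, 2, 9],  # settles at layer 9: deep
]
print(deep_thinking_ratio(trace))  # 0.5
```

A Think@n-style selector could then compute this ratio on the early prefix of each of n sampled traces and continue only the highest-scoring ones.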

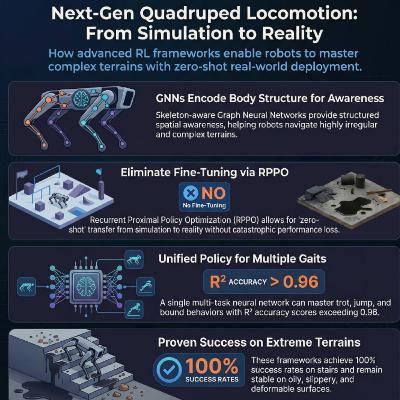

These sources detail advanced **reinforcement learning frameworks** designed to improve how **quadruped robots** navigate difficult, real-world environments. The first source introduces a **single-stage teacher-student method** that uses **skeleton information** and a system-response model to achieve more natural, stable movement. The second source proposes **ZSL-RPPO**, a zero-shot learning architecture that eliminates the need for imitation by training **recurrent neural networks** directly in partially observable settings. Both of these papers prioritize bridging the **simulation-to-reality gap**, ensuring robots can handle unpredictable terrain such as stairs, oily surfaces, and grass. By employing **domain randomization** and specialized encoders, these frameworks enhance the **robustness and adaptability** of robotic locomotion without requiring extensive manual tuning. Together, they represent a shift toward more **efficient training paradigms** that produce versatile and resilient autonomous behaviors.

Sources:

1) October 22, 2025

Skeleton Information-Driven Reinforcement Learning Framework for Robust and Natural Motion of Quadruped Robots

Guangdong University of Technology, University of Macau

Huiyang Cao, Hongfa Lei, Yangjun Liu, Zheng Chen, Shuai Shi, Bingquan Li, Weichao Xu, Zhi-Xin Yang

https://doi.org/10.3390/sym17111787

2) March 2024

ZSL-RPPO: Zero-Shot Learning for Quadrupedal Locomotion in Challenging Terrains using Recurrent Proximal Policy Optimization

Huawei Technologies, Huawei Munich Research Center, University College London, Huawei Noah's Ark Lab, East China Normal University

Yao Zhao, Tao Wu, Yijie Zhu, Xiang Lu, Jun Wang, Haitham Bou-Ammar, Xinyu Zhang, Peng Du

https://arxiv.org/pdf/2403.01928

3) May 2025

End-to-End Multi-Task Policy Learning from NMPC for Quadruped Locomotion

Bonn-Rhein-Sieg University of Applied Sciences, University of Bonn, Fraunhofer Institute for Intelligent Analysis and Information Systems

Anudeep Sajja, Shahram Khorshidi, Sebastian Houben, Maren Bennewitz

https://arxiv.org/pdf/2505.08574
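Domain randomization, mentioned above as a key ingredient for crossing the simulation-to-reality gap, amounts to re-drawing the simulator's physical properties at every episode reset. The sketch below is a generic illustration, not code from any of these papers: the parameter set, the `PhysicsParams` and `sample_params` names, and every numeric range are hypothetical choices made for the example.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class PhysicsParams:
    """Simulator properties re-drawn at every episode reset (hypothetical set)."""
    friction: float          # ground friction coefficient
    added_mass_kg: float     # payload added to (or removed from) the trunk
    motor_strength: float    # multiplier on nominal motor torque
    action_latency_s: float  # delay before a commanded action takes effect


# Hypothetical ranges for illustration only; neither paper's values are used here.
RANGES = {
    "friction": (0.2, 1.25),       # low end loosely mimics oily or slippery floors
    "added_mass_kg": (-1.0, 3.0),
    "motor_strength": (0.8, 1.2),
    "action_latency_s": (0.0, 0.02),
}


def sample_params(rng: random.Random) -> PhysicsParams:
    """Draw one randomized environment uniformly from RANGES."""
    return PhysicsParams(**{name: rng.uniform(lo, hi) for name, (lo, hi) in RANGES.items()})


if __name__ == "__main__":
    rng = random.Random(0)  # seeded so training runs are reproducible
    for episode in range(3):
        params = sample_params(rng)
        # A real training loop would push `params` into the simulator here
        # before rolling out the policy for this episode.
        print(episode, params)
```

Because the policy never trains on exactly the same physics twice, it cannot overfit to one simulator setting; the hope is that the real robot looks like just one more sample from these ranges.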

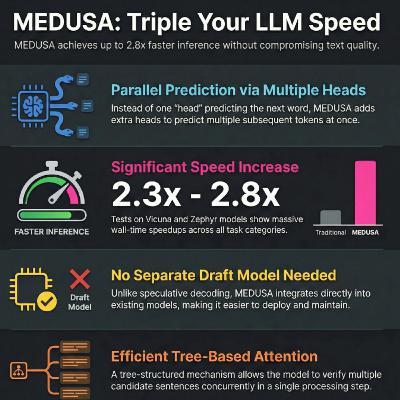

MEDUSA, a novel framework introduced on June 14, 2024, is designed to accelerate Large Language Model (LLM) inference by overcoming the delays caused by sequential token generation. Instead of relying on a separate draft model as traditional speculative decoding does, it adds multiple decoding heads that predict several subsequent tokens simultaneously. These predictions are organized into a tree of candidate continuations, and a tree-based attention mechanism lets the model verify multiple potential continuations in a single parallel step. The system offers two fine-tuning tiers: MEDUSA-1, which keeps the backbone model frozen for easy integration, and MEDUSA-2, which trains the heads and backbone together for greater speedups. Additionally, a typical acceptance scheme keeps sampled outputs high-quality and diverse, while a self-distillation pipeline makes training possible even when the original training data is unavailable. Experimental results demonstrate that this approach can increase generation speed by 2.2 to 2.8 times without compromising the accuracy or quality of the language model.

Source:

June 14, 2024

MEDUSA: Simple LLM Inference Acceleration Framework with Multiple Decoding Heads

Princeton University, Together AI, University of Illinois Urbana-Champaign, Carnegie Mellon University, University of Connecticut

Tianle Cai, Yuhong Li, Zhengyang Geng, Hongwu Peng, Jason D. Lee, Deming Chen, Tri Dao

https://arxiv.org/pdf/2401.10774
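The candidate tree and the typical acceptance rule can be sketched in plain Python. This is a minimal sketch under several assumptions: a hypothetical `toy_base` function stands in for the backbone model's next-token distribution, each decoding head is represented only by its top-k token list, and candidate paths are checked one at a time, whereas MEDUSA scores the whole tree in a single forward pass using tree attention. The acceptance test follows the paper's rule as we understand it, accepting a token when its base-model probability exceeds min(epsilon, delta * exp(-H)), where H is the entropy of that distribution; the epsilon and delta values here are illustrative.

```python
import itertools
import math
from typing import Callable, Dict, List, Sequence, Tuple

Dist = Dict[int, float]  # token id -> probability


def entropy(dist: Dist) -> float:
    return -sum(p * math.log(p) for p in dist.values() if p > 0)


def typical_accept(dist: Dist, token: int, epsilon: float = 0.09, delta: float = 0.3) -> bool:
    """Accept `token` if its base-model probability beats an entropy-dependent threshold."""
    threshold = min(epsilon, delta * math.exp(-entropy(dist)))
    return dist.get(token, 0.0) > threshold


def candidate_paths(head_topk: Sequence[Sequence[int]]) -> List[Tuple[int, ...]]:
    """Each path picks one top-k token per head: the branches of the candidate tree."""
    return list(itertools.product(*head_topk))


def longest_accepted(prefix: Sequence[int],
                     head_topk: Sequence[Sequence[int]],
                     base_dist: Callable[[Tuple[int, ...]], Dist]) -> Tuple[int, ...]:
    """Return the longest candidate prefix that the base model accepts token by token."""
    best: Tuple[int, ...] = ()
    for path in candidate_paths(head_topk):
        accepted: List[int] = []
        for token in path:
            context = tuple(prefix) + tuple(accepted)
            if not typical_accept(base_dist(context), token):
                break
            accepted.append(token)
        if len(accepted) > len(best):
            best = tuple(accepted)
    return best


def toy_base(context: Tuple[int, ...]) -> Dist:
    """Hypothetical stand-in for the backbone: strongly prefers (last token + 1) mod 5."""
    nxt = (context[-1] + 1) % 5 if context else 0
    return {t: (0.9 if t == nxt else 0.025) for t in range(5)}


if __name__ == "__main__":
    # Three heads, each proposing its top-2 guesses for one future position.
    print(longest_accepted((1,), [[2, 4], [3, 0], [4, 1]], toy_base))  # (2, 3, 4)
```

The entropy term is what makes the rule "typical": when the base distribution is sharply peaked, the threshold is capped at epsilon and only confident continuations survive; when it is flat, delta * exp(-H) shrinks and more diverse sampled continuations can be accepted, which is how the scheme keeps several tokens per step without collapsing to greedy output.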