BeyondWords Blog

Immersion reading (or immersive reading) is the act of following along with text while listening to audio narration. In other words, synchronized reading and listening.

(You can try it out for yourself by playing this article.)

Google Trends shows interest in immersion reading has surged in recent months:

Interest peaked in February 2026 when Audible launched its Read & Listen feature, which highlights the corresponding ebook text as the audiobook plays.

Kindle has offered a similar feature for years, but this new launch pushes immersion reading further into the mainstream and makes it more accessible.

It also reflects a broader shift in how people consume content:

Audiences don't always want to choose between reading and listening—they expect both to work seamlessly together.

Why enable immersion reading on articles?

Enabling immersion reading on articles means tapping into a behavior that's gaining momentum and has potential to drive meaningful value for your business.

Audible says customers who read and listen simultaneously are among their most engaged users.

That's not surprising.

Combining visual and auditory input helps people stay focused, absorb more, and move through content with less effort. Nine in ten Audible customers agree: immersion reading improves content retention and comprehension.

So, immersion readers are likely to spend longer with your journalism and get more from your stories.

Adoption is likely to emerge across several audiences, such as:

Book lovers bringing read-and-listen habits with them

Younger audiences accustomed to watching subtitled content

News subscribers keen to stay more focused in a world of distractions

Second-language readers who benefit from seeing and hearing content together

Readers who need more accessible ways to absorb the news

Listeners engaging with longer or more complex stories

By offering audio, text, and a read-along feature, you cater to a wide range of needs and preferences. Whether your audience is multitasking or taking time to focus, you're giving them a way to engage that fits.

And delivering a read-listen experience is easier than you think.

How to enable immersion reading on articles

BeyondWords makes it simple for publishers to enable immersion reading on their websites and apps.

Our platform automatically turns articles into audio, embeds a player alongside the text, and highlights each word as it's spoken—so users can read along as they listen.
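To illustrate the idea behind word-level highlighting, here is a minimal sketch of how a player could decide which word to highlight at a given playback position. It assumes each word comes with start and end timestamps; the `WordTiming` type and `active_word_index` function are hypothetical names for illustration, not part of the BeyondWords platform.

```python
from bisect import bisect_right
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class WordTiming:
    text: str
    start: float  # seconds from the start of the audio (assumed format)
    end: float


def active_word_index(timings: List[WordTiming], position: float) -> Optional[int]:
    """Return the index of the word being spoken at `position`, or None in a pause."""
    starts = [t.start for t in timings]
    # Find the last word whose start time is at or before the playback position.
    i = bisect_right(starts, position) - 1
    if i >= 0 and position < timings[i].end:
        return i
    return None


timings = [
    WordTiming("Immersion", 0.0, 0.6),
    WordTiming("reading", 0.6, 1.1),
    WordTiming("works", 1.3, 1.7),
]
```

A player would call something like this on each playback time update and move the highlight whenever the returned index changes.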

Setup takes minutes. Create a project, embed the player script, and you're ready to go.

You can tailor the immersion reading experience to match your publication's style, with customizable word and paragraph highlight colors for light and dark modes, as well as flexible player styling.

BeyondWords also makes it easy for readers to control playback. Click-to-play lets users start listening from any paragraph, while a sticky player keeps controls accessible as they scroll down the page.

Audio narration is powered by hyper-realistic ElevenLabs voices or voice clones, so every article sounds natural, engaging, and on-brand.

Together, these features turn every article into a seamless read-and-listen experience that keeps audiences engaged from start to finish.

Want to enable immersion reading on your website or app?

Book a demo with our team.

Apps are where news publishers build their most valuable audience relationships, and AI audio is playing a growing role in their success.

Leading publishers like The Washington Post, The Guardian, and Business Insider are using AI audio to deepen in-app engagement in a way that's scalable and cost-effective. And they're deploying it in increasingly creative ways.

Expanding usable moments

Listening is already entrenched in mobile behavior. Music, podcasts, and audiobooks fill commutes, workouts, and quiet moments throughout the day.

AI audio helps your app compete for those same moments within daily routines.

Publishers including The Guardian and the Wall Street Journal let users listen to almost any story inside their apps. They can switch between listening and reading without friction, easily choosing the format that best suits their needs and preferences.

This means audiences can more easily fit news into their day. As a result, they're more likely to subscribe and stay subscribed, or to generate more ad impressions.

Making individual articles playable is the foundation for building audio engagement. But the real magic happens when listening extends beyond a single story.

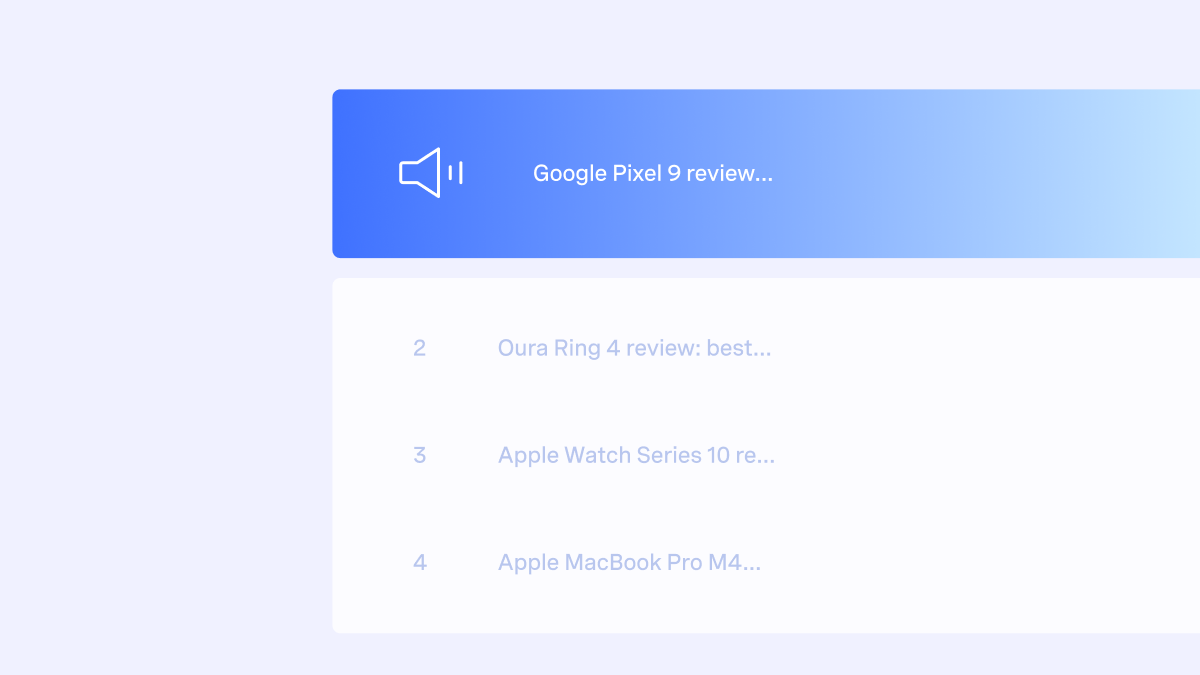

Turning single plays into long listening sessions

Many publishers use playlists and audio queueing to encourage exploration and longer listening sessions.

On The Washington Post and Bulletin apps, starting one article automatically generates a queue of related stories. When one story finishes, the next one begins, pulling listeners into deeper sessions than they originally planned.

These apps also let users start editor-curated playlists and build custom audio queues, so they can intentionally engage in longer listening sessions.

For example, listeners can queue up articles to play on their commute home. Or start a themed playlist before heading out for a run.

By giving readers the option to step away from the screen while staying connected with your app, you unlock new patterns of news consumption. Patterns that are key to building long-term loyalty.

And if your app integrates with the user's device, driving sustained engagement becomes even easier.

Making audio mobile-native

Native device integration allows your audio to function as part of a smartphone's built-in playback system, so listening works the way users expect from any established audio app.

For example:

Playback continues when the screen locks or the app is minimized

Stories appear in lock-screen controls and system control panels

Playback can be controlled using familiar on-screen controls

Readers can pause and resume with headphones

Audio works seamlessly in vehicles through CarPlay and Android Auto

Incoming calls automatically pause playback and resume afterwards

When audio works this way, users can move between tasks without breaking playback. This continuity reduces friction, supports longer sessions, and makes your audio journalism feel like a first-class mobile experience—not an add-on feature.

It also keeps your brand present beyond the app itself, increasing the likelihood that users return and press play again.

Tapping into audio stickiness

For many publishers, in-app audio isn't just embedded in articles or tucked away in menus—it's a prominent destination in its own right.

Apps like The Washington Post and Bulletin feature "Listen" tabs, bringing audio to the center of the news experience rather than treating it as a secondary format.

That prominence matters. According to the Pugpig Media App Report 2025, users who engage with audio in publisher apps spend nearly twice as much time as those who don't.

That means more advertising revenue, more subscription conversions, and stronger subscription loyalty.

Some publishers accelerate in-app audio discovery and adoption even further by:

Providing personalized audio recommendations based on user data

Sending push notifications at key audio engagement moments

Introducing audio during the app onboarding process

Creating an audio-native experience also me...

This post is narrated by Caroline Piercy's Instant voice clone, powered by ElevenLabs through BeyondWords.

ElevenLabs offers more than 10,000 AI voices across a range of styles, tones, and personalities.

If you're looking for pre-made voices to narrate your articles, the abundance of choice quickly becomes friction.

After all, not every voice lives up to newsroom standards.

That's why, when adding ElevenLabs voices into BeyondWords, we didn't just add the full library—we handpicked the very best options for news narration.

Key criteria for news narration

First, we excluded ElevenLabs voices that use "Live Moderation".

News publishers regularly cover sensitive or controversial topics, and automated moderation systems aren't always calibrated for journalism. By removing moderated voices from our selection, we help ensure legitimate reporting isn't inadvertently flagged or restricted.

Second, we filtered out voices with short notice periods, so publishers get greater stability once a voice is selected.

The final filter we applied was for "High-Quality" voices.

These voices have been reviewed by the ElevenLabs team to meet professional standards for clarity, stability, and tone—all essential to doing publishers' journalism justice.
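The three filters above can be sketched as a simple predicate. This is an illustration only: the `Voice` fields and the 365-day threshold are assumptions made for the example, not ElevenLabs or BeyondWords data structures or actual cut-off values.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Voice:
    # Hypothetical fields for illustration; not a real API schema.
    name: str
    live_moderation: bool
    notice_period_days: int
    high_quality: bool


MIN_NOTICE_DAYS = 365  # assumed threshold, chosen only for this example


def passes_initial_filters(voice: Voice, min_notice_days: int = MIN_NOTICE_DAYS) -> bool:
    """Keep voices that are unmoderated, stable, and marked high quality."""
    return (
        not voice.live_moderation
        and voice.notice_period_days >= min_notice_days
        and voice.high_quality
    )


def shortlist(voices: List[Voice]) -> List[str]:
    """Return the names of voices that survive all three filters."""
    return [v.name for v in voices if passes_initial_filters(v)]


candidates = [
    Voice("Anchor", live_moderation=False, notice_period_days=730, high_quality=True),
    Voice("Moderated", live_moderation=True, notice_period_days=730, high_quality=True),
    Voice("ShortNotice", live_moderation=False, notice_period_days=30, high_quality=True),
    Voice("Unreviewed", live_moderation=False, notice_period_days=730, high_quality=False),
]
```

Automated filters like these only narrow the field; the listening stage that follows is still a human judgment.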

Once we'd applied our initial filters, it was time to start listening.

Curation through careful listening

Our team listened to hundreds of remaining ElevenLabs voices to assess their suitability for article narration, testing them with long-form news content.

We evaluated voices against the qualities publishers consistently tell us matter most: naturalness, clarity, and authority.

This meant removing:

character voices, like those made for cartoons;

novelty voices, like those created for ASMR content;

voices that didn't meet our studio-level quality standards; and

voices with overly emotional or flat delivery.

Where we didn't have in-house language expertise, we brought in native speakers to share their opinions on the voices. We also gathered feedback from some of our publishers.

The result is a selection of over 200 news-ready voices across dozens of languages and accents*. All available for immediate use through the BeyondWords platform.

Many of these voices are multilingual, which means they're capable of delivering natural narration across various languages. This is particularly useful for publishers who want to maintain consistency across markets.

Multilingual voices can carry traces of their native accent, but they're often indistinguishable from native voices. It's worth exploring the full range before narrowing your selection.

Tools for faster voice selection

To make voice selection even easier, we added voice previews built around real news-style openings. These give you a realistic sense of how each voice will perform in context.

We also set new default voices to give you a convenient starting point across our most-used languages.

You can preview some of our favorite ElevenLabs voices below:

If you'd like to get tailored voice advice from our team, we're happy to help.

Finding the right voices for every publisher

We have vast experience working with publishers to find the right voices for their brands.

Just recently, we helped a fashion title adopt a female, regionally accented voice that feels authentic to its readership and aligns seamlessly with its style.

Whether you have a detailed brief or prefer to rely on instinct, we'll guide you toward voices that align with your editorial identity. And collaborate to find the right fit for every use case.

If there's a specific ElevenLabs voice you'd like to use that isn't part of our curated selection, we'll add it for you. You can also create ElevenLabs voice clones.

Ready to find your publication's voice? Book a demo with our team.

Outside Interactive now uses BeyondWords to deliver ElevenLabs narration across multiple editorial titles, including Outside Online, Velo, and Backpacker.

Once an article is published, an audio version can be generated and embedded with BeyondWords, so subscribers can listen on the move or read and listen simultaneously.

Quality voices for quality journalism.

After reviewing a range of AI voice providers, Outside gravitated toward ElevenLabs voices for their quality and realism. For a brand built on immersive, human-led storytelling, anything less wouldn't cut it.

Rachel Risko, Lead Product Manager at Outside, explains: "Our long-form features are crafted pieces of journalism—they deserve narration that sounds natural and engaging, not robotic. ElevenLabs produces voices that can carry the emotional weight of a 5,000-word adventure narrative without listener fatigue.

"When someone's on a long trail run listening to one of our stories, the voice needs to feel like a companion, not a machine."

You can listen to an extract of one of Outside's audio articles below:

"It's the kind of immersive, character-driven narrative that works beautifully in audio," says Risko.

Adopting ElevenLabs, without the complexity.

ElevenLabs set the benchmark for voice quality, but Outside knew building the surrounding infrastructure—from CMS integration to the audio player—would be complex and time-consuming.

That's when they discovered BeyondWords.

BeyondWords allows Outside to use ElevenLabs voices as part of an automated publishing workflow.

After a simple, one-time setup of the WordPress plugin and a dedicated project for each title, audio versions are created and embedded into articles.

There are no extra steps for editors, and minimal engineering overhead. So, Outside can scale ElevenLabs audio without disrupting existing workflows.

Using audio to drive subscriptions.

Audio plays a direct role in Outside's subscription strategy, offered as one of the many exclusive benefits to Outside+ subscribers.

For non-subscribers, the BeyondWords Player displays a "Subscribe to listen" prompt.

Clicking play takes readers to the sign-up page, where audio is positioned as a premium benefit.

For subscribers, the audio player displays a "Listen and enjoy this subscriber-only benefit" message that reinforces exclusivity.

By giving subscribers flexibility in how they consume content, Outside can foster engagement habits that increase satisfaction and reduce churn.

Outside also uses audio to support its brand identity. With a mission to help people get outdoors, the company believes that time outside is transformational and essential to human health, happiness, and connection for everyone. By turning its editorial content into a premium listening experience, Outside lets its audience enjoy the stories that inspire them while on the go.

"Our readers are active people—they're out hiking, running, driving to trailheads, or commuting to their next adventure. Audio lets them engage with our long-form storytelling during moments when reading isn't practical," explains Risko.

"It's about meeting our audience where they are: in motion, outdoors, living the lifestyle we celebrate."

Add ElevenLabs narration to your publication.

Want to offer high-quality AI narration without building and maintaining an audio stack?

BeyondWords lets you deploy ElevenLabs voices through a fully automated, publisher-ready workflow—across websites, apps, and feeds.

Book a demo to see it in action.

Audio articles get more engagement when they're presented and promoted thoughtfully.

In this post, we'll share audio article best practices informed by our close partnerships with leading publishers. So you can get more readers to click play—and keep listening.

Here are 11 ways to boost listener engagement:

1. Choose the right voice

Choosing the right voice for your audio articles reduces friction, strengthens trust, and makes the listening experience more enjoyable. So people listen for longer and come back for more.

Many publishers choose AI voices because they're easy to scale and deliver a consistent listening experience across large volumes of content.

These are key factors to consider when choosing an AI voice:

Naturalness and clarity: The voice should sound lifelike, with smooth pacing, natural emphasis, and accurate pronunciation, so it never distracts from the story.

Brand alignment: The voice should feel true to your publication's character. Consider whether the accent reflects the communities you serve and whether the voice's personality fits your newsroom.

Tone and editorial fit: The delivery should suit the content. For example, a steady tone helps serious reporting feel more credible. You may want to use different voices across different categories.

At BeyondWords, we curate AI voices from ElevenLabs and Azure to give you the best possible balance of quality and variety. And we can help you find the best options for your publication.

That said, we recommend voice cloning above anything else.

Clone your journalists' voices

Cloning your journalists' voices is one of the most effective ways to make your AI audio feel authentic, distinctive, and closely aligned with your newsroom's identity. It can deepen audience connection, encouraging listeners to stay engaged for longer and return more often.

If your newsroom already has recognizable voices, such as podcast hosts, cloning lets you extend the value of their voices without adding to their workload.

Publishers like News Corp Australia, SPH Media, Schibsted, and La Nación have worked with BeyondWords to create Professional voice clones for their publications.

2. Customize the audio player

Customizing the player is one of the simplest ways to make audio feel more integrated with your website or app and increase playback rates.

If you're using the BeyondWords Player, you can choose from Small, Standard, or Large player designs. Each offers a different balance between visibility and functionality.

Whatever player size you choose, update the background, icon, and text colors so they fit naturally with your light and dark mode designs. Aim for strong contrast to ensure accessibility and make the play button easy to spot.

If you prefer full control over the design and behavior of your player, you can build custom interfaces using our JavaScript, iOS, and Android SDKs.

3. Position the player (and widget) strategically

We recommend positioning the audio player directly above the featured image or first paragraph of your article, so it's easy for prospective listeners to discover.

Also enable the BeyondWords Player widget, so users can access the player as they scroll down the page. Alternatively, build a custom solution for your website or app.

4. Enable playback-by-paragraph

JFM boosted audio engagement rates tenfold after enabling BeyondWords' playback-by-paragraph feature, which lets readers click anywhere in an article to start (or stop) listening.

The feature lowers the barrier to audio by making it easy to try out, while also giving readers more control over how they consume a story. Together, these benefits can increase dwell time and improve reader satisfaction.

5. Use paragraph highlighting

With paragraph highlighting, the section of text being read aloud is highlighted in a color of your choice. This reduces the friction of switching between reading and listening, improves comprehension, and makes long-form articles easier to follow.

Combined with playback-...

The most engaging video and audio content starts with stories tailored to the format.

That's why we're introducing script templates.

BeyondWords script templates automatically transform your articles into specialized video and audio scripts, adapting the length, structure, and style to match how people watch and listen.

These scripts then continue through your custom workflow to generate on-brand video and audio.

It's the easy, effective way to repurpose written journalism for modern audiences.

Use our pre-made storytelling templates.

We've pre-built a set of script templates you can use straight away.

For instance:

Summary converts articles into short-form scripts ideal for engaging users who want quick updates on the move.

Hook and Payoff generates high-impact videos that are great for video feeds and social platforms, where grabbing attention is the first challenge.

Presenter Voiceover works particularly well when paired with cloned newsroom voices, as it enables warmer, more personable narration across your video output.

All templates are designed to preserve the tone and meaning of the original article, so the resulting video and audio reflect your journalism accurately.

You can find the full selection of pre-made script templates—or create your own—in your BeyondWords dashboard.

Create custom script templates.

With custom script templates, you can define your own instructions for how articles should transform into audio and video scripts.

This allows you to align with an existing video content strategy, cater to specific video use cases, and experiment with new storytelling approaches.

Want to use a different template based on brand, section, or platform? Organize your content into projects and create a template for each.

If needed, you can override the default on a per-article basis.
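As a rough sketch of how a per-project default with a per-article override could resolve, consider the lookup below. The project names and the `resolve_template` helper are hypothetical; only the template names come from the list of pre-made templates above.

```python
from typing import Dict, Optional

# Hypothetical mapping of projects to their default script templates.
PROJECT_TEMPLATES: Dict[str, str] = {
    "sports": "Summary",
    "features": "Presenter Voiceover",
    "social": "Hook and Payoff",
}


def resolve_template(project: str, article_override: Optional[str] = None) -> str:
    """Use the article-level override when one is set, else the project default."""
    if article_override is not None:
        return article_override
    return PROJECT_TEMPLATES[project]
```

Keeping the default at project level means most articles need no per-article decision at all.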

Start extracting more value from every story.

BeyondWords turns articles into format-native video and audio stories at scale. So you can improve engagement, reach new audiences, and boost advertising revenue—without adding work for your team.

Here's a quick overview of how audio and video automation works:

1. Integrate BeyondWords with your website and app.

2. Choose or create a script template to control how articles are transformed into scripts.

3. Choose or create a style template to define the visual look of your videos.

4. Select a voice or create a voice clone for consistent, on-brand narration.

5. Configure background music, distribution, monetization, and more.

6. Publish as normal and let audio and video versions appear automatically.

Prefer to keep humans in the loop? You can create, review, edit, and manage content through your BeyondWords dashboard.

To see the full workflow in action, book a demo with our team.

Article-to-audio and video automation only works if you extract the right content first.

For publishers, this step is a constant source of friction. Modern news pages are dynamic, JavaScript-heavy, and packed with non-editorial elements, but most content extraction methods still rely on fixed HTML rules tied to page structure.

This can result in navigational elements, ads, and other unwanted elements appearing in audio and video assets. Or your team having to spend time specifying content manually.

That's why we built a more reliable content extraction method.

Introducing automatic extraction

BeyondWords now offers automatic extraction, which is powered by AI. Our model interprets the context of a page and accurately identifies which text and images actually belong to the article, even when layouts vary or content is rendered dynamically.

The extracted content then flows through the rest of the BeyondWords workflow to generate audio and video, based on your settings.

Automatic extraction delivers practical benefits for publishers:

Cleaner, more faithful audio and video versions of your articles;

Reduced need for per-site configuration and manual tuning;

Better adaptability across layouts, frameworks, and CMSs; and

More reliable scaling across diverse publisher environments.

Solving the content extraction problem took a lot of engineering effort. We evaluated several tools, compared benchmarks, and iterated several times to finally arrive at a solution that yields high-quality results.

Optional filters and metadata controls are available for publishers who want to refine what content is extracted, but most sites won't need them.

We also added controls that allow publishers to limit which domains the workflow is allowed to run on and to set HTTP headers to bypass paywalls, so our servers always have access to the necessary content.
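For readers curious what these two controls amount to, here is a minimal sketch of a domain allowlist check combined with custom request headers. The domain list, the `X-Paywall-Token` header name, and both helper functions are assumptions made for illustration; they do not describe how BeyondWords implements these settings.

```python
from typing import Optional, Set
from urllib.parse import urlsplit
from urllib.request import Request

# Hypothetical settings for illustration only.
ALLOWED_DOMAINS: Set[str] = {"example.com", "news.example.org"}
EXTRA_HEADERS = {"X-Paywall-Token": "replace-me"}


def is_allowed(url: str, allowed: Set[str] = ALLOWED_DOMAINS) -> bool:
    """True when the URL's host is an allowed domain or one of its subdomains."""
    host = (urlsplit(url).hostname or "").lower()
    return any(host == d or host.endswith("." + d) for d in allowed)


def build_request(url: str) -> Optional[Request]:
    """Build a fetch request with the extra headers, or None for blocked domains."""
    if not is_allowed(url):
        return None
    return Request(url, headers=EXTRA_HEADERS)
```

Matching on the full host or a dot-prefixed suffix avoids treating lookalike hosts such as `example.com.evil.net` as allowed.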

Automatic extraction in action

To give you an example, we ran a media-rich news article through BeyondWords using the automatic extraction setting.

The screenshot below highlights which parts of the article were used to generate the audio and/or video versions—and which parts were automatically excluded.

BeyondWords accurately identified the editorial text and images for inclusion in the audio and video versions.

Unwanted elements (such as the navigation menu, advertising banner, author byline, key points, call-to-action button, image caption, content sidebar, and footer) were rightly excluded.

And this was all done automatically.

The next step: Developing a change detection algorithm

Improving initial extraction only solves part of the problem. For audio and video automation to meet newsroom needs, article updates must be detected and reflected accurately.

A potential solution is to repeatedly fetch the page and rerun the entire extraction and AI pipeline, but this method is inefficient and could be unstable. It can also introduce unnecessary costs—especially when changes are minor or purely superficial.

To avoid this, we developed a change detection algorithm. This compares newly fetched HTML with the content extracted previously to determine what has changed.

So, audio and video stay in sync with article edits, without manual intervention or excessive processing.
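To make the general idea concrete, here is a simplified sketch of paragraph-level change detection. It is not the BeyondWords algorithm: it assumes the article text has already been pulled out of the newly fetched HTML as a list of paragraphs, and it uses Python's standard `difflib` to compare that list with the previously extracted one. Whitespace-only differences are ignored, which stands in for "purely superficial" changes.

```python
import difflib
import re
from typing import List, Tuple

Change = Tuple[str, List[str], List[str]]


def normalize(paragraph: str) -> str:
    """Collapse runs of whitespace so layout-only edits do not count as changes."""
    return re.sub(r"\s+", " ", paragraph).strip()


def changed_paragraphs(previous: List[str], current: List[str]) -> List[Change]:
    """Return (operation, old paragraphs, new paragraphs) for each changed region."""
    old = [normalize(p) for p in previous]
    new = [normalize(p) for p in current]
    matcher = difflib.SequenceMatcher(a=old, b=new, autojunk=False)
    changes: List[Change] = []
    for tag, i1, i2, j1, j2 in matcher.get_opcodes():
        if tag != "equal":
            changes.append((tag, old[i1:i2], new[j1:j2]))
    return changes


before = ["Intro.", "Old  figure: 10.", "End."]
after = ["Intro.", "Old figure: 12.", "End."]
```

With a result like this, only the affected paragraphs would need regenerating, and an empty result means nothing needs to be reprocessed.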

Built to fit your publishing stack

Automatic content extraction is built directly into our Magic Embed integration, making it easy to enable audio and video across your publication.

Add a small script to your website, then let BeyondWords handle the rest—including content extraction, audio and video generation, distribution, monetization, and analytics. You can manage settings and content centrally through your BeyondWords dashboard.

Want to see how it works in practice? Book a demo today.

Vertical video is moving from social platforms into the heart of news products.

Over the past year, publishers like The New York Times, The Economist, and CNN have introduced vertical-video feeds across their homepages and apps. These new "Watch" tabs signal a clear shift: social-style video is becoming a core output for modern newsrooms.

The opportunity.

Social platforms like Instagram and TikTok have made short-form vertical video a daily habit for millions. 73% of U.S. consumers watch short-form video multiple times per day, according to Media.net. And 90% of consumers are open to seeing these formats on publisher sites.

This presents a clear opportunity for newsrooms to bring a popular format into their own ecosystems, where engagement can be owned and monetized.

INMA's Advertising Initiative Lead, Gabriel Dorosz, says short-form vertical video is "the biggest ad opportunity for news." Advertisers are expected to redirect US$146 billion from display advertising into short-form video advertising by 2028.

Publishers are also using vertical video to drive subscription revenue.

The Economist's paid subscribers have doubled their vertical video consumption over the past year, according to Nieman Lab, helping the publisher to tackle the "unread guilt factor" that so often drives subscription cancellations.

The challenge.

To deliver a TikTok-style experience inside your news app, you need a constant supply of timely vertical video.

Traditional production workflows simply can't keep pace.

Each clip demands scripting, recording, editing, approvals, and coordination—an intensive chain of tasks that makes high-volume output hard to sustain. And by the time a video is ready, the story's moment may have already passed.

Costs add up quickly, too. Relying on traditional video production makes it tough to generate a return on investment.

Newsrooms need a fast, scalable way to turn articles into vertical video.

That's where BeyondWords comes in.

The solution.

BeyondWords automatically converts your articles into vertical videos and delivers them directly into your websites, apps, and feeds. So you can publish videos as quickly and consistently as you publish stories.

It's the simple, commercially smart way to satisfy modern media habits.

Videos can be fully customized to fit your brand and audience. You can:

turn full articles into video or generate video-specific scripts using AI;

choose an ElevenLabs or Azure voice, or clone your own voices for narration;

auto-insert relevant images and videos from Getty or your own DAM system; and

customize the visual style of your videos, including captions and branding.

Our platform can also generate horizontal videos and audio versions from the same article, allowing you to repurpose stories across multiple formats and channels without additional work.

Just integrate, configure, then let BeyondWords do the rest.

Want to know more? Book a demo with our team.

Audiences want it. Platforms reward it. Advertisers pay premiums for it.

Demand for video has never been higher. And now, production moves at newsroom speeds.

BeyondWords automatically converts your written articles into fully produced videos, with distribution, monetization, and analytics built in. So you can capitalize on modern media habits without the cost or complexity of traditional production.

See what's possible

The BeyondWords video generator brings articles to life with hyper-realistic narration, dynamic visuals, engaging captions, background music, waveforms, and built-in branding—all tailored to your publication's style.

BeyondWords even offers a built-in script generator that converts your stories into video-friendly narratives. You can choose from a variety of preset styles or define your own prompts.

You can create vertical and horizontal videos, with automatic asset resizing for each format. And insert independent video clips—such as logo stings, ads, and contextual footage.

These videos started out as standard articles. Now, they're polished assets ready to engage audiences on websites, apps, and third-party platforms.

Built for the economics of modern newsrooms

With BeyondWords, you produce video at a fraction of the usual cost and in a fraction of the usual time.

You also get the distribution and monetization tools needed to extract maximum value with minimum effort. So, you can experiment and scale without adding pressure to your editorial teams—or your bottom line.

Follow publishers like the New York Times and CNN by adding vertical video feeds to your homepage and app. Monetize every article with an ad-supported video version. And reach new audiences on platforms like TikTok, YouTube, and Instagram.

This is your chance to get ahead—and stay ahead—of the video curve.

Want to see BeyondWords video in action?

Book a demo with our team.

As newsrooms expand into AI audio, many face the same strategic choice: Build your own workflow using a general-purpose TTS API, or let BeyondWords handle everything for you.

In other words, should you build or buy your audio stack?

In this post, we'll compare these two AI audio approaches. So you can choose the one that makes sense for your newsroom.

Using a general-purpose TTS API means engineering your own stack and workflow. A service like Polly, Azure, Google, ElevenLabs, Hume, or Cartesia handles audio generation, and you build the surrounding infrastructure. This gives you full control over your stack, but it takes a lot of work.

On the other hand, BeyondWords provides everything you need out of the box (content generation, distribution, analytics, and monetization), giving you a complete workflow with far less engineering effort. We also provide ongoing support and product development.

General-purpose TTS APIs don't extract or clean your content. Your team has to build a system that identifies which parts of each article should be narrated and which should be excluded.

Without proper extraction, elements such as navigation labels, captions, inline components, related links, or HTML fragments may end up in the audio. Most newsrooms solve this by building custom logic to parse article templates, strip out unwanted elements, and deliver only clean editorial content to the API.

This approach works, but it requires maintenance whenever templates or CMS structures change.
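As a sketch of what that custom logic tends to look like, the example below uses Python's standard `html.parser` to keep paragraph text and skip a fixed set of non-editorial tags. The `SKIP_TAGS` set and the `ArticleTextExtractor` class are assumptions for illustration; a real template would need its own rules, which is exactly where the maintenance burden comes from.

```python
from html.parser import HTMLParser
from typing import List

# Assumed set of non-editorial tags; real templates usually need class-based rules too.
SKIP_TAGS = {"nav", "aside", "footer", "figcaption", "script", "style"}


class ArticleTextExtractor(HTMLParser):
    """Collect text from <p> elements that are not inside a skipped tag."""

    def __init__(self) -> None:
        super().__init__()
        self.skip_depth = 0
        self.in_paragraph = False
        self.paragraphs: List[str] = []
        self._buffer: List[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in SKIP_TAGS:
            self.skip_depth += 1
        elif tag == "p" and self.skip_depth == 0:
            self.in_paragraph = True
            self._buffer = []

    def handle_endtag(self, tag):
        if tag in SKIP_TAGS and self.skip_depth > 0:
            self.skip_depth -= 1
        elif tag == "p" and self.in_paragraph:
            text = " ".join("".join(self._buffer).split())
            if text:
                self.paragraphs.append(text)
            self.in_paragraph = False

    def handle_data(self, data):
        if self.in_paragraph and self.skip_depth == 0:
            self._buffer.append(data)


def extract_paragraphs(html: str) -> List[str]:
    parser = ArticleTextExtractor()
    parser.feed(html)
    parser.close()
    return parser.paragraphs
```

Any redesign that moves editorial text out of plain `<p>` tags, or wraps it in one of the skipped tags, silently breaks rules like these.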

BeyondWords offers Magic Embed, Ghost, and WordPress integrations, which automatically extract clean editorial content for narration. This ensures a great listening experience and keeps audio consistent through CMS changes, removing the ongoing maintenance your team would otherwise have to manage.

If you use our API or RSS Feed Importer, you will need to set up and maintain extraction logic. But our support team will be on hand to help you with any issues.

General-purpose TTS APIs like Polly, Azure, Google, ElevenLabs, Hume, and Cartesia offer wide selections of high-quality voices, but these voices are built for various use cases (such as video game characters). So, you may need to sift through dozens to find one suitable for news narration.

Some providers, including ElevenLabs and Azure, also offer voice cloning. The quality, training requirements, and licensing vary by model, so your results depend heavily on which provider you choose.

Once you pick a provider, you're largely locked into its capabilities. If another vendor releases better voices or more advanced cloning, moving over isn't trivial - it typically means updating your integration, rebuilding parts of your workflow, and adapting to a new set of tools.

BeyondWords is built to keep pace with rapid advances in voice technology. We integrate high-performing voices and cloning models from providers like Azure and ElevenLabs, expanding our support for new models as they reach the quality bar our publishers expect.

This gives you long-term flexibility: your audio quality improves as the market evolves, without requiring you to rework your workflow or switch vendors.

We also curate the voices available in the platform to ensure they meet newsroom standards, and we can help you select the right voice for any publication. That expertise leads to stronger sonic branding and saves your newsroom from evaluating an ever-growing list of models.

Most general-purpose TTS APIs perform basic text normalization before generating audio, automatically converting non-standard text like numbers, dates, and abbreviations into their expected spoken forms.

However, these systems aren't context-aware, so they can misinterpret ambiguous elements - for example, reading "$" as "dollars" when the article means "pesos".

These APIs generally let you correct mispronunciations by adding custom pronunciation rules through SSML or a lexicon, but these fixes must be created and maintained manually.
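As a rough illustration of what maintaining those rules by hand looks like, the sketch below wraps known terms in the standard SSML `<sub alias="...">` element. The rule table and the `to_ssml` helper are hypothetical, and each provider supports its own subset of SSML, so treat this as a starting point rather than a drop-in solution.

```python
import re
from xml.sax.saxutils import escape, quoteattr

# Hypothetical pronunciation rules: written form -> spoken form.
PRONUNCIATION_RULES = {
    "SPH": "S P H",
    "YoY": "year over year",
}


def to_ssml(text, rules):
    """Wrap each whole-word rule match in an SSML <sub> element."""
    if not rules:
        return "<speak>" + escape(text) + "</speak>"
    # Longest terms first, so a longer term wins over a shorter one at the same position.
    terms = sorted(rules, key=len, reverse=True)
    pattern = re.compile(r"\b(" + "|".join(re.escape(t) for t in terms) + r")\b")
    parts = []
    # With one capturing group, re.split alternates plain text and matched terms.
    for index, piece in enumerate(pattern.split(text)):
        if index % 2 == 1:
            alias = quoteattr(rules[piece])
            parts.append("<sub alias=" + alias + ">" + escape(piece) + "</sub>")
        else:
            parts.append(escape(piece))
    return "<speak>" + "".join(parts) + "</speak>"


print(to_ssml("Revenue grew 8% YoY at SPH & partners.", PRONUNCIATION_RULES))
```

Every new name, brand term, or abbreviation needs another entry in the table, which is the manual upkeep described above.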

BeyondWords includes ...

We're excited to announce that BeyondWords has partnered with Livingdocs, a leading editorial system, to make it easier for newsrooms to expand into audio and video publishing.

Once connected to Livingdocs, BeyondWords automatically generates audio and video versions of published articles. This allows newsrooms to unlock the potential of multimedia content without adding any steps to their editorial workflow.

Capitalizing on modern media habits

As audiences increasingly switch between reading, listening, and watching, publishers need flexible ways to deliver content across formats. BeyondWords and Livingdocs make that possible, helping publishers reach more readers, boost engagement, and create new revenue opportunities.

Céline Tykve, Head of Business at Livingdocs, says: "Our publishers want to innovate and adapt, but they also need to maintain the speed and simplicity of their workflows. By partnering with BeyondWords, we're helping them expand into audio and video in a way that's efficient, cost-effective, and aligned with how they already publish."

Tailoring audio and video to each publication

Livingdocs publishers can use BeyondWords' voice cloning tools to create their own AI voices or choose from an extensive library of lifelike voices. Custom pronunciation rules and text preprocessing ensure every story is narrated naturally and accurately.

Publishers can also customize the audio player, video captions, and other elements to ensure multimedia formats align seamlessly with their brand identity.

"At BeyondWords, we understand how much Livingdocs publishers care about their brand voice and editorial standards," says Patrick O'Flaherty, BeyondWords Co-Founder and CEO. "We help them maintain that identity and quality as they expand their storytelling into audio and video."

To learn more about how Livingdocs and BeyondWords can work together in your newsroom, get in touch with our team.

Exciting news! ElevenLabs AI voices and voice cloning are now available in BeyondWords.

That means you can get your articles narrated by some of the best-sounding AI voices on the market - at scale - without complicated setups or workflows.

Just pick your favorite voices and let our platform do the rest.

Effortless publishing through BeyondWords

BeyondWords removes the complexity of bringing ElevenLabs voices into your newsroom workflow, because it's purpose-built for publishers.

Our platform transforms your articles into audio and video using your chosen voices, then embeds them into your website or app. There's full support for subscription paywalls and ad monetization, and trackable results through built-in analytics and integrations.

You can connect your CMS to BeyondWords in whichever way works best for your setup - using our API, RSS Feed Importer, Magic Embed, or CMS-specific plugins. And we'll provide all the support you need to integrate and scale.

Voices handpicked for news content

Our team handpicked the ElevenLabs voices featured in BeyondWords, selecting only the very best options for news audio and video. So, there's no need to scroll through hundreds of unsuitable character voices - you'll find a variety of news-ready options in seconds.

These hyper-realistic, emotionally engaging voices span multiple languages and accents and represent a variety of personalities and speaking styles.

You can hear one of our favorite ElevenLabs voices, Archer, when you listen to this article.

We're confident there's a great pre-made voice for every publication. But if you want to take sonic branding a step further, there's voice cloning.

Next-generation voice clones

We're now using ElevenLabs to power our Professional Voice Clones, so you can create custom voices with the same level of quality, realism, and expressiveness as ElevenLabs' pre-made voices.

This is the best way to give your journalism a unique, authentic voice at scale.

Media leaders like News Corp Australia, La Nación, and Schibsted have already used BeyondWords to create journalist voice clones, so they can deliver narrations that sound as real and individual as the reporters behind the stories.

You can create a Professional Voice Clone with as little as 30 minutes of recorded audio.

Smarter speech through preprocessing

BeyondWords uses AI preprocessing to prepare your articles for ElevenLabs voice conversion, ensuring elements like acronyms, abbreviations, and symbols are converted into their correct spoken forms.

For example, the system can normalize "$" into "dollars" or "pesos" based on the article's context, ensuring the voice narrates correctly.

This adds a reliable layer of quality assurance for publishers, reducing the risk of misreads and delivering a better listening experience.

For those rare exceptions, you can create and manage custom pronunciation rules in your BeyondWords dashboard. This means names, brand terms, and other elements always sound the way you want them to.

Book your demo today

By using ElevenLabs voices through BeyondWords' AI audio and video platform, you can bring lifelike narration to your journalism quickly, reliably, and at scale.

To see just how easy it could be, book a demo with our team.

SPH Media has launched BeyondWords-powered AI audio across two major Singapore titles, unlocking a more intuitive way for local language communities to experience the news.

Audiences can now listen to articles on the websites and apps of Berita Harian and Tamil Murasu, Singapore's leading Malay- and Tamil-language newspapers.

Articles are narrated by hyper-realistic AI voice clones of newsroom journalists, giving both publications a familiar, authentic, and trustworthy voice that's true to their identity.

In Singapore, English is the main language people learn to read and write in, while Malay and Tamil are more often used for speaking. So, many Singaporeans are less confident reading news written in their mother tongue.

For SPH's Malay and Tamil newsrooms, audio presented a clear opportunity to make their journalism more accessible and unlock new pathways for habit-building, loyalty, and future revenue.

"Sometimes you read the first paragraph and you're tired because it's not your natural consumption pattern," explains Santhosh Vemisetty, Principal Product Manager at SPH Media. "But if you hear the story, it's simpler. It's your natural way of consuming content."

SPH Media evaluated multiple AI voice providers and found BeyondWords ticked two boxes that mattered most: lifelike quality and editorial control.

"BeyondWords was the first solution where the voices actually felt real, and where we could fine-tune pronunciation to protect editorial integrity.

"But the big differentiator was the CMS. We need human-in-the-loop workflows, not a black-box generator. BeyondWords was the only audio platform that actually aligned with the way we publish news."

SPH Media used our voice-cloning service to create lifelike AI versions of two trusted newsroom voices, giving listeners a familiar, credible way to experience their journalism.

Here's an extract of a Berita Harian article, brought to life with Atiyyah Said's voice clone:

And an extract of a Tamil Murasu article, brought to life by Palanisamy Veerrappan's voice clone:

"We wanted the audio to feel familiar and trustworthy," Santhosh says. "So we cloned the voices of presenters who already host our shows and podcasts. They were the strongest voices we had, and the clones sound incredibly natural."

SPH Media connected BeyondWords to its websites and apps using our Magic Embed integration, ensuring audio articles are generated and embedded automatically.

The audio player provides a continuous playback experience, meaning:

When one audio article ends, the next one plays automatically

When listeners scroll to the next article, the audio switches automatically

Users can also see which part of the article is being read and click anywhere to start listening - a natural fit for the read-and-listen habits many bilingual speakers prefer.

"People who start listening to one article often move on to a second, third, or fourth. Around 20 to 25% continue to the next article," says Santhosh.

SPH Media is also seeing average playback rates for BeyondWords audio that are five times higher than its internal and regional benchmarks.

But Santhosh and his team aren't stopping there: "We're exploring multiple ways to engage our audience better, whether that's through offering multiple voice options or launching curated playlists.

"On the commercial side, we're planning to introduce a sign-up prompt for returning listeners. We're also looking to launch ad-supported video articles with BeyondWords, to see if we can further monetize that audio attention."

Want to know how AI audio and video can drive revenue for your media business? Book a demo with our team.

On October 24, 2025, my co-founder Patrick O'Flaherty and I shared our insights into the future of audio AI for media at INMA's Media Tech and AI Week in San Francisco.

Here are four highlights from our keynote speech, which focused on AI article narration:

1. Brands will sound as distinct as they look

As audiences spend more time with audio and video content, how your publication sounds will become just as vital to its identity as how it looks.

Voice plays the central role in sonic branding. Just as a person's voice tells us so much about who they are and how they feel, an article's voice influences how listeners perceive your publication and connect with your story.

AI voice cloning makes it possible to use unique, on-brand voices at scale.

For example, we helped News Corp Australia clone the voices of dozens of journalists in newsrooms across Australia, including legendary cricket commentator Robert 'Crash' Craddock.

Sonic logos, background music, and sound effects can also make audio articles more distinctive and immersive. This helps audiences to recognize your content across platforms and form lasting connections with your brand.

2. Multimodal consumption will drive multimodal journalism

As multimodal news consumption grows, publishers must craft stories that work fluidly across formats.

Journalists and editors will increasingly consider how their work sounds as well as how it reads, writing in ways that feel more conversational. They may also favor topics that lend themselves well to spoken formats, such as human-interest stories.

AI will help to bridge the gap between formats, automatically adapting the base content for optimal listening, viewing, or reading experiences - for example, by generating spoken summaries of visual charts.

These advancements will give journalists the freedom to focus on their preferred mediums while maximizing their stories' impact and reach.

3. Audio will become integral to product design

Audio is too often an afterthought in product design - something bolted onto text-first interfaces rather than built in from the start.

But that's changing.

The future lies in audio experiences that are intrinsic to product design, treated as a core part of the user experience rather than an optional add-on layer.

Some publishers are already experimenting with audio-centric design.

Earlier this year, Jysk Fynske Medier (JFM) increased listen rates tenfold by implementing BeyondWords' playback-by-paragraph feature, which lets readers start listening by clicking anywhere in the text.

With our new persistent playback feature, available through our player SDKs, listeners can keep listening as they navigate between pages on a website or app.

4. Hyper-personalized audio will deepen engagement

In the future, AI audio will adapt to each listener in real time.

The voice's tone, accent, and pacing will automatically shift to suit personal preferences or inferred listening habits, deepening listener engagement.

For example, narration might sound brighter and more energetic during morning commutes, then softer and slower during late-night listening sessions.

One of the clearest opportunities for personalization lies in regional accents, which can be tailored to a listener's preferences or location. This will make audio more engaging and easier to understand.

Publishers like The Irish Times are already using regional accents to strengthen connections with localized audiences.

Prepare for the future of AI audio

Audio production and consumption are changing fast, and media companies must adapt to stay ahead.

With BeyondWords, you can automatically generate, distribute, and monetize audio versions of your articles using advanced AI voices or voice clones. To learn what we could do for your publication, book a meeting with our team.

News Corp Australia today announced a bold step into the future of journalism, rolling out text-to-speech audio across its major news brands.

With this launch, millions of readers now have the option to listen to the news - not just read it - changing how audiences connect with their news content every day.

A new way to engage with the news

The listen function is now live across both web and app on titles including The Australian, news.com.au, The Daily Telegraph, Herald Sun, The Courier-Mail, The Advertiser, The Mercury, NT News, Cairns Post, Gold Coast Bulletin, Toowoomba Chronicle, Townsville Bulletin, Geelong Advertiser, The Weekly Times, and CODE Sports.

Enabled on thousands of articles each week, the rollout spans key categories from news and sport to business, entertainment, opinion, health, and education.

The voices of the newsroom

News Corp Australia worked hand-in-hand with BeyondWords to develop brand-specific voices cloned from its journalists. The hyper-realistic clones represent its newsrooms, allowing each title to have its own unique voice.

As Rod Savage, Director of Newsroom Innovation at News Corp Australia, explains: "The ability to use AI technology to offer brand-specific voices of journalists - which sound remarkably realistic - and automatically enable audio for thousands of our articles will change how our audience connects with our content."

Seamless listening, anywhere

Adopting the BeyondWords player and app SDKs, News Corp Australia offers their readers an experience that is designed to fit naturally into modern news habits.

Readers can listen while commuting, doing chores, or browsing elsewhere on their devices, giving them new ways to consume content on their terms. Default controls allow them to adjust playback speed and skip forward and backward through paragraphs.

Powered by BeyondWords

News Corp Australia worked with BeyondWords to build 15 professional voice models and to deliver audio at scale.

After an initial trial with The Australian, this full rollout is a huge milestone, and just the beginning of what's possible when publishers combine journalism with AI-powered audio.

Jysk Fynske Medier, better known as JFM, is one of Denmark's largest privately held media groups.

The group publishes 15 daily newspapers and more than 40 local weekly papers, reaching more than 2.7 million Danes per week (out of a population of 6 million).

With a founding mission to enhance democracy and create unity amongst Danish communities, JFM has made AI audio core to its strategy.

After in-house attempts fell short of expectations, JFM looked to BeyondWords to help unify AI audio across the titles.

Reliability and speed to market

JFM knew that their future success would depend on offering new formats that could reach audiences wherever they were - especially those consuming articles on the go.

They began experimenting with AI audio in 2020, starting with an in-house attempt to configure and integrate a text-to-speech API.

After some testing, and a review of the potential partners on the market, they chose to proceed with BeyondWords.

The platform made it possible to roll out high-quality audio across all their titles, drastically accelerating their time to market, while also enhancing the quality of their text-to-speech product along the way.

Kristoffer Hamborg, Head of Editorial Technology at JFM, told us:

"BeyondWords had something that would work across all our brands. Something that could drastically shorten the time to market compared to developing it ourselves. If there's a good solution in the market that fits our needs, we'd rather use that, rather than inventing the solution ourselves."

Once integrated, AI audio quickly became a core part of their reader experience - an essential feature within their websites and apps.

Kristoffer adds:

"Today, for a lot of users, it's one of the most important features on our sites. If audio is the only way you can stay updated and consume local news, then it is the most important feature for you."

Engagement gains with playback-from-paragraphs

In April 2024, eager to spark listening amongst their subscribers and amplify engagement, JFM introduced the BeyondWords playback-from-paragraphs feature.

The upgrade allows readers to start playback from anywhere in the article, simply by clicking on the text. This makes the audio experience more dynamic and allows readers to switch to listening mid-article.

The results were immediate. Listener data from three local dailies - Lemvig Folkeblad, Helsingør Dagblad, and Viborg Stifts Folkeblad - showed dramatic gains.

Comparing the three months to March 1, 2024 with the three months to September 1, 2024:

Lemvig Folkeblad listen rates increased from 0.14% to 6.71%

Helsingør Dagblad listen rates increased from 0.15% to 3.03%

Viborg Stifts Folkeblad listen rates increased from 0.19% to 2.1%

Across the three titles, average listen rates rose from under 0.3% to around 4% - more than a tenfold increase.
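The size of the jump can be checked with some quick arithmetic on the three listen rates quoted above. This sketch assumes a simple unweighted average across the titles, which may not be how the published summary figures were calculated; the function name is our own.

```python
# Listen rates (%) quoted above: (three months to March 1, three months to September 1).
LISTEN_RATES = {
    "Lemvig Folkeblad": (0.14, 6.71),
    "Helsingør Dagblad": (0.15, 3.03),
    "Viborg Stifts Folkeblad": (0.19, 2.1),
}


def summarize(rates):
    """Return per-title fold increases plus the unweighted averages."""
    folds = {title: after / before for title, (before, after) in rates.items()}
    avg_before = sum(before for before, _ in rates.values()) / len(rates)
    avg_after = sum(after for _, after in rates.values()) / len(rates)
    return folds, avg_before, avg_after


folds, avg_before, avg_after = summarize(LISTEN_RATES)
for title, fold in folds.items():
    print(f"{title}: {fold:.1f}x")
print(f"Average: {avg_before:.2f}% -> {avg_after:.2f}% ({avg_after / avg_before:.1f}x)")
```

On these numbers, each of the three titles individually grew by more than ten times, and the unweighted average rose from about 0.16% to about 3.95%.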

Kristoffer notes:

"Naturally, we saw a lot more audio being played. It helped people become aware of the possibility of listening to articles. We expected some pushback but the response has been nothing but positive. It's been a real success."

Looking ahead

For JFM, audio is no longer an experiment: it's a proven driver of engagement and an essential part of the digital news experience.

BeyondWords has enabled them to scale AI audio efficiently across dozens of titles, unlocking new ways for readers to connect with local journalism.

And this is just the beginning. Looking to the future, Kristoffer told us they're excited about how new formats - such as article-to-video and audio digests - will further drive engagement and maintain their reputation for publishing excellence and innovation.

If you want to learn more about how your team can leverage BeyondWords, lift the impact of every article, and bring peace of mind to your product team, reach out to hello@beyondwords.io or book a meeting today.

At BeyondWords, we care about audio quality. It's our north star - the single greatest driver of engagement through audio.

Today's audiences are discerning. A single mispronounced acronym, misread measurement, or garbled foreign word can break the spell and break trust.

That's why we're excited to introduce AI preprocessing - a small step for AI audio, a giant leap for listenability.

Read more on this, plus hear about some sleek new updates to the Editor, below.

Prepare for cleaner audio, at scale

Even the most realistic-sounding AI voices can be let down by mispronunciations.

When a sports score is read like a year or a date sounds like a price, it can pull listeners out of the story and remind them they're not hearing a human.

AI preprocessing fixes this automatically. It understands the context of your article and normalizes tricky text - like dates, sports scores, measurements, and abbreviations - into smooth, natural speech.

With built-in language detection, foreign words within articles can now also be automatically spoken with their native pronunciations.

Switch on AI preprocessing today in your Projects preferences or directly in the Editor.

Make room for the new Editor

We've rebuilt the Editor from the ground up, making it faster, cleaner, and more powerful.

Clone a voice without leaving the Editor, assign it to your article, and adjust voice, summarization, and video settings on a per-article basis.

What's more, you can now import any article straight from a URL to speed up your workflow.

Visit our docs and guides for more info.

Fixes and improvements

We've made several behind-the-scenes upgrades to improve flexibility, compatibility, and security:

Video: Adjust how many caption lines appear per scene, control caption alignment, and define how article images display and play back in videos.

Magic Embed: Now works reliably with sites that render articles dynamically via JavaScript.

SAML: Added support for SAML Single Sign-On (SSO), making logins more secure and streamlined for enterprise customers.

If you have any questions about these updates, just reply. We're always happy to help.

That's all from us for now. If you're heading to INMA Media Innovation Week this September in Dublin, we'll be there! Come say hello!

And to keep up with the most recent updates, visit the BeyondWords changelog.

Not all text is designed for audio. Think sport scores, stock market updates, analysis of demographic data, etc.

For news publishers, even a single mispronounced name, clumsy acronym, or mistranslated currency can damage trust. But editing for audio can be tedious and impractical at scale.

That's why we've launched AI preprocessing - a new feature designed to make AI audio smarter, clearer, and more natural-sounding from the very first listen.

Have confidence in every article

Priming text for multimodal formats has always been a focus for BeyondWords.

While AI voices sound more natural than ever, they can still be tripped up by the structure of real-world editorial content.

BeyondWords offers publishers full control over audio and video with pronunciation rules - such as substitutions and IPA transcriptions - embedded directly into the dashboard.

With AI preprocessing, we take this a step further, removing the need for subediting and offering confidence in every article as you scale.

Reduce time spent on manual improvements

AI preprocessing automatically detects and cleans up non-standard text - such as dates, scores, and abbreviations - removing the need for manual rules.

Take this sentence:

Snap rose 24.3% YoY to 422M, contributing to 8.9% QoQ revenue growth in 3Q.

Before AI preprocessing:

After AI preprocessing:

In this example, numerous terms are preprocessed to sound natural in spoken English:

"YoY" becomes "year over year"

"QoQ" becomes "quarter over quarter"

"3Q" becomes "third quarter"

"422M" becomes "four hundred twenty-two million"
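For comparison, here is roughly what the manual, rule-based version of those four conversions would look like. This is a simplified sketch with a fixed lookup table and a small number speller, not how AI preprocessing works internally; the names `ABBREVIATIONS`, `number_to_words`, and `expand_for_speech` are our own.

```python
import re

ONES = [
    "zero", "one", "two", "three", "four", "five", "six", "seven", "eight",
    "nine", "ten", "eleven", "twelve", "thirteen", "fourteen", "fifteen",
    "sixteen", "seventeen", "eighteen", "nineteen",
]
TENS = ["", "", "twenty", "thirty", "forty", "fifty", "sixty", "seventy",
        "eighty", "ninety"]

# Hypothetical fixed rules; a rule list like this cannot take context into account.
ABBREVIATIONS = {
    "YoY": "year over year",
    "QoQ": "quarter over quarter",
    "3Q": "third quarter",
}


def number_to_words(n):
    """Spell out an integer from 0 to 999 in American English."""
    if not 0 <= n <= 999:
        raise ValueError("only 0-999 is supported in this sketch")
    if n < 20:
        return ONES[n]
    if n < 100:
        tens, ones = divmod(n, 10)
        return TENS[tens] + ("-" + ONES[ones] if ones else "")
    hundreds, rest = divmod(n, 100)
    words = ONES[hundreds] + " hundred"
    return words + (" " + number_to_words(rest) if rest else "")


def expand_for_speech(text):
    """Expand '<number>M' and the known abbreviations into spoken forms."""
    text = re.sub(
        r"\b(\d{1,3})M\b",
        lambda m: number_to_words(int(m.group(1))) + " million",
        text,
    )
    for abbreviation, spoken in ABBREVIATIONS.items():
        text = re.sub(r"\b" + re.escape(abbreviation) + r"\b", spoken, text)
    return text


print(expand_for_speech(
    "Snap rose 24.3% YoY to 422M, contributing to 8.9% QoQ revenue growth in 3Q."
))
```

The sketch leaves the percentages untouched and would need a new entry for every abbreviation a newsroom uses, which is exactly the kind of rule maintenance that context-aware preprocessing is meant to remove.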

Or take this Spanish sentence:

El Gobierno anunció un subsidio de $150.000 para las familias afectadas por la sequía en la región de Valparaíso.

Before AI preprocessing:

After AI preprocessing:

Here, thanks to context-aware AI preprocessing, the "$" symbol is correctly interpreted as referring to Chilean pesos, not US dollars.
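To make the idea of context concrete, here is a deliberately naive keyword-based sketch. It is not the model BeyondWords uses: the cue lists, the default currency, and the function names are all assumptions for illustration, and the amount pattern assumes that "." is a thousands separator, as in Chilean notation.

```python
import re

# Hypothetical place-name cues; a real system would use a language model, not keywords.
CONTEXT_CUES = {
    "Chilean pesos": ["Chile", "Valparaíso", "Santiago"],
    "Mexican pesos": ["México", "Guadalajara"],
}
DEFAULT_CURRENCY = "US dollars"

# Matches amounts such as $150.000, assuming "." separates thousands.
AMOUNT_PATTERN = re.compile(r"\$(\d{1,3}(?:\.\d{3})*)")


def infer_currency(article_text):
    """Guess what '$' means from place names mentioned in the article."""
    for currency, cues in CONTEXT_CUES.items():
        if any(cue in article_text for cue in cues):
            return currency
    return DEFAULT_CURRENCY


def describe_amounts(article_text):
    """Return (integer value, inferred currency) for each '$' amount found."""
    currency = infer_currency(article_text)
    amounts = []
    for match in AMOUNT_PATTERN.finditer(article_text):
        value = int(match.group(1).replace(".", ""))
        amounts.append((value, currency))
    return amounts


sentence = (
    "El Gobierno anunció un subsidio de $150.000 para las familias "
    "afectadas por la sequía en la región de Valparaíso."
)
print(describe_amounts(sentence))
```

A keyword list like this breaks down quickly (an article about Chile can still quote US dollar prices), which is why this step benefits from a model that reads the whole article.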

Perfect pronunciations of foreign names

Furthermore, AI preprocessing uses language detection to improve the pronunciation of foreign words.

Currently available in German, with more languages coming soon, this improvement ensures that named entities, quotes, and loanwords are spoken in the correct accent and intonation - no clunky mispronunciations or awkward phrasing.

For example, take this German sentence:

Im neuesten Update kündigte das Start-up ein Rebranding seiner App an, das laut CEO mehr User Engagement und eine bessere Conversion Rate bringen soll.

Before AI preprocessing:

After AI preprocessing:

In this sentence, "Start-up," "CEO," "User Engagement," and "Conversion Rate" are all detected as English terms and pronounced accordingly.

It's these little touches that make a big difference. And now, they happen automatically.

Why AI preprocessing matters

With AI preprocessing, your audio gets cleaner and more consistent, without any extra effort from your team.

Fewer manual rules

No more fiddling with exceptions like "12/03/24" or "1,500 CAD". AI preprocessing handles edge cases in context, so you can skip the tweaks and focus on what matters.

Professional grade output

A clumsy misread breaks immersion and trust. By getting pronunciation right the first time, your audio sounds smooth, confident, and credible.

Multilingual support at scale

Foreign names and terms are detected and pronounced correctly, which makes AI preprocessing ideal for global newsrooms.

Whether you're scaling audio across hundreds of articles or experimenting with article-to-video, AI preprocessing helps every article feel polished, at scale.

How to turn AI preprocessing on

AI preprocessing is now available in public beta. Follow these steps to turn it on:

1. Within your Project, head to "Content" then "Preferences"

2. From the top navigation, click the "Pronunciations" tab

3. Click the "AI preprocessing" button

4. Toggle the switch on, then click "Done"

To enable AI preprocessing for a specific article, open the article editor, go to the "Pronunciations" tab in the sidebar, toggle on "AI preprocessing", then gene...

In a world flooded with news, how do you make sure your stories stand out and get heard?

At BeyondWords, we've got a few audio tricks up our sleeves to help your content rise above the noise.

Introducing Summaries, Playlists, and Podcast Feeds:

AI summaries

Our AI-powered summaries automatically transform your articles into concise, digestible audio snippets. Perfect for listeners on the go who want the headlines and highlights… right when they need them.

Custom playlists

Create smart or standard playlists that organize your audio content around themes, trending topics, or audience interests. Whether your listeners prefer deep dives or quick catch-ups, they'll find exactly what they're looking for.

Podcast feeds

Turn your audio into ready-to-go podcast feeds that work with all the big platforms, including Spotify, Apple Podcasts, and YouTube. Expand your reach and let listeners subscribe, download, and enjoy your stories wherever and whenever they want.

Together, these tools don't just amplify your voice: they make sure it's heard loud, clear, and exactly where your audience is listening.

Want to know more? Feel free to reach out to our team at hello@beyondwords.io.

Don't you love it when you time your commute just right, when you're first in line at the coffee shop, when you meet your deadlines…when things just work out?

Well, we've been trying to package up that feeling at BeyondWords with the new Magic Embed.

Forget tedious manual processes, complicated coding, or juggling multiple tools. Easily embed BeyondWords and make any article available in audio.

Here's how it works:

1. Paste and play: Configure your settings, paste the embed code into your page, and voilà! Magic Embed does the heavy lifting - automatically generating, embedding, and updating audio.

2. Stay synced: Edits happen. Stories evolve. Magic Embed keeps your audio seamlessly in sync, updating instantly whenever your text changes. No lag, no fuss, just real-time magic.

3. Engage effortlessly: Our player is sleek, accessible, and responsive, effortlessly fitting your brand's look and feel. Readers can listen anywhere, on any device.

Instead of wrestling with complex integrations, you now have more time to focus on what really matters: producing amazing stories that resonate with your audience.

More importantly, you've given your readers the freedom to explore what matters most to them, right through their headphones.

Want to know more? Feel free to reach out to our team at hello@beyondwords.io.