“All the lab’s AI safety Plans: 2025 edition” by Algon

Description

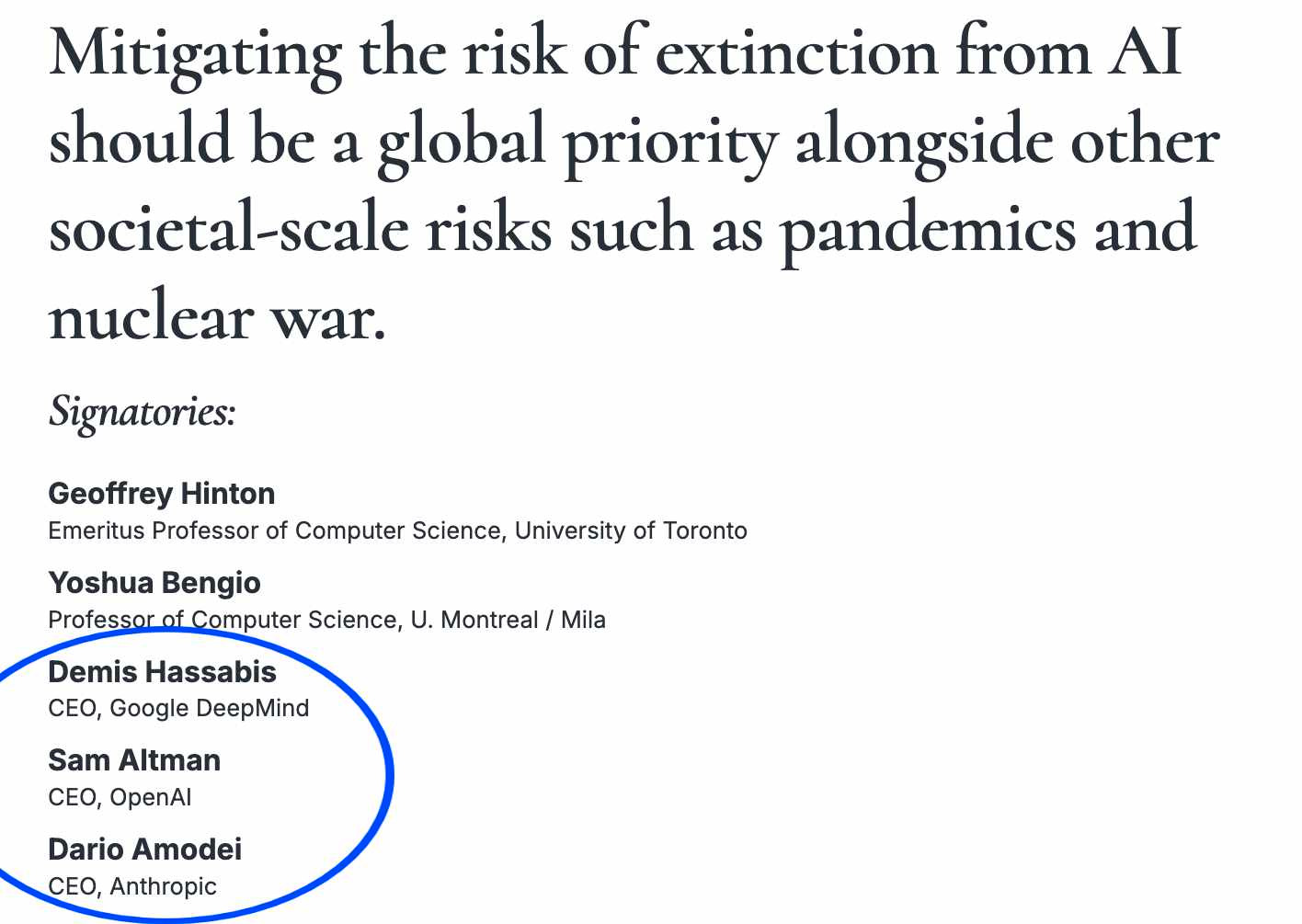

Three out of three CEOs of top AI companies agree: "Mitigating the risk of extinction from AI should be a global priority."

How do they plan to do this?

Anthropic has a Responsible Scaling Policy, Google DeepMind has a Frontier Safety Framework, and OpenAI has a Preparedness Framework, all of which were updated in 2025.

Overview of the policies

All three policies have similar “bones”.[1] They:

- Take the same high-level approach: each company promises to test its AIs for dangerous capabilities during development, and if it finds that an AI has dangerous capabilities, to put safeguards in place that bring the risk down to "acceptable levels" before deploying it.

- Land on basically the same three AI capability areas to track: biosecurity threats, cybersecurity threats, and autonomous AI development.

- Focus on misuse more than misalignment.

TL;DR summary table for the rest of the article:

| | Anthropic | Google DeepMind | OpenAI |
|---|---|---|---|
| Safety policy document | Responsible [...] | | |

---

Outline:

(00:44) Overview of the policies

(02:00) Anthropic

(02:18) What capabilities are they monitoring for?

(06:07) How do they monitor these capabilities?

(07:35) What will they do if an AI looks dangerous?

(09:59) Deployment Constraints

(10:32) Google DeepMind

(11:03) What capabilities are they monitoring for?

(13:08) How do they monitor these capabilities?

(14:06) What will they do if an AI looks dangerous?

(15:46) Industry Wide Recommendations

(16:44) Some details of note

(17:49) OpenAI

(18:21) What capabilities are they monitoring?

(21:04) How do they monitor these capabilities?

(22:35) What will they do if an AI looks dangerous?

(26:24) Notable differences between the companies' plans

(27:21) Commentary on the safety plans

(29:12) The current situation

The original text contained 14 footnotes, which were omitted from this narration.

---

First published:

October 28th, 2025

Source:

https://www.lesswrong.com/posts/dwpXvweBrJwErse3L/all-the-lab-s-ai-safety-plans-2025-edition

---

Narrated by TYPE III AUDIO.
