Best AI papers explained

701 Episodes

This paper proposes that the future of artificial intelligence lies in plurality and social interaction rather than a single, monolithic super-intelligence. The authors argue that modern reasoning models already function as a "society of thought," where internal debates between different perspectives drive more accurate problem-solving. By moving toward a hybrid ecosystem, human and machine agents can form "centaur" configurations that mirror the collective intelligence found in biological evolution and human institutions. This shift requires a new focus on agentic governance and institutional design to ensure that diverse AI entities can coordinate and provide necessary checks and balances. Ultimately, the text suggests that the next great leap in intelligence will be defined by collaborative networks that extend our existing cultural and social frameworks into the digital realm.

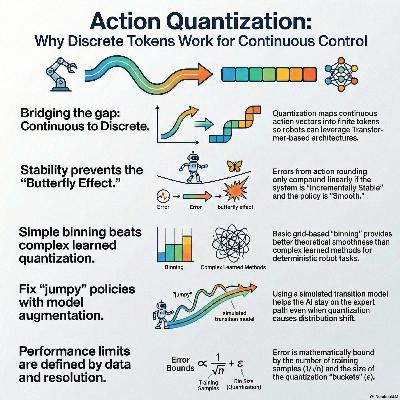

This research provides a theoretical foundation for behavior cloning using action quantization, a common practice in robotics and large-scale AI models where continuous signals are converted into discrete tokens. The authors analyze how quantization error and statistical complexity interact to influence a model’s performance over time. Their findings demonstrate that stable dynamics and smooth policies are essential for preventing small errors from compounding into significant failures. The study specifically highlights that binning-based quantization is more reliable than learning-based methods when imitating deterministic experts. To address potential instability, the paper proposes a model-based augmentation that improves accuracy without requiring high levels of policy smoothness. Finally, the researchers establish information-theoretic lower bounds to define the fundamental limits of learning from quantized demonstrations.
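
As a rough illustration of the binning-based quantization described above, the sketch below maps a continuous action to one of a fixed number of uniform bins and decodes it back to the bin center. The function names, the action range, and the bin count are illustrative assumptions and are not taken from the paper.

```python
def quantize(action, low, high, num_bins):
    """Map a continuous action in [low, high] to a bin index in [0, num_bins - 1]."""
    clipped = min(max(action, low), high)
    width = (high - low) / num_bins
    index = int((clipped - low) / width)
    # An action exactly equal to `high` would land one past the last bin.
    return min(index, num_bins - 1)


def dequantize(index, low, high, num_bins):
    """Decode a bin index back to the center of its bin."""
    width = (high - low) / num_bins
    return low + (index + 0.5) * width


def max_quantization_error(low, high, num_bins):
    """Worst-case round-trip error for uniform binning: half a bin width."""
    return (high - low) / (2 * num_bins)


if __name__ == "__main__":
    token = quantize(0.3, -1.0, 1.0, 8)
    print(token, dequantize(token, -1.0, 1.0, 8))
```

With uniform bins the per-step error is bounded by half a bin width. The summary's point is that whether such small per-step errors stay small over a rollout depends on the stability of the dynamics and the smoothness of the policy.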

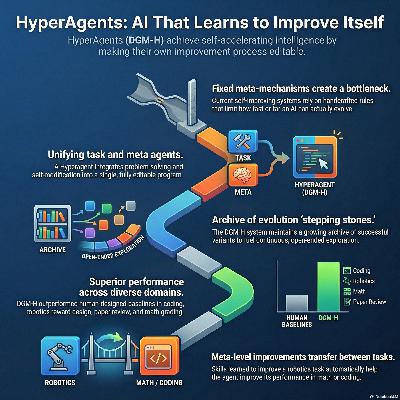

This paper introduces HyperAgents, a novel framework for creating self-referential AI systems capable of autonomous, open-ended improvement across any computable task. Unlike previous models that rely on rigid, human-designed rules for self-modification, these agents integrate task-solving logic and meta-level improvement mechanisms into a single editable program. This architecture enables metacognitive self-modification, allowing the AI to refine not only its answers but also the very process it uses to upgrade itself. Implemented as an extension of the Darwin Gödel Machine, denoted DGM-H, the system demonstrates the ability to evolve sophisticated features like persistent memory and performance tracking without manual engineering. Experiments across diverse fields, including robotics, coding, and mathematical grading, show that these improvements are highly effective, transferable between different domains, and capable of compounding over time. Ultimately, the research suggests a path toward self-accelerating AI that can independently enhance its own problem-solving architecture while maintaining safety through sandboxed environments.

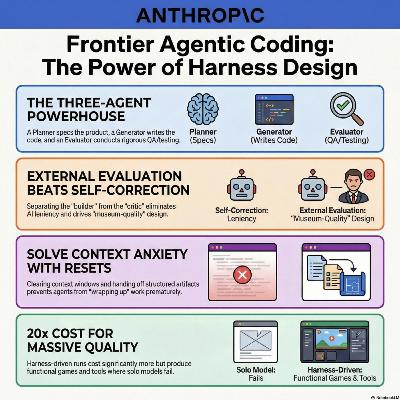

This article explores how **multi-agent harness design** significantly enhances the performance of AI models in complex, long-running tasks like **frontend design** and **autonomous software engineering**. The author details a shift from single-agent attempts to a **GAN-inspired architecture** involving specialized **planner, generator, and evaluator** roles to overcome issues like "context anxiety" and poor self-assessment. By implementing **objective grading criteria** and automated testing via tools like Playwright, the system can autonomously iterate on projects for several hours to produce high-fidelity, functional applications. Comparative experiments demonstrate that while these structured harnesses increase **token costs and latency**, they deliver a level of **creative polish and technical correctness** that solo models cannot currently achieve. Ultimately, the work suggests that as underlying models improve, the role of the AI engineer shifts toward refining these **agentic orchestrations** to push the boundaries of what autonomous systems can build.

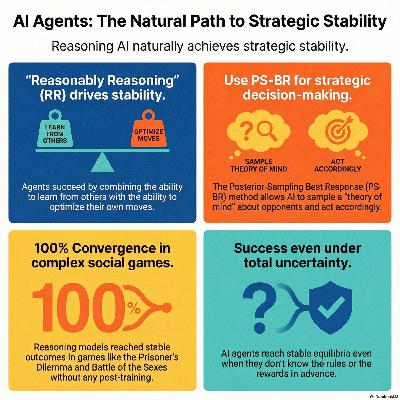

This research explores whether AI agents can autonomously reach strategic equilibria in repeated interactions without specialized training. The author proves that "reasonably reasoning" agents, meaning those with basic capabilities such as Bayesian learning and asymptotic best response, naturally converge toward Nash equilibrium play, and that the posterior-sampling behavior of off-the-shelf models is enough to guarantee asymptotic best response. The study further demonstrates that these agents can navigate environments where payoffs are unknown or stochastic by inferring the game structure from private observations. Empirical simulations across various scenarios, such as the Prisoner’s Dilemma, confirm that advanced reasoning capabilities enable stable, predictable cooperation. Ultimately, the paper suggests that sophisticated AI naturally possesses the intrinsic mechanisms necessary for reliable decision-making in complex economic markets.

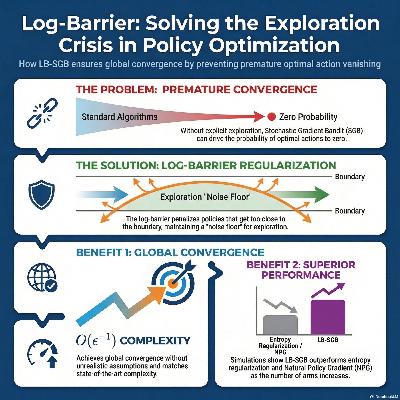

This paper introduces Log-Barrier Stochastic Gradient Bandit (LB-SGB), a new algorithm designed to fix structural flaws in standard policy optimization methods. While traditional gradient bandits often prematurely converge to suboptimal actions because they lack an explicit exploration mechanism, the authors use log-barrier regularization to force the policy away from the boundary of the probability simplex. This approach ensures that the probability of selecting any action, specifically the optimal one, never vanishes during the learning process. The researchers prove that this method matches state-of-the-art sample complexity while providing more robust global convergence guarantees without relying on unrealistic assumptions. Additionally, the study identifies a significant theoretical link between log-barrier regularization and Natural Policy Gradient methods through the geometry of Fisher information. Empirical simulations confirm that LB-SGB outperforms standard entropy-regularized and vanilla gradient methods, especially as the number of available actions increases.
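
To make the idea of log-barrier regularization concrete, here is a minimal sketch of a softmax gradient bandit whose objective adds a term `eta * sum_a log pi(a)`. This is a generic textbook-style construction written under that assumption. It is not the paper's exact LB-SGB update, and the step size, `eta`, and the Bernoulli reward model are all illustrative choices.

```python
import math
import random


def softmax(theta):
    """Numerically stable softmax over a list of logits."""
    m = max(theta)
    exps = [math.exp(t - m) for t in theta]
    total = sum(exps)
    return [e / total for e in exps]


def log_barrier_bandit(means, steps=5000, lr=0.05, eta=0.05, seed=0):
    """Stochastic gradient ascent on expected reward + eta * sum_a log pi(a)."""
    rng = random.Random(seed)
    k = len(means)
    theta = [0.0] * k
    for _ in range(steps):
        pi = softmax(theta)
        arm = rng.choices(range(k), weights=pi)[0]
        reward = 1.0 if rng.random() < means[arm] else 0.0  # Bernoulli reward
        for j in range(k):
            # REINFORCE estimate of the expected-reward gradient.
            reinforce = reward * ((1.0 if j == arm else 0.0) - pi[j])
            # Gradient of sum_a log pi(a) w.r.t. theta_j is 1 - k * pi_j.
            barrier = eta * (1.0 - k * pi[j])
            theta[j] += lr * (reinforce + barrier)
    return softmax(theta)


if __name__ == "__main__":
    print(log_barrier_bandit([0.2, 0.5, 0.8]))
```

The barrier term pushes up the logit of any arm whose probability falls below `1 / k`, which is what keeps the policy away from the boundary of the simplex in this sketch.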

This research introduces specialized pretraining (SPT), a strategy that incorporates domain-specific data directly into the initial pretraining phase rather than reserving it solely for finetuning. By mixing a small percentage of specialized tokens with general web data, models achieve superior performance and faster convergence on niche topics like chemistry, music, and mathematics. This approach effectively addresses the finetuner’s fallacy, proving that early data integration reduces the "tax" of forgetting general knowledge while preventing the overfitting common in standard finetuning. The authors demonstrate that a smaller model using SPT can actually outperform a much larger model trained via traditional methods. Ultimately, the study provides overfitting scaling laws to help practitioners determine the ideal data mixture based on their specific compute budget and dataset size.

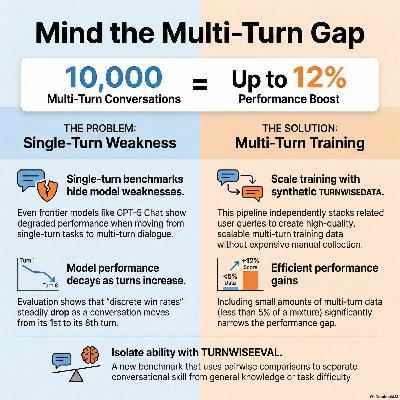

This research addresses the performance gap in large language models between single-turn and multi-turn interactions. The authors introduce TURNWISEEVAL, a new benchmark that isolates conversational ability by comparing model responses in long dialogues against equivalent single-turn prompts. To improve model performance, they also developed TURNWISEDATA, a scalable pipeline that generates synthetic multi-turn training data from existing single-turn instructions. Their experiments demonstrate that even advanced models often struggle with extended context, but incorporating a small amount of this synthetic data during training significantly boosts chat capabilities. Ultimately, the study highlights that multi-turn proficiency is a distinct skill set that requires dedicated evaluation and specialized training data.

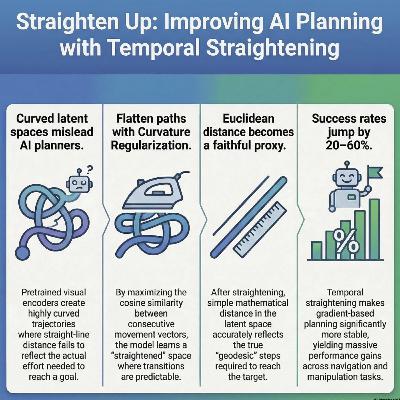

This research paper introduces **temporal straightening**, a technique designed to improve **latent planning** in AI world models by regularizing the curvature of agent trajectories. While standard visual encoders often produce highly curved paths in latent space, this approach uses a **curvature regularizer** to create a representation where feasible transitions follow straighter lines. This geometric transformation ensures that **Euclidean distance** serves as a more accurate proxy for the actual distance to a goal, significantly improving the stability of **gradient-based optimization**. Theoretical analysis demonstrates that straightening the latent space leads to a better-conditioned **planning objective**, allowing planners to converge more efficiently. Empirical tests across several goal-reaching tasks, such as **PointMaze** and **PushT**, show that this method substantially increases success rates for both open-loop and closed-loop planning. Ultimately, the work suggests that the **geometric structure** of learned representations is a critical factor in the effectiveness of autonomous planning systems.
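
One simple way to quantify how "curved" a latent trajectory is, sketched below, is to average `1 - cos(angle)` between consecutive velocity vectors. This is an assumed, commonly used curvature measure for illustration only. The paper's actual regularizer may be defined differently, and the function name is hypothetical.

```python
import math


def mean_curvature(trajectory):
    """Average of 1 - cosine similarity between consecutive velocity vectors.

    `trajectory` is a list of equal-length points (tuples or lists of floats).
    A perfectly straight path scores 0, a right-angle turn scores 1.
    """
    velocities = [
        [b - a for a, b in zip(p, q)]
        for p, q in zip(trajectory, trajectory[1:])
    ]
    penalties = []
    for v, w in zip(velocities, velocities[1:]):
        norm_v = math.sqrt(sum(x * x for x in v))
        norm_w = math.sqrt(sum(x * x for x in w))
        if norm_v == 0.0 or norm_w == 0.0:
            continue  # skip stationary steps, where direction is undefined
        cosine = sum(x * y for x, y in zip(v, w)) / (norm_v * norm_w)
        penalties.append(1.0 - cosine)
    return sum(penalties) / len(penalties) if penalties else 0.0


if __name__ == "__main__":
    print(mean_curvature([(0.0, 0.0), (1.0, 1.0), (2.0, 2.0), (3.0, 3.0)]))
    print(mean_curvature([(0.0, 0.0), (1.0, 0.0), (1.0, 1.0)]))
```

Adding a quantity like this to an encoder's training loss would penalize sharp turns in latent space, which is the intuition behind making Euclidean distance a better proxy for distance to the goal.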

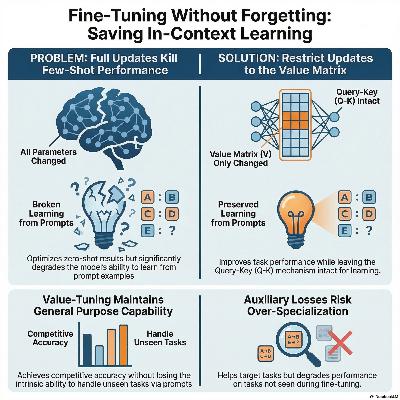

This research examines the tension between in-context learning (ICL) and fine-tuning in Transformer-based models, specifically using linear attention to provide a theoretical foundation. While fine-tuning is often employed to enhance zero-shot performance on specific target tasks, the authors demonstrate that updating all attention parameters can inadvertently damage the model's ability to learn from demonstrations. They identify a superior strategy: restricting updates to the value matrix, which improves task-specific accuracy while maintaining the model’s original few-shot capabilities. The study further explores the use of an auxiliary few-shot loss, finding that it boosts performance on the target task but reduces the model's ability to generalize to out-of-distribution tasks. These theoretical insights are validated through both mathematical proofs and empirical experiments on the MMLU benchmark. Ultimately, the work provides a framework for optimizing language models without sacrificing their inherent flexibility as in-context learners.

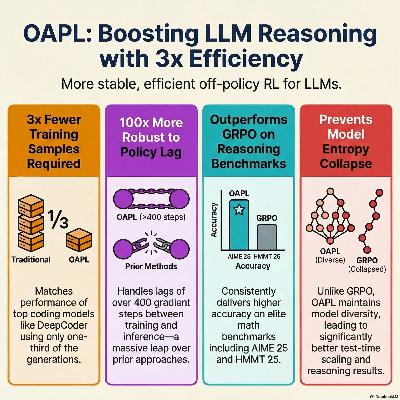

Researchers have introduced OAPL, a new reinforcement learning algorithm designed to improve how Large Language Models (LLMs) learn complex reasoning for math and coding. Traditional methods often struggle when the training policy and the inference engine are out of sync, a common issue in large-scale, asynchronous computing. Instead of trying to force these mismatched systems to align, OAPL embraces this discrepancy by using a squared regression objective that functions effectively even with significant policy lag. This approach eliminates the need for complex importance sampling or heuristics that can destabilize training. Empirical results show that OAPL outperforms existing methods like GRPO on competitive benchmarks while using significantly fewer computational resources. Furthermore, the model maintains higher sequence entropy, which prevents the performance collapse often seen in other post-training techniques.

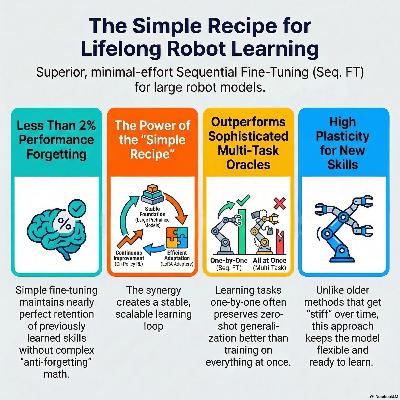

This paper explores Continual Reinforcement Learning (CRL) for large Vision-Language-Action (VLA) models, focusing on how these agents adapt to new tasks without losing prior knowledge. While traditional machine learning often suffers from catastrophic forgetting during sequential training, this research demonstrates that a simple Sequential Fine-Tuning approach remains remarkably effective. By combining pre-trained VLAs, on-policy reinforcement learning, and Low-Rank Adaptation (LoRA), the researchers found that models maintain high plasticity and strong zero-shot generalization. Their systematic study across multiple benchmarks reveals that this basic recipe often outperforms more complex, specialized CRL strategies. Ultimately, the source positions parameter-efficient fine-tuning as a scalable and stable foundation for developing lifelong embodied intelligence in robotic agents.

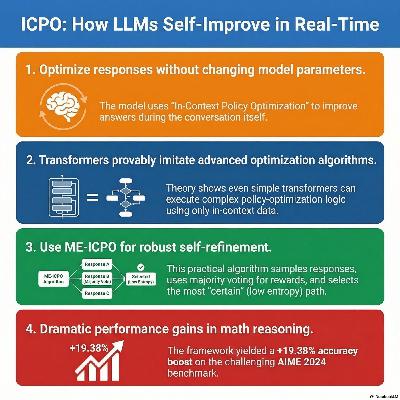

This research paper introduces In-Context Policy Optimization (ICPO), a framework designed to explain and enhance the self-reflection capabilities of large language models. The authors provide a mathematical foundation proving that specific transformer architectures can inherently mimic policy optimization algorithms without requiring parameter updates. Building on this theory, they develop ME-ICPO, a practical algorithm that improves mathematical reasoning by iteratively refining responses based on self-assessed rewards. To ensure reliability, the system utilizes minimum-entropy selection and majority voting to filter out noise from self-evaluations. Empirical results demonstrate that this approach significantly boosts performance on complex reasoning benchmarks while remaining computationally efficient. Ultimately, the work bridges the gap between the theoretical understanding of in-context learning and the empirical success of test-time scaling.

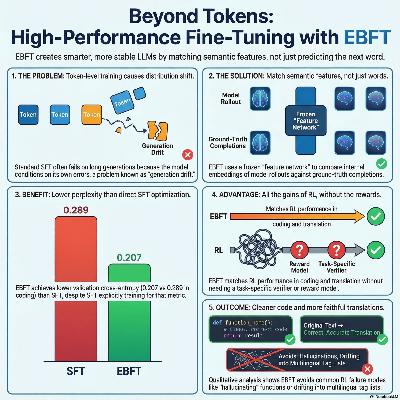

This research paper introduces Energy-Based Fine-Tuning (EBFT), a novel method for refining language models by matching feature statistics of generated text with ground-truth data. Traditional training relies on next-token prediction, which often causes models to drift or fail during long sequences because they lack global distributional calibration. By optimizing a feature-matching objective using a frozen feature network, EBFT provides dense semantic feedback at the sequence level without requiring a manual reward function. Experimental results across coding and translation show that EBFT outperforms standard supervised fine-tuning and matches reinforcement learning in accuracy. Furthermore, it achieves superior validation cross-entropy, proving that aligning feature moments effectively stabilizes model behavior and preserves high-quality language modeling.

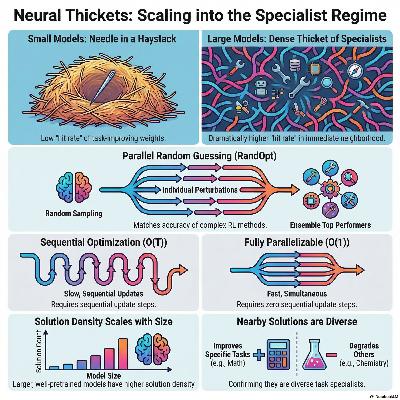

This research introduces the concept of Neural Thickets, describing a phenomenon where large pretrained models are surrounded by a high density of diverse, task-specific solutions in their local weight space. While small models require structured optimization like gradient descent to find improvements, larger models transition into a regime where random weight perturbations frequently yield "expert" versions of the model. The authors exploit this discovery through RandOpt, a parallel post-training method that samples random weight changes, selects the best performers, and ensembles their predictions. Their findings show that these random experts are specialists rather than generalists, often excelling at one task while declining in others, which makes ensembling via majority vote highly effective. This approach proves competitive with standard reinforcement learning methods like PPO and GRPO, especially as model scale increases. Ultimately, the study suggests that sufficient pretraining fundamentally reshapes the loss landscape, making complex downstream adaptation possible through simple parallel search and selection.
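
The sample, select, and ensemble loop described above can be sketched on a toy problem. In the example below a two-weight linear classifier stands in for a large pretrained model, which is a strong simplifying assumption. All function names and hyperparameters are hypothetical, and this is not the authors' RandOpt implementation.

```python
import random
from collections import Counter


def predict(weights, x):
    """Binary prediction from a linear score."""
    return 1 if sum(w * xi for w, xi in zip(weights, x)) > 0 else 0


def accuracy(weights, data):
    """Fraction of (x, label) pairs classified correctly."""
    return sum(predict(weights, x) == y for x, y in data) / len(data)


def rand_opt(base_weights, data, num_samples=200, top_k=5, sigma=0.5, seed=0):
    """Sample random Gaussian perturbations and keep the top_k by accuracy."""
    rng = random.Random(seed)
    candidates = [
        [w + rng.gauss(0.0, sigma) for w in base_weights]
        for _ in range(num_samples)
    ]
    candidates.sort(key=lambda c: accuracy(c, data), reverse=True)
    return candidates[:top_k]


def ensemble_predict(experts, x):
    """Majority vote over the selected experts."""
    votes = Counter(predict(w, x) for w in experts)
    return votes.most_common(1)[0][0]


if __name__ == "__main__":
    data = [((1.0, 1.0), 1), ((-1.0, -1.0), 0), ((1.0, -0.5), 1), ((-1.0, 0.5), 0)]
    experts = rand_opt([0.0, 0.0], data)
    print([ensemble_predict(experts, x) for x, _ in data])
```

Each candidate is evaluated independently of the others, so the sampling and scoring steps parallelize trivially, which matches the summary's description of RandOpt as a parallel post-training method.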

This paper introduces AdaEvolve, a novel framework designed to enhance how Large Language Models (LLMs) solve complex optimization and programming tasks through evolutionary search. Unlike existing methods that use rigid, pre-set schedules, this system implements hierarchical adaptivity to manage computational resources and search strategies dynamically. It operates across three levels: local adaptation to adjust exploration intensity, global adaptation to allocate the budget toward promising solution populations, and meta-guidance to generate new tactics when progress stalls. This approach mimics the efficiency of adaptive gradient methods used in continuous optimization but applies it to discrete, zeroth-order problems. Experimental results across 185 benchmarks show that AdaEvolve consistently outperforms standard baselines and human-designed solutions in areas like combinatorial geometry and systems optimization. By replacing brittle manual tuning with a unified improvement signal, the framework demonstrates a more robust and autonomous path for AI-driven discovery.

This paper introduces ∇-Reasoner, a novel framework that improves Large Language Model (LLM) reasoning by applying gradient-based optimization during the inference process. Unlike traditional methods that rely on random sampling or discrete searches, this approach uses Differentiable Textual Optimization (DTO) to refine token logits through first-order gradients derived from reward models and likelihood signals. By iteratively updating textual representations in latent space, the system allows for bidirectional information flow, enabling the model to correct its reasoning chains on the fly. To ensure efficiency, the framework incorporates gradient caching and rejection sampling, which reduce the computational burden typically associated with backpropagation. Empirical results demonstrate that ∇-Reasoner significantly boosts accuracy on complex mathematical benchmarks while requiring fewer model calls than existing search-based baselines. Ultimately, the research establishes a theoretical and practical shift toward treating test-time reasoning as a continuous optimization problem rather than a simple stochastic generation task.

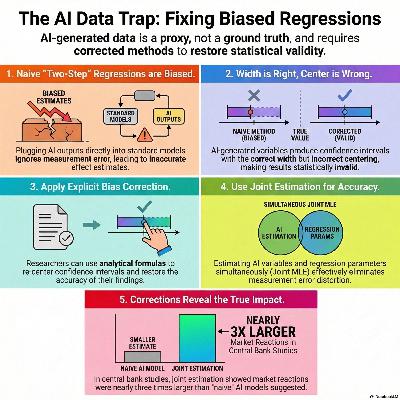

This research investigates how using artificial intelligence (AI) or machine learning (ML) to generate variables for economic regressions can lead to biased estimates and invalid statistical inference. While researchers often treat AI-generated outputs as standard data, the authors demonstrate that measurement error in these variables—even from high-performance algorithms—shifts the centering of confidence intervals, making them unreliable. To address these distortions, the paper introduces two practical solutions: a mathematical bias correction that does not require ground-truth validation data and a joint estimation framework that models the latent variables and regression parameters simultaneously. The effectiveness of these methods is illustrated through diverse applications, including job posting classifications, CEO time-use analysis, and central bank sentiment indexing. Ultimately, the study provides a robust toolkit for economists to maintain statistical integrity when integrating modern computational tools into empirical research.
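
The basic mechanism, that a noisy generated regressor biases the estimated coefficient, can be seen in a small simulation. The sketch below uses the textbook case of classical additive measurement error, which is simpler than the settings the paper studies. The data-generating process, sample size, and variable names are illustrative assumptions.

```python
import random


def ols_slope(xs, ys):
    """Slope of a simple least-squares regression of ys on xs (with intercept)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var = sum((x - mean_x) ** 2 for x in xs)
    return cov / var


def simulate(n=5000, beta=2.0, noise_sd=1.0, seed=0):
    """Return (slope using the true regressor, slope using a noisy proxy)."""
    rng = random.Random(seed)
    true_x = [rng.gauss(0.0, 1.0) for _ in range(n)]
    y = [beta * x + rng.gauss(0.0, 1.0) for x in true_x]
    # Stand-in for an ML-generated variable: truth plus independent noise.
    predicted_x = [x + rng.gauss(0.0, noise_sd) for x in true_x]
    return ols_slope(true_x, y), ols_slope(predicted_x, y)


if __name__ == "__main__":
    clean, noisy = simulate()
    print(f"slope with true regressor:  {clean:.3f}")
    print(f"slope with noisy regressor: {noisy:.3f}")
```

In this classical setup the noisy slope converges to `beta * var(x) / (var(x) + noise_sd**2)`, so a confidence interval built around it is centered on the wrong value no matter how large the sample is.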

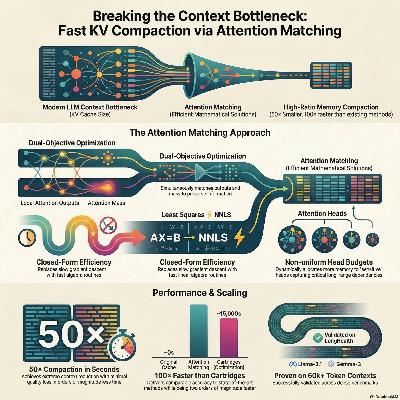

This paper introduces Attention Matching (AM), a novel framework for fast and efficient key-value (KV) cache compaction in long-context language models. As models process longer sequences, the memory required for the KV cache becomes a major bottleneck, often necessitating lossy strategies like summarization or token eviction. The researchers propose optimizing compact keys and values to reproduce the original model's attention outputs and attention mass across every layer. This method achieves up to 50× compaction in seconds, significantly outperforming traditional token-dropping baselines and matching the quality of expensive gradient-based optimization. By incorporating nonuniform head budgets and scalar attention biases, AM maintains high downstream accuracy on complex reasoning tasks while remaining compatible with existing inference engines. Their findings suggest that latent-space compaction is a powerful primitive for managing the memory demands of modern generative AI.

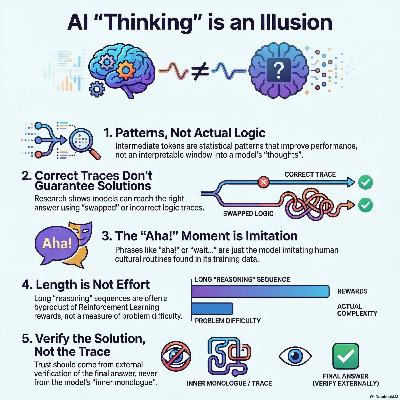

This position paper argues against the anthropomorphization of intermediate tokens in large language models, commonly referred to as "reasoning traces" or "chains of thought." The authors contend that these outputs are not genuine reflections of human-like thinking but are instead statistically generated patterns that may lack semantic validity. Research indicates that model performance can improve even when these traces are factually incorrect or nonsensical, suggesting that the connection between a trace and the final answer is often tenuous. Consequently, viewing these tokens as an interpretable window into a model’s logic can lead to a dangerous overestimation of its reliability. The authors call on the scientific community to move away from human-centric metaphors and focus on external verification of solutions. By treating intermediate tokens as a computational tool for the model rather than an explanation for the user, researchers can pursue more effective and honest AI development.