📆 ThursdAI - Nov 21 - The fight for the LLM throne, OSS SOTA from AllenAI, Flux new tools, Deepseek R1 reasoning & more AI news

Description

Hey folks, Alex here, and oof what a 🔥🔥🔥 show we had today! I got to use my new breaking news button 3 times this show! And not only that, some of you may know that one of my absolute biggest pleasures as a host is to feature the folks who actually make the news on the show!

And now that we're in video format, you actually get to see who they are! So this week I was honored to welcome back our friend and co-host Junyang Lin, a Dev Lead from the Alibaba Qwen team, who came back after launching the incredible Qwen Coder 2.5, and Qwen 2.5 Turbo with 1M context.

We also had breaking news on the show that AI2 (Allen Institute for AI) has fully released SOTA Llama post-trained models, and I was very lucky to get the core contributor on the paper, Nathan Lambert, to join us live and tell us all about this amazing open source effort! You don't want to miss this conversation!

Lastly, we chatted with the CEO of StackBlitz, Eric Simons, about the absolutely incredible lightning-in-a-bottle success of their latest bolt.new product, and how it opens a new category of code-generation tools.

00:00 Introduction and Welcome

00:58 Meet the Hosts and Guests

02:28 TLDR Overview

03:21 Tl;DR

04:10 Big Companies and APIs

07:47 Agent News and Announcements

08:05 Voice and Audio Updates

08:48 AR, Art, and Diffusion

11:02 Deep Dive into Mistral and Pixtral

29:28 Interview with Nathan Lambert from AI2

30:23 Live Reaction to Tulu 3 Release

30:50 Deep Dive into Tulu 3 Features

32:45 Open Source Commitment and Community Impact

33:13 Exploring the Released Artifacts

33:55 Detailed Breakdown of Datasets and Models

37:03 Motivation Behind Open Source

38:02 Q&A Session with the Community

38:52 Summarizing Key Insights and Future Directions

40:15 Discussion on Long Context Understanding

41:52 Closing Remarks and Acknowledgements

44:38 Transition to Big Companies and APIs

45:03 Weights & Biases: This Week's Buzz

01:02:50 Mistral's New Features and Upgrades

01:07:00 Introduction to DeepSeek and the Whale Giant

01:07:44 DeepSeek's Technological Achievements

01:08:02 Open Source Models and API Announcement

01:09:32 DeepSeek's Reasoning Capabilities

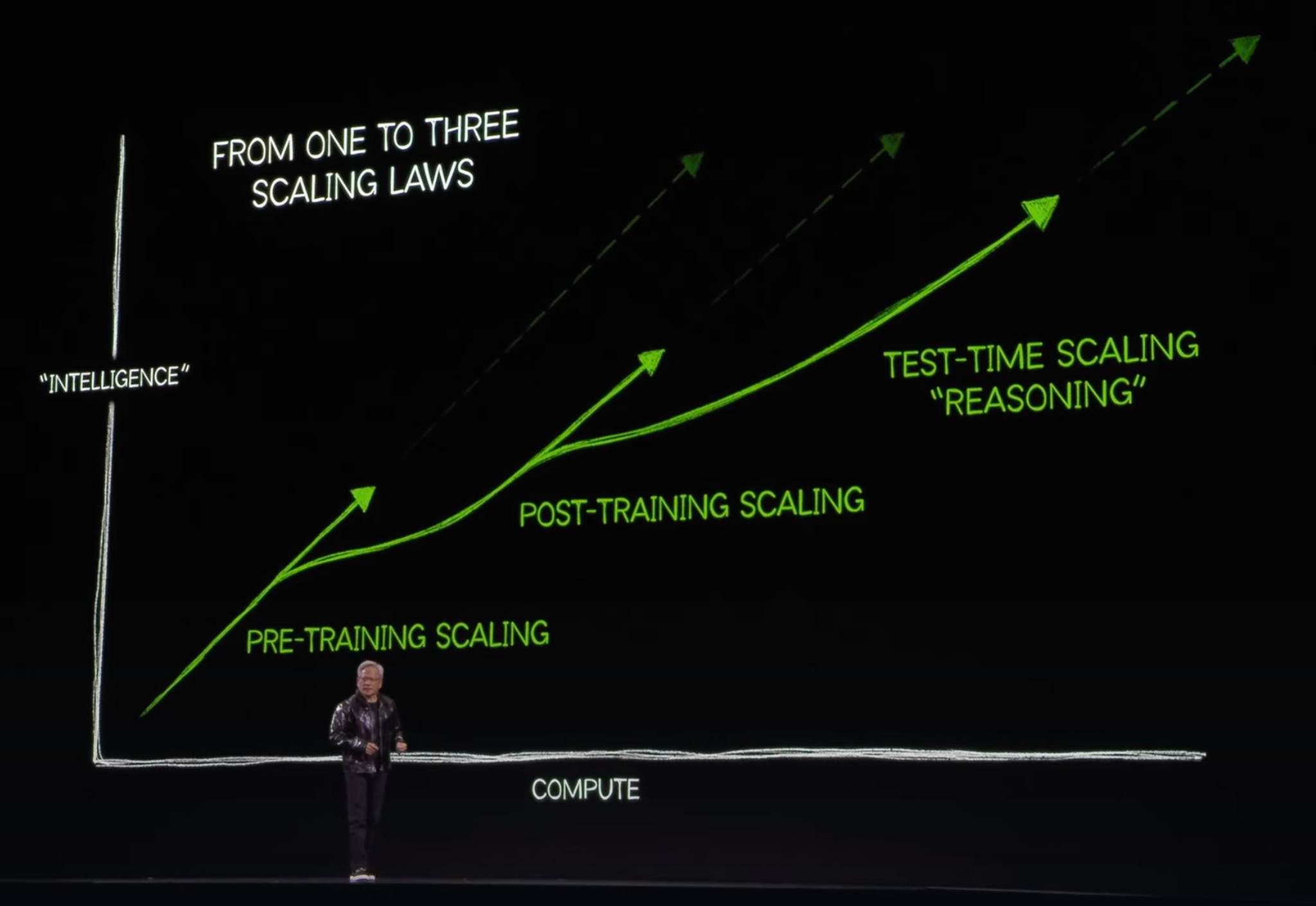

01:12:07 Scaling Laws and Future Predictions

01:14:13 Interview with Eric from Bolt

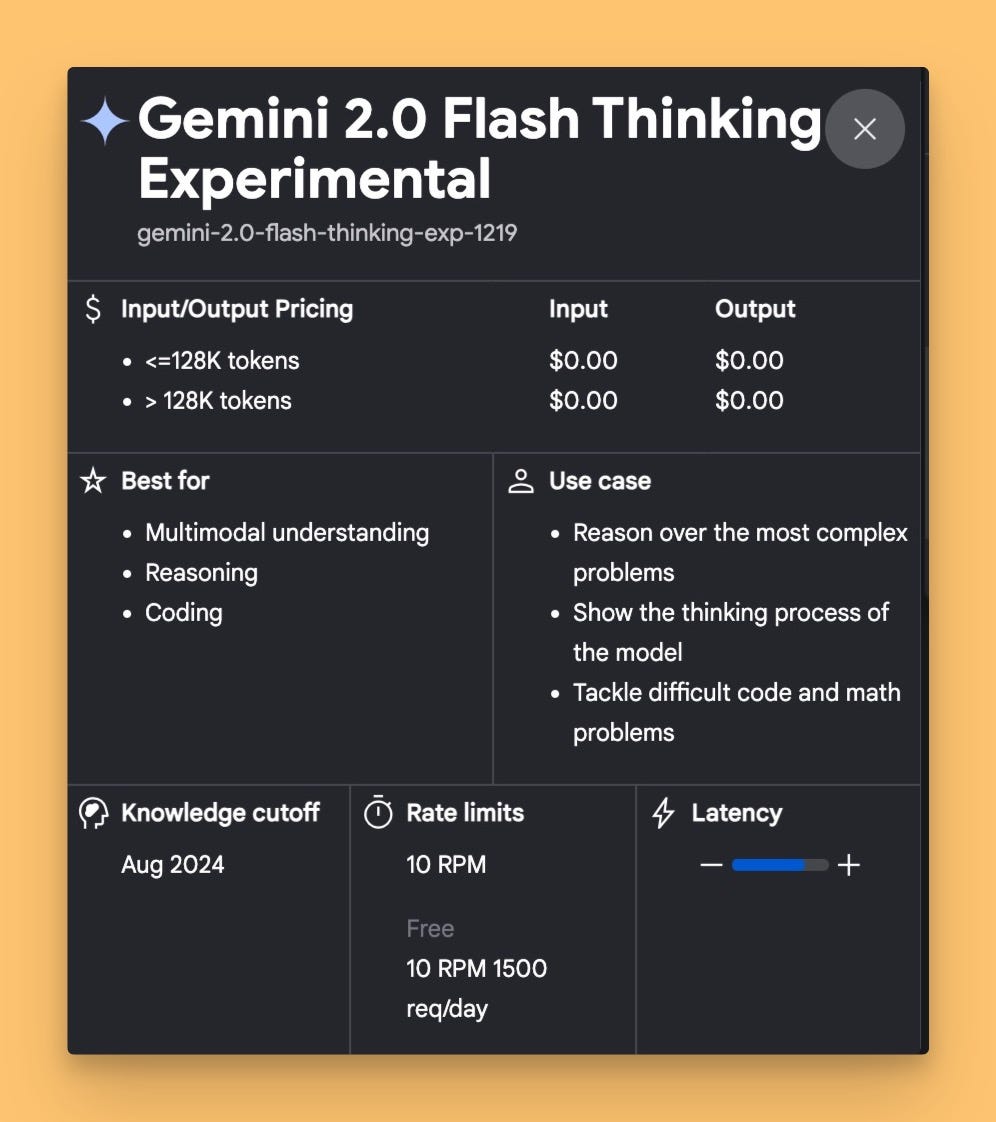

01:14:41 Breaking News: Gemini Experimental

01:17:26 Interview with Eric Simons - CEO @ Stackblitz

01:19:39 Live Demo of Bolt's Capabilities

01:36:17 Black Forest Labs AI Art Tools

01:40:45 Conclusion and Final Thoughts

As always, the show notes and TL;DR with all the links I mentioned on the show, plus the full news roundup, are below the main news recap 👇

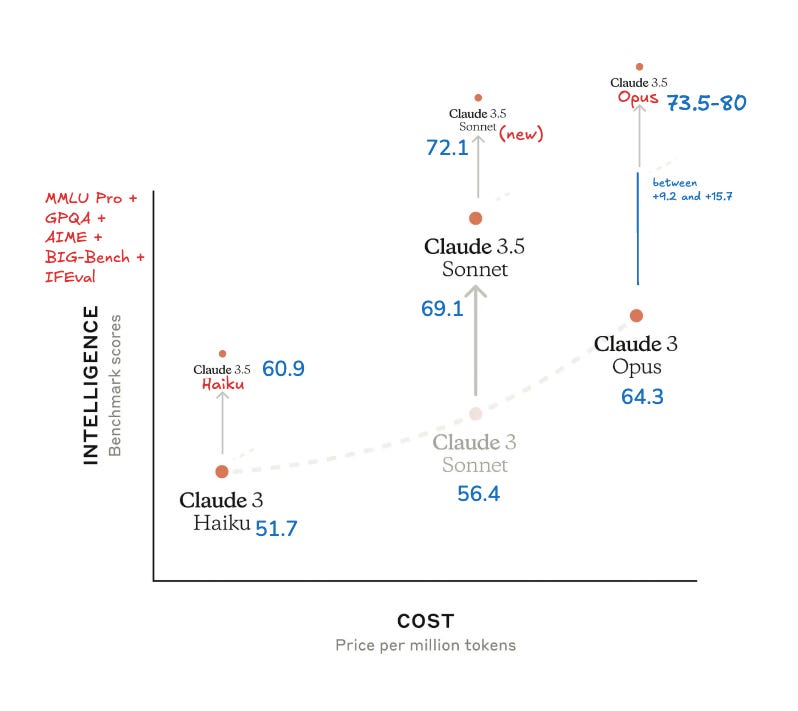

Google & OpenAI fighting for the LMArena crown 👑

I wanted to open with this, as last week I reported that Gemini Exp 1114 had taken over #1 in the LMArena. In less than a week, we saw a new ChatGPT release, called GPT-4o-2024-11-20, reclaim the arena #1 spot!

Focusing specifically on creative writing, this new model, now deployed on chat.com and in the API, is definitely more creative according to many folks who've tried it, with OpenAI employees saying "expect qualitative improvements with more natural and engaging writing, thoroughness and readability", and indeed that's what my feed was reporting as well.

I also wanted to mention here that we've seen this happen once before: the last time Gemini peaked at the LMArena, it took less than a week for OpenAI to release and test a model that beat it.

But not this time, this time Google came prepared with an answer!

Just as we were wrapping up the show (again, Logan apparently loves dropping things at the end of ThursdAI), we got breaking news that there is YET another experimental model from Google, called Gemini Exp 1121, and apparently, it reclaims the stolen #1 position that ChatGPT reclaimed from Gemini... yesterday! Or at least joins it at #1.

LMArena Fatigue?

Many folks in my DMs are getting a bit frustrated with these marketing tactics, not only the fact that we're getting experimental models faster than we can test them, but also with the fact that if you think about it, this was probably a calculated move by Google. Release a very powerful checkpoint, knowing that this will trigger a response from OpenAI, but don't release your most powerful one. OpenAI predictably releases their own "ready to go" checkpoint to show they are ahead, then folks at Google wait and release what they wanted to release in the first place.

The other frustration point is the major labs over-indexing on the LMArena human metrics as the closest approximation for "best". For example, here's some analysis from Artificial Analysis showing that while the latest ChatGPT is indeed better at creative writing (and #1 in the Arena, where humans vote answers against each other), it's gotten actively worse at MATH and coding compared to the August version (which could be a result of it being a distilled, much smaller version).

In summary, maybe the LMArena is no longer the "1 arena is all you need" benchmark, but the competition at the TOP scores of the Arena has never been hotter.

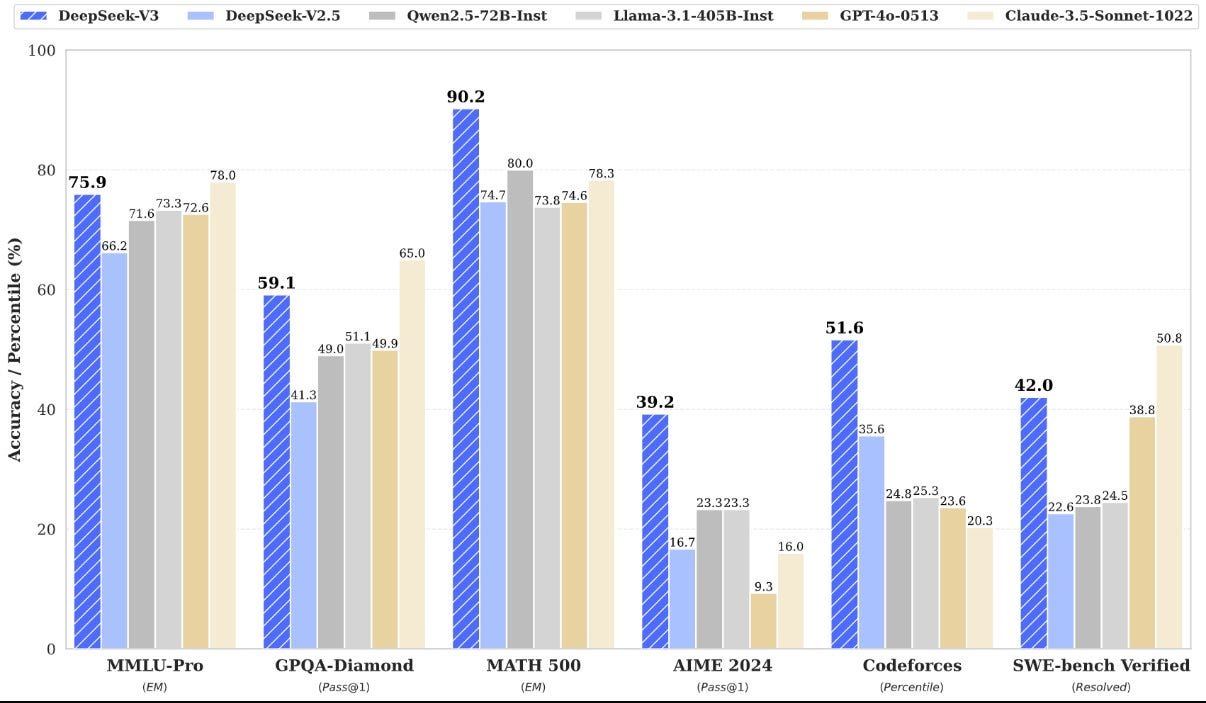

DeepSeek R-1 preview - reasoning from the Chinese Whale

While the American labs fight for the LM titles, the really interesting news may be coming from the Chinese whale. DeepSeek, a company known for their incredibly cracked team, resurfaced once again and showed us that they are indeed, well, super cracked.

They have trained and released R-1 preview, a reasoning model trained with Reinforcement Learning that beats O1 at AIME and other benchmarks! We don't know many details yet, besides them confirming that this model is coming to open source! But we do know that this model, unlike O1, shows the actual reasoning it uses to arrive at its answers (reminder: O1 hides its actual reasoning, and what we see is actually another model summarizing the reasoning).

The other notable thing is that DeepSeek all but confirmed the claim that we have a new scaling law with Test Time / Inference Time compute, where, like with O1, the more time (and tokens) you give a model to think, the better it gets at answering hard questions. This is a very important confirmation, and a VERY exciting one if this is coming to the open source!

Right now you can play around with R1 in their demo chat interface.

In other Big Co and API news

In other news, Mistral becomes a Research/Product company, with a host of new additions to Le Chat, including Browse, PDF upload, Canvas and Flux 1.1 Pro integration (for Free! I think this is the only place where you can get Flux Pro for free!).

Qwen released a new 1M context window model in their API called Qwen 2.5 Turbo, making it not only the 2nd ever 1M+ model (after Gemini) to be available, but also reducing TTFT (time to first token) significantly and slashing costs. This is available via their APIs and Demo here.

Open Source is catching up

AI2 open sources Tulu 3 - SOTA 8B, 70B Llama post-trained models, FULLY open sourced (Blog, Demo, HF, Data, Github, Paper)

Allen AI folks have joined the show before, and this time we got Nathan Lambert, the core contributor on the Tulu paper, to join and talk to us about Post Training and how they made the best performing SOTA Llama 3.1 Finetunes with careful data curation (which they also open sourced), preference optimization, and a new methodology they call RLVR (Reinforcement Learning with Verifiable Rewards).

Simply put, RLVR modifies the RLHF approach by using a verification function instead of a reward model. This method is effective for tasks with verifiable answers, like math problems or specific instructions. It improves performance on certain benchmarks (e.g., GSM8K) while maintaining capabilities in other areas.
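To make the "verification function instead of a reward model" idea a bit more concrete, here is a minimal Python sketch. It is only an illustration and not AI2's actual implementation: the function names (`extract_final_number`, `verifiable_reward`), the "take the last number in the completion" heuristic, and the `bonus` value are all assumptions I made up for this example, and it assumes the ground truth is a plain number, as in GSM8K-style math problems.

```python
import re
from typing import Optional

# Matches integers and decimals, optionally negative (e.g. "42", "-3.5").
_NUMBER = re.compile(r"-?\d+(?:\.\d+)?")


def extract_final_number(completion: str) -> Optional[str]:
    """Hypothetical helper: return the last number mentioned in the completion.

    Commas are stripped first so "1,200" is read as "1200". This is a crude
    heuristic and would misread a list such as "3,4" as "34".
    """
    matches = _NUMBER.findall(completion.replace(",", ""))
    return matches[-1] if matches else None


def verifiable_reward(completion: str, ground_truth: str, bonus: float = 1.0) -> float:
    """Hypothetical verifier: pay `bonus` only if the final answer checks out.

    Unlike a learned reward model, nothing here is trained or scored on a
    scale: the answer is either verifiably correct or it earns nothing.
    Assumes `ground_truth` is a numeric string.
    """
    predicted = extract_final_number(completion)
    if predicted is None:
        return 0.0
    return bonus if float(predicted) == float(ground_truth) else 0.0
```

In an RL loop, a function like this would sit where the reward model's score normally goes: sample a completion for a prompt with a known answer, call `verifiable_reward(completion, answer)`, and feed the result to the policy update.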

The most notable thing is just how MUCH is open source. Again, like the last time we had AI2 folks on the show, the amount they released is staggering.

In the show, Nathan had me pull up the paper and we went through the deluge of models, code and datasets they released, not to mention the 73-page paper full of methodology and techniques.

Just absolute ❤️ to the AI2 team for this release!

🐝 This week's buzz - Weights & Biases corner

This week, I want to invite you to a live stream announcement that I am working on behind the scenes to produce, on December 2nd. You can register HERE (it's on LinkedIn, I know, I'll have the YT link next week, pr