ThursdAI - Sep 19 - 👑 Qwen 2.5 new OSS king LLM, MSFT new MoE, Nous Research's Forge announcement, and Talking AIs in the open source!

Description

Hey folks, Alex here, back with another ThursdAI recap – and let me tell you, this week's episode was a whirlwind of open-source goodness, mind-bending inference techniques, and a whole lotta talk about talking AIs! We dove deep into the world of LLMs, from Alibaba's massive Qwen 2.5 drop to the quirky, real-time reactions of Moshi.

We even got a sneak peek at Nous Research's ambitious new project, Forge, which promises to unlock some serious LLM potential. So grab your pumpkin spice latte (it's that time again, isn't it? 🍁), settle in, and let's recap the AI awesomeness that went down on ThursdAI, September 19th!

ThursdAI is brought to you (as always) by Weights & Biases. We still have a few spots left in our hackathon this weekend, and our new advanced RAG course is now released and FREE to sign up for!

TL;DR of all topics + show notes and links

* Open Source LLMs

* Alibaba Qwen 2.5 models drop + Qwen 2.5 Math and Qwen 2.5 Code (X, HF, Blog, Try It)

* Qwen 2.5 Coder 1.5B is running on a 4-year-old phone (Nisten)

* KyutAI open sources Moshi & Mimi (Moshiko & Moshika) - end-to-end voice chat model (X, HF, Paper)

* Microsoft releases GRIN-MoE - tiny (6.6B active) MoE with 79.4 MMLU (X, HF, GitHub)

* Nvidia announces NVLM 1.0 - frontier-class multimodal LLMs (no weights yet, X)

* Big CO LLMs + APIs

* OpenAI o1 results from LMsys do NOT disappoint - vibe checks also confirm, new KING LLM in town (Thread)

* NousResearch announces Forge in waitlist - their MCTS-enabled inference product (X)

* This week's Buzz - everything Weights & Biases related this week

* Judgement Day (hackathon) is in 2 days! There are still places to come hack with us (Sign up)

* Our new RAG Course is live - learn all about advanced RAG from WandB, Cohere and Weaviate (sign up for free)

* Vision & Video

* YouTube announces DreamScreen - generative AI image and video in YouTube Shorts (Blog)

* CogVideoX-5B-I2V - leading open source img2video model (X, HF)

* Runway, DreamMachine & Kling all announce text-2-video over API (Runway, DreamMachine)

* Runway announces video 2 video model (X)

* Tools

* Snap announces their XR glasses - have hand tracking and AI features (X)

Open Source Explosion!

👑 Qwen 2.5: new king of OSS LLMs with 12 model releases, including instruct, math and coder versions

This week's open-source highlight was undoubtedly the release of Alibaba's Qwen 2.5 models. We had Justin Lin from the Qwen team join us live to break down this monster drop, which includes a whopping seven different sizes, ranging from a nimble 0.5B parameter model all the way up to a colossal 72B beast! And as if that wasn't enough, they also dropped Qwen 2.5 Coder and Qwen 2.5 Math models, further specializing their LLM arsenal. As Justin mentioned, they heard the community's calls for 14B and 32B models loud and clear – and they delivered! "We do not have enough GPUs to train the models," Justin admitted, "but there are a lot of voices in the community...so we endeavor for it and bring them to you." Talk about listening to your users!

Trained on an astronomical 18 trillion tokens (that’s even more than Llama 3.1 at 15T!), Qwen 2.5 shows significant improvements across the board, especially in coding and math. They even open-sourced the previously closed-weight Qwen 2 VL 72B, giving us access to the best open-source vision language models out there. With a 128K context window, these models are ready to tackle some serious tasks. As Nisten exclaimed after putting the 32B model through its paces, "It's really practical…I was dumping in my docs and my code base and then like actually asking questions."

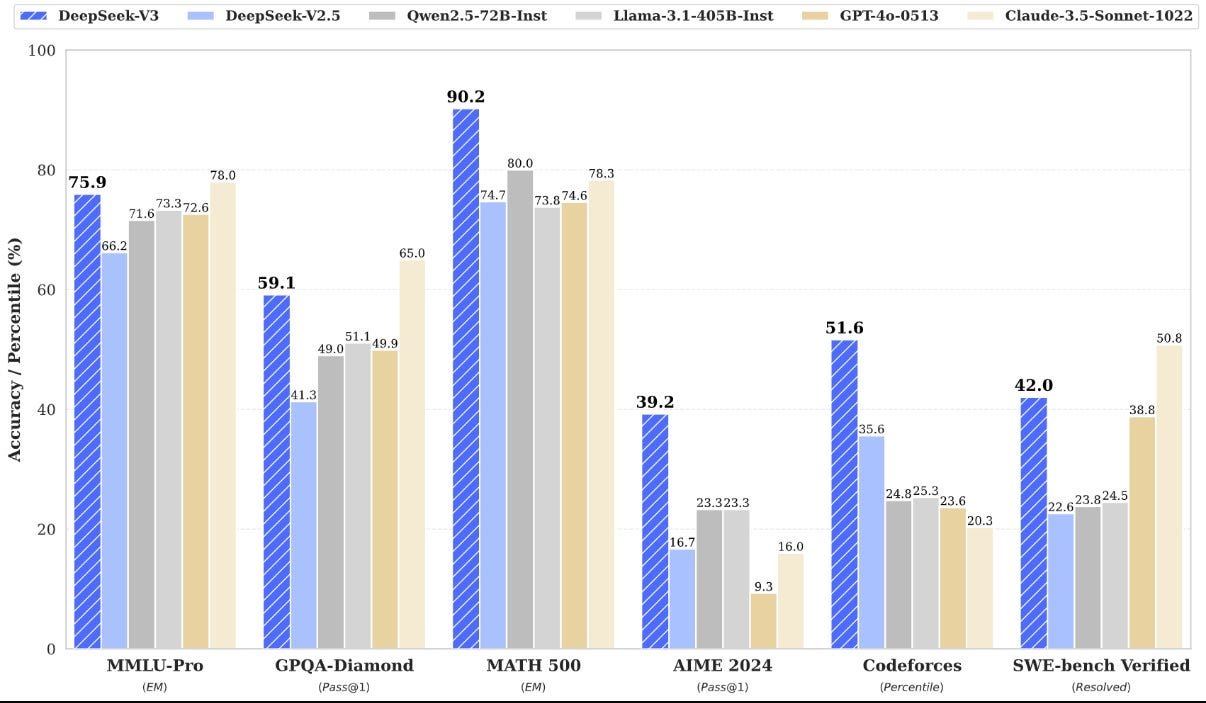

It's safe to say that Qwen 2.5 Coder is now the best open coding LLM you can use. And just in time for our chat, a new update from ZeroEval confirms that the Qwen 2.5 models are the absolute kings of OSS LLMs, beating Mistral Large, 4o-mini, Gemini Flash and other huge models with just 72B parameters 👏

Moshi: The Chatty Cathy of AI

We covered Moshi Voice back in July, when they promised to open source the whole stack, and now they finally did, including the LLM and the Mimi audio codec!

This quirky little 7.6B parameter model is a speech-to-speech marvel, capable of understanding your voice and responding in kind. It's an end-to-end model, meaning it handles the entire speech-to-speech process internally, without relying on separate speech-to-text and text-to-speech models.

While it might not be a logic genius, Moshi's real-time reactions are undeniably uncanny. Wolfram Ravenwolf described the experience: "It's uncanny when you don't even realize you finished speaking and it already starts to answer." The speed comes from the integrated architecture and efficient codecs, boasting a theoretical response time of just 160 milliseconds!

Moshi uses the (also open-sourced) Mimi neural audio codec, which compresses audio into a 12.5 Hz token representation at a bandwidth of just 1.1 kbps.

You can download it and run it on your own machine, or give it a try here. Just don't expect a masterful conversationalist hehe
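
To make those codec numbers a bit more tangible, here is a quick back-of-the-envelope sketch. The frame rate and bitrate come from the figures above; the codebook layout (8 codebooks of 2048 entries each) is an assumption that isn't stated in this recap, so treat that part as illustrative rather than authoritative.

```python
import math

# Figures quoted in the text above.
FRAME_RATE_HZ = 12.5   # codec frames (token steps) per second
BITRATE_BPS = 1100     # 1.1 kbps

# How much audio one frame covers, and how many bits each frame gets.
frame_ms = 1000 / FRAME_RATE_HZ
bits_per_frame = BITRATE_BPS / FRAME_RATE_HZ

# ASSUMPTION (not from this recap): 8 codebooks with 2048 entries each.
NUM_CODEBOOKS = 8
CODEBOOK_SIZE = 2048
bits_per_code = math.log2(CODEBOOK_SIZE)
assumed_bitrate_bps = NUM_CODEBOOKS * bits_per_code * FRAME_RATE_HZ

# The 160 ms theoretical response time, expressed in codec frames.
frames_in_latency = 160 / frame_ms

print(f"one frame covers {frame_ms} ms of audio")
print(f"bits per frame: {bits_per_frame}")
print(f"bitrate under the assumed codebook layout: {assumed_bitrate_bps} bps")
print(f"160 ms is {frames_in_latency} frames")
```

Under that assumed layout the arithmetic lands on the quoted 1.1 kbps, and the 160 ms response time works out to two codec frames, which gives a feel for why the model can jump in so quickly.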

Gradient-Informed MoE (GRIN-MoE): A Tiny Titan

Just before our live show, Microsoft dropped a paper on GRIN-MoE, a gradient-informed Mixture of Experts model. We were lucky enough to have the lead author, Liyuan Liu (aka Lucas), join us impromptu to discuss this exciting development. Despite having only 6.6B active parameters (16 x 3.8B experts), GRIN-MoE manages to achieve remarkable performance, even outperforming larger models like Phi-3 on certain benchmarks. It's a testament to the power of clever architecture and training techniques. Plus, it's open-sourced under the MIT license, making it a valuable resource for the community.
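
For anyone new to the "active parameters" idea, here is a minimal sketch of sparse expert routing in plain Python. It is not GRIN-MoE's code and it does not show the gradient-informed training trick at all. It only illustrates why a model with 16 experts can run a small fraction of them per token. The top-2 choice, the toy experts and the router scores are all assumptions made up for the example.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def top_k_route(router_logits, k=2):
    """Pick the k highest-scoring experts and renormalize their weights."""
    ranked = sorted(range(len(router_logits)),
                    key=lambda i: router_logits[i], reverse=True)
    chosen = ranked[:k]
    weights = softmax([router_logits[i] for i in chosen])
    return list(zip(chosen, weights))

def moe_forward(x, experts, router_logits, k=2):
    """Run only the selected experts and mix their outputs by router weight."""
    return sum(w * experts[i](x) for i, w in top_k_route(router_logits, k))

# Hypothetical toy setup: 16 "experts", each just scales its input.
experts = [lambda x, scale=i: scale * x for i in range(16)]

# Hypothetical router scores for one token: experts 3 and 11 score highest.
router_logits = [0.0] * 16
router_logits[3] = 2.0
router_logits[11] = 2.0

routes = top_k_route(router_logits, k=2)
output = moe_forward(1.0, experts, router_logits, k=2)
print(routes)   # two (expert_index, weight) pairs
print(output)   # weighted mix of the two selected experts' outputs
```

Only two of the sixteen expert functions are ever called for this token, which is the intuition behind a large total parameter count paired with a much smaller active one.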

NVIDIA NVLM: A Teaser for Now

NVIDIA announced NVLM 1.0, their own set of multimodal LLMs, but alas, no weights were released. We’ll have to wait and see how they stack up against the competition once they finally let us get our hands on them. Interestingly, while claiming SOTA on some vision tasks, they haven't actually compared themselves to Qwen 2 VL, which we know is really really good at vision tasks 🤔

Nous Research Unveils Forge: Inference Time Compute Powerhouse (beating o1 at AIME Eval!)

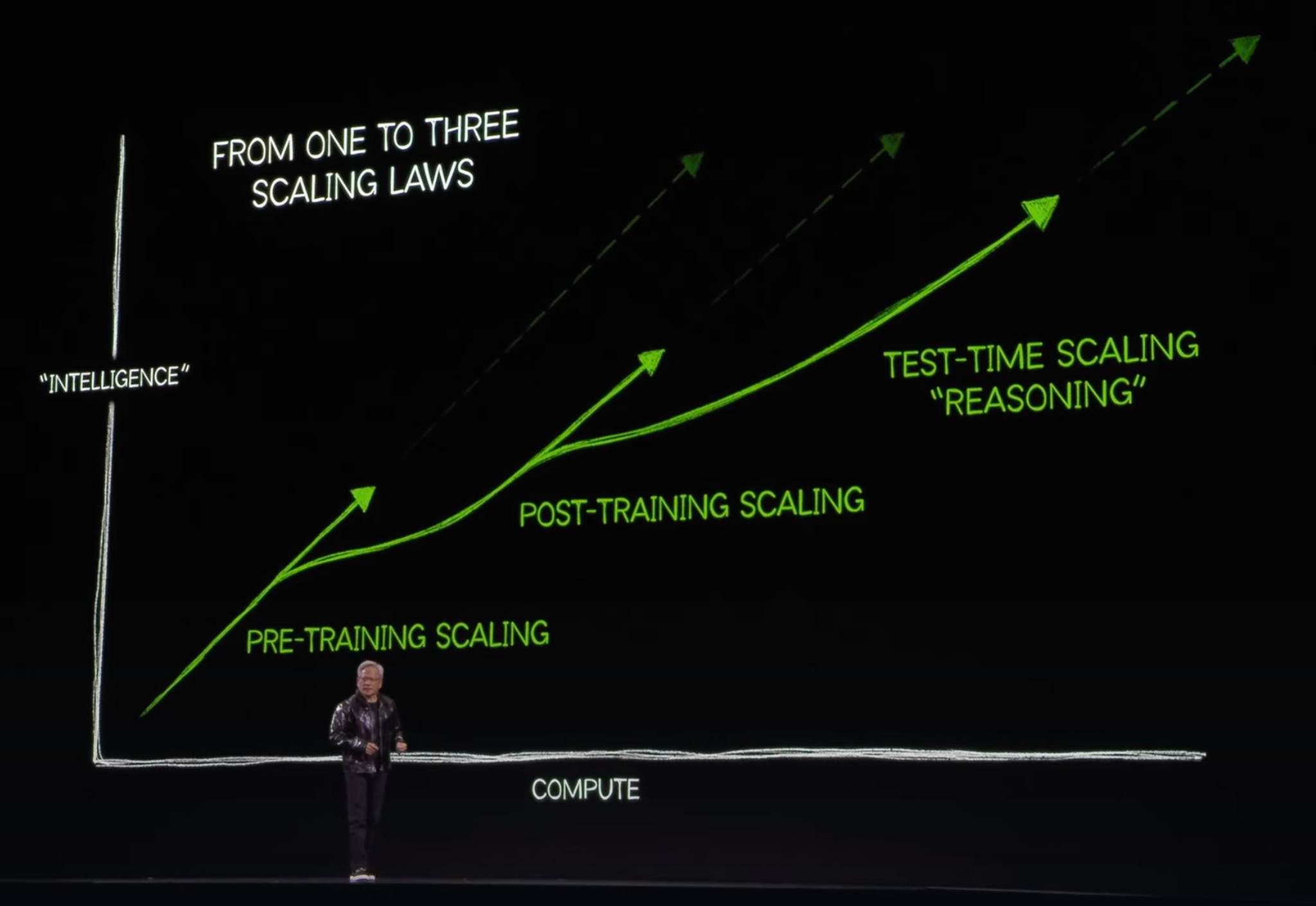

Fresh off their NousCon event, Karan and Shannon from Nous Research joined us to discuss their latest project, Forge. Described by Shannon as "Jarvis on the front end," Forge is an inference engine designed to push the limits of what’s possible with existing LLMs. Their secret weapon? Inference-time compute. By implementing sophisticated techniques like Monte Carlo Tree Search (MCTS), Forge can outperform larger models on complex reasoning tasks, beating OpenAI's o1-preview on the AIME eval, a competition math benchmark, even with smaller, locally runnable models like Hermes 70B. As Karan emphasized, “We’re actually just scoring with Hermes 3.1, which is available to everyone already...we can scale it up to outperform everything on math, just using a system like this.”
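
If MCTS is new to you, here is a tiny, self-contained sketch of the general technique on a toy problem. To be clear, this is not Forge's implementation, and nothing here reflects its internals. The "reasoning steps" are just digits, and the reward function, target sequence and all names are hypothetical stand-ins for whatever scoring a real system would use.

```python
import math
import random

class Node:
    def __init__(self, state, parent=None):
        self.state = state        # tuple of actions chosen so far
        self.parent = parent
        self.children = {}        # action -> Node
        self.visits = 0
        self.total_reward = 0.0

def ucb1(child, parent_visits, c=1.4):
    # Mean reward plus an exploration bonus for rarely visited children.
    mean = child.total_reward / child.visits
    return mean + c * math.sqrt(math.log(parent_visits) / child.visits)

def mcts(actions, depth, reward_fn, iterations, seed=0):
    rng = random.Random(seed)
    root = Node(state=())
    for _ in range(iterations):
        node = root
        # 1. Selection: descend while fully expanded and not terminal.
        while len(node.state) < depth and len(node.children) == len(actions):
            node = max(node.children.values(),
                       key=lambda ch: ucb1(ch, node.visits))
        # 2. Expansion: add one untried child unless the node is terminal.
        if len(node.state) < depth:
            untried = [a for a in actions if a not in node.children]
            action = rng.choice(untried)
            child = Node(node.state + (action,), parent=node)
            node.children[action] = child
            node = child
        # 3. Simulation: finish the sequence randomly and score it.
        rollout = node.state
        while len(rollout) < depth:
            rollout += (rng.choice(actions),)
        reward = reward_fn(rollout)
        # 4. Backpropagation: update statistics back up to the root.
        while node is not None:
            node.visits += 1
            node.total_reward += reward
            node = node.parent
    # Read out the most-visited path from the root.
    path, node = [], root
    while node.children:
        action, node = max(node.children.items(), key=lambda kv: kv[1].visits)
        path.append(action)
    return tuple(path), root

# Hypothetical toy task: recover a hidden 3-step sequence.
TARGET = (2, 0, 1)

def reward_fn(seq):
    # Fraction of positions that match the hidden target.
    return sum(a == b for a, b in zip(seq, TARGET)) / len(TARGET)

best_path, root = mcts(actions=[0, 1, 2], depth=3,
                       reward_fn=reward_fn, iterations=2000)
print(best_path, root.visits)
```

The point of the sketch is the trade: instead of a bigger model, you spend more compute at inference time exploring and scoring candidate paths, then commit to the branch the search visited most.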

Forge isn't just about raw performance, though. It's built with usability and transparency in mind. Unlike OpenAI's o1, which obfuscates its chain-of-thought reasoning, Forge provides users with a clear visual representation of the model's thought process. "You will still have access in the sidebar to the full chain of thought," Shannon explained, adding, “There’s a little visualizer and it will show you the trajectory through the tree… you’ll be able to see exactly what the model was doing and why the node was selected.” Forge also boasts built-in memory, a graph database, and even code interpreter capabilities,