Accelerating Large Language Model Decoding with Speculative Sampling

Description

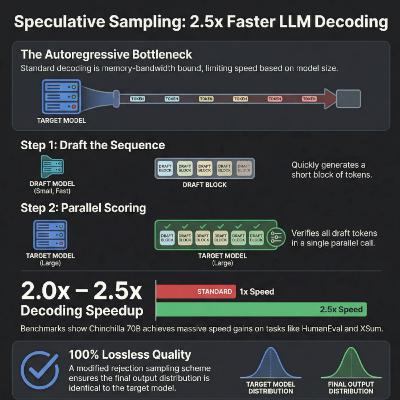

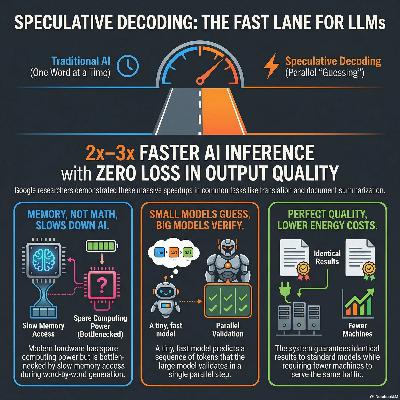

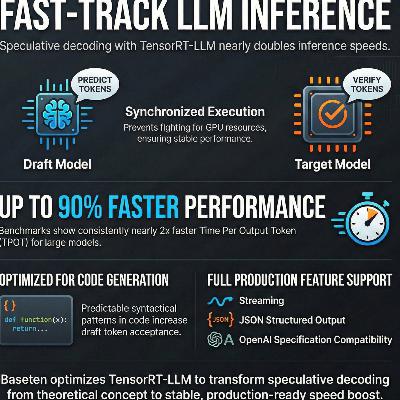

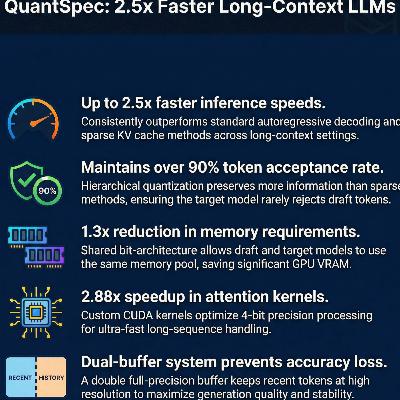

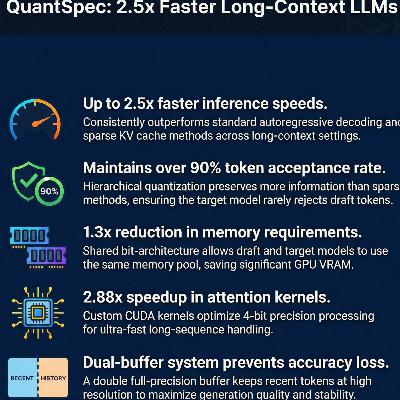

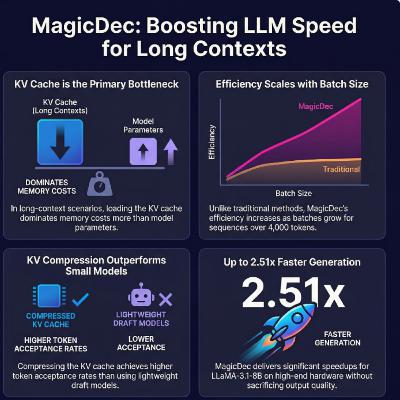

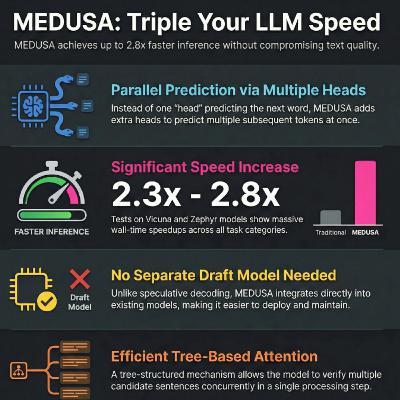

The DeepMind paper "Accelerating Large Language Model Decoding with Speculative Sampling" (February 3, 2023) introduces speculative sampling, an algorithm that speeds up Large Language Model (LLM) decoding without changing the distribution of the generated text. A smaller, faster draft model proposes a short continuation of several tokens, which the larger, more powerful target model then scores in parallel. A modified rejection sampling scheme decides which draft tokens to keep, so that the output follows exactly the distribution of the target model. Benchmarked with Chinchilla, a 70 billion parameter model, the technique achieved a 2 to 2.5 times decoding speedup in a distributed setup. The method works because standard autoregressive generation is limited by memory bandwidth, so scoring several draft tokens in one parallel pass takes about as long as sampling a single token from the target model. It therefore offers a practical way to reduce latency in large-scale LLM applications without sacrificing sample quality or modifying the target model.
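The accept-or-resample rule at the heart of the method can be sketched in a few lines of plain Python. This is a minimal illustration, not the authors' implementation: the function names (`sample`, `residual`, `speculative_step`) are hypothetical, the "models" are just lists of token probabilities supplied by the caller, and the parallel scoring by the target model is assumed to have already happened. Each draft token `x` is accepted with probability `min(1, q[x] / p[x])`, where `p` is the draft distribution and `q` the target distribution; on the first rejection a replacement is drawn from the normalized positive part of `q - p`, and the step ends.

```python
import random


def sample(probs, rng):
    """Draw a token index from a discrete distribution (list of probabilities)."""
    return rng.choices(range(len(probs)), weights=probs, k=1)[0]


def residual(q, p):
    """Normalized max(0, q - p): the distribution used after a rejection."""
    diff = [max(0.0, qi - pi) for qi, pi in zip(q, p)]
    total = sum(diff)  # strictly positive whenever q differs from p
    return [d / total for d in diff]


def speculative_step(draft_tokens, draft_probs, target_probs, rng):
    """One speculative sampling step (illustrative sketch).

    draft_tokens : K tokens proposed by the draft model
    draft_probs  : K draft distributions, one per proposed token
    target_probs : K + 1 target distributions for the same positions,
                   assumed to come from one parallel pass of the target model
    Returns between 1 and K + 1 tokens.
    """
    out = []
    for t, x in enumerate(draft_tokens):
        p, q = draft_probs[t], target_probs[t]
        # Accept the draft token with probability min(1, q[x] / p[x]).
        if rng.random() < min(1.0, q[x] / p[x]):
            out.append(x)
        else:
            # First rejection: resample from the residual and stop.
            out.append(sample(residual(q, p), rng))
            return out
    # Every draft token was accepted: take one extra token from the target.
    out.append(sample(target_probs[len(draft_tokens)], rng))
    return out


if __name__ == "__main__":
    rng = random.Random(0)
    p = [0.5, 0.5]  # toy draft distribution over a two-token vocabulary
    q = [0.8, 0.2]  # toy target distribution
    drafts = [sample(p, rng) for _ in range(3)]
    print(speculative_step(drafts, [p] * 3, [q] * 4, rng))
```

In this toy setup each emitted token is distributed according to `q`, even though the proposals come from `p`. That is the sense in which the output distribution is unchanged, while several tokens can be committed per target-model pass whenever the draft and target models agree.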

Source:

February 3, 2023

Accelerating Large Language Model Decoding with Speculative Sampling

DeepMind

Charlie Chen, Sebastian Borgeaud, Geoffrey Irving, Jean-Baptiste Lespiau, Laurent Sifre, John Jumper

https://arxiv.org/pdf/2302.01318