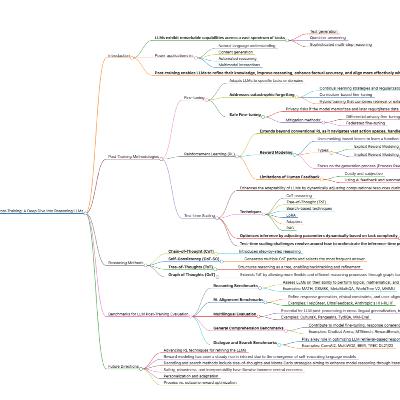

Transformers Without Normalization: Dynamic Tanh Achieves Strong Performance

Update: 2025-03-24

Description

This podcast episode discusses the paper "Transformers without Normalization", which proposes Dynamic Tanh (DyT) as a replacement for normalization layers in Transformers. DyT is a simple element-wise operation, tanh(αx), where α is a learnable scalar; it aims to reproduce the effect of Layer Normalization without computing activation statistics such as the mean and variance. The episode asks whether DyT can match or exceed the performance of normalized models while improving efficiency, and whether normalization is really necessary in modern neural networks.
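
To make the contrast concrete, here is a minimal sketch in plain Python, not the paper's implementation. It assumes a single activation vector, an illustrative fixed value for α (in practice it would be learned), and no additional scale or shift parameters. The function names `layer_norm` and `dyt` are hypothetical and chosen only for this example.

```python
import math


def layer_norm(x, eps=1e-5):
    """Layer Normalization over one vector: requires the mean and variance of x."""
    mean = sum(x) / len(x)
    var = sum((v - mean) ** 2 for v in x) / len(x)
    return [(v - mean) / math.sqrt(var + eps) for v in x]


def dyt(x, alpha=0.5):
    """Dynamic Tanh as described above: tanh(alpha * x), applied element-wise.

    alpha stands in for the learnable scalar; 0.5 is only an illustrative value.
    No statistics of x are computed.
    """
    return [math.tanh(alpha * v) for v in x]


# Hypothetical activations with two large-magnitude outliers.
activations = [-4.0, -0.5, 0.0, 0.5, 4.0]
ln_out = layer_norm(activations)
dyt_out = dyt(activations)
```

In this sketch, `layer_norm` has to make a pass over the whole vector to get its statistics, while `dyt` treats each element independently and squashes extreme values into the open interval (-1, 1).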
