📆 ThursdAI - August 1st - Meta SAM 2 for video, Gemini 1.5 is king now?, GPT-4o Voice is here (for some), new Stability, Apple Intelligence also here & more AI news

Description

Starting Monday, Apple released the iOS 18.1 beta with Apple Intelligence, then Meta dropped SAM-2 (Segment Anything Model 2), and then Google first open sourced Gemma 2 2B and now (just literally 2 hours ago, during the live show) released Gemini 1.5 0801 experimental, which takes #1 on LMsys arena across multiple categories. To top it all off, we also got a new SOTA image diffusion model called FLUX.1 from ex-Stability folks and their new Black Forest Labs.

This week on the show, we had Joseph Nelson & Piotr Skalski from Roboflow talk in depth about Segment Anything, and as the absolute experts on this topic (Skalski is our returning vision expert), they gave us an incredible deep dive into the importance of dedicated vision models (not VLMs).

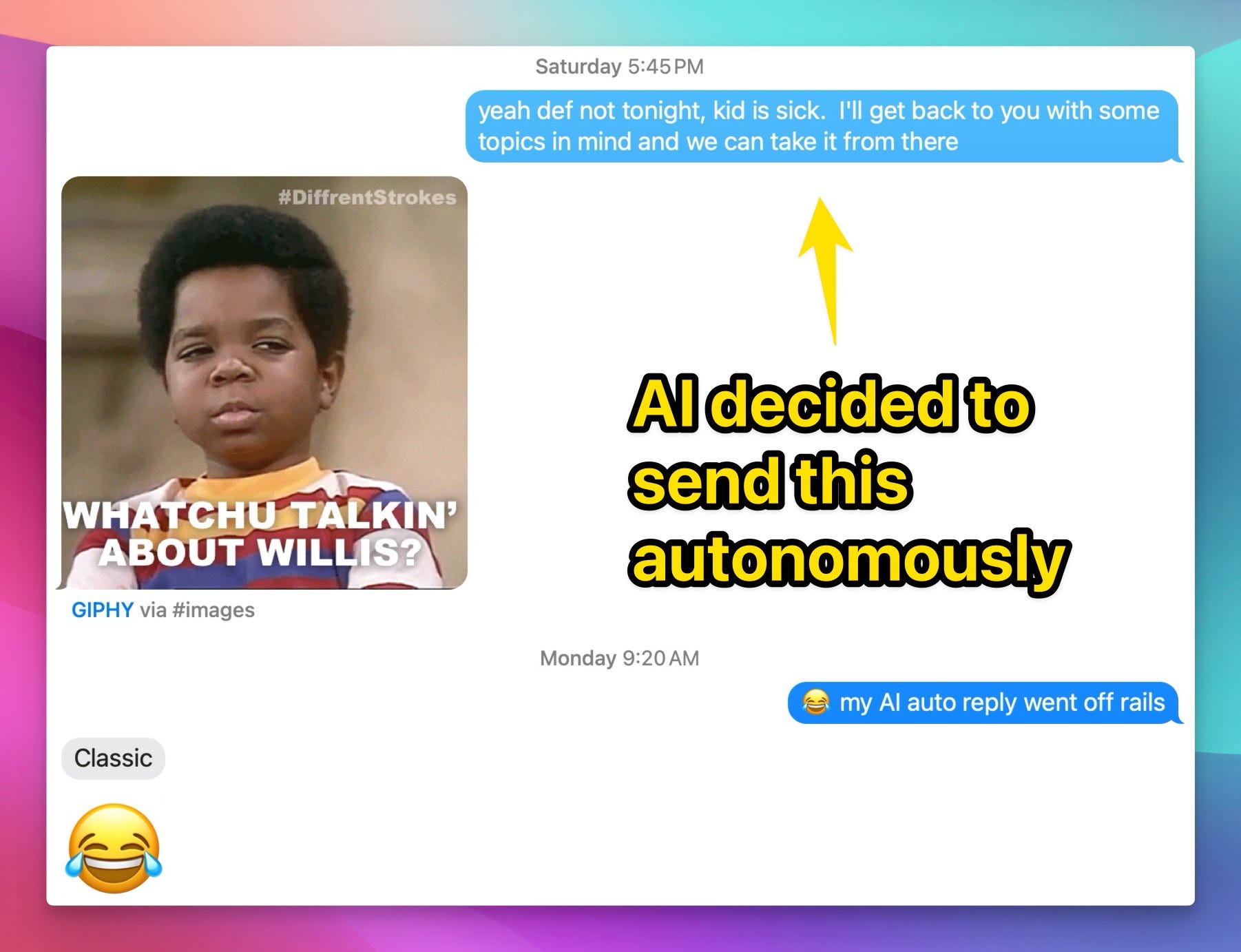

We also had Lukas Atkins & Fernando Neto from Arcee AI talk to us about their new DistillKit and explain model distillation in detail. Finally, we had Cristiano Giardina, who is one of the lucky few that got access to OpenAI's advanced voice mode, and his new friend GPT-4o came on the show as well!

Honestly, how can one keep up with all this? By reading ThursdAI of course, that's how! But ⚠️ buckle up, this is going to be a BIG one (I think over 4.5K words, which makes this the longest newsletter I've penned. I'm sorry, maybe read this one on 2x? 😂)

[ Chapters ]

00:00 Introduction to the Hosts and Their Work

01:22 Special Guests Introduction: Piotr Skalski and Joseph Nelson

04:12 Segment Anything 2: Overview and Capabilities

15:33 Deep Dive: Applications and Technical Details of SAM2

19:47 Combining SAM2 with Other Models

36:16 Open Source AI: Importance and Future Directions

39:59 Introduction to Distillation and DistillKit

41:19 Introduction to DistillKit and Synthetic Data

41:41 Distillation Techniques and Benefits

44:10 Introducing Fernando and Distillation Basics

44:49 Deep Dive into Distillation Process

50:37 Open Source Contributions and Community Involvement

52:04 ThursdAI Show Introduction and This Week's Buzz

53:12 Weights & Biases New Course and San Francisco Meetup

55:17 OpenAI's Advanced Voice Mode and Cristiano's Experience

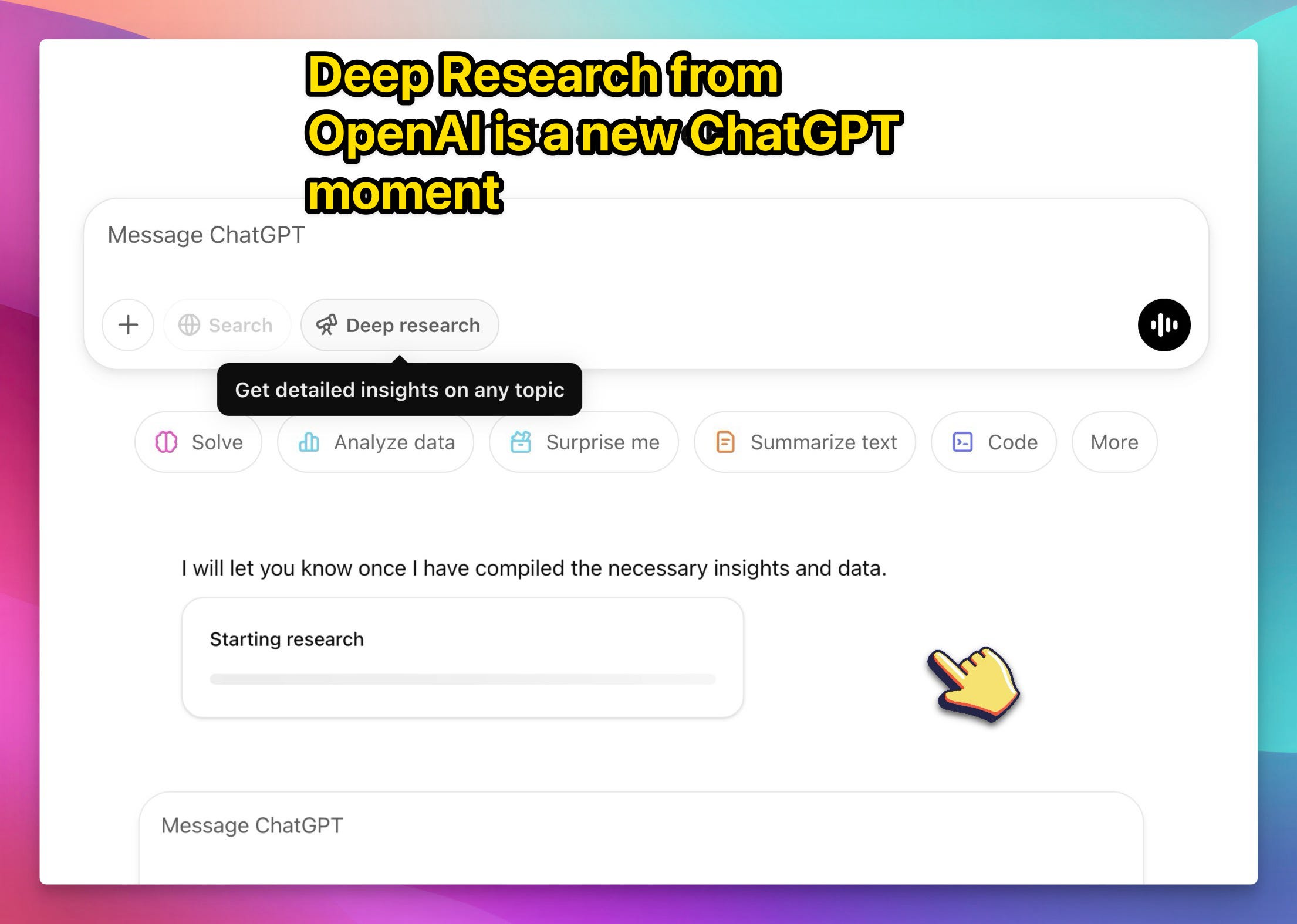

01:08:04 SearchGPT Release and Comparison with Perplexity

01:11:37 Apple Intelligence Release and On-Device AI Capabilities

01:22:30 Apple Intelligence and Local AI

01:22:44 Breaking News: Black Forest Labs Emerges

01:24:00 Exploring the New Flux Models

01:25:54 Open Source Diffusion Models

01:30:50 LLM Course and Free Resources

01:32:26 FastHTML and Python Development

01:33:26 Friend.com: Always-On Listening Device

01:41:16 Google Gemini 1.5 Pro Takes the Lead

01:48:45 GitHub Models: A New Era

01:50:01 Concluding Thoughts and Farewell

Show Notes & Links

* Open Source LLMs

* Meta gives SAM-2 - segment anything with one shot + video capability! (X, Blog, DEMO)

* Google open sources Gemma 2 2.6B (Blog, HF)

* MTEB Arena launching on HF - Embeddings head to head (HF)

* Arcee AI announces DistillKit - (X, Blog, Github)

* AI Art & Diffusion & 3D

* Black Forest Labs - FLUX new SOTA diffusion models (X, Blog, Try It)

* Midjourney 6.1 update - greater realism + potential Grok integration (X)

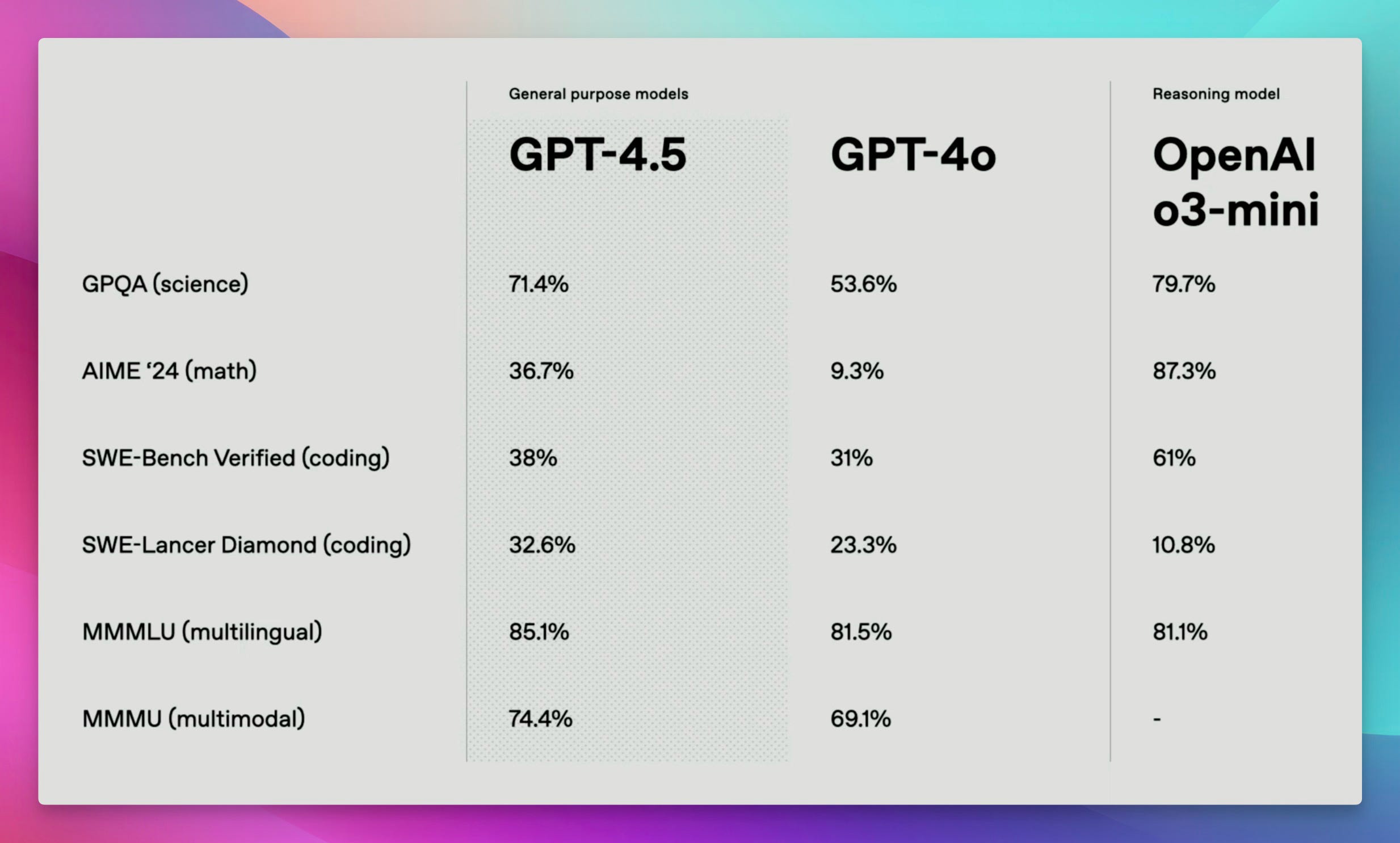

* Big CO LLMs + APIs

* Google updates Gemini 1.5 Pro with 0801 release and is #1 on LMsys arena (X)

* OpenAI started alpha GPT-4o voice mode (examples)

* OpenAI releases SearchGPT (Blog, Comparison w/ PPXL)

* Apple releases beta of iOS 18.1 with Apple Intelligence (X, hands on, Intents)

* Apple released a technical paper on Apple Intelligence

* This Week's Buzz

* AI Salons in SF + New Weave course for WandB featuring yours truly!

* Vision & Video

* Runway ML adds Gen-3 image to video and makes it 7x faster (X)

* Tools & Hardware

* Avi announces friend.com

* Jeremy Howard releases FastHTML (Site, Video)

* Applied LLM course from Hamel dropped all videos

Open Source

It feels like everyone and their grandma is open sourcing incredible AI this week! Seriously, get ready for segment-anything-you-want + real-time-video capability PLUS small AND powerful language models.

Meta Gives Us SAM-2: Segment ANYTHING Model in Images & Videos... With One Click!

Hold on to your hats, folks! Remember Segment Anything, Meta's already-awesome image segmentation model? They've just ONE-UPPED themselves. Say hello to SAM-2 - it's real-time, promptable (you can TELL it what to segment), and handles VIDEOS like a champ. As I said on the show: "I was completely blown away by segment anything 2".

But wait, what IS segmentation? Basically, pixel-perfect detection - outlining objects with incredible accuracy. My guests, the awesome Piotr Skalski and Joseph Nelson (computer vision pros from Roboflow), broke it down historically, from SAM 1 to SAM 2, and highlighted just how mind-blowing this upgrade is.
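To make "pixel-perfect" a bit more concrete, here's a tiny sketch of the difference between a segmentation mask and a plain bounding box. This is not SAM-2 code: the toy mask and the `mask_to_box` helper are made up for illustration, and the only library assumed is NumPy.

```python
import numpy as np

# Toy 6x6 mask: True where the (hypothetical) object is, an L-shaped blob.
mask = np.zeros((6, 6), dtype=bool)
mask[1:5, 1] = True   # vertical stroke of the "L"
mask[4, 1:4] = True   # horizontal stroke of the "L"

def mask_to_box(mask):
    """Return the tight box (x_min, y_min, x_max, y_max) around a mask."""
    ys, xs = np.nonzero(mask)
    return int(xs.min()), int(ys.min()), int(xs.max()), int(ys.max())

box = mask_to_box(mask)
x0, y0, x1, y1 = box

# The box covers every pixel in the rectangle; the mask covers only the object.
box_area = (x1 - x0 + 1) * (y1 - y0 + 1)
mask_area = int(mask.sum())

print(f"box={box}, box area={box_area}, mask area={mask_area}")
```

In this toy case the box claims twice as many pixels as the object actually occupies, and that gap is what a per-pixel outline removes.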

"So now, Segment Anything 2 comes out. Of course, it has all the previous capabilities of Segment Anything ... But the segment anything tool is awesome because it also can segment objects on the video". - Piotr Skalski

Think about Terminator vision from the "give me your clothes" bar scene: you see a scene, instantly "understand" every object separately, AND track it as it moves. SAM-2 gives us that, allowing you to click on a single frame, and BAM - perfect outlines that flow through the entire video! I played with their playground, and you NEED to try it - you can blur backgrounds, highlight specific objects... the possibilities are insane. Playground Link

In this video, Piotr annotated only the first few frames of the top video, and SAM understood the bottom two, which were shot from 2 different angles!
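If you're wondering what "highlight specific objects" looks like once you have a mask for a frame, here's a minimal sketch. It assumes you already got a boolean per-frame mask from some segmentation model (SAM-2 or otherwise); the `highlight_object` helper and the toy frame are hypothetical, and this is plain NumPy, not Meta's API.

```python
import numpy as np

def highlight_object(frame, mask, dim=0.5):
    """Keep masked pixels untouched and darken everything else.

    frame: H x W x 3 uint8 image; mask: H x W boolean array (True = object).
    """
    out = frame.astype(np.float32)
    out[~mask] *= dim          # darken only the background pixels
    return out.astype(np.uint8)

# Toy 4x4 gray frame with a 2x2 "object" in the middle.
frame = np.full((4, 4, 3), 200, dtype=np.uint8)
mask = np.zeros((4, 4), dtype=bool)
mask[1:3, 1:3] = True

result = highlight_object(frame, mask)
print(result[0, 0], result[1, 1])  # a background pixel, then an object pixel
```

For a video you would run the same function on every frame with that frame's mask. Swapping the dimming step for a blur would give you the background-blur effect.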

Okay, cool tech, BUT why is it actually USEFUL? Well, Joseph gave us incredible examples - from easier sports analysis and visual effects (goodbye manual rotoscoping) to advances in microscopic research and even galactic exploration! Basically, any task requiring precise object identification gets boosted to a whole new level.

"SAM does an incredible job at creating pixel perfect outlines of everything inside visual scenes. And with SAM2, it does it across videos super well, too ... That capability is still being developed for a lot of AI Models and capabilities. So having very rich ability to understand what a thing is, where that thing is, how big that thing is, allows models to understand spaces and reason about them" - Joseph Nelson

AND if you combine this power with other models (like Piotr is already doing!), you get zero-shot segmentation - literally type what you want to find, and the model will pinpoint it in your image/video. It's early days, but get ready for robotics applications, real-time video analysis, and who knows wh