Teaching LLMs to Plan: Logical CoT Instruction Tuning for Symbolic Planning

Description

Large Language Models (LLMs) like GPT and LLaMA have shown remarkable general capabilities, yet they consistently hit a wall when faced with structured symbolic planning. The struggle is especially apparent with formal planning representations such as the Planning Domain Definition Language (PDDL), and reliable planning over such representations is a fundamental requirement for real-world sequential decision-making systems.

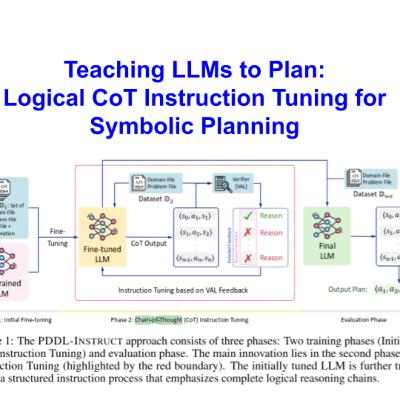

In this episode, we explore PDDL-INSTRUCT, a novel instruction tuning framework designed to significantly enhance LLMs' symbolic planning capabilities. This approach explicitly bridges the gap between general LLM reasoning and the logical precision needed for automated planning by using logical Chain-of-Thought (CoT) reasoning.

Key topics covered include:

- The PDDL-INSTRUCT Methodology: Learn how the framework systematically builds verification skills by decomposing the planning process into explicit reasoning chains about precondition satisfaction, effect application, and invariant preservation. This structure enables LLMs to self-correct their planning processes through structured reflection.

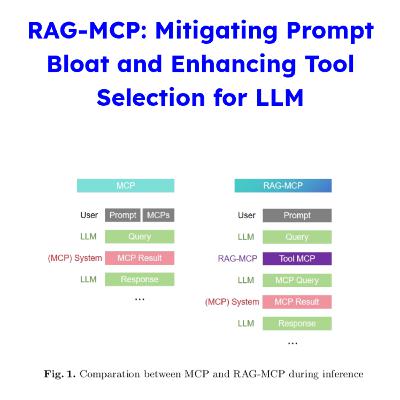

- The Power of External Verification: We discuss the two-phase training process, in which an initially tuned LLM undergoes CoT Instruction Tuning: it generates step-by-step reasoning chains, and an external plan validator, VAL, checks them. This provides ground-truth feedback, a critical component since LLMs currently lack sufficient self-correction capabilities in reasoning.

- Detailed Feedback vs. Binary Feedback (The Crucial Difference): Empirical evidence shows that detailed feedback, which provides specific reasoning about failed preconditions or incorrect effects, consistently leads to more robust planning capabilities than simple binary (valid/invalid) feedback. The advantage of detailed feedback is particularly pronounced in complex domains like Mystery Blocksworld.

- Groundbreaking Results: PDDL-INSTRUCT significantly outperforms baseline models, achieving planning accuracy of up to 94% on standard benchmarks. For Llama-3, this represents an absolute improvement of 66 percentage points over baseline models.

- Future Directions and Broader Impacts: We consider how this work contributes to developing more trustworthy and interpretable AI systems, and the potential for applying this logical reasoning framework to other long-horizon sequential decision-making tasks, such as theorem proving or complex puzzle solving. We also touch upon the next steps, including expanding PDDL coverage and targeting optimal planning.
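
For listeners who want something concrete, the step-level checks described above (precondition satisfaction and effect application) and the difference between binary and detailed feedback can be illustrated with a small sketch. This is not the PDDL-INSTRUCT code and not VAL: the `Action` class, `check_step`, `validate_plan`, and the two-block Blocksworld facts are hypothetical names made up for illustration, and invariant checking is left out.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Action:
    """A ground STRIPS-style action (hypothetical stand-in for a PDDL action)."""
    name: str
    preconditions: frozenset
    add_effects: frozenset
    del_effects: frozenset


def check_step(state, action):
    """Check one plan step.

    Returns (next_state, message). next_state is None when a
    precondition is not satisfied in the current state.
    """
    missing = action.preconditions - state
    if missing:
        return None, f"{action.name}: unsatisfied preconditions {sorted(missing)}"
    # Effect application: remove deleted facts, then add new ones.
    next_state = (state - action.del_effects) | action.add_effects
    return next_state, f"{action.name}: ok"


def validate_plan(initial_state, plan, goal):
    """Return (is_valid, detail).

    is_valid alone plays the role of binary feedback; detail plays the
    role of detailed feedback naming the failing step and the reason.
    """
    state = frozenset(initial_state)
    for i, action in enumerate(plan):
        next_state, message = check_step(state, action)
        if next_state is None:
            return False, f"step {i}: {message}"
        state = next_state
    missing_goal = frozenset(goal) - state
    if missing_goal:
        return False, f"goal not reached, missing {sorted(missing_goal)}"
    return True, "plan valid"


# Toy two-block Blocksworld instance (illustrative facts only).
initial = {"ontable a", "ontable b", "clear a", "clear b", "handempty"}
goal = {"on a b"}

pickup_a = Action(
    name="pickup(a)",
    preconditions=frozenset({"ontable a", "clear a", "handempty"}),
    add_effects=frozenset({"holding a"}),
    del_effects=frozenset({"ontable a", "clear a", "handempty"}),
)
stack_a_b = Action(
    name="stack(a,b)",
    preconditions=frozenset({"holding a", "clear b"}),
    add_effects=frozenset({"on a b", "clear a", "handempty"}),
    del_effects=frozenset({"holding a", "clear b"}),
)

good = validate_plan(initial, [pickup_a, stack_a_b], goal)
bad = validate_plan(initial, [stack_a_b], goal)
print(good)
print(bad)
```

In this toy setup, the second plan fails at its first step because the block is never picked up. A binary signal would report only that the plan is invalid, while the detail string names the step and the unmet precondition, which is the kind of extra information the episode credits for the stronger results.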